07cp18 Neural Networks and Applications 3 0 0 100

Uploaded by tkec_csedept

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

07cp18 Neural Networks and Applications 3 0 0 100

Uploaded by

tkec_csedeptCopyright:

Available Formats

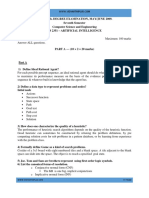

07CP18 NEURAL NETWORKS AND APPLICATIONS 3 0 0 100

UNIT I 9
BASIC LEARNING ALGORITHMS: Biological Neuron - Artificial Neural Model - Types of Activation Functions - Architecture: Feedforward and Feedback - Learning Process: Error Correction Learning - Memory Based Learning - Hebbian Learning - Competitive Learning - Boltzmann Learning - Supervised and Unsupervised Learning - Learning Tasks: Pattern Space - Weight Space - Pattern Association - Pattern Recognition - Function Approximation - Control - Filtering - Beamforming - Memory - Adaptation - Statistical Learning Theory - Single Layer Perceptron - Perceptron Learning Algorithm - Perceptron Convergence Theorem - Least Mean Square Learning Algorithm - Multilayer Perceptron - Back Propagation Algorithm - XOR Problem - Limitations of Back Propagation Algorithm.

UNIT II 9
RADIAL-BASIS FUNCTION NETWORKS AND SUPPORT VECTOR MACHINES:
RADIAL BASIS FUNCTION NETWORKS: Exact Interpolator - Regularization Theory - Generalized Radial Basis Function Networks - Learning in Radial Basis Function Networks - Applications: XOR Problem - Image Classification.
SUPPORT VECTOR MACHINES: Optimal Hyperplane for Linearly Separable Patterns and Nonseparable Patterns - Support Vector Machine for Pattern Recognition - XOR Problem - ε-insensitive Loss Function - Support Vector Machines for Nonlinear Regression.

UNIT III 9
ATTRACTOR NEURAL NETWORKS: Associative Learning - Attractor Neural Network Associative Memory - Linear Associative Memory - Hopfield Network - Content Addressable Memory - Strange Attractors and Chaos - Error Performance of Hopfield Networks - Applications of Hopfield Networks - Simulated Annealing - Boltzmann Machine - Bidirectional Associative Memory - BAM Stability Analysis - Error Correction in BAMs - Memory Annihilation of Structured Maps in BAMs - Continuous BAMs - Adaptive BAMs - Applications.

UNIT IV 9
ADAPTIVE RESONANCE THEORY: Noise-Saturation Dilemma - Solving Noise-Saturation Dilemma - Recurrent On-center Off-surround Networks - Building Blocks of Adaptive Resonance - Substrate of Resonance - Structural Details of Resonance Model - Adaptive Resonance Theory - Applications.

UNIT V 9
SELF ORGANISING MAPS: Self-organizing Map - Maximal Eigenvector Filtering - Sanger's Rule - Generalized Learning Law - Competitive Learning - Vector Quantization - Mexican Hat Networks - Self-organizing Feature Maps - Applications.

Total: 45

REFERENCES:
1. Satish Kumar, Neural Networks: A Classroom Approach, Tata McGraw-Hill Publishing Company Limited, New Delhi, 2004.
2. Simon Haykin, Neural Networks: A Comprehensive Foundation, 2ed., Addison Wesley Longman (Singapore) Private Limited, Delhi, 2001.
3. Martin T. Hagan, Howard B. Demuth, and Mark Beale, Neural Network Design, Thomson Learning, New Delhi, 2003.
4. James A. Freeman and David M. Skapura, Neural Networks: Algorithms, Applications, and Programming Techniques, Pearson Education (Singapore) Private Limited, Delhi, 2003.
5. S. Rajasekaran, G.A. Vijayalakshmi Pai, Neural Networks, Fuzzy Logic and Genetic
