International Journal of Computational Engineering Research (ijceronline.com) Vol. 2 Issue. 8

Image Compression: An Artificial Neural Network Approach

Anjana B (1), Mrs Shreeja R (2)

(1) Department of Computer Science and Engineering, Calicut University, Kuttippuram. Email: babu.anjana@gmail.com
(2) Department of Computer Science and Engineering, Calicut University, Kuttippuram

Abstract

Image compression has become one of the most active recent trends worldwide. Image compression is essential where images need to be stored, transmitted or viewed quickly and efficiently. The artificial neural network is a recent tool in image compression: it processes the data in parallel, hence requires less time, and is regarded as superior to other techniques. The reasons that encourage researchers to use artificial neural networks for image compression are adaptive learning, self-organization, noise suppression, fault tolerance and optimized approximations. A survey of the different methods used for compression has been done. From this survey, the most recently used network is the multilayer feed forward network, owing to its efficiency. The choice of a suitable learning algorithm is application dependent. A new approach that modifies the training algorithm to improve the compression is proposed here. Protection of image contents is as important as compression in order to maintain privacy. If any malicious modification occurs either in storage or in the transmission channel, such modifications should be identified. So authentication and protection are incorporated into the proposed system to enhance security.

Keywords: Jacobian, Levenberg-Marquardt, Multilayer perceptron, Neural network, Radial basis function.

1. Introduction

Image compression has become one of the most active recent trends worldwide. Some common advantages of image compression over the internet are reduced web page upload and download times and lower storage and bandwidth requirements. Compressed images make it possible to view more images in a shorter period of time. Image compression is essential where images need to be stored, transmitted or viewed quickly and efficiently. Image compression is the representation of an image in digitized form with as few bits as possible while maintaining an acceptable level of image quality. A high quality image may require 10 to 100 million bits for representation. The large data files associated with images thus drive the need for extremely high compression ratios to make storage practical. Compression exploits the following facts. Imagery data has more redundancy than is generally found in other types of data. The human eye is very tolerant of approximation error in an image. This tolerance can be exploited to produce increased compression at the expense of image quality.

Artificial neural networks are simplified models of the biological neuron system. A neural network is a highly interconnected network with a large number of processing elements called neurons, in an architecture inspired by the brain. Artificial neural networks are massively parallel adaptive networks intended to abstract and model some of the functionality of the human nervous system, in an attempt to partially capture some of its computational strengths. A neural network can be viewed as comprising eight components: neurons, activation state vector, signal function, pattern of connectivity, activity aggregation rule, activation rule, learning rule and environment. Neural networks are considered possible solutions for problems and applications where high computation rates are required. The back propagation neural network (BPNN) has the simplest architecture of the ANNs that have been developed for image compression, but its drawback is very slow convergence.

Image processing is an active area that improves day by day and has become an integral part of our lives. It is the analysis, manipulation, storage, and display of graphical images. Image processing is a module primarily used to enhance the quality and appearance of black and white images. It enhances the quality of a scanned or faxed document by performing operations that remove imperfections. Image processing operations can be roughly divided into three major categories: image enhancement, image restoration and image compression. Image compression techniques aim to remove the redundancy present in the data in a way that makes image reconstruction possible. Image compression continues to be an important subject in many areas such as communication, data storage and computation.

The report begins with an introduction to image compression, followed by the need for compression. The next section describes some of the underlying technologies for performing image compression, followed by observation and analysis. The last section gives the future scope and conclusion.

Issn 2250-3005(online) December| 2012 Page 53


2. Related works

2.1 Back Propagation Neural Network [1]
The neural network is designed with three layers: one input layer, one output layer and one hidden layer. The input layer and output layer are fully connected to the hidden layer. Compression is achieved by choosing the number of neurons at the hidden layer to be less than the number of neurons at both the input and the output layers. The network is trained in such a way that the coupling weights scale the input vector of N dimensions into a narrow channel of K dimensions, with K less than N, at the hidden layer, and produce the optimum output value, which minimizes the quadratic error between input and output. The basic neural network used for compression is shown in Fig 2.1.

Fig 2.1:- Back Propagation Neural Network

2.2 Hierarchical and adaptive back-propagation neural network [2]
The basic back-propagation network is further extended to construct a hierarchical neural network by adding two more hidden layers to the existing network. All three hidden layers are fully connected. A nested training algorithm is proposed to reduce the overall neural network training time. The neuron weights are kept the same throughout the image compression process. The hierarchical neural network for compression is shown in Fig 2.2.

Adaptive schemes are based on the principle that different neural networks are used to compress image blocks with different degrees of complexity. The basic idea is to classify the input image blocks into a few subsets with different features according to their complexity measurement. A fine-tuned neural network then compresses each subset. Prior to training, all image blocks are classified into four classes according to their activity values, which are identified as very low, low, high and very high activity. The resulting network has high complexity.

Fig 2.2:- Hierarchical Neural Network
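
The block classification step of the adaptive scheme can be illustrated with a minimal sketch. The source does not say how activity is measured or where the class boundaries lie, so the variance-based `block_activity` measure and the `THRESHOLDS` values below are assumptions made only for illustration.

```python
# Hypothetical activity measure and thresholds; neither comes from the paper.
CLASSES = ("very low", "low", "high", "very high")
THRESHOLDS = (25.0, 100.0, 400.0)  # assumed variance limits for 8-bit pixels


def block_activity(block):
    """Variance of the pixel values in a rasterized block."""
    mean = sum(block) / len(block)
    return sum((p - mean) ** 2 for p in block) / len(block)


def classify_block(block, thresholds=THRESHOLDS):
    """Return one of the four activity classes for a rasterized block."""
    activity = block_activity(block)
    for label, limit in zip(CLASSES, thresholds):
        if activity < limit:
            return label
    return CLASSES[-1]


flat_block = [100] * 16        # uniform 4x4 block, zero variance
busy_block = [0, 255] * 8      # alternating black/white 4x4 block
flat_class = classify_block(flat_block)
busy_class = classify_block(busy_block)
```

Each class would then be routed to its own fine-tuned network; that routing is omitted here.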


2.3 Multilayer Feed Forward Artificial Neural Network [3], [4]
The network is designed such that N is greater than Y, where N is the number of input layer/output layer neurons and Y is the number of hidden layer neurons. Training proceeds as follows: divide the training image into blocks; scale each block, apply it to the input layer and obtain the output of the output layer; adjust the weights to minimize the difference between the output and the desired output; repeat until the error is small enough. The output of the hidden layer is quantized and entropy coded to represent the compressed image.

Two categories of optimization algorithms are considered, i.e., derivative-based and derivative-free [5]. Derivative-based methods include the gradient descent, conjugate-gradient, Quasi-Newton (QN) and Levenberg-Marquardt (LM) methods. Gradient descent indicates the direction to move. The conjugate-gradient method reduces oscillatory behavior and adjusts the weights according to the previously successful path directions, as it uses a direction vector which is a linear combination of past direction vectors and the current negative gradient vector. LM and QN algorithm-based back propagation neural networks are equally efficient. Among derivative-free methods, two of the popular approaches are the Genetic Algorithm (GA) and Particle Swarm Optimization (PSO).

2.4 Multilayer Perceptron [6]
The basic multilayer perceptron (MLP) building unit is a model of an artificial neuron. This unit computes the weighted sum of the inputs plus the threshold weight and passes this sum through the activation function, usually a sigmoid. In a multilayer perceptron, the outputs of the units in one layer form the inputs to the next layer. The weights of the network are usually computed by training the network using the back propagation algorithm. The basic computational unit, often referred to as a neuron, consists of a set of synaptic weights, one for every input, plus a bias weight, a summer, and a nonlinear function referred to as the activation function.
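
The computational unit described above can be sketched in a few lines. This is a minimal illustration that assumes a sigmoid activation; the function names `neuron_output` and `layer_output` are hypothetical and not taken from the paper.

```python
import math


def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias, passed through a sigmoid."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))


def layer_output(inputs, weight_rows, biases):
    """Outputs of one layer; these become the inputs to the next layer."""
    return [neuron_output(inputs, row, b) for row, b in zip(weight_rows, biases)]


# The weighted sum here is 0.5*1 + (-0.25)*2 + 0 = 0, and sigmoid(0) = 0.5.
balanced = neuron_output([1.0, 2.0], [0.5, -0.25], 0.0)
hidden = layer_output([1.0, 2.0], [[0.5, -0.25], [1.0, 1.0]], [0.0, 0.0])
```

Stacking calls to `layer_output` gives the forward pass of a multilayer network.
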
Each unit computes the weighted sum of the inputs plus the bias weight and passes this sum through the activation function to calculate the output value as

$$y_j = f\left(\sum_{i} w_{ji} x_i + \theta_j\right) \qquad (1)$$

where $x_i$ are the inputs, $w_{ji}$ the synaptic weights, $\theta_j$ the bias weight and $f$ the activation function.

2.5 Radial Basis Function Network [6]
Radial basis function networks are feed-forward networks. They are trained using a supervised training algorithm. They are typically configured with a single hidden layer of units whose output function is selected from a class of functions called basis functions. The input layer is made up of source nodes (sensory units) whose number is equal to the dimension N of the input vector. The second layer is the hidden layer, which is composed of nonlinear units that are connected directly to all of the nodes in the input layer. Each hidden unit takes its input from all the nodes at the input layer. The hidden units contain a basis function, which has the parameters centre and width.

Observation and Analysis
The back propagation neural network is generally used as a basic network through which different variations of image compression schemes can be implemented, with different error functions and using overlapped blocks; these include hierarchical and adaptive back propagation neural networks. Later came neural network based adaptive image coding, which was basically developed from the mathematical iterations conventionally used for obtaining the K-L transform. To improve the compression performance, a multilayer feed forward network is used. It uses different optimization methods, of which Quasi-Newton is better but takes a long time. There are different optimization techniques which can be combined with basic networks in order to improve the compression efficiency. The survey is concluded by giving a brief idea of how authentication and protection can be incorporated into the neural network to enhance security.

3. Proposed System
Two different categories for improving the compression methods and their performance have been suggested.
In the first case, conventional methods like SPIHT, vector quantization (VQ) etc. can be used with some enhancements. In the second, a neural network is applied to develop the compression scheme, so that new methods can be developed and further research possibilities can be explored in the future.

In this work, image compression using multilayer neural networks is proposed. In the proposed system, the testing set consists of sub-images that are not included in the training set. The Levenberg-Marquardt (LM) algorithm is used for training. Image pixels are normalized before the compression process. If the learning factor is very large, the LM algorithm becomes steepest descent. This parameter is automatically adjusted at every iteration in order to secure convergence. Here, a modified version of the LM algorithm is proposed that provides similar performance while lacking the inconveniences of LM. It is more stable. The MSE between the target image and the reconstructed image should be as small as possible, so that the quality of the reconstructed image is close to that of the target image. The proposed method gives a high compression ratio.

(a) One to one mapping: For incorporating protection of the data, the one-to-one property of the neural network can be used. If two parameters interact, the result should be a unique value, stated as: (2)

(b) One way property:


For authentication, this property makes it easy to compute the output from the input while making it very difficult to compute the input from the output. The input P is composed of n elements while the output is a unique C as: (3) It is easy to compute C from a given P, but difficult to compute P from C.

3.1 Neural Network Compression
The compression process is described below:
1. Read the image pixels and normalize them to the range [0-1].
2. Divide the image into non-overlapping blocks.
3. Rasterize the pixels and apply them to the input layer.
4. Compute the outputs of the hidden layer units by multiplying the input vector by the weight matrix (V).
5. Store the outputs in a compressed file after renormalization.
6. If there are more image vectors, go to (4).
7. Stop.

Fig 3.1 Compression

The decompression process is described below:
1. Take the vectors one by one from the compressed image.
2. Normalize this vector.
3. Compute the outputs of the output layer units by multiplying the outputs of the hidden layer units by the weight matrix.
4. Derasterize the outputs of the output layer units to build the sub-image.
5. Return this sub-image to its proper location.
6. Renormalize this block and store it in the reconstructed file.
7. If there are more vectors, go to (1).

Fig 3.2 Decompression
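
The compression and decompression steps listed above can be sketched end to end. This is an illustrative sketch under several assumptions that are not stated in the source: each layer applies a sigmoid after the weight multiplication, "renormalization" means storing values as 8-bit integers, the image dimensions are multiples of the block size, and the weight matrices `V` and `W` are random placeholders rather than trained weights.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def layer(vector, weights):
    """One layer: sigmoid of each weight row dotted with the input vector."""
    return [sigmoid(sum(w * x for w, x in zip(row, vector))) for row in weights]


def compress(image, block, V):
    """Normalize, split into block x block tiles, rasterize and encode each tile."""
    codes = []
    for r in range(0, len(image), block):
        for c in range(0, len(image[0]), block):
            # Rasterize the tile row by row and normalize pixels to [0, 1].
            vec = [image[r + i][c + j] / 255.0
                   for i in range(block) for j in range(block)]
            hidden = layer(vec, V)
            # Assumed renormalization: store hidden outputs as 8-bit integers.
            codes.append((r, c, [round(h * 255) for h in hidden]))
    return codes


def decompress(codes, height, width, block, W):
    """Rebuild the image tile by tile from the stored hidden-layer outputs."""
    image = [[0] * width for _ in range(height)]
    for r, c, code in codes:
        hidden = [q / 255.0 for q in code]   # normalize the stored vector
        pixels = layer(hidden, W)            # outputs of the output layer
        for i in range(block):               # derasterize into its location
            for j in range(block):
                image[r + i][c + j] = round(pixels[i * block + j] * 255)
    return image


rng = random.Random(0)
BLOCK, HIDDEN = 4, 4   # 16 inputs mapped to 4 hidden units
V = [[rng.uniform(-0.5, 0.5) for _ in range(BLOCK * BLOCK)] for _ in range(HIDDEN)]
W = [[rng.uniform(-0.5, 0.5) for _ in range(HIDDEN)] for _ in range(BLOCK * BLOCK)]
image = [[(16 * r + 8 * c) % 256 for c in range(8)] for r in range(8)]
codes = compress(image, BLOCK, V)
restored = decompress(codes, 8, 8, BLOCK, W)
```

With untrained weights the restored image is not meaningful; the sketch only shows the data flow and the 16-to-4 reduction per block.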


4. Implementation

4.1 Preprocessing
The neural network requires real-valued inputs, and the sigmoid function of each neuron requires the input data to be in the range [0-1]. For this reason, the image data values must be normalized. Normalization is the process of linearly transforming image values from the range [0-255] into another range that is appropriate for the neural network. Segmentation is the process of dividing the image into non-overlapping blocks of equal size to simplify the learning/compressing processes. Image rasterization is the process of converting each sub-image from a two-dimensional block into a one-dimensional vector, to speed up the learning.

4.2 Neural Network Design
A multilayer feed forward network is used for compressing the images. The neural network is designed in such a way that the numbers of input and output layer neurons are set to 64. The number of hidden layer neurons is set to 16. The two weight matrices are initialized to small random numbers.

4.3 Training
The input image is split up into blocks or vectors of 4x4, 8x8 or 16x16 pixels. These vectors are used as inputs to the network. The network is provided with the expected output, and it is trained so that the coupling weights, {wij}, scale the input vector of N dimensions into a narrow channel of Y dimensions, which is less than N, at the hidden layer, and produce the optimum output value, which minimizes the quadratic error between the output and the desired one.
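
The training setup described above can be sketched as a small network that learns to reproduce its own input through a narrow hidden layer. To keep the example short, this sketch swaps in plain online gradient-descent back propagation rather than the Levenberg-Marquardt training used in the paper, and uses a 16-4-16 network (4x4 blocks) instead of 64-16-64. The synthetic ramp patterns, learning rate and epoch count are all assumptions.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, V, W):
    """Return hidden-layer and output-layer activations for input vector x."""
    hidden = [sigmoid(sum(v * xi for v, xi in zip(row, x))) for row in V]
    output = [sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in W]
    return hidden, output


def mean_squared_error(patterns, V, W):
    total = 0.0
    for x in patterns:
        _, out = forward(x, V, W)
        total += sum((o - t) ** 2 for o, t in zip(out, x))
    return total / (len(patterns) * len(patterns[0]))


def init_weights(n, n_hidden, seed=0):
    """Small random initial weights for both matrices."""
    rng = random.Random(seed)
    V = [[rng.uniform(-0.1, 0.1) for _ in range(n)] for _ in range(n_hidden)]
    W = [[rng.uniform(-0.1, 0.1) for _ in range(n_hidden)] for _ in range(n)]
    return V, W


def train(patterns, V, W, epochs=200, lr=0.5):
    """Online gradient descent; the target of each pattern is the pattern itself."""
    n, n_hidden = len(W), len(V)
    for _ in range(epochs):
        for x in patterns:
            hidden, out = forward(x, V, W)
            d_out = [(o - t) * o * (1 - o) for o, t in zip(out, x)]
            # Hidden deltas use the weights before they are updated.
            d_hid = [h * (1 - h) * sum(W[k][j] * d_out[k] for k in range(n))
                     for j, h in enumerate(hidden)]
            for k in range(n):
                for j in range(n_hidden):
                    W[k][j] -= lr * d_out[k] * hidden[j]
            for j in range(n_hidden):
                for i in range(n):
                    V[j][i] -= lr * d_hid[j] * x[i]
    return V, W


# Synthetic normalized 4x4 blocks: smooth ramps at 20 brightness levels.
patterns = [[min(1.0, max(0.0, k / 19 + 0.02 * (j - 8))) for j in range(16)]
            for k in range(20)]
V, W = init_weights(n=16, n_hidden=4)
error_before = mean_squared_error(patterns, V, W)
V, W = train(patterns, V, W)
error_after = mean_squared_error(patterns, V, W)
```

Comparing `error_before` and `error_after` shows whether the quadratic error between output and desired output has been reduced by training.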

Fig 4.1 LM algorithm

The LM algorithm has some disadvantages. If the learning factor is very large, the LM algorithm becomes steepest descent. This parameter is automatically adjusted at every iteration in order to secure convergence. The LM algorithm computes the Jacobian matrix J at each iteration step and requires the inversion of a square matrix, which must be repeated at every iteration. Hence, for large neural networks, the LM algorithm is not practical. Here, a modified version of the LM algorithm is proposed that provides similar performance while lacking the inconveniences of LM. A new performance index is introduced, (4) defined in terms of the desired value and the actual value of each network output for each pattern, together with the number of weights, the number of patterns P and the number of network outputs K. This index represents a global error and later leads to a significant reduction in the size of the matrix to be inverted at each iteration step [6]. The learning factor is modified to a factor of 0.01 applied to a term in E, where E is a K×1 matrix. If the error is small, the actual output approaches the desired output.

The trained network is now ready to be used for image compression, which is achieved by applying normalization and segmentation to the input images. To decompress the image, the compressed image is first renormalized and applied to the output of the hidden layer; the resulting output vector is then normalized and derasterized to reconstruct the image.
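
The role of the learning factor in LM can be illustrated with the standard (unmodified) Levenberg-Marquardt update, which solves (JᵀJ + μI)δ = Jᵀe for the weight change δ. The sketch below is not the modified algorithm proposed in the paper: it fits a hypothetical two-parameter model y = a·exp(b·x) so that the matrix to invert is only 2x2, and the rule of scaling μ by 10 is a common convention assumed here.

```python
import math


def model(x, a, b):
    return a * math.exp(b * x)


def sse(params, xs, ys):
    """Sum of squared errors between the data and the model."""
    a, b = params
    return sum((y - model(x, a, b)) ** 2 for x, y in zip(xs, ys))


def lm_step(params, xs, ys, mu):
    """Solve (J^T J + mu I) delta = J^T e for a two-parameter model."""
    a, b = params
    g11 = g12 = g22 = r1 = r2 = 0.0
    for x, y in zip(xs, ys):
        e = y - model(x, a, b)            # residual
        ja = math.exp(b * x)              # d model / d a
        jb = a * x * math.exp(b * x)      # d model / d b
        g11 += ja * ja
        g12 += ja * jb
        g22 += jb * jb
        r1 += ja * e
        r2 += jb * e
    g11 += mu                             # damping on the diagonal
    g22 += mu
    det = g11 * g22 - g12 * g12
    da = (r1 * g22 - g12 * r2) / det      # Cramer's rule for the 2x2 system
    db = (g11 * r2 - g12 * r1) / det
    return (a + da, b + db)


def fit(xs, ys, params, mu=0.01, iterations=50):
    """Accept a step only if it lowers the error; adapt mu at every iteration."""
    error = sse(params, xs, ys)
    for _ in range(iterations):
        trial = lm_step(params, xs, ys, mu)
        trial_error = sse(trial, xs, ys)
        if trial_error < error:
            params, error = trial, trial_error
            mu /= 10      # behave more like Gauss-Newton
        else:
            mu *= 10      # behave more like steepest descent
    return params, error


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [model(x, 2.0, 0.5) for x in xs]
start = (1.0, 0.3)
initial_error = sse(start, xs, ys)
fitted, final_error = fit(xs, ys, start)
```

For a network with many weights the same linear system has one row and column per weight, which is why the inversion cost at every iteration becomes the bottleneck discussed above.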


MSE and PSNR are the parameters which define the quality of an image reconstructed at the output layer of the neural network.

a) Mean Square Error (MSE)
The MSE between the target image and the reconstructed image should be as small as possible, so that the quality of the reconstructed image is close to that of the target image. Ideally, the mean square error is zero for perfect decompression. The compression ratio is defined as the ratio of the data fed to the input layer neurons to the data output from the hidden layer neurons. In this structure, 16 neurons were used in the hidden layer, so it results in a fixed 4:1 compression ratio.

b) Peak Signal to Noise Ratio (PSNR)
The term peak signal-to-noise ratio (PSNR) is an expression for the ratio between the maximum possible value (power) of a signal and the power of the distorting noise that affects the quality of its representation. The PSNR is computed by the following equation:

$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{\mathrm{MAX}^2}{\mathrm{MSE}}\right) \qquad (5)$$

where $\mathrm{MAX}$ is the maximum possible pixel value (255 for 8-bit images).

The compression ratio performance can be computed by

$$CR = \frac{N_i}{N_h} \qquad (6)$$

where $N_i$ is the number of input layer neurons and $N_h$ is the number of hidden layer neurons.
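
The three quality measures can be computed directly. The sketch below uses the standard MSE and PSNR definitions for 8-bit images; the function names and the tiny 2x2 example images are hypothetical.

```python
import math


def mse(original, reconstructed):
    """Mean square error between two images given as lists of pixel rows."""
    diffs = [(o - r) ** 2
             for row_o, row_r in zip(original, reconstructed)
             for o, r in zip(row_o, row_r)]
    return sum(diffs) / len(diffs)


def psnr(original, reconstructed, max_value=255):
    """Peak signal-to-noise ratio in dB; infinite when the images are identical."""
    error = mse(original, reconstructed)
    if error == 0:
        return math.inf
    return 10 * math.log10(max_value ** 2 / error)


def compression_ratio(input_neurons, hidden_neurons):
    """Ratio of input layer neurons to hidden layer neurons."""
    return input_neurons / hidden_neurons


target = [[10, 20], [30, 40]]
rebuilt = [[12, 18], [30, 44]]
error = mse(target, rebuilt)          # squared differences 4, 4, 0, 16
quality_db = psnr(target, rebuilt)
ratio = compression_ratio(64, 16)     # the 64-16-64 design from Section 4.2
```

A lower `error` and a higher `quality_db` both indicate a reconstruction closer to the target image.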

5. Conclusion

The need for effective data compression is evident in almost all applications where storage and transmission of digital images are involved. Neural networks offer the potential for a novel solution to the problem of compression through their ability to generate an internal data representation. The multilayer feed forward network is used due to its efficiency. Learning algorithms have a significant impact on the performance of neural networks, and their effects depend on the targeted application. The choice of a suitable learning algorithm is therefore application dependent. The performance can be increased by modifying the training algorithm so that it outperforms the existing method. Protection of image contents is as important as compression in order to maintain privacy. If any malicious modification occurs either in storage or in the transmission channel, such modifications should be identified. So authentication and protection can be incorporated into the proposed system in the future by utilizing the other properties of the neural network.

References

[1] J. Jiang, Image compression with neural networks - a survey, Signal Processing: Image Communication, 1999, 737-760.
[2] M. Egmont-Petersen, D. de Ridder, and H. Handels, Image processing with neural networks - a review, Pattern Recognition, vol. 35, no. 10, 2002, 2279-2301.
[3] F. Ibrahim, Image compression using multilayer feed forward artificial neural network and DCT, Journal of Applied Sciences Research, vol. 6, no. 10, 2010, 1554-1560.
[4] V. Gaidhane, V. Singh, Y. Hote, and M. Kumar, New approaches for image compression using neural network, Journal of Intelligent Learning Systems and Applications, vol. 3, no. 4, 2011, 220-229.
[5] N. Relhan, M. Jain, V. Sahni, J. Kaur, and S. Sharma, Analysis of optimization techniques for feed forward neural networks based image compression, International Journal of Computer Science and Information Technologies, vol. 3, no. 2, 2012.

Issn 2250-3005(online)

December| 2012

Page 58

You might also like

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (119)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (265)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (399)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (587)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2219)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (894)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Employee Confidentiality and Non-Disclosure AgreementDocument5 pagesEmployee Confidentiality and Non-Disclosure AgreementshamoojeeNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- 2010 HD Part Cat. LBBDocument466 pages2010 HD Part Cat. LBBBuddy ButlerNo ratings yet

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (73)

- Eye Bags ReliefDocument27 pagesEye Bags ReliefNatsu DragneelNo ratings yet

- Mrs. Universe PH - Empowering Women, Inspiring ChildrenDocument2 pagesMrs. Universe PH - Empowering Women, Inspiring ChildrenKate PestanasNo ratings yet

- GP Rating GSK Exit ExamDocument108 pagesGP Rating GSK Exit ExamMicle VM100% (4)

- Audi Q5: First Generation (Typ 8R 2008-2017)Document19 pagesAudi Q5: First Generation (Typ 8R 2008-2017)roberto100% (1)

- Analysis of Metamaterial Based Microstrip Array AntennaDocument2 pagesAnalysis of Metamaterial Based Microstrip Array AntennaInternational Journal of computational Engineering research (IJCER)No ratings yet

- The Effect of Bottom Sediment Transport On Wave Set-UpDocument10 pagesThe Effect of Bottom Sediment Transport On Wave Set-UpInternational Journal of computational Engineering research (IJCER)No ratings yet

- Parametric Study On Analysis and Design of Permanently Anchored Secant Pile Wall For Earthquake LoadingDocument16 pagesParametric Study On Analysis and Design of Permanently Anchored Secant Pile Wall For Earthquake LoadingInternational Journal of computational Engineering research (IJCER)No ratings yet

- Parametric Study On Analysis and Design of Permanently Anchored Secant Pile Wall For Earthquake LoadingDocument16 pagesParametric Study On Analysis and Design of Permanently Anchored Secant Pile Wall For Earthquake LoadingInternational Journal of computational Engineering research (IJCER)No ratings yet

- An Analysis of The Implementation of Work Safety System in Underpass Development Projects of The Intersection of Mandai Makassar-IndonesiaDocument5 pagesAn Analysis of The Implementation of Work Safety System in Underpass Development Projects of The Intersection of Mandai Makassar-IndonesiaInternational Journal of computational Engineering research (IJCER)No ratings yet

- The Influence of Price Offers For Procurement of Goods and Services On The Quality of Road ConstructionsDocument7 pagesThe Influence of Price Offers For Procurement of Goods and Services On The Quality of Road ConstructionsInternational Journal of computational Engineering research (IJCER)No ratings yet

- Stiffness Analysis of Two Wheeler Tyre Using Air, Nitrogen and Argon As Inflating FluidsDocument8 pagesStiffness Analysis of Two Wheeler Tyre Using Air, Nitrogen and Argon As Inflating FluidsInternational Journal of computational Engineering research (IJCER)No ratings yet

- Wave-Current Interaction Model On An Exponential ProfileDocument10 pagesWave-Current Interaction Model On An Exponential ProfileInternational Journal of computational Engineering research (IJCER)No ratings yet

- Analysis of The Pedestrian System in Jayapura City (A Case Study of Pedestrian Line On Percetakan StreetDocument9 pagesAnalysis of The Pedestrian System in Jayapura City (A Case Study of Pedestrian Line On Percetakan StreetInternational Journal of computational Engineering research (IJCER)No ratings yet

- An Analysis of The Noise Level at The Residential Area As The Impact of Flight Operations at The International Airport of Sultan Hasanuddin Maros in South Sulawesi ProvinceDocument4 pagesAn Analysis of The Noise Level at The Residential Area As The Impact of Flight Operations at The International Airport of Sultan Hasanuddin Maros in South Sulawesi ProvinceInternational Journal of computational Engineering research (IJCER)No ratings yet

- The Influence of Price Offers For Procurement of Goods and Services On The Quality of Road ConstructionsDocument7 pagesThe Influence of Price Offers For Procurement of Goods and Services On The Quality of Road ConstructionsInternational Journal of computational Engineering research (IJCER)No ratings yet

- A Real Time Abandoned Object Detection and Addressing Using IoTDocument5 pagesA Real Time Abandoned Object Detection and Addressing Using IoTInternational Journal of computational Engineering research (IJCER)No ratings yet

- Drag Optimization of Bluff Bodies Using CFD For Aerodynamic ApplicationsDocument8 pagesDrag Optimization of Bluff Bodies Using CFD For Aerodynamic ApplicationsInternational Journal of computational Engineering research (IJCER)No ratings yet

- A Real Time Abandoned Object Detection and Addressing Using IoTDocument5 pagesA Real Time Abandoned Object Detection and Addressing Using IoTInternational Journal of computational Engineering research (IJCER)No ratings yet

- Effect of The Nipah Mall Development On The Performance Roads of Urip Sumohardjo in Makassar CityDocument5 pagesEffect of The Nipah Mall Development On The Performance Roads of Urip Sumohardjo in Makassar CityInternational Journal of computational Engineering research (IJCER)No ratings yet

- Modeling The Frictional Effect On The Rip Current On A Linear Depth ProfileDocument6 pagesModeling The Frictional Effect On The Rip Current On A Linear Depth ProfileInternational Journal of computational Engineering research (IJCER)No ratings yet

- Multi-Response Optimization of WEDM Process Parameters of Monel 400 Using Integrated RSM and GADocument8 pagesMulti-Response Optimization of WEDM Process Parameters of Monel 400 Using Integrated RSM and GAInternational Journal of computational Engineering research (IJCER)No ratings yet

- Comparison of Different Evapotranspiration Estimation Techniques For Mohanpur, Nadia District, West BengalDocument7 pagesComparison of Different Evapotranspiration Estimation Techniques For Mohanpur, Nadia District, West BengalInternational Journal of computational Engineering research (IJCER)No ratings yet

- Investigation of Vibration On Suspension Systems at Different Load and Operating ConditionsDocument5 pagesInvestigation of Vibration On Suspension Systems at Different Load and Operating ConditionsInternational Journal of computational Engineering research (IJCER)No ratings yet

- Anatomy of The Traffic Accidents On The RoadDocument6 pagesAnatomy of The Traffic Accidents On The RoadInternational Journal of computational Engineering research (IJCER)No ratings yet

- Location of Zeros of PolynomialsDocument7 pagesLocation of Zeros of PolynomialsInternational Journal of computational Engineering research (IJCER)No ratings yet

- A Holistic Approach For Determining The Characteristic Flow On Kangsabati CatchmentDocument8 pagesA Holistic Approach For Determining The Characteristic Flow On Kangsabati CatchmentInternational Journal of computational Engineering research (IJCER)No ratings yet

- PCB Faults Detection Using Image ProcessingDocument5 pagesPCB Faults Detection Using Image ProcessingInternational Journal of computational Engineering research (IJCER)No ratings yet

- Time-History Analysis On Seismic Stability of Nuclear Island Bedrock With Weak InterlayerDocument9 pagesTime-History Analysis On Seismic Stability of Nuclear Island Bedrock With Weak InterlayerInternational Journal of computational Engineering research (IJCER)No ratings yet

- Urban Town PlanningDocument4 pagesUrban Town PlanningInternational Journal of computational Engineering research (IJCER)No ratings yet

- Modelling, Fabrication & Analysis of Pelton Turbine For Different Head and MaterialsDocument17 pagesModelling, Fabrication & Analysis of Pelton Turbine For Different Head and MaterialsInternational Journal of computational Engineering research (IJCER)No ratings yet

- Effect of Turbulence Model in Numerical Simulation of Single Round Jet at Low Reynolds NumberDocument16 pagesEffect of Turbulence Model in Numerical Simulation of Single Round Jet at Low Reynolds NumberInternational Journal of computational Engineering research (IJCER)No ratings yet

- An Evaluation of The Taxi Supply Management at The International Airport of Sultan Hasanuddin in Makassar - IndonesiaDocument4 pagesAn Evaluation of The Taxi Supply Management at The International Airport of Sultan Hasanuddin in Makassar - IndonesiaInternational Journal of computational Engineering research (IJCER)No ratings yet

- Wi MAX Deinter Leaver's Address Generation Unit Through FPGA ImplementationDocument3 pagesWi MAX Deinter Leaver's Address Generation Unit Through FPGA ImplementationInternational Journal of computational Engineering research (IJCER)No ratings yet

- Study of The Class and Structural Changes Caused by Incorporating The Target Class Guided Feature Subsetting in High Dimensional DataDocument13 pagesStudy of The Class and Structural Changes Caused by Incorporating The Target Class Guided Feature Subsetting in High Dimensional DataInternational Journal of computational Engineering research (IJCER)No ratings yet

- Fast Aldol-Tishchenko ReactionDocument5 pagesFast Aldol-Tishchenko ReactionRSLNo ratings yet

- Beauty ProductDocument12 pagesBeauty ProductSrishti SoniNo ratings yet

- WindSonic GPA Manual Issue 20Document31 pagesWindSonic GPA Manual Issue 20stuartNo ratings yet

- Bula Defense M14 Operator's ManualDocument32 pagesBula Defense M14 Operator's ManualmeNo ratings yet

- Dolni VestoniceDocument34 pagesDolni VestoniceOlha PodufalovaNo ratings yet

- Simba s7d Long Hole Drill RigDocument2 pagesSimba s7d Long Hole Drill RigJaime Asis LopezNo ratings yet

- Chapter 19 - 20 Continuous Change - Transorganizational ChangeDocument12 pagesChapter 19 - 20 Continuous Change - Transorganizational ChangeGreen AvatarNo ratings yet

- ITP Exam SuggetionDocument252 pagesITP Exam SuggetionNurul AminNo ratings yet

- Prlude No BWV in C MinorDocument3 pagesPrlude No BWV in C MinorFrédéric LemaireNo ratings yet

- Jesd8 15aDocument22 pagesJesd8 15aSridhar PonnurangamNo ratings yet

- Propoxur PMRADocument2 pagesPropoxur PMRAuncleadolphNo ratings yet

- Philippine Army BDU BidDocument2 pagesPhilippine Army BDU BidMaria TeresaNo ratings yet

- Duca Industries March 2023 pay slip for Dipankar MondalDocument1 pageDuca Industries March 2023 pay slip for Dipankar MondalPritam GoswamiNo ratings yet

- Display PDFDocument6 pagesDisplay PDFoneoceannetwork3No ratings yet

- A Princess of Mars Part 3Document4 pagesA Princess of Mars Part 3Sheila Inca100% (1)

- Special Power of Attorney: Benedict Joseph M. CruzDocument1 pageSpecial Power of Attorney: Benedict Joseph M. CruzJson GalvezNo ratings yet

- Form 709 United States Gift Tax ReturnDocument5 pagesForm 709 United States Gift Tax ReturnBogdan PraščevićNo ratings yet

- Shouldice Hospital Ltd.Document5 pagesShouldice Hospital Ltd.Martín Gómez CortésNo ratings yet

- Steps To Christ AW November 2016 Page Spreaad PDFDocument2 pagesSteps To Christ AW November 2016 Page Spreaad PDFHampson MalekanoNo ratings yet

- STS Prelim ExamDocument2 pagesSTS Prelim ExamMychie Lynne MayugaNo ratings yet

- EN 12449 CuNi Pipe-2012Document47 pagesEN 12449 CuNi Pipe-2012DARYONO sudaryonoNo ratings yet

- Felizardo C. Lipana National High SchoolDocument3 pagesFelizardo C. Lipana National High SchoolMelody LanuzaNo ratings yet

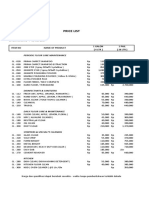

- Price List PPM TerbaruDocument7 pagesPrice List PPM TerbaruAvip HidayatNo ratings yet

- Ailunce HD1 Software ManualDocument33 pagesAilunce HD1 Software ManualMarc LaBarberaNo ratings yet