IJCAT International Journal of Computing and Technology, Volume 1, Issue 2, March 2014

ISSN : 2348 - 6090

www.IJCAT.org

177

Design of Human Emotion Recognition System

from Speech using Particle Swarm Optimization

1 Anjali Shelke, 2 Sonali Joshi

1 PG Scholar, Department of Electronics Engineering, G. H. Raisoni College of Engineering, Nagpur, India 440016

2 Assistant Professor, Department of Electronics Engineering, G. H. Raisoni College of Engineering, Nagpur, India 440016

Abstract - Emotional speech recognition is an area of great interest for human-computer interaction. The system architecture contains feature extraction and selection, an emotional speech database, and a classifier algorithm, which is used as an emotion classifier and recognizer. The system must be able to recognize the user's emotion and act accordingly. Many emotion recognition systems have been constructed by different researchers for the recognition of human emotions in spoken utterances. This paper proposes a technique for recognizing human emotion from speech using different feature extraction methods. For the experimental study, the database consists of emotional speech samples, and the features extracted from these samples are energy, pitch, and Mel frequency cepstrum coefficients (MFCC). The algorithm is used to differentiate emotions such as anger, happiness, sadness, fear, surprise, and the normal state. This paper proposes the design of a human emotion recognition system from speech using Particle Swarm Optimization.

Keywords - Introduction, feature extracted, Proposed

Work.

1. Introduction

The term bio-inspired has been introduced to describe the strong relation between a particular system or algorithm, proposed to solve a specific problem, and a biological system that follows a similar procedure or has similar capabilities. Bio-inspired computing represents a class of algorithms focusing on efficient computing, e.g. for optimization processes and pattern recognition. Bio-inspired algorithms are generally easy to understand and simple to implement, and they often outperform classical optimization methods. The field is closely related to swarm intelligence (SI), artificial immune systems (AIS) and cellular signaling pathways.

Speech emotion recognition is one of the latest challenges in speech processing. Besides human facial expressions, speech has proven to be one of the most promising modalities for the automatic recognition of human emotions. Affective computing is concerned with emotions and machines. By giving machines the ability to recognize different emotions and to behave in accordance with the emotional condition of the user, human-computer interfaces will become more efficient.

Speech is a complex signal which contains information about the message, speaker, language and emotions. Emotions are produced in speech by the nervous system, consciously or unconsciously. An emotional speech recognition system identifies the emotional as well as the physical state of a human being from his or her voice. Emotion recognition is gaining attention due to its widespread applications in various domains: intelligent tutoring systems, lie detection, emotion recognition in call centers, sorting of voice mail, and diagnostic tools for speech therapists.

2. Speech Emotion Recognition System

Speech emotion recognition aims to automatically identify

the emotional state of a human being from voice. Fig.1

indicates the speech emotion system components.

Fig. 1: Block diagram of the speech emotion recognition system

The speech emotion recognition system comprises five main components: emotional speech input, feature extraction, feature selection, classification, and emotion output. Even humans cannot easily classify natural emotions, so it is difficult to expect machines to achieve a higher rate of correct classification. A typical emotion set contains 300 emotional states, which are decomposed into six primary emotions: happiness, sadness, surprise, fear, anger and neutral. The success of speech emotion recognition depends on the naturalness of the database.

Two databases are publicly available: the Danish Emotional Speech database (DES) [4] and the Berlin Emotional Database (EMO-DB) [2, 12]. Of these two, DES is used here. With respect to authenticity, there seem to be three types of databases used in speech emotion recognition (SER) research. Type one is acted emotional speech with human labeling, obtained by asking an actor to speak with a predefined emotion. Type two is authentic emotional speech with human labeling, coming from real-life systems (for example call centers) [3]. Type three is elicited emotional speech with self-report instead of labeling [10].

3. Emotional Speech Database

In this research study we use the Danish Emotional Speech (DES) database as the emotional speech database. It includes expressions by four actors familiar with radio theatre, two male and two female. In this database speech is expressed in five emotional states: anger, happiness, neutral, sadness and surprise.

4. Feature Extracted

A speech signal is composed of a large number of parameters that indicate its emotional content. Changes in the feature parameters indicate changes in the emotions. Therefore, the proper choice of feature vectors is one of the most important tasks in emotion recognition. There are several approaches to automatic recognition of emotion in speech using different feature vectors. In this research work we use two feature extraction methods.

The features extracted here are:

4.1. Statistical Feature

Energy and pitch are two important features for speech emotion recognition. Energy is the most basic feature of the speech signal. By calculating the energy over the whole speech sample, we can obtain its statistics, such as mean value, maximum value, variance, variation range and energy contour. The pitch frequency can be calculated in each speech frame, and the pitch statistics can then be obtained over the whole speech sample. These statistical values reflect the global properties of the characteristic parameters. For our experiment we calculated the following descriptive statistics: minimum, maximum, average, standard deviation and variance. The pitch is estimated using cepstral analysis.
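The statistical features described above can be illustrated with a short Python sketch (the paper's own implementation is in MATLAB and is not shown). It computes short-time energy and a cepstrum-based pitch estimate per frame, then the five descriptive statistics. The function names, the 10 ms hop, and the 60-500 Hz pitch search range are assumptions made for this illustration, not values taken from the paper.

```python
import numpy as np

def frame_signal(x, frame_len, hop):
    """Split a 1-D signal into overlapping frames, one frame per row."""
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    return x[idx]

def cepstral_pitch(frame, fs, fmin=60.0, fmax=500.0):
    """Estimate pitch in Hz from the largest real-cepstrum peak.

    fmin and fmax are assumed search limits, not values from the paper.
    """
    n_fft = 1 << (2 * len(frame) - 1).bit_length()  # power of two >= 2 * frame length
    windowed = frame * np.hamming(len(frame))
    log_mag = np.log(np.abs(np.fft.rfft(windowed, n=n_fft)) + 1e-10)
    cepstrum = np.fft.irfft(log_mag, n=n_fft)
    q_lo, q_hi = int(fs / fmax), int(fs / fmin)  # quefrency range in samples
    peak = q_lo + int(np.argmax(cepstrum[q_lo:q_hi]))
    return fs / peak

def describe(values):
    """Minimum, maximum, average, standard deviation and variance."""
    return {
        "min": float(np.min(values)),
        "max": float(np.max(values)),
        "mean": float(np.mean(values)),
        "std": float(np.std(values)),
        "var": float(np.var(values)),
    }

def statistical_features(x, fs, frame_ms=25.6, hop_ms=10.0):
    """Per-utterance statistics of frame energy and frame pitch."""
    frame_len = int(fs * frame_ms / 1000)
    hop = int(fs * hop_ms / 1000)
    frames = frame_signal(x, frame_len, hop)
    energy = np.sum(frames ** 2, axis=1)
    pitch = np.array([cepstral_pitch(f, fs) for f in frames])
    return {"energy": describe(energy), "pitch": describe(pitch)}
```

Calling `statistical_features(x, fs)` on a mono signal `x` sampled at `fs` Hz returns one dictionary of statistics for energy and one for pitch. In practice unvoiced frames would usually be excluded before the pitch statistics are computed; this sketch does not do that.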

Fig. 2: Result of statistical feature extraction for anger, with fundamental frequency (a) 916.84 Hz, (b) 759.036 Hz and (c) 746.193 Hz.

4.2. Mel Frequency Cepstrum Coefficient (MFCC)

MFCC is a representation of the short-term power spectrum of a sound, based on a linear cosine transform of a log power spectrum on a nonlinear mel scale of frequency.

Fig. 3: Block diagram of the MFCC feature extraction process

Pre-processing: The continuous-time speech signal is sampled at the sampling frequency. The first stage in MFCC feature extraction is to boost the amount of energy in the high frequencies. This pre-emphasis is done using a filter [4].

Framing: This is the process of segmenting the speech samples obtained from analog-to-digital conversion (ADC) into small frames with a length in the range of 20-40 msec. Framing allows the non-stationary speech signal to be segmented into quasi-stationary frames, which in turn allows Fourier transformation of the speech signal. This works because the speech signal is known to exhibit quasi-stationary behaviour within a short time period of 20-40 msec [4].

Windowing: The windowing step applies a window to each individual frame in order to minimize the signal discontinuities at the beginning and the end of each frame [4].
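The pre-emphasis, framing and windowing steps above can be sketched in Python as follows. The paper does not state the pre-emphasis filter coefficient, the frame overlap or the window type, so the first-order filter with `alpha = 0.97`, the 10 ms hop and the Hamming window used here are common choices assumed for illustration only.

```python
import numpy as np

def pre_emphasis(x, alpha=0.97):
    """First-order high-pass filter y[n] = x[n] - alpha * x[n-1].

    alpha = 0.97 is an assumed, commonly used value.
    """
    return np.append(x[0], x[1:] - alpha * x[:-1])

def frame_and_window(x, fs, frame_ms=25.6, hop_ms=10.0):
    """Cut the signal into overlapping frames and apply a Hamming window."""
    frame_len = int(fs * frame_ms / 1000)
    hop = int(fs * hop_ms / 1000)  # assumed hop size
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    # The window tapers both ends of each frame to reduce edge discontinuities
    return x[idx] * np.hamming(frame_len)
```

For a one-second signal at 16 kHz, `frame_and_window(pre_emphasis(x), 16000)` yields a matrix with one windowed 25.6 ms frame per row.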

FFT: The Fast Fourier Transform (FFT) algorithm is widely used for evaluating the frequency spectrum of speech. The FFT converts each frame of N samples from the time domain into the frequency domain [4].

Mel filter bank and frequency warping: The mel filter bank consists of overlapping triangular filters whose cut-off frequencies are determined by the centre frequencies of the two adjacent filters. The filters have linearly spaced centre frequencies and fixed bandwidth on the mel scale [4].
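A minimal Python sketch of the FFT and mel filter bank steps is given below. The mel-scale formula `2595 * log10(1 + f / 700)` is one widely used definition and is an assumption here, since the paper does not state which formula it uses. The FFT size of 512 and the function names are likewise illustrative.

```python
import numpy as np

def hz_to_mel(f):
    """Convert Hz to mel (assumed O'Shaughnessy-style formula)."""
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_filters, n_fft, fs):
    """Triangular filters with centres equally spaced on the mel scale.

    Each filter starts at the centre of its left neighbour and ends at the
    centre of its right neighbour.
    """
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(fs / 2.0), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_points) / fs).astype(int)
    fbank = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, centre, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, centre):      # rising edge
            fbank[i - 1, k] = (k - left) / (centre - left)
        for k in range(centre, right):     # peak and falling edge
            fbank[i - 1, k] = (right - k) / (right - centre)
    return fbank

def mel_energies(frames, fs, n_filters=16, n_fft=512):
    """Power spectrum of each frame, weighted by the mel filter bank."""
    power = np.abs(np.fft.rfft(frames, n=n_fft, axis=1)) ** 2 / n_fft
    return power @ mel_filterbank(n_filters, n_fft, fs).T
```

With sixteen filters, as used later in the paper, each windowed frame is reduced to sixteen mel-band energies.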

Take Logarithm: The logarithm turns multiplication into addition. This step therefore converts the multiplication of magnitudes in the Fourier transform into addition [4].

Take Discrete Cosine Transform: The DCT is used to orthogonalise the filter energy vectors. Because of this orthogonalisation step, the information in the filter energy vector is compacted into its first few components, so the vector can be shortened to that number of components [4].
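The logarithm and DCT steps can be sketched in Python as below. The type-II DCT is written out explicitly so that the example depends only on NumPy. The function names are illustrative, and keeping ten coefficients follows the 10-order MFCC mentioned later in the paper.

```python
import numpy as np

def dct_ii(x, n_out):
    """Unnormalised type-II DCT along the last axis, first n_out coefficients."""
    n = x.shape[-1]
    k = np.arange(n_out)[:, None]
    m = np.arange(n)[None, :]
    basis = np.cos(np.pi * k * (2 * m + 1) / (2 * n))  # shape (n_out, n)
    return x @ basis.T

def mfcc_from_mel_energies(mel_energy, n_coeffs=10):
    """Log-compress the mel-band energies, then decorrelate them with a DCT."""
    log_energy = np.log(mel_energy + 1e-10)  # small offset avoids log(0)
    return dct_ii(log_energy, n_coeffs)
```

Because the DCT concentrates most of the information in the low-order coefficients, truncating to `n_coeffs` shortens each feature vector while losing little of the spectral envelope.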

In this work the input is a .wav file containing emotional speech utterances from the Danish Emotional Speech database. The five emotions used are anger, happiness, sadness, fear and the neutral state. First, the concatenated voiced speech signal is segmented into frames of 25.6 ms length. Next, the logarithm of the magnitude of the discrete Fourier transform (DFT) is estimated for every frame. The spectrum of each segment is then filtered with sixteen triangular band-pass filters whose centre frequencies follow the mel frequency scale. An inverse DFT (IDFT) is applied to obtain the 10-order MFCC coefficients. Finally, the extracted MFCC dataset is reduced to its two-dimensional principal components, which are used as the input vector for training and testing the PSO.
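The final reduction to two principal components can be sketched as follows. This is a generic PCA via singular value decomposition, assumed here for illustration; the paper does not describe how its principal component analysis is computed.

```python
import numpy as np

def pca_project(features, n_components=2):
    """Project feature vectors (one per row) onto their leading principal components."""
    centred = features - features.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance
    _, _, vt = np.linalg.svd(centred, full_matrices=False)
    return centred @ vt[:n_components].T
```

Applied to a matrix of 10-order MFCC vectors, `pca_project` returns one two-dimensional point per row, which could then serve as classifier input.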

All of these processes are implemented in a MATLAB program.

Fig. 4: Waveforms of extracted features: (a) filtered signal; (b) MFCC and spectrum of speech signal

5. Classifier Selection

Classification-based approaches focus on designing a classifier that determines distinctive boundaries between emotions. Emotional speech detection therefore requires a classifier that allows quick and accurate emotion identification. Currently, the most frequently used classifiers are linear discriminant classifiers (LDC), k-nearest neighbor (k-NN), Gaussian mixture models (GMM) [6], support vector machines (SVM) [4], decision tree algorithms and hidden Markov models (HMMs) [5]. Various studies have shown that choosing the appropriate classifier can enhance the overall performance of the system.

A Gaussian mixture model is trained on the desired data set from the database. GMMs are known to capture the distribution of data points in the input feature space, so they are suitable for developing emotion recognition models when a large number of feature vectors is available. Given a set of inputs, a GMM refines the weights of each distribution through the expectation-maximization algorithm. GMMs are suitable for emotion recognition models using spectral features, because the decision regarding the emotion category of a feature vector is based on its probability of coming from the feature vectors of the specific model [6].
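The GMM decision rule described above can be sketched in Python as follows: one mixture is fitted per emotion with expectation-maximization, and a test utterance is assigned to the emotion whose model gives the highest log-likelihood. Diagonal covariances, two components per model, a fixed iteration count and all function names are simplifying assumptions made for this illustration.

```python
import numpy as np

def component_log_prob(x, weights, means, variances):
    """Log of weight times Gaussian density, for every sample and component."""
    diff = x[:, None, :] - means[None, :, :]
    log_gauss = -0.5 * np.sum(diff ** 2 / variances
                              + np.log(2 * np.pi * variances), axis=2)
    return np.log(weights) + log_gauss

def fit_diag_gmm(x, n_components=2, n_iter=50, seed=0):
    """Fit a diagonal-covariance GMM with expectation-maximization."""
    rng = np.random.default_rng(seed)
    n = x.shape[0]
    means = x[rng.choice(n, n_components, replace=False)]
    variances = np.tile(x.var(axis=0) + 1e-6, (n_components, 1))
    weights = np.full(n_components, 1.0 / n_components)
    for _ in range(n_iter):
        # E-step: responsibility of each component for each sample
        log_p = component_log_prob(x, weights, means, variances)
        log_p -= log_p.max(axis=1, keepdims=True)
        resp = np.exp(log_p)
        resp /= resp.sum(axis=1, keepdims=True)
        # M-step: re-estimate weights, means and variances
        nk = resp.sum(axis=0) + 1e-10
        weights = nk / nk.sum()
        means = (resp.T @ x) / nk[:, None]
        variances = np.maximum((resp.T @ x ** 2) / nk[:, None] - means ** 2, 1e-6)
    return weights, means, variances

def log_likelihood(x, gmm):
    """Total log-likelihood of all feature vectors in x under one GMM."""
    log_p = component_log_prob(x, *gmm)
    m = log_p.max(axis=1, keepdims=True)
    return float(np.sum(m[:, 0] + np.log(np.exp(log_p - m).sum(axis=1))))

def classify(x, models):
    """Return the label whose GMM explains the feature vectors best."""
    return max(models, key=lambda label: log_likelihood(x, models[label]))
```

Here `models` is a dictionary mapping each emotion label to the tuple returned by `fit_diag_gmm` on that emotion's training vectors.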

The artificial neural network (ANN) is another classifier used in many pattern recognition applications. ANNs are known to be more effective at modeling nonlinear mappings, and their classification performance is usually better than that of HMMs and GMMs when the number of training examples is relatively low. However, the classification accuracy reported for ANNs is relatively low compared to other classifiers. ANN-based classifiers may achieve a correct classification rate of 51.19% in speaker-dependent recognition and 52.87% in speaker-independent recognition [5].

Another classifier is the support vector machine (SVM). SVM classifiers use kernel functions to nonlinearly map the original features to a high-dimensional space where the data can be well separated by a linear classifier. SVM classifiers are widely used in many pattern recognition applications and have been shown to outperform other well-known classifiers [4].

In this work, with a set of features available, a classifier is used to distinguish between the different emotional states. Particle Swarm Optimization is used as the classifier. First, the classifier is trained with inputs from different emotional states. After training, it is used to recognize new inputs. The PSO training process consists of labeling the extracted features and training the PSO.

6. Particle Swarm Optimization

Bio-inspired computation algorithms are excellent tools for global optimization [1]. Particle swarm optimization (PSO) is a global optimization algorithm that originally took its inspiration from biological examples of swarming, flocking and herding phenomena in vertebrates. It uses a number of agents (particles) that constitute a swarm moving around in the search space looking for the best solution. Each particle keeps track of its coordinates in the solution space. PSO is a population-based optimization tool that works through the cooperation of the individuals. PSO is reported to converge faster than other evolutionary algorithms (GA, SA and ACO) and to require fewer parameters for computing the optimum value [11].
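The particle swarm described above can be sketched in Python as below. This is the common global-best PSO with an inertia weight, given as a minimal sketch rather than the exact variant used in the paper. The parameter values (`w = 0.7`, `c1 = c2 = 1.5`), the search bounds and the function name are assumptions.

```python
import numpy as np

def pso_minimize(objective, dim, n_particles=30, n_iter=200,
                 bounds=(-5.0, 5.0), w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimise objective(position) with a global-best particle swarm."""
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    pos = rng.uniform(lo, hi, size=(n_particles, dim))
    vel = np.zeros((n_particles, dim))
    # Each particle remembers the best position it has visited so far
    pbest_pos = pos.copy()
    pbest_val = np.array([objective(p) for p in pos])
    g = int(np.argmin(pbest_val))
    gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Velocity: inertia + pull towards own best + pull towards swarm best
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        pos = np.clip(pos + vel, lo, hi)
        vals = np.array([objective(p) for p in pos])
        improved = vals < pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        g = int(np.argmin(pbest_val))
        if pbest_val[g] < gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    return gbest_pos, float(gbest_val)
```

For example, `pso_minimize(lambda p: float(np.sum(p ** 2)), dim=3)` searches for the minimum of a simple sphere function, whose true minimum is at the origin.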

7. Proposed Work

Fig. 5: Generalized block diagram of speech emotion recognition [10]

In this research work, the speech signal is used as the input signal. Statistical and Mel frequency cepstrum coefficient (MFCC) features are extracted. These features are then given to the Particle Swarm Optimization, which serves both as the training model and as the classifier that distinguishes the different emotions.
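The paper does not specify how PSO is turned into a classifier, so the following Python sketch shows only one possible reading, stated here as an assumption: each particle encodes one centroid per emotion class, the fitness is the mean distance between each labeled training vector and its own class centroid, and a new vector is assigned to the class of the nearest centroid. All names and parameter values are hypothetical.

```python
import numpy as np

def train_pso_centroids(x, y, n_particles=30, n_iter=100,
                        w=0.7, c1=1.5, c2=1.5, seed=0):
    """Search for one centroid per class with PSO (assumed formulation)."""
    rng = np.random.default_rng(seed)
    classes = np.unique(y)
    k, d = len(classes), x.shape[1]
    y_idx = np.searchsorted(classes, y)  # class index of every training sample

    def fitness(flat):
        centroids = flat.reshape(k, d)
        # Mean distance from each sample to the centroid of its labeled class
        return np.mean(np.linalg.norm(x - centroids[y_idx], axis=1))

    lo, hi = np.tile(x.min(axis=0), k), np.tile(x.max(axis=0), k)
    pos = rng.uniform(lo, hi, size=(n_particles, k * d))
    vel = np.zeros_like(pos)
    pbest = pos.copy()
    pbest_val = np.array([fitness(p) for p in pos])
    gbest = pbest[np.argmin(pbest_val)].copy()
    for _ in range(n_iter):
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = pos + vel
        vals = np.array([fitness(p) for p in pos])
        better = vals < pbest_val
        pbest[better], pbest_val[better] = pos[better], vals[better]
        gbest = pbest[np.argmin(pbest_val)].copy()
    return classes, gbest.reshape(k, d)

def predict(x, classes, centroids):
    """Assign each feature vector to the class of its nearest centroid."""
    dist = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(dist, axis=1)]
```

Training on labeled feature vectors with `train_pso_centroids(x, y)` and then calling `predict` on unseen vectors mirrors the train-then-recognize flow described in the text, under the stated assumptions.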

8. Flow Chart

Fig. 6: Proposed flow chart of emotion recognition from speech using Particle Swarm Optimization

9. Conclusion

This paper discussed recent work in the field of speech emotion recognition, drawing on the referenced papers, and reviewed the performance of several classifiers. The success of emotion recognition depends on appropriate feature extraction as well as proper classifier selection for the sample emotional speech. Classifier performance needs to be improved for speaker-independent systems. In this research work we extracted the features that will be given as input to Particle Swarm Optimization, which will be used as a classifier and may give good results compared to other classifiers. The application area of emotion recognition from speech is expanding as it opens new means of communication between human and machine.

References

[1] Sonali Navghare, Shubhangi Giripunje, Preeti Bajaj, "Biologically Inspired Computing System for Facial Emotion Detection: a Design Approach", International Journal of Computer Applications (0975-8887).

[2] Simina Emerich, Eugen Lupu, Anca Apatean, "Bimodal Approach in Emotion Recognition using Speech and Facial Expressions", IEEE, 2009.

[3] Shubhangi Giripunje, Ashish Panat, "Speech Recognition for Emotion with Neural Network: A Design Approach", 8th International Conference, KES 2004.

[4] Vaishali M. Chavan, V. V. Gohokar, "Speech Emotion Recognition by using SVM-Classifier", International Journal of Engineering and Advanced Technology (IJEAT).

[5] Firoz Shah A., Raji Sukumar A., Babu Anto P., "Automatic Emotion Recognition from Speech using Artificial Neural Networks with Gender-Dependent Databases", IEEE, 2009, DOI 10.1109/ACT.2009.4.

[6] Angeliki Metallinou, Sungbok Lee and Shrikanth Narayanan, "Audio-Visual Emotion Recognition using Gaussian Mixture Models for Face and Voice", IEEE, 2008, DOI 10.1109/ISM.2008.40.

[7] Mandar Gilke, Pramod Kachare, Rohit Kothalikar, Varun Pius Rodrigues and Madhavi Pednekar, "MFCC-based Vocal Emotion Recognition Using ANN", ICEEI 2012.

[8] Björn Schuller, Gerhard Rigoll, and Manfred Lang, "Hidden Markov Model-Based Speech Emotion Recognition", IEEE, 2003.

[9] Hyoun-Joo Go, Keun-Chang Kwak, Dae-Jong Lee, Myung-Geun Chun, "Emotion Recognition from the Facial Image and Speech Signal", SICE Annual Conference in Fukui, August 4-6, 2003, Fukui University, Japan.

[10] S. Ramakrishnan, "Recognition of Emotion from Speech: A Review".

[11] Hemlata S. Urade, Rahila Patel, "Study and Analysis of Particle Swarm Optimization: A Review", 2nd National Conference on Information and Communication Technology (NCICT), 2011.

[12] Kamran Soltani, Raja Noor Ainon, "Speech Emotion Detection Based on Neural Networks", IEEE, 2007.

[13] Yongjin Wang and Ling Guan, "An Investigation of Speech-Based Human Emotion Recognition", IEEE, 2004.

You might also like

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Various Pitch Extraction Techniques of Audio Files For Audio Information RetrievalDocument4 pagesVarious Pitch Extraction Techniques of Audio Files For Audio Information Retrievaleditor_ijcatNo ratings yet

- Parallelization of BFS Graph Algorithm Using CUDADocument6 pagesParallelization of BFS Graph Algorithm Using CUDAeditor_ijcatNo ratings yet

- Multiple Disease Diagnosis by Using Fuzzy Pattern Applications On Large DatabaseDocument8 pagesMultiple Disease Diagnosis by Using Fuzzy Pattern Applications On Large Databaseeditor_ijcatNo ratings yet

- Missing Value Estimation and Impact On Heterogeneous Data SetDocument4 pagesMissing Value Estimation and Impact On Heterogeneous Data Seteditor_ijcatNo ratings yet

- Various Methods For Preventing Flooding Attack in MANET A Comparative AnalysisDocument3 pagesVarious Methods For Preventing Flooding Attack in MANET A Comparative Analysiseditor_ijcatNo ratings yet

- Text Cryptosystem Based On Elliptic Curve Cryptography For NetworksDocument4 pagesText Cryptosystem Based On Elliptic Curve Cryptography For Networkseditor_ijcatNo ratings yet

- NIOS II Based Embedded Web Server Development For Networking ApplicationsDocument5 pagesNIOS II Based Embedded Web Server Development For Networking Applicationseditor_ijcatNo ratings yet

- Android Help Alert System Using Speech Recognition PDFDocument5 pagesAndroid Help Alert System Using Speech Recognition PDFeditor_ijcatNo ratings yet

- A Distributed Method To Protect Disruption Tolerant Networks From The Effects of Packet Overflow PDFDocument6 pagesA Distributed Method To Protect Disruption Tolerant Networks From The Effects of Packet Overflow PDFeditor_ijcatNo ratings yet

- Review On Brain Tumor Detection Techniques From MRI Images and Rough Set TheoryDocument6 pagesReview On Brain Tumor Detection Techniques From MRI Images and Rough Set Theoryeditor_ijcatNo ratings yet

- Multi Region Extraction Image Segmentation Based On Centroid Clustering TechniqueDocument5 pagesMulti Region Extraction Image Segmentation Based On Centroid Clustering Techniqueeditor_ijcatNo ratings yet

- Performance Evaluation of Wireless Sensor Network Under Black Hole AttackDocument5 pagesPerformance Evaluation of Wireless Sensor Network Under Black Hole Attackeditor_ijcatNo ratings yet

- Preserving Privacy and Illegal Content Distribution For Cloud EnvironmentDocument7 pagesPreserving Privacy and Illegal Content Distribution For Cloud Environmenteditor_ijcatNo ratings yet

- User Deadline Based Job Scheduling in Grid Computing PDFDocument8 pagesUser Deadline Based Job Scheduling in Grid Computing PDFeditor_ijcatNo ratings yet

- Parallel K Means Clustering Based On Hadoop and HamaDocument5 pagesParallel K Means Clustering Based On Hadoop and Hamaeditor_ijcatNo ratings yet

- Implementation of Modified RSA Cryptosystem Based On Offline Storage and Prime Number PDFDocument3 pagesImplementation of Modified RSA Cryptosystem Based On Offline Storage and Prime Number PDFeditor_ijcatNo ratings yet

- Two Ways Security On Cloud Computing Using Cryptography and Intrusion Detection A Review PDFDocument3 pagesTwo Ways Security On Cloud Computing Using Cryptography and Intrusion Detection A Review PDFeditor_ijcatNo ratings yet

- Analysis of Noise Exposure From Different Machines by Graph Based Clustering Method PDFDocument6 pagesAnalysis of Noise Exposure From Different Machines by Graph Based Clustering Method PDFeditor_ijcatNo ratings yet

- Self Improved ODMP Protocol (ODMP E) PDFDocument6 pagesSelf Improved ODMP Protocol (ODMP E) PDFeditor_ijcatNo ratings yet

- Securing Images Using Encryption Techniques A Survey PDFDocument5 pagesSecuring Images Using Encryption Techniques A Survey PDFeditor_ijcatNo ratings yet

- Towards A Statistical Context For Source Obscurity in Sensor Network PDFDocument4 pagesTowards A Statistical Context For Source Obscurity in Sensor Network PDFeditor_ijcatNo ratings yet

- Study On Security Breaches in PHP Applications PDFDocument5 pagesStudy On Security Breaches in PHP Applications PDFeditor_ijcatNo ratings yet

- Securing Websites Through Multi CAPTCHA PDFDocument4 pagesSecuring Websites Through Multi CAPTCHA PDFeditor_ijcatNo ratings yet

- Power Quality Monitoring of Induction Motor Using Lab VIEW PDFDocument8 pagesPower Quality Monitoring of Induction Motor Using Lab VIEW PDFeditor_ijcatNo ratings yet

- Recognizing Character Using Bayesian Network in Data Mining PDFDocument5 pagesRecognizing Character Using Bayesian Network in Data Mining PDFeditor_ijcatNo ratings yet

- Selection of Accurate & Robust Model For Binary Classification Problems PDFDocument4 pagesSelection of Accurate & Robust Model For Binary Classification Problems PDFeditor_ijcatNo ratings yet

- Secure Sharing of Data Over Cloud Computing Using Different Encryption Schemes An Overview PDFDocument4 pagesSecure Sharing of Data Over Cloud Computing Using Different Encryption Schemes An Overview PDFeditor_ijcatNo ratings yet

- Relative Study On Malayalam English Translation Using Transfer Based Approach PDFDocument6 pagesRelative Study On Malayalam English Translation Using Transfer Based Approach PDFeditor_ijcatNo ratings yet

- Real Time Eye Tracking System For People With Several Disabilities Using Single Web Cam PDFDocument4 pagesReal Time Eye Tracking System For People With Several Disabilities Using Single Web Cam PDFeditor_ijcatNo ratings yet

- Proposed Model of Encryption Technique Using Block Cipher Concept To Enhance Avalanche Effect PDFDocument5 pagesProposed Model of Encryption Technique Using Block Cipher Concept To Enhance Avalanche Effect PDFeditor_ijcatNo ratings yet

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (74)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- Dwi Hadianti, S.E: Mypersonal Profile Work ExperienceDocument1 pageDwi Hadianti, S.E: Mypersonal Profile Work ExperienceBumn Kuala Tungkal HebatNo ratings yet

- MOCK Exams Submission TestDocument7 pagesMOCK Exams Submission TestPam MshweshweNo ratings yet

- Oracle® Service Fulfillment Manager: Implementation Guide Release 12.2Document148 pagesOracle® Service Fulfillment Manager: Implementation Guide Release 12.2yadavdevenderNo ratings yet

- Bosch Mems Inertial Sensor Smi700 Product Info SheetDocument2 pagesBosch Mems Inertial Sensor Smi700 Product Info SheetDarío Gabriel LipicarNo ratings yet

- 701MRF de PDFDocument92 pages701MRF de PDFPetros TsenesNo ratings yet

- Designing A 3D Jewelry ModelDocument4 pagesDesigning A 3D Jewelry ModelAbdulrahman JradiNo ratings yet

- f2806x Dev User GuideDocument148 pagesf2806x Dev User GuidedavidNo ratings yet

- Ajcpath125 0016Document10 pagesAjcpath125 0016Asad AliNo ratings yet

- Operating System VirtualizationDocument13 pagesOperating System VirtualizationSachin JainNo ratings yet

- ICF 3 - LAS - W6 - Inserting Clip Art, WordArt and PicturesDocument6 pagesICF 3 - LAS - W6 - Inserting Clip Art, WordArt and PicturesAljohn LumapasNo ratings yet

- Ha FailoverDocument42 pagesHa FailoverVishal BharadwajNo ratings yet

- UntitledDocument7 pagesUntitledIndra Rian JayaNo ratings yet

- QUINT-PS24DC48DC5 DC To DC ConverterDocument9 pagesQUINT-PS24DC48DC5 DC To DC ConverterMoutaz KhaterNo ratings yet

- Kisi-Kisi Web Technologies 2023Document36 pagesKisi-Kisi Web Technologies 2023Erhamna noor riskiNo ratings yet

- Alp19.2 ManualDocument83 pagesAlp19.2 ManualCuong TranNo ratings yet

- Techniques To Reduce Timing Violations Using Clock Tree Optimizations in Synopsys ICC2Document10 pagesTechniques To Reduce Timing Violations Using Clock Tree Optimizations in Synopsys ICC2Pardhasaradhi Damarla100% (1)

- Creating Delegate NodeDocument26 pagesCreating Delegate NodeRama Krishna BNo ratings yet

- Assignment Cyber Law E.N 54Document6 pagesAssignment Cyber Law E.N 54Tajamul IslamNo ratings yet

- 1746-Pm001a-En-p 1747-BAS Module BASIC Programming ManualDocument144 pages1746-Pm001a-En-p 1747-BAS Module BASIC Programming ManualjaysonlkhNo ratings yet

- The Paper Doctor TEXTODocument101 pagesThe Paper Doctor TEXTOondasdeforma67% (3)

- Itutor Prospectus 2013-2015 - FEB 16 - Itutor Fee StructureDocument3 pagesItutor Prospectus 2013-2015 - FEB 16 - Itutor Fee StructureAnshuman DashNo ratings yet

- Implementation With Ruby Features: One Contains Elements Smaller Than V The Other Contains Elements Greater Than VDocument12 pagesImplementation With Ruby Features: One Contains Elements Smaller Than V The Other Contains Elements Greater Than VXyz TkvNo ratings yet

- AutoCAD NotesDocument17 pagesAutoCAD NotesKriss_PsNo ratings yet

- Presentations - SPED - Society of Piping Engineers and DesignersDocument2 pagesPresentations - SPED - Society of Piping Engineers and DesignersEsteban CastellanoNo ratings yet

- Simple 4k Wallpaper - Google SearchDocument1 pageSimple 4k Wallpaper - Google SearchElix3rNo ratings yet

- CE-EMC CERT SHEM1806004552IT Network Camera DS-2CD2045FWD-I 20180710Document1 pageCE-EMC CERT SHEM1806004552IT Network Camera DS-2CD2045FWD-I 20180710DaniellMargaritNo ratings yet

- Math Mammoth - 1Document113 pagesMath Mammoth - 1ramjd100% (2)

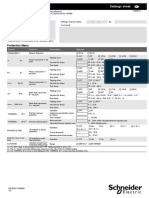

- VIP400 Settings Sheet NRJED311208ENDocument2 pagesVIP400 Settings Sheet NRJED311208ENVăn NguyễnNo ratings yet

- BitLocker Step by StepDocument33 pagesBitLocker Step by StepwahyuabadiNo ratings yet

- Lec 4 Paper - Cellular Neural NetworkDocument13 pagesLec 4 Paper - Cellular Neural NetworkPraveena AnnaduraiNo ratings yet