ITERATED PRISONERS DILEMMA

Machine Learning in Iterated Prisoner's Dilemma using Evolutionary Algorithms

Shashi Mittal

Department of Computer Science and Engineering

Indian Institute of Technology, Kanpur.

Kanpur Genetic Algorithms Laboratory

Indian Institute of Technology, Kanpur

OUTLINE:

1. Description of the game

2. Strategies

3. Machine Learning with Single Objective EA

4. Machine Learning with Multi-Objective EA

5. Results obtained

6. Comparison of the two methods

7. Analysis of results, Implications

8. Conclusions

THE GAME

1. Two players in a game

2. Each player can either Cooperate (C) or Defect (D).

3. The score received by each player depends on the moves made by both of them.

Kanpur Genetic Algorithms Laboratory

Indian Institute of Technology, Kanpur

Shashi Mittal

ITERATED PRISONERS DILEMMA

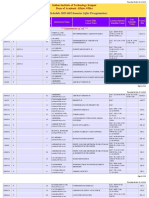

PAYOFF MATRIX

Each cell lists (Player 1 payoff, Player 2 payoff).

                       Player 2: Cooperate    Player 2: Defect
Player 1: Cooperate    R=3, R=3               S=0, T=5
Player 1: Defect       T=5, S=0               P=1, P=1

R: REWARD
S: SUCKER
T: TEMPTATION
P: PENALTY

T > R > P > S, (T + S)/2 < R
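
The payoff matrix and its two conditions can be written down directly. The following is a minimal sketch: the payoff values come from the slide, while the names PAYOFF and is_prisoners_dilemma are illustrative and not part of the original work.

```python
# Payoff values as given on the slide.
T, R, P, S = 5, 3, 1, 0

# PAYOFF[(player1_move, player2_move)] -> (player1_score, player2_score)
PAYOFF = {
    ("C", "C"): (R, R),
    ("C", "D"): (S, T),
    ("D", "C"): (T, S),
    ("D", "D"): (P, P),
}


def is_prisoners_dilemma(t, r, p, s):
    """Check the two conditions T > R > P > S and (T + S)/2 < R."""
    return t > r > p > s and (t + s) / 2 < r


print(is_prisoners_dilemma(T, R, P, S))
```

The second condition means that taking turns exploiting each other pays less, on average, than steady mutual cooperation.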

WHY DILEMMA ?

If the opponent plays Defect then

1. If I play Cooperate - I get the Sucker's payoff - the worst situation

2. If I play Defect - Low score for mutual defection, but better than cooperating

If the opponent plays Cooperate then

1. If I play Cooperate - Reward for mutual cooperation

2. If I play Defect - I get the Temptation payoff - the best scenario

Hence, whatever the opponent does, the best option is to play Defect

WHY DILEMMA ? (continued)

By the same reasoning, the opponent also plays Defect, and hence both receive the Penalty payoff (P = 1 each).

- But both of them could have done better by cooperating (R = 3 each) !

Hence the dilemma !!
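
The reasoning above can be checked mechanically. This is a small sketch using the payoff values from the payoff matrix slide; MY_PAYOFF and best_reply are illustrative names, not taken from the original.

```python
T, R, P, S = 5, 3, 1, 0

# My payoff for (my_move, opponent_move).
MY_PAYOFF = {("C", "C"): R, ("C", "D"): S, ("D", "C"): T, ("D", "D"): P}


def best_reply(opponent_move):
    """Return the move that maximizes my payoff against a fixed opponent move."""
    return max("CD", key=lambda mine: MY_PAYOFF[(mine, opponent_move)])


# Defect is the best reply to both Cooperate and Defect ...
replies = {opp: best_reply(opp) for opp in "CD"}
print(replies)

# ... yet mutual defection pays each player less than mutual cooperation.
print(MY_PAYOFF[("D", "D")], "<", MY_PAYOFF[("C", "C")])
```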

ITERATED PRISONERS DILEMMA

The situation is more interesting when the players play the game iteratively for a certain number of moves.

The number of moves should not be known to the two players.

The winner is the player with the highest score in the end.

Usually, there are many players in the fray, and there is a Round Robin Tournament among all the players - the player with the highest score wins.
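
An iterated match and a round-robin tournament can be sketched as follows. This is only an illustration under stated assumptions: the three strategies, the function names, and the choice to pair each player only with the others (no self-play) are made for this example. Strategies see the two move histories but not the total number of moves.

```python
import itertools

PAYOFF = {
    ("C", "C"): (3, 3), ("C", "D"): (0, 5),
    ("D", "C"): (5, 0), ("D", "D"): (1, 1),
}


def always_cooperate(my_hist, opp_hist):
    return "C"


def always_defect(my_hist, opp_hist):
    return "D"


def tit_for_tat(my_hist, opp_hist):
    # Cooperate first, then copy the opponent's previous move.
    return opp_hist[-1] if opp_hist else "C"


def play_match(strat_a, strat_b, rounds=200):
    """Play one iterated game and return (score_a, score_b)."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strat_a(hist_a, hist_b)
        move_b = strat_b(hist_b, hist_a)
        pay_a, pay_b = PAYOFF[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


def round_robin(players, rounds=200):
    """Play every pair of distinct players once and sum each player's scores."""
    totals = {name: 0 for name in players}
    for (name_a, strat_a), (name_b, strat_b) in itertools.combinations(players.items(), 2):
        score_a, score_b = play_match(strat_a, strat_b, rounds)
        totals[name_a] += score_a
        totals[name_b] += score_b
    return totals


totals = round_robin({"AllC": always_cooperate, "AllD": always_defect, "TFT": tit_for_tat})
print(totals)
```

In this tiny three-player field Always Defect collects the highest total, because it can exploit Always Cooperate. The ranking depends on who else is playing.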

PROPERTIES OF IPD

Non-zero-sum game

- Both players can win or lose simultaneously !!

No universal best strategy

- The optimal strategy depends upon the opponents in the fray

IPD IN LIFE AND NATURE

Symbiosis, predator-prey interactions and similar behavior can all be captured by IPD.

Business strategies

Politics

War scenarios

Even zero-sum games can sometimes degenerate into a Prisoner's Dilemma !! (Dawkins, The Selfish Gene).

STRATEGIES

Always Cooperate, Always Defect

Random

Tit For Tat, Tit for Two Tats

Naive Prober, Remorseful Prober

and many more . . .

AXELROD'S STUDY

To study the best strategy for IPD, Axelrod held a competition around 1980 in which strategies were submitted by game theorists.

In the first competition, 15 entries were submitted. The winner was Tit for Tat, submitted by Anatol Rapoport.

Axelrod held a second round of competition. Again the winner was Tit for Tat !

Many complex strategies performed badly !!

MEASURING PERFORMANCE OF PLAYERS

Suppose there are 15 players, each player plays against every other player as well as against itself, and each game lasts 200 moves.

- Maximum score : 15000 (15 games x 200 moves x T = 5)

- Minimum score : 0

However, neither extreme is achieved in practice.

Benchmark score : The score a player would receive in one game if both players always cooperated (200 moves x R = 3, i.e. 600).

Divide the score of a player by the number of players, then express it as a percentage of the Benchmark Score.

For example : If a player scores 7500, its average per game is 7500/15 = 500, i.e. 500/600 = 83% of the Benchmark Score in this case.
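
The arithmetic of this slide can be reproduced with a short sketch. The function name and default arguments are illustrative; the numbers (15 players, 200 moves, R = 3, T = 5) are those quoted above.

```python
def percent_of_benchmark(total_score, num_players=15, moves_per_game=200, reward=3):
    """Express a tournament total as a percentage of the benchmark score."""
    benchmark = reward * moves_per_game          # mutual cooperation for a whole game
    average_per_game = total_score / num_players
    return 100.0 * average_per_game / benchmark


max_total = 15 * 200 * 5   # Temptation payoff on every move of every game
pct = percent_of_benchmark(7500)
print(max_total, round(pct))
```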

ENCODING STRATEGIES

Axelrod's method :

The next move depends upon the behavior of both the parties during the previous three moves.

Four possibilities for each move :

- CC or R for Reward

- CD or S for Sucker

- DC or T for Temptation

- DD or P for Penalty

Code the particular behavioral sequence as a 3-letter string.

e.g. RRR represents the sequence where both parties cooperated over the first three moves

SSP : The first player was played for a sucker twice and then defected (mutual defection on the third move).

ENCODING STRATEGIES (contd . . . )

Use the 3-letter sequence to generate a number between 0 and 63 by interpreting it as an integer in base 4.

- e.g. if CC = R = 0, DC = T = 1, CD = S = 2 and DD = P = 3 then RRR will decode as 0 and SSP will decode as 2x16 + 2x4 + 3 = 43.

Strategy string : 64-letter string of Cs and Ds (i.e. 64 bits) where the ith bit gives the move to play after the ith behavioral sequence.
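
The base-4 decoding can be sketched in a few lines. The digit assignment is the one given in the example above; the name history_index is illustrative.

```python
# Digit assignment from the slide: R = 0, T = 1, S = 2, P = 3.
OUTCOME_DIGIT = {"R": 0, "T": 1, "S": 2, "P": 3}


def history_index(outcomes):
    """Interpret a 3-letter outcome string (oldest move first) as a base-4 integer."""
    index = 0
    for letter in outcomes:
        index = index * 4 + OUTCOME_DIGIT[letter]
    return index


print(history_index("RRR"), history_index("SSP"), history_index("PPP"))
```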

PREMISES

Behavior in the first three moves of the game is undefined in the above scheme.

- Add six bits to the coding to specify a strategy's premises, i.e. its assumption about the pregame behavior.

Together, each 70-bit string thus represents a particular strategy.

How to find the optimal strategy among all the possible strings ??
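
One way to read a move out of a 70-letter strategy string is sketched below. The layout is an assumption made for this example, since the slides do not specify it: the first 64 letters are the lookup table, and the last 6 letters are the premise, stored as three (own move, opponent move) pairs, oldest first.

```python
OUTCOME_DIGIT = {("C", "C"): 0, ("D", "C"): 1, ("C", "D"): 2, ("D", "D"): 3}  # R, T, S, P


def next_move(strategy, my_moves, opp_moves):
    """Return 'C' or 'D' for a 70-letter strategy given the moves played so far."""
    if len(strategy) != 70:
        raise ValueError("expected 64 table letters plus 6 premise letters")
    table, premise = strategy[:64], strategy[64:]
    # Assumed premise layout: own, opponent, own, opponent, own, opponent.
    mine = (list(premise[0::2]) + list(my_moves))[-3:]
    theirs = (list(premise[1::2]) + list(opp_moves))[-3:]
    index = 0
    for m, o in zip(mine, theirs):
        index = index * 4 + OUTCOME_DIGIT[(m, o)]
    return table[index]


# Tit for Tat in this encoding: cooperate whenever the opponent's last move was C
# (last outcome R or T), with a premise of three rounds of mutual cooperation.
tft = "".join("C" if i % 4 in (0, 1) else "D" for i in range(64)) + "CCCCCC"
print(next_move(tft, [], []), next_move(tft, ["C"], ["D"]))
```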

WHY USE GA ?

Total number of all possible strategies is very high - 2^70.

- Exhaustive search would take more time than the lifetime of the Universe !!

Fitness function : Not continuous, so classical optimization methods will not work

GAs emulate the natural process of evolution.
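
A rough back-of-the-envelope check of the search-space claim is given below. The evaluation rate of 1000 strategies per second is purely an assumption for illustration (each evaluation requires playing a tournament), and the age of the Universe is taken as roughly 13.8 billion years.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600
AGE_OF_UNIVERSE_YEARS = 1.38e10      # approximate

num_strategies = 2 ** 70
evals_per_second = 1000              # assumed rate, not from the slides

years_needed = num_strategies / evals_per_second / SECONDS_PER_YEAR
print(num_strategies)
print(f"{years_needed:.2e} years")
print(years_needed > AGE_OF_UNIVERSE_YEARS)
```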

WHY USE MULTI OBJECTIVE GA ?

Non-zero-sum game : My score and the opponent's score may not be directly related !!!

Treat Self Score and Opponent Score as two different objectives : Maximize the first and minimize the second.
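
With two objectives, strategies are compared by Pareto dominance rather than by a single number. The sketch below assumes each strategy is summarized as a pair (self score, opponent score). The first three points are the score pairs quoted in the captions of Figures 7 to 9; the fourth point is hypothetical and added only to show a dominated solution.

```python
def dominates(a, b):
    """True if a is at least as good as b in both objectives and better in one.

    Points are (self_score, opponent_score): maximize the first, minimize the second.
    """
    no_worse = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return no_worse and strictly_better


def non_dominated(points):
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


points = [(441, 220), (383, 194), (290, 114), (300, 250)]  # last one is hypothetical
front = non_dominated(points)
print(front)
```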

SEARCHING OPTIMAL STRATEGIES

Axelrod's approach :

Maximize the self score using Single Objective GA

Our Approach :

Maximize self score and minimize opponent score separately using Single Objective GA

Optimize both the objectives simultaneously using NSGA-II and compare with the results found above.

USING SINGLE OBJECTIVE GA

Single Objective GA used to

- Maximize self score

- Minimize opponent score

Use the strategies obtained above in the Round Robin Tournament and observe their behavior.
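
A single-objective GA over 70-letter strategy strings can be sketched as below. This is a generic illustration, not the configuration used in the slides: the operators (binary tournament selection, one-point crossover, per-letter mutation, one elite), the parameter values, and the placeholder fitness are all assumptions. In the actual study the fitness would be the self score (or the negated opponent score) obtained by playing the strategy in the tournament.

```python
import random


def evolve(fitness, length=70, pop_size=20, generations=30, p_mut=0.02, seed=0):
    """Maximize `fitness` over strings of 'C' and 'D'; returns (best, best_per_generation)."""
    rng = random.Random(seed)
    pop = ["".join(rng.choice("CD") for _ in range(length)) for _ in range(pop_size)]
    best_per_gen = []
    for _ in range(generations):
        ranked = sorted(pop, key=fitness, reverse=True)
        best_per_gen.append(fitness(ranked[0]))
        next_pop = [ranked[0]]                       # elitism: keep the current best
        while len(next_pop) < pop_size:
            # Binary tournament selection of two parents.
            p1 = max(rng.sample(pop, 2), key=fitness)
            p2 = max(rng.sample(pop, 2), key=fitness)
            cut = rng.randrange(1, length)           # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Flip each letter with probability p_mut.
            child = "".join(
                ("D" if g == "C" else "C") if rng.random() < p_mut else g
                for g in child
            )
            next_pop.append(child)
        pop = next_pop
    return max(pop, key=fitness), best_per_gen


# Placeholder fitness for demonstration only: the number of 'C' letters.
best, history = evolve(lambda s: s.count("C"))
print(best.count("C"), history[0], history[-1])
```

To minimize a quantity such as the opponent score with the same routine, pass its negative as the fitness.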

Figure 1: Plot of the mean fitness of the population when self score is maximized. (Axes: Generation, 0 to 200, versus Mean fitness, about 320 to 440.)

Figure 2: Plot of the maximum fitness of a sample in the population when self score is maximized. (Axes: Generation, 0 to 200, versus Maximum fitness, about 400 to 445.)

Figure 3: Plot of the mean fitness of the population when the opponent's score is minimized. (Axes: Generation, 0 to 200, versus Mean fitness, about 100 to 400.)

Figure 4: Plot of the minimum fitness of a sample in the population when the opponent's score is minimized. (Axes: Generation, 0 to 200, versus Minimum fitness, about 100 to 220.)

PERFORMANCE OF SINGLE OBJECTIVE GA

When the strategies obtained from maximizing self score are used in the round robin tournament :

- These strategies always emerge as the winner, defeating the Tit For Tat strategy

- Their score in the tournament is nearly 98% of the benchmark score !

USING NSGA-II

Two objectives :

Maximize self score

Minimize opponents score

NSGA-II algorithm with niching used.
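
NSGA-II is commonly described as preserving diversity along a front through a crowding distance. Whether the "niching" mentioned on this slide refers to exactly this mechanism is an assumption. The sketch below computes that distance for points given as (self score, opponent score), using the score pairs quoted in the captions of Figures 7 to 9 as sample input.

```python
def crowding_distance(points):
    """Return one crowding distance per point; boundary points get infinity."""
    n = len(points)
    distance = [0.0] * n
    if n == 0:
        return distance
    for m in range(len(points[0])):                      # loop over objectives
        order = sorted(range(n), key=lambda i: points[i][m])
        lo, hi = points[order[0]][m], points[order[-1]][m]
        distance[order[0]] = distance[order[-1]] = float("inf")
        if hi == lo:
            continue                                     # no spread in this objective
        for k in range(1, n - 1):
            gap = points[order[k + 1]][m] - points[order[k - 1]][m]
            distance[order[k]] += gap / (hi - lo)        # normalized neighbor gap
    return distance


front = [(441, 220), (383, 194), (290, 114)]
print(crowding_distance(front))
```

Points with a larger distance lie in less crowded regions of the front and are preferred when a front has to be truncated.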

Figure 5: The initial random solutions (shown with +) and the Pareto-optimal solutions (shown with x). (Axes: Self Score versus Opponent Score, each from 100 to 500.)

Pareto optimal front and other solutions

Figure 6: Pareto-optimal front, together with the single objective EA results (the upper and the left vertices of the triangle) and a few other strategies. (Axes: Player score versus Opponent score, each from 100 to 500. Labeled points: Always cooperate, Tit for tat, Always defect.)

KEY OBSERVATIONS

Pareto-optimal front is obtained

- There is a trade-off between self score and opponent's score

Convergence towards Pareto-optimal front

- NSGA-II is able to search a complex front, starting from a random population

PERFORMANCE OF NSGA-II

When the strategy with the highest self score is fielded in the round-robin tournament, it emerges as the winner by a significant margin.

- Scores about 99% of the benchmark score

- Also defeats the strategy obtained by Single Objective GA

The remaining strategies, with lower self score, do not perform as well, and rank lower in the round robin tournament.

The strategy with the lowest self score behaves like the Always Defect strategy.

RELATIONSHIP AMONG STRATEGIES

As such, there does not appear to be any relationship among the

different Pareto-optimal strategies.

DDCDDDCCCDDCDDDDDDCDDDCCCDCDDCDDDDCDCDCCDCDC. . .

DDCCDDDCDCCDDDCCDDCDCDDDDDDDDCDDDDCCCDCCDCDD. . .

DDCDDDDCCCCCDDDDDDCDDDDDCDDCDCDDDDCCCDDDDDDC. . .

FINDING RELATIONSHIP AMONG STRATEGIES

Play the strategy in the round robin tournament

Keep track of how many times a particular bit position is used in the tournament

Plot the frequency versus bit position, and then analyze
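
Counting how often each bit position is consulted can be sketched as follows. The example assumes the R = 0, T = 1, S = 2, P = 3 digit assignment from the encoding slide and, for simplicity, counts only moves made once three real moves have been played (premise bits are ignored). The function name and this simplification are illustrative, not taken from the original.

```python
from collections import Counter

OUTCOME_DIGIT = {("C", "C"): 0, ("D", "C"): 1, ("C", "D"): 2, ("D", "D"): 3}  # R, T, S, P


def bit_usage(my_moves, opp_moves):
    """Count which of the 64 table positions a player consulted during one game."""
    usage = Counter()
    for t in range(3, len(my_moves)):
        index = 0
        # The three previous rounds, oldest first, form a base-4 number.
        for m, o in zip(my_moves[t - 3:t], opp_moves[t - 3:t]):
            index = index * 4 + OUTCOME_DIGIT[(m, o)]
        usage[index] += 1
    return usage


# Six rounds of mutual cooperation: position 0 (RRR) is consulted three times.
print(bit_usage("CCCCCC", "CCCCCC"))
# After S, S, P the fourth move is read from position 43.
print(bit_usage("CCDD", "DDDC"))
```

Summing these counters over all games of a tournament gives the frequency-versus-bit-position data that the slide proposes to plot.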

Figure 7: Plot for the move distribution with a particular strategy (data series "freq1.txt"). Self score = 441, Opponent Score = 220. (Horizontal axis 0 to 70, vertical axis 0 to 600.)

Figure 8: Plot for the move distribution with a particular strategy (data series "freq5.txt"). Self score = 383, Opponent Score = 194. (Horizontal axis 0 to 70, vertical axis 0 to 800.)

Figure 9: Plot for the move distribution with a particular strategy (data series "freq8.txt"). Self score = 290, Opponent Score = 114. (Horizontal axis 0 to 70, vertical axis 0 to 1400.)

Plot for the most frequently used bit positions in the strategy string

Figure 10: Plot of the frequently used bit positions in strategy strings. Lower one is for optimized strategies, upper is for random strategies. (Axes: Bit position, 0 to 70, versus Strategy.)

KEY OBSERVATIONS

Only some of the bit positions are used by the optimal strategies; the rest are not used.

Random strategies do not show such a trend; their distribution is practically uniform.

Thus, the Pareto-optimal strategies share some common properties.

CONCLUSIONS

Self score and Opponent score are not directly related; both need to be optimized

Multi-objective algorithm is better suited to find optimal strategies in this game

Game theoretic result : Desired behavior of strategies can be studied by observing which bit positions of the strategy string are frequently used.

THANK YOU !

Questions are welcome.
