
International Journal on Recent and Innovation Trends in Computing and Communication

Volume: 3 Issue: 1

ISSN: 2321-8169

394 - 400

_______________________________________________________________________________________________

Advanced Artery / Vein Classification System in Retinal Images for Diabetic Retinopathy

Leshmi Satheesh
ECE Dept, Mohandas College of Engineering & Technology, Trivandrum, Kerala
leshmi24@gmail.com

Priyanka Prabhakar
ECE Dept., Regional Centre IHRD, Trivandrum, Kerala
priya27982@gmail.com

Aneesh R P
ECE Dept, Regional Centre IHRD, Trivandrum, Kerala
aneeshprakkulam@gmail.com

Abstract- Diabetic retinopathy is the single largest cause of sight loss and visual impairment in eighteen to sixty-five year olds. Screening programs for the estimated 1 to 6% of the diabetic population have been demonstrated to be cost- and sight-saving, but unfortunately screening resources are inadequate. An automatic screening system could help to solve this resource shortfall. The retinal vasculature consists of the arteries and veins, with their tributaries, that are visible within the retinal images. This paper proposes a graph-based artery/vein classification system in retinal images for diabetic retinopathy, based on the structural information extracted from the retinal vasculature. The method first extracts a graph from the vascular tree and then decides the type of each intersection point (graph node). Based on these node types, one of two labels is assigned to each vessel segment. Finally, the A/V classes are assigned to the subgraph labels by extracting a set of intensity features and using an artificial neural network.

Keywords-Artery/vein classification, Diabetic retinopathy, graph nodes, neural network.

__________________________________________________*****_________________________________________________

I. INTRODUCTION

The retinal blood vessels are vulnerable to the abnormal metabolism of glucose. In the long run, this metabolic malfunction, referred to as diabetes, alters the structure and consequently the function of the retinal vessels. These changes in the vessels trigger a sequence of injuries or lesions to the retina itself that are together called Diabetic Retinopathy (DR). The diagnosis, monitoring and screening of diabetic injuries to the retina overwhelms current ocular health care, resulting in loss of sight and visual impairment. The identification of the blood vessels therefore constitutes a crucial step or building block in the design of systems for automatic screening or analysis. The structure of the eye is shown in Fig. 1.

The fundus of the eye covers the inside surface of the eye, opposite the lens, and includes the retina, macula and fovea, blind spot and posterior pole. These anatomical structures can be examined with an ophthalmoscope and/or by fundus photography. The term fundus may also be inclusive of Bruch's membrane and the choroid. The colour of the fundus varies both between and within species. Among primates the fundus may be red, blue, green, yellow or orange; only the human fundus is red. The most prominent abnormal features of diabetic retinopathy are exudates and blot hemorrhages. The detection of the optic disc (OD), blood vessels and exudates is introduced in this paper. The eye's fundus is the only part of the human body where the microcirculation can be observed directly.

Figure 1. Schematic illustration of the eye ball and the organisation of the tissues within it.

Figure 2. Fundus of the eye showing main stages of retinopathy with disorders

The classifier building procedure includes three main parts: feature extraction, feature selection and finally classifier construction. In line with the analysis of the retinal image, the textural and statistical information of the image is extracted as classification features. The classifier is built from a back-propagation neural network that has 3 layers. Based on the degree of clarity of the retinal image, the retinal images are


IJRITCC | January 2015, Available @ http://www.ijritcc.org


classified into normal and abnormal categories. The initial analysis results have great potential to improve the diagnostic efficiency of the ophthalmologist and to reduce the physical and economic burden on patients and society.

Graphs are a powerful representation for structured information, which can be easily characterized by its subparts and the relations between them. This paper proposes a graph-based artery/vein classification system in retinal images for diabetic retinopathy using an artificial neural network. The method first extracts a graph from the vascular tree and, based on this, decides the type of each intersection point (graph node). Based on these node types, one of two labels is assigned to each vessel segment. Finally, the A/V classes are assigned to the subgraph labels by extracting a set of intensity features and using an artificial neural network. Abnormalities in the retina change its structure and appearance; these are shown in Figures 3 to 8.

Figure 3. Monochrome fundus images showing twisted and overlapping vessels

Figure 4. a. Microaneurysms, small and punctate red dots; b. IRMA, irregular calibres and tortuosity of the smaller retinal vessels

Figure 5. a. Hard exudates, areas of lipoprotein mal-absorption with yellowish, well defined contours; b. Soft exudates (SE), areas of axoplasmic accumulation

Figure 6. a. Drusen, deep, whitish lesions more regular in outline than exudates; b. Haemorrhages (HA), dispersed red blotches with irregular perimeters

Figure 7. a. Pre-retinal haemorrhage, extravasated blood between retina and vitreous (ETDRS); b. Venous beading, irregular distension of large and/or smaller retinal vein calibres

Figure 8. a. Neovascularisation Elsewhere (NVE), growth of capillary-like new vessels on the fundus but not at the OD; b. Neovascularisation Disk (NVD), development of microvessels into the vitreo-retinal interface at the OD

II. LITERATURE REVIEW

The wrong identification of vessels may result in a large variation of these measurements, leading to a wrong clinical diagnosis. Many previous attempts have been made for this purpose. In [1] the problem is addressed as automatically identifying true vessels as a post-processing step to vascular structure segmentation. The authors model the segmented vascular structure as a vessel segment graph and formulate the problem of identifying vessels as one of finding the optimal forest in the graph given a set of constraints. An operator for feature extraction based on the optical and spatial properties of the objects to be recognized is introduced in [2]. The gray-level profile of the cross section of a blood vessel is approximated by a Gaussian-shaped curve. The concept of matched filter detection of signals is used to detect piecewise linear segments of blood vessels in these images. A novel representation called orientation space is proposed in [3], which is derived by adding the orientation axis to the abscissa and the ordinate of the image. The orientation space representation is constructed by treating the orientation parameter, to which Gabor filters can


be tuned, as a continuous variable. The problem of segmenting lines at multiple orientations is dealt with by thresholding 3D images in the orientation space and then detecting the connected components therein. In this way, X-junctions and T-junctions can be separated effectively. Curve grouping can also be accomplished. The segmentation of mathematically modeled X-, T- and L-junctions is demonstrated and analyzed. The sensitivity limits of the method are also discussed. Experimental results using both synthesized and real images show the method to be effective for junction segmentation and curve grouping.

In [4] an algorithm called fuzzy convergence is used to determine the origination of the blood vessel network. [5] presents an automated method to identify arteries and veins in dual-wavelength retinal fundus images recorded at 570 and 600 nm. Dual-wavelength imaging provides both structural and functional features that can be exploited for identification. The processing begins with automated tracing of the vessels from the 570-nm image. The 600-nm image is registered to this image, and structural and functional features are computed for each vessel segment. The relative strength of the vessel central reflex is used as the structural feature. The central reflex phenomenon, caused by light reflection from vessel surfaces that are parallel to the incident light, is especially pronounced at longer wavelengths for arteries compared to veins. A dual-Gaussian is used to model the cross-sectional intensity profile of vessels. The model parameters are estimated using a robust M-estimator, and the relative strength of the central reflex is then computed from these parameters. The functional feature exploits the fact that arterial blood is more oxygenated than venous blood. This motivates the use of the ratio of the vessel optical densities (ODs) from images at oxygen-sensitive and oxygen-insensitive wavelengths as a functional indicator. Finally, the structural and functional features are combined in a classifier to identify the type of the vessel. The authors experimented with four different classifiers, and the best result was given by a support vector machine (SVM) classifier.

Optic disc (OD) detection is a main step in developing automated screening systems for diabetic retinopathy. In [6] a method to automatically detect the position of the OD in digital retinal fundus images is proposed. The method starts by normalizing luminosity and contrast throughout the image using illumination equalization and adaptive histogram equalization respectively. The OD detection algorithm is based on matching the expected directional pattern of the retinal blood vessels. Hence, a simple matched filter is proposed to roughly match the direction of the vessels in the OD vicinity. The retinal vessels are segmented using a simple and standard 2-D Gaussian matched filter. Consequently, a vessel direction map of the segmented retinal vessels is obtained using the same segmentation algorithm. The segmented vessels are then thinned and filtered using local intensity to finally represent the OD-center candidates. The difference between the proposed matched filter, resized into four different sizes, and the vessels' directions in the area surrounding each OD-center candidate is measured. The minimum difference provides an estimate of the OD-center coordinates. The proposed method was evaluated using a subset of the STARE project's dataset containing 81 fundus images of both normal and diseased retinas, initially used by OD detection methods in the literature.

A novel multiconcavity modeling approach is proposed in [7] to handle both healthy and unhealthy retinas simultaneously. The differentiable concavity measure is proposed to handle bright lesions in a perceptive space. The line-shape concavity measure is proposed to remove dark lesions, which have an intensity structure different from the line-shaped vessels in a retina. The locally normalized concavity measure is designed to deal with unevenly distributed noise due to the spherical intensity variation in a retinal image. These concavity measures are combined according to their statistical distributions to detect vessels in general retinal images.

In [8], owing to the ability of the curvelet transform to represent edges, the curvelet transform coefficients are modified to enhance the retinal image edges, which better prepares the image for segmentation. The directionality of the multistructure elements method makes it an effective tool in edge detection. Hence morphology operators using multistructure elements are applied to the enhanced image in order to find the ridges of the retinal image. Afterwards, morphological operators by reconstruction eliminate the ridges not belonging to the vessel tree while trying to preserve the thin vessels unchanged. In order to increase the efficiency of the morphological operators by reconstruction, they are applied using multistructure elements. A simple thresholding method along with connected components analysis (CCA) indicates the remaining ridges belonging to vessels. In order to utilize CCA more efficiently, the CCA and length filtering are applied locally instead of to the whole image. Experimental results are reported on a known database, DRIVE.

The method in [9] is based on developing a novel model to describe the temporal intensity variation of the image sequence. The model parameters at each pixel are used to construct a feature vector that is used to classify the different pixels into areas of classic Choroidal Neovascularization (CNV), occult CNV and background. Preliminary results on four datasets show the potential and effectiveness of the method to segment and identify the different types of CNV lesions.

III. PROPOSED METHOD AND DATABASE

A. Image Database

The retinal fundus images used for this study are obtained from the publicly available database Digital Retinal Images for Vessel Extraction (DRIVE). The DRIVE database contains 40 color images of the retina, with 565 x 584 pixels and 8 bits per color channel, stored in LZW-compressed TIFF format. The 40 images are divided into a training set and a testing set. For the testing set images, a second independent manual segmentation exists as well.

B. Proposed Method

Most of the existing methods for artery/vein classification use intensity features to discriminate between arteries and veins. The proposed method uses additional structural information extracted from the graph. The proposed method comprises four basic modules: graph generation, graph analysis, A/V classification and disease severity detection.


[Figure 9: block diagram of the proposed method. Stages: GRAPH GENERATION, GRAPH ANALYSIS, A/V CLASSIFICATION, DISEASE DETECTION. Blocks: image filtering, edge detection, vessel segmentation, centerline extraction and graph generation, graph modification, node type detection, link labelling, feature extraction, vessel caliber estimation, artificial neural network, A/V class emerging, disease severity detection (normal, non-proliferative retinopathy, proliferative retinopathy).]

Figure 9. Proposed method

Fig. 9 depicts the block diagram of the proposed method. There are four main sections: graph generation, graph analysis, A/V classification and disease severity detection. The method first extracts a graph from the vascular tree and then, based on this, decides the type of each intersection point (graph node). Based on these node types, one of two labels is assigned to each vessel segment. The A/V classes are then assigned to the subgraph labels by extracting a set of intensity features and using an artificial neural network. Finally, based on the artery/vein classification result, the status of the disease is detected.
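As a rough illustration of the graph-generation stage, the sketch below locates candidate graph nodes on a one-pixel-wide centerline by counting 8-connected neighbours. The neighbour-count rule (one neighbour for an end point, three or more for an intersection candidate) and the function name `classify_skeleton_pixels` are assumptions made for this example; the paper does not state which rule it uses.

```python
import numpy as np

def classify_skeleton_pixels(skel):
    """Return boolean masks (end_points, intersections) for a binary centerline image.

    Assumed rule: a centerline pixel with exactly one 8-connected neighbour is an
    end point; a pixel with three or more neighbours is an intersection candidate.
    """
    skel = np.asarray(skel, dtype=bool)
    h, w = skel.shape
    padded = np.pad(skel.astype(int), 1, mode="constant")
    neighbours = np.zeros((h, w), dtype=int)
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            if dy == 0 and dx == 0:
                continue  # do not count the pixel itself
            neighbours += padded[1 + dy:1 + dy + h, 1 + dx:1 + dx + w]
    end_points = skel & (neighbours == 1)
    intersections = skel & (neighbours >= 3)
    return end_points, intersections

# Toy "T"-shaped centerline: a horizontal bar with a vertical stem.
skel = np.zeros((5, 5), dtype=bool)
skel[0, :] = True
skel[1:, 2] = True
end_points, intersections = classify_skeleton_pixels(skel)
```

With this rule several adjacent pixels around a junction are usually flagged together, so a practical implementation would still need to merge each cluster into a single graph node.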

IV. METHODOLOGY

A. Image acquisition

The healthy tissues and pathological manifestations of the retina vary in size. The smallest vessels and their pathology (IRMA) are slightly larger than a capillary (approximately 8 µm). The largest features, such as pre-retinal haemorrhage and the optic disk, are 1600 µm or larger. The details of the anatomy and lesions that are recorded by an image are largely defined by the resolution. The capacity to resolve, or to spatially distinguish two objects, is a critical measure which is equally applicable to images recorded on analog media, such as photographic film, and to those directly digitized from transducer signals. The spatial resolution used can be roughly estimated from the width of the vessels or the average size of the optic disk, giving a resolution of the order of 10 µm per pixel.

B. Pre-processing

After acquisition, corrections for uneven illumination or image quality may be made. Adjustments to regions of interest or the need for re-sampling may also be evaluated. Collectively these operations are termed image pre-processing. Other factors and artifacts include poor focus, reflections, patient movements, bad positioning, disease opacity and inadequate illumination. These factors and artifacts cause a significant proportion of images to be of such poor quality as to interfere with analysis.

Pre-processing of the fundus data either removes or flags the aforementioned interferences. A Gaussian filter can be used for noise removal. The Gaussian filter modifies the input by convolution with the Gaussian function given by

$$G(x, y) = \frac{1}{2\pi\sigma^2} e^{-\frac{x^2 + y^2}{2\sigma^2}}$$

where x and y are the distances from the origin in the horizontal and vertical directions respectively, and σ is the standard deviation, which is the estimate of the underlying brightness probability distribution. The importance of edge detection in general is that it significantly decreases the amount of data in the image while preserving the important structural properties to be used for further image processing. In this paper Canny edge detection is used because the Canny algorithm preserves both the strong edges and the weak edges connected with the strong edges.
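The Gaussian smoothing step can be sketched as follows. This is a minimal NumPy illustration that samples the two-dimensional Gaussian on a small grid and applies it to an image. The kernel size, the value of sigma, the reflective border handling and the function names `gaussian_kernel` and `smooth` are assumptions for the example, not settings reported in the paper.

```python
import numpy as np

def gaussian_kernel(size=5, sigma=1.0):
    """Sample G(x, y) on a size x size grid (size assumed odd) and normalise to sum 1."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1]
    g = np.exp(-(x ** 2 + y ** 2) / (2.0 * sigma ** 2)) / (2.0 * np.pi * sigma ** 2)
    return g / g.sum()

def smooth(image, size=5, sigma=1.0):
    """Filter a 2-D image with the sampled Gaussian, using reflective padding.

    The kernel is symmetric, so this weighted sum equals a convolution.
    """
    image = np.asarray(image, dtype=float)
    kernel = gaussian_kernel(size, sigma)
    half = size // 2
    padded = np.pad(image, half, mode="reflect")
    out = np.zeros(image.shape, dtype=float)
    h, w = image.shape
    for dy in range(size):
        for dx in range(size):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

# Example: smoothing synthetic noise lowers its variance.
rng = np.random.default_rng(0)
noisy = rng.normal(size=(32, 32))
smoothed = smooth(noisy, size=5, sigma=1.0)
```

A larger sigma removes more noise but also blurs thin vessels, so in practice the value would have to be chosen with the vessel widths in mind.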

C. Detection and extraction of Blood Vessels

In an automated retinal image analysis system, exact detection of arteries and veins in color retinal images is a significant task. Several segmentation methods can be adopted for this purpose. Morphological image processing exploits features of the vasculature shape that are known a priori. Algorithms that extract linear shapes can be very useful for vessel segmentation. Structuring elements of a particular intensity can be added to (dilation) or subtracted from (erosion) the underlying image. Opening (erosion followed by dilation) with a structuring element of a certain shape can separate objects in an image by preserving the image structures that can contain the structuring element and removing those that cannot. Closing (dilation followed by erosion) can be used to fill in small holes within an image. Morphological operations play an important role in digital image processing, with special application in the field of machine vision and automatic object detection; they mainly include dilation, erosion, opening and closing.

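$$A \oplus A_s = \{ z \mid (A_s)_z \cap A \neq \emptyset \}$$

$$A \ominus A_s = \{ z \mid (A_s)_z \cap A^c = \emptyset \}$$

$$X \circ Y = (X \ominus Y) \oplus Y$$

$$X \bullet Y = (X \oplus Y) \ominus Y$$

Here A is the image, A_s is the structuring element, (A_s)_z is its translation by z and A^c is the complement of A. The four expressions define dilation, erosion, opening and closing respectively.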
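The four operations can be illustrated on binary images with a short NumPy sketch. It assumes an odd-sized structuring element and treats pixels outside the image as background. The helper names (`erode`, `dilate`, `opening`, `closing`) are chosen for the example and are not taken from the paper.

```python
import numpy as np

def _shifted_views(img, se):
    """Yield the image shifted by every active offset of the structuring element."""
    h, w = img.shape
    ch, cw = se.shape[0] // 2, se.shape[1] // 2
    padded = np.pad(img, ((ch, ch), (cw, cw)), mode="constant")  # pads with False
    for dy in range(se.shape[0]):
        for dx in range(se.shape[1]):
            if se[dy, dx]:
                yield padded[dy:dy + h, dx:dx + w]

def erode(img, se):
    """Keep a pixel only if the structuring element centred on it fits inside the object."""
    img, se = np.asarray(img, dtype=bool), np.asarray(se, dtype=bool)
    out = np.ones(img.shape, dtype=bool)
    for view in _shifted_views(img, se):
        out &= view
    return out

def dilate(img, se):
    """Set a pixel if the reflected structuring element centred on it touches the object."""
    img, se = np.asarray(img, dtype=bool), np.asarray(se, dtype=bool)
    out = np.zeros(img.shape, dtype=bool)
    for view in _shifted_views(img, se[::-1, ::-1]):
        out |= view
    return out

def opening(img, se):
    return dilate(erode(img, se), se)   # erosion followed by dilation

def closing(img, se):
    return erode(dilate(img, se), se)   # dilation followed by erosion

se = np.ones((3, 3), dtype=bool)

# Opening removes an isolated pixel but keeps the 3 x 3 block.
speckled = np.zeros((7, 7), dtype=bool)
speckled[2:5, 2:5] = True
speckled[0, 6] = True
opened = opening(speckled, se)

# Closing fills a one-pixel hole inside a 5 x 5 block.
holed = np.zeros((7, 7), dtype=bool)
holed[1:6, 1:6] = True
holed[3, 3] = False
closed = closing(holed, se)
```

For vessel extraction, linear structuring elements at several orientations are a common choice, since a vessel segment can contain a line aligned with it while round lesions cannot.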

The enhanced vessel segments in the Gabor filter response image require an effective thresholding scheme. Entropy-based thresholding using the gray level co-occurrence matrix (GLCM) is used; it computes the optimal threshold by taking into account the spatial distribution of gray levels embedded in the co-occurrence matrix. The GLCM contains information on the distribution of gray level frequency and edge information, which is very helpful in finding the threshold values.
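A co-occurrence matrix of this kind can be sketched in a few lines of NumPy. The version below counts transitions from each pixel to its right-hand and lower neighbours, which is one of several possible definitions, and then normalises the counts to transition probabilities. The function names are assumptions for the example, and the entropy-based selection of the threshold itself is not shown.

```python
import numpy as np

def cooccurrence_matrix(image, levels):
    """Count gray level transitions i -> j to the right-hand and lower neighbours."""
    f = np.asarray(image, dtype=int)
    t = np.zeros((levels, levels), dtype=np.int64)
    # Horizontally right transitions: f(m, n) -> f(m, n + 1)
    np.add.at(t, (f[:, :-1].ravel(), f[:, 1:].ravel()), 1)
    # Vertically lower transitions: f(m, n) -> f(m + 1, n)
    np.add.at(t, (f[:-1, :].ravel(), f[1:, :].ravel()), 1)
    return t

def transition_probabilities(t):
    """Normalise the transition counts so that all entries sum to 1."""
    total = t.sum()
    return t / total if total > 0 else t.astype(float)

# Tiny two-level example image.
image = np.array([[0, 0, 1],
                  [0, 1, 1]])
t = cooccurrence_matrix(image, levels=2)
p = transition_probabilities(t)
```

Entries near the diagonal of the matrix correspond to smooth regions, while off-diagonal entries correspond to edges, which is the information an entropy-based threshold exploits.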

The gray level co-occurrence matrix is an L × L square matrix of the gray scale image I of spatial dimension M × N with gray levels in the range [0, 1, ..., L-1]. It is denoted by T = [t_{i,j}]_{L×L}. The elements of the matrix specify the number of transitions between all pairs of gray levels in a particular way. For each image pixel at spatial co-ordinate (m, n), with its gray level specified by f(m, n), the method considers its four nearest neighbouring pixels at locations (m + 1, n), (m - 1, n), (m,


n + 1) and (m, n - 1). The co-occurrence matrix is formed by comparing the gray level f(m, n) with the corresponding gray levels f(m + 1, n), f(m - 1, n), f(m, n + 1) and f(m, n - 1). Depending on the way in which gray level i follows gray level j, many different definitions of the co-occurrence matrix are possible. The co-occurrence matrix obtained by considering horizontally right and vertically lower transitions is given by

$$t_{i,j} = \sum_{m=1}^{M} \sum_{n=1}^{N} \delta$$

where

$$\delta = \begin{cases} 1, & \text{if } f(m,n) = i \text{ and } f(m,n+1) = j, \text{ or } f(m,n) = i \text{ and } f(m+1,n) = j \\ 0, & \text{otherwise} \end{cases}$$

Normalizing by the total number of transitions in the co-occurrence matrix, the transition probability from gray level i to gray level j is obtained as follows

$$p_{i,j} = \frac{t_{i,j}}{\sum_{k=0}^{L-1} \sum_{l=0}^{L-1} t_{k,l}}$$

The centerline image is extracted from the segmented result by applying an iterative thinning algorithm. This algorithm removes border pixels until the object shrinks to a minimally connected stroke. After that, graph nodes are obtained by finding the intersection points and end points.

D. Neural Network

Artificial neural networks (ANNs) are a family of statistical learning algorithms inspired by biological neural networks. Generally, an ANN can estimate or approximate functions that depend on a large number of unknown inputs. A large number of neurons, which compute values from their inputs, are interconnected to form an artificial neural network. A neural network starts from a model neuron, which consists of multiple inputs and a single output. Each input is modified by a weight, which multiplies the input value. The neuron then combines these weighted inputs and uses them to determine its output with reference to a threshold value and an activation function. The concept of neural networks is inspired by the human central nervous system. In an artificial neural network [14], artificial nodes known as "neurons", "processing elements" or "units" are connected together to form a network that mimics a biological neural network.

A neural network performs functions collectively and in parallel by its units, rather than there being a clear delineation of subtasks to which various units are assigned, in a way similar to a biological neural network. The term "neural network" usually refers to models employed in statistics, cognitive psychology and artificial intelligence [14].

In this study the ANN consisted of three layers: an input layer and an output layer connected by a hidden layer. The hidden layer consists of a number of nodes connected to the input and output nodes by mathematical algorithms (weights). Training is achieved by presenting the network with many examples of data of known output. Once the weights in the hidden layer have been adjusted by training, the network can be shown a previously unseen input and categorize it into the appropriate output. Once the network has been trained to recognise the digitised visual fields, fields which have not previously been seen can be presented. The selected features are fed into the constructed neural network to train it to identify features.

Figure 10. ANN

Artificial neural network models can be used to infer a function from observations. This is mostly useful in applications where the complexity of the data or task makes the design of such a function by hand impractical. Neural networks have performed successfully where other methods have failed in predicting system behavior and in recognising and matching complicated, vague or incomplete data patterns. ANNs are applied to pattern recognition, diagnosis, interpretation, prediction, planning, debugging, monitoring, repair, control and instruction [14]. Because of their prediction capability, ANNs can be used for diagnosis in the medical field. The Neural Network Toolbox for MATLAB is one of the most well-known toolboxes for constructing and training neural networks. This toolbox provides GUIs for designing, training and simulating a number of different neural network types and allows custom extension of the toolbox.

V. RESULTS

Figure 11. a. Input image b. Dilation gradient image

Figure 12. a. Edge detection b. Threshold gradient image


Figure 13. a. Blood veseels detection

Figure 14. a. Exudates detected

the classification to specific regions of interestaround the optic

disc. Most of the previous methods mainly use intensity

features for discriminating between arteries and veins.This

method uses additional information extracted from a graph

which represents the vascular network. It is expected to

achieve high accuracy and better performance, especially for

the largest arteries and veins which confirms that this A/V

classification methodology is reliable for the calculation of

several characteristic signs associated with vascular

alterations. The method first extracted a graph from the

vascular tree and then makes a decision on the type of each

intersection point (graph node). Based on this node types one

of the two labels are assigned to each vessel segment. Finally,

the A/V classes are assigned to the sub graph labels by

extracting a set of intensity features and using artificial neural

network.

b. Graph generation

REFERENCES

b. Diease estimation

From

these

sensitivity

and

specificity

are

evaluated.Sensitivity gives the percentage of pixels correctly

classified as vessels by the method and specificity gives the

percentage eof non-vessels pixels classified as non-vessels by

the method as follows

=

+

=

+

+

=

+

Where Tprepresents the true positive, Tnrepresents the true

negative, Fp representsthe false positive and Fnrepresentsthe

false negative at each pixel obtained. Then the method is

compared with the various methods adjusting the DRIVE

database. Table given shows that the proposed is better in

classifications with less false positive fraction rate

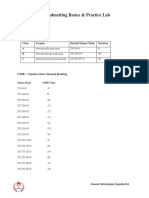

TABLE I.

Sensitivity

(%)

Specificity

(%)

Accuracy

(%)

Proposed Method

85.76

95.89

95.79

Hoover et.al [4]

75

92

91.7

Bayesian Method

75.13

95.29

95.25

77.63

97.23

----

72.82

95.51

----

66.34

96.82

93.16

77.63

97.23

94.7

Al-Diri et al [11]

Espona et al [12]

Non-expert Human

Qiangfeng Peter Lau, Mong Li Lee, Wynne Hsu, and Tien Yin

Wong, Simultaneously Identifying All True Vessels From

Segmented Retinal Images, IEEE Transactions On Biomedical

Engineering, Vol. 60, No. 7, July 2013.

[2]

Chaudhuri S., Chatterjee S., Katz N., Nelson M., and Goldbaum

M. Detection of blood vessels in retinal images using two

dimensional matched filters, IEEE transactions on Medical

Imaging, vol. 8, no. 3, pp. 263269. 1989.

[3]

Chen J., Sato Y., and Tamura S., Orientation spacefiltering for

multiple orientation line segmentation, IEEE Transactions of

Pattern Analysis and Machine Intelligence, vol.22, pp.417-429,

2000.

[4]

Hoover and M. GoldBaum. Locating the optic nerve in retinal

image using the fuzzy convergence of the blood vessels. IEEE

Trans. on Medical Imaging, 22:951958,Aug. 2003

[5]

Automatic Identification of Retinal Arteries and Veins From

Dual-Wavelength Images Using Structural and Functional

Features Harihar Narasimha-Iyer, Member, IEEE, James M.

Beach, Bahram Khoobehi, and Badrinath Roysam*, Member,

IEEE.

IEEE

TRANSACTIONS

ON

BIOMEDICAL

ENGINEERING, VOL. 54, NO. 8, AUGUST 2007 1427

[6]

Optic Disc Detection From Normalized Digital Fundus Images

by Means of a Vessels Direction Matched Filter Aliaa AbdelHaleim Abdel-Razik Youssif, Atef Zaki Ghalwash, and Amr

Ahmed

Sabry

Abdel-Rahman

Ghoneim*,

IEEE

TRANSACTIONS ON MEDICAL IMAGING, VOL. 27, NO. 1,

JANUARY 2008 11.

[7]

General Retinal Vessel Segmentation Using RegularizationBased Multiconcavity Modeling Benson S. Y. Lam*, Member,

IEEE, Yongsheng Gao, Senior Member, IEEE, and Alan WeeChung Liew, Senior Member, IEEE, IEEE TRANSACTIONS

ON MEDICAL IMAGING, VOL. 29, NO. 7, JULY 2010 1369.

[8]

Retinal Image Analysis Using Curvelet Transform and

Multistructure Elements Morphology by Reconstruction;

Mohammad Saleh Miri and Ali Mahloojifar*, IEEE

TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL.

58, NO. 5, MAY 2011 1183.

[9]

Segmentation of Choroidal Neovascularization in Fundus

Fluorescein Angiograms Walid M. Abdelmoula, Syed M. Shah,

and Ahmed S. Fahmy, IEEE TRANSACTIONS ON

399

COMPARISON OF RESULT

Method

Li etal [10]

[1]

VI. CONCLUSION

The classification of arteries and veins in retinal images is

essential for the automated assessment of vascular changes. A

new automatic methodology to classify retinal vessels into

arteries and veins is discussed which is distinct from prior

solutions. One major difference is the fact that this method is

able to classify the whole vascular tree and does not restrict

IJRITCC | January 2015, Available @ http://www.ijritcc.org

_______________________________________________________________________________________

International Journal on Recent and Innovation Trends in Computing and Communication

Volume: 3 Issue: 1

ISSN: 2321-8169

394 - 400

_______________________________________________________________________________________________

BIOMEDICAL ENGINEERING, VOL. 60, NO. 5, MAY 2013

1439.

[10] L. Wang, A. Bhalerao, and R. Wilson, "Analysis of retinal

vasculatureusing a multiresolution hermite model," IEEE

Transactions on Medical Imaging, vol. 26, pp. 137-152, 2007.

[11] B. Al-Diri, A. Hunter, and D. Steel, "An active contour model

forsegmenting and measuring retinal vessels," IEEE

Transactions on Medical Imaging, vol. 28, pp. 1488-1497, 2009

[12] L. Espona, M. J. Carreira, M. Ortega, and M. G. Penedo, A

snake for retinal vessel segmentation, Pattern Recognition and

Image Analysis, vol. 4478, Lecture Notes Comput. Sci., pp.

178185, 2007.

[13] Zhiyong Xio * Mouloud Adel, and Salah Bourennan, Bayesian

Method with Spatial Constraint for Retinal Vessel

Segmentation, Comput Math Methods Med. 2013; 2013:

401413. JUly 2013

[14] http://neurosci.wikidot.com/artificial-neural-network

400

IJRITCC | January 2015, Available @ http://www.ijritcc.org

_______________________________________________________________________________________
