Audio SDK Documentation

Version 1.0.0-Beta

2|Introduction|Audio

Copyrights and Trademarks

© 2015 Oculus VR, LLC. All Rights Reserved.

OCULUS VR, OCULUS, and RIFT are trademarks of Oculus VR, LLC. (C) Oculus VR, LLC. All rights reserved.

BLUETOOTH is a registered trademark of Bluetooth SIG, Inc. All other trademarks are the property of their

respective owners. Certain materials included in this publication are reprinted with the permission of the

copyright holder.

Contents

Introduction to Virtual Reality Audio
    Overview
    Localization and the Human Auditory System
        Directional Localization
        Distance Localization
    3D Audio Spatialization
        Directional Spatialization with Head-Related Transfer Functions (HRTFs)
        Distance Modeling
    Listening Devices
    Environmental Modeling
    Sound Design for Spatialization
    Mixing Scenes for Virtual Reality
    VR Audio Glossary

Oculus Audio SDK Guide
    SDK Contents and Features
    Requirements
    Features
        Supported Features
        Unsupported Features
    Sound Transport Time
    Pitfalls and Workarounds
    Platform Notes
    Middleware Support
    Oculus Hardware Capabilities

Oculus Native Spatializer for Unity
    Overview
    Requirements and Setup
    Exploring Oculus Native Spatializer with the Sample Scene
    Applying Spatialization

Legacy Oculus Spatializer for Unity Integration Guide
    Overview
    Reducing Android Latency: OpenSL Low-Latency Driver
    Installing into Unity
    How to Use the Oculus Spatializer in Unity
    OSPManager
    OSPAudioSource
    OSP Version Migration in Unity

Oculus Spatializer for Wwise Integration Guide
    Overview
    Installing into Wwise Tool
    Installing into the Wwise Unity Integration
    How to Use the Oculus Spatializer in Wwise
    Global Properties
    Sound Properties
    Integrating the Oculus Spatializer
    OSP Version Migration in Wwise

Oculus Spatializer for FMOD Integration Guide
    Overview
    How to Use in FMOD Studio
    Notes and Best Practices
    Integrating the Oculus Spatializer in the Application
    Installing with the FMOD Studio Unity Integration
    OSP Version Migration in FMOD

Oculus Spatializer VST Integration Guide
    Overview
    Using the Plugin
    Track Parameters
    DAW-Specific Notes
    Legal Notifications

Oculus Spatializer AAX Integration Guide
    Overview
    Using the Plugin
    Track Parameters

Release Notes
    Audio SDK 1.0 Release Notes
    Audio SDK 0.11 Release Notes
    Audio SDK 0.10 Release Notes

Introduction to Virtual Reality Audio

Welcome to audio development for virtual reality!

Overview

This document introduces fundamental concepts in audio development for virtual reality (VR) with an emphasis

on key factors that deserve development attention.

We hope to establish that audio is crucial for creating a persuasive VR experience. Because of the key role that

audio cues play in our cognitive perception of existing in space, any effort that development teams devote to

getting it right will pay off in spades, as it will contribute powerfully to the user's sense of immersion. This is as

true for small- or mid-sized teams as it is for design houses, perhaps even more so.

Audio has been a crucial part of the computer and video gaming experience since the advent of the first coin-op games, which filled arcades with bleeps, bloops, and digital explosions. Over time, the state of computer

audio has steadily improved, from simple wave generators (SID, 1983) to FM synthesis (AdLib, 1987), evolving

on to 8-bit mono samples (Amiga OCS, 1985; SoundBlaster, 1989) and 16-bit stereo samples (SoundBlaster

Pro), culminating in today's 5.1 surround sound systems on modern gaming consoles (Xbox, 2001).

Since the development of 5.1 surround, little has changed. The fundamental technology of playing waveforms

over speakers is the same, and the game playing environment is still primarily the living room or den with a

large television and speakers.

Virtual reality, however, is changing all this. Instead of a large environment with speakers, virtual reality brings

the experience in close to the player via a head-mounted display (HMD) and headphones. The ability to track

the user's head orientation and position significantly empowers audio technology.

Until now, the emphasis has typically been placed on the visual aspects of virtual reality (resolution, latency,

tracking), but audio must now catch up in order to provide the greatest sense of presence possible.

This document discusses the challenges, opportunities, and solutions related to audio in VR, and how some

of the techniques learned in traditional game development must be revisited and modified for VR. It is not

intended to be a rigorous scientific study of the nature of acoustics, hearing and human auditory perception.

Its intended audience includes anyone with an interest in audio and VR, including sound designers, artists, and

programmers.

If you are interested in learning about these details in greater depth, we recommend searching the Web for the

following terms:

Head-Related Impulse Response

Head-Related Transfer Function

Sound Localization

Localization and the Human Auditory System

Consider the following: human beings have only two ears, but are able to locate sound sources within three

dimensions. That shouldn't be possible: if you were given a stereo recording and were asked to determine if

the sound came from above or below the microphones, you would have no way to tell. If you can't do it from a

recording, how can you do it in reality?

Humans rely on psychoacoustics and inference to localize sounds in three dimensions, attending to factors such

as timing, phase, level, and spectral modifications.

This section summarizes how humans localize sound. Later, we will apply that knowledge to solving the

spatialization problem, and learn how developers can take a monophonic sound and transform its signal so that

it sounds like it comes from a specific point in space.

Directional Localization

In this section, we will look at the cues humans use to determine the direction to a sound source. The two key

components of localization are direction and distance.

Lateral

Laterally localizing a sound is the simplest type of localization, as one would expect. When a sound is closer to

the left, the left ear hears it before the right ear hears it, and it sounds louder. The closer to parity, the more

centered the sound, generally speaking.

There are, however, some interesting details. First, we may primarily localize a sound based on the delay

between the sound's arrival in both ears, or interaural time difference (ITD); or, we may primarily localize a

sound based on the difference in the sound's volume level in both ears, or the interaural level difference (ILD).

The localization technique we rely upon depends heavily on the frequency content of the signal.

Sounds below a certain frequency (anywhere from 500 to 800 Hz, depending on the source) are difficult to

distinguish based on level differences. However, sounds in this frequency range have half wavelengths greater

than the dimensions of a typical human head, allowing us to rely on timing information (or phase) between the

ears without confusion.

At the other extreme, sounds with frequencies above approximately 1500 Hz have half wavelengths smaller

than the typical head. Phase information is therefore no longer reliable for localizing the sound. At these

frequencies, we rely on level differences caused by head shadowing, or the sound attenuation that results from

our heads obstructing the far ear (see figure below).

We also key on the difference in time of the signal's onset. When a sound is played, which ear hears it first

is a big part of determining its location. However, this only helps us localize short sounds with transients as

opposed to continuous sounds.

There is a transitional zone between ~800 Hz and ~1500 Hz in which both level differences and time

differences are used for localization.
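
As a rough numerical check of the frequency ranges above, the following sketch computes the half wavelength at a few frequencies and an approximate ITD at a few azimuths. It assumes a speed of sound of 343 m/s, a head radius of 0.0875 m, and Woodworth's spherical-head approximation for ITD; none of these values come from this document, and the function names are illustrative only.

```python
import math

SPEED_OF_SOUND = 343.0   # m/s, assumed (dry air near 20 C)
HEAD_RADIUS = 0.0875     # m, assumed average head radius

def half_wavelength(frequency_hz):
    """Half the wavelength, in meters, of a tone at the given frequency."""
    return SPEED_OF_SOUND / frequency_hz / 2.0

def woodworth_itd(azimuth_deg):
    """Approximate ITD in seconds for a distant source.

    Uses Woodworth's spherical-head formula, intended for azimuths
    between 0 (straight ahead) and 90 degrees (directly to one side).
    """
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS / SPEED_OF_SOUND * (theta + math.sin(theta))

if __name__ == "__main__":
    for f in (500, 800, 1500, 4000):
        print(f"{f:5d} Hz: half wavelength = {half_wavelength(f) * 100:.1f} cm")
    for az in (0, 30, 60, 90):
        print(f"azimuth {az:2d} deg: ITD = {woodworth_itd(az) * 1e6:.0f} us")
```

With these assumed values, the half wavelength at 800 Hz (about 21 cm) exceeds a 17.5 cm head diameter, while at 1500 Hz (about 11 cm) it does not, and the largest ITD is roughly 0.66 ms.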

Front/Back/Elevation

Front versus back localization is significantly more difficult than lateral localization. We cannot rely on time

differences, since interaural time and/or level differences may be zero for a sound in front of or behind the

listener.

In the following figure we can see how sounds at locations A and B would be indistinguishable from each other

since they are the same distance from both ears, giving identical level and time differences.

Humans rely on spectral modifications of sounds caused by the head and body to resolve this ambiguity.

These spectral modifications are filters and reflections of sound caused by the shape and size of the head,

neck, shoulders, torso, and especially, by the outer ears (or pinnae). Because sounds originating from different

directions interact with the geometry of our bodies differently, our brains use spectral modification to infer

the direction of origin. For example, sounds approaching from the front produce resonances created by the

interior of our pinnae, while sounds from the back are shadowed by our pinnae. Similarly, sounds from above

may reflect off our shoulders, while sounds from below are shadowed by our torso and shoulders.

All of these reflections and shadowing effects combine to create a direction-selective filter.

Head-Related Transfer Functions (HRTFs)

A direction-selective filter can be encoded as a head-related transfer function (HRTF). The HRTF is the

cornerstone for most modern 3D sound spatialization techniques. How we measure and create an HRTF is

described in more detail elsewhere in this document.

Head Motion

HRTFs by themselves may not be enough to localize a sound precisely, so we often rely on head motion to

assist with localization. Simply turning our heads changes difficult front/back ambiguity problems into lateral

localization problems that we are better equipped to solve.

In the following figure sounds at A and B are indistinguishable from each other based on level or time

differences, since they are identical. By turning her head slightly, the listener alters the time and level

differences between ears, helping to disambiguate the location of the sound. D1 is closer than D2, which is a

cue that the sound is to the left of (and thus behind) the listener.

Likewise, cocking our heads can help disambiguate objects vertically. In the following figure, the listener cocks

her head, which results in D1 shortening and D2 lengthening. This provides a cue that the object is above her

head instead of below it.

Distance Localization

ILD, ITD and HRTFs help us determine the direction to a sound source, but they give relatively sparse cues for

determining the distance to a sound. To determine distance we use a combination of factors, including loudness,

initial time delay, ratio of direct sound to reverberant sound, motion parallax, and high frequency attenuation.

Loudness

Loudness is the most obvious distance cue, but it can be misleading. If we lack a frame of reference, we can't

judge how much the sound has diminished in volume from its source, and thus estimate a distance. Fortunately,

we are familiar with many of the sound sources that we encounter daily, such as musical instruments, human

voice, animals, vehicles, and so on, so we can predict these distances reasonably well.

For synthetic or unfamiliar sound sources, we have no such frame of reference, and we must rely on other cues

or relative volume changes to predict if a sound is approaching or receding.

Initial Time Delay

Initial time delay describes the interval between the direct sound and its first reflection. The longer this gap, the

closer we assume that we are to the sound source.

Anechoic (echoless) or open environments such as deserts may not generate appreciable reflections, which

makes estimating distances more difficult.
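
To make the relationship between distance and initial time delay concrete, here is a minimal sketch. It assumes a single reflection off a flat floor, a source and listener at the same height, and a speed of sound of 343 m/s; the function name and the 1.7 m height are hypothetical choices for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed

def initial_time_delay(distance_m, height_m):
    """Gap in seconds between the direct sound and a single floor reflection.

    Source and listener are both height_m above the floor and distance_m
    apart horizontally. The reflected path length is found by mirroring
    the source below the floor (image-source construction).
    """
    direct_path = distance_m
    reflected_path = math.hypot(distance_m, 2.0 * height_m)
    return (reflected_path - direct_path) / SPEED_OF_SOUND

if __name__ == "__main__":
    for d in (1.0, 2.0, 5.0, 10.0, 20.0):
        gap_ms = initial_time_delay(d, height_m=1.7) * 1000.0
        print(f"source at {d:4.1f} m: first reflection arrives {gap_ms:.2f} ms later")
```

Under these assumptions the gap shrinks from roughly 7.4 ms at 1 m to under 1 ms at 20 m, which matches the idea that a longer gap suggests a nearer source.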

Ratio of Direct Sound to Reverberation

In a reverberant environment there is a long, diffuse sound tail consisting of all the late echoes interacting with

each other, bouncing off surfaces, and slowly fading away. The more we hear of a direct sound in comparison

to the late reverberations, the closer we assume it is.

This property has been used by audio engineers for decades to move a musical instrument or vocalist to the

front or to the back of a song by adjusting the wet/dry mix of an artificial reverb.

Motion Parallax

Motion parallax (the apparent movement of a sound source through space) indicates distance, since nearby

sounds typically exhibit a greater degree of parallax than far-away sounds. For example, a nearby insect can

traverse from the left to the right side of your head very quickly, but a distant airplane may take many seconds

to do the same. As a consequence, if a sound source travels quickly relative to a stationary perspective, we

tend to perceive that sound as coming from nearby.
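
The insect and airplane comparison can be put into numbers with a small sketch. It assumes a source moving at constant speed along a straight line past a stationary listener; the distances and speeds in the example are made up for illustration.

```python
import math

def sweep_time(distance_m, speed_m_s, half_angle_deg=45.0):
    """Seconds for a source on a straight path to sweep across the listener.

    The source passes the listener at a closest distance of distance_m and
    moves at constant speed. The sweep runs from -half_angle_deg to
    +half_angle_deg of azimuth around the point of closest approach, so
    half_angle_deg must be less than 90.
    """
    path_length = 2.0 * distance_m * math.tan(math.radians(half_angle_deg))
    return path_length / speed_m_s

if __name__ == "__main__":
    # Hypothetical figures: an insect 0.5 m away flying at 5 m/s,
    # and an airplane 3000 m away flying at 250 m/s.
    print(f"insect:   {sweep_time(0.5, 5.0):.1f} s")
    print(f"airplane: {sweep_time(3000.0, 250.0):.1f} s")
```

With these figures the insect crosses a 90 degree arc in about 0.2 s while the airplane needs about 24 s, even though the airplane is moving fifty times faster.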

High Frequency Attenuation

High frequencies attenuate faster than low frequencies, so over long distances we can infer a bit about distance

based on how attenuated those high frequencies are. This is often a little overstated in the literature, because

sounds must travel hundreds or thousands of feet before high frequencies are noticeably attenuated (i.e., well

above 10 kHz). This is also affected by atmospheric conditions, such as temperature and humidity.

3D Audio Spatialization

The previous section discussed how humans localize the sources of sounds in three dimensions. We now invert

that and ask: can we apply that information to fool people into thinking that a sound is coming from a specific

point in space?

The answer, thankfully, is yes, otherwise this would be a pretty short document. A big part of VR audio is

spatialization: the ability to play a sound as if it is positioned at a specific point in three-dimensional space.

Spatialization is a key aspect of presence because it provides powerful cues suggesting the user is in an actual

3D environment, which contributes strongly to a sense of immersion.

As with localization, there are two key components to spatialization: direction and distance.

Directional Spatialization with Head-Related Transfer Functions (HRTFs)

We know that sounds are transformed by our body and ear geometry differently depending on the incoming

direction. These different effects form the basis of HRTFs, which we use to localize a sound.

Capturing HRTFs

The most accurate method of HRTF capture is to take an individual, put a couple of microphones in their ears

(right outside the ear canal), place them in an anechoic chamber (i.e., an echoless environment), play sounds in

the chamber from every direction we care about, and record those sounds from the mics. We can then compare

the original sound with the captured sound and compute the HRTF that takes you from one to the other.

We have to do this for both ears, and we have to capture sounds from a sufficient number of discrete directions

to build a usable sample set.

But wait: we have only captured HRTFs for a specific person. If our brains are conditioned to interpret the

HRTFs of our own bodies, why would that work? Don't we have to go to a lab and capture a personalized HRTF

set?

In a perfect world, yes, we'd all have custom HRTFs measured that match our own body and ear geometry

precisely, but in reality this isn't practical. While our HRTFs are personal, they are similar enough to each other

that a generic reference set is adequate for most situations, especially when combined with head tracking.

Most HRTF-based spatialization implementations use one of a few publicly available data sets, captured either

from a range of human test subjects or from a synthetic head model such as the KEMAR.

IRCAM Listen Database

MIT KEMAR

CIPIC HRTF Database

ARI (Acoustics Research Institute) HRTF Database

Most HRTF databases do not have HRTFs in all directions. For example, there is often a large gap representing

the area beneath the subject's head, as it is difficult, if not impossible, to place a speaker one meter directly

below an individual's head. Some HRTF databases are sparsely sampled, including HRTFs only every 5 or 15

degrees.

Most implementations either snap to the nearest acquired HRTF (which exhibits audible discontinuities) or use

some method of HRTF interpolation. This is an ongoing area of research, but for VR applications on desktops, it

is often adequate to find and use a sufficiently dense data set.
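
The "snap to the nearest acquired HRTF" strategy mentioned above can be sketched as a nearest-neighbor search over measured directions. This is only an illustration: the grid below is hypothetical rather than taken from any of the listed databases, the function names are invented, and interpolation is not shown.

```python
import math

def direction_vector(azimuth_deg, elevation_deg):
    """Unit vector for a direction given as azimuth and elevation in degrees."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))

def angular_distance_deg(a, b):
    """Great-circle angle in degrees between two (azimuth, elevation) pairs."""
    va, vb = direction_vector(*a), direction_vector(*b)
    dot = sum(x * y for x, y in zip(va, vb))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))

def nearest_measured_direction(target, measured_directions):
    """Return the measured direction closest in angle to the target."""
    return min(measured_directions, key=lambda d: angular_distance_deg(target, d))

if __name__ == "__main__":
    # Hypothetical sparse grid: every 15 degrees of azimuth, three elevations.
    grid = [(az, el) for el in (-30, 0, 30) for az in range(0, 360, 15)]
    print(nearest_measured_direction((50.0, 5.0), grid))
```

Comparing directions as unit vectors, rather than subtracting azimuths directly, avoids special handling for the wrap-around at 360 degrees.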

Applying HRTFs

Given an HRTF set, if we know the direction we want a sound to appear to come from, we can select

an appropriate HRTF and apply it to the sound. This is usually done either in the form of a time-domain

convolution or an FFT/IFFT pair.

If you don't know what these are, don't worry - those details are only relevant if you are implementing the HRTF

system yourself. Our discussion glosses over a lot of the implementation details (e.g., how we store an HRTF,

how we use it when processing a sound). For our purposes, what matters is the high-level concept: we are

simply filtering an audio signal to make it sound like it's coming from a specific direction.
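
For readers who do want a glimpse of the filtering step, here is a minimal sketch of the time-domain convolution approach. It works with impulse responses, the time-domain form of an HRTF. The two impulse responses are toy values invented for this example, not measured data, and a real implementation would typically use much longer responses and an FFT-based method for speed.

```python
def convolve(signal, impulse_response):
    """Direct time-domain convolution of two sequences of samples."""
    output = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            output[i + j] += s * h
    return output

def spatialize(mono, hrir_left, hrir_right):
    """Filter a mono signal with a left/right impulse response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)

if __name__ == "__main__":
    # Toy impulse responses, NOT measured data: a source on the listener's
    # left reaches the left ear first at full level, and the right ear
    # three samples later at half level.
    hrir_left = [1.0, 0.0, 0.0, 0.0]
    hrir_right = [0.0, 0.0, 0.0, 0.5]
    mono = [1.0, -1.0, 0.5]
    left, right = spatialize(mono, hrir_left, hrir_right)
    print("left: ", left)
    print("right:", right)
```

Even this toy pair reproduces the two lateral cues discussed earlier: the right channel is delayed (a time difference) and quieter (a level difference) relative to the left.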

Since HRTFs take the listener's head geometry into account, it is important to use headphones when

performing spatialization. Without headphones, you are effectively applying two HRTFs: the simulated one, and

the actual HRTF caused by the geometry of your body.

Head Tracking

Listeners instinctively use head motion to disambiguate and fix sound in space. If we take this ability away, our

capacity to locate sounds in space is diminished, particularly with respect to elevation and front/back. Even

ignoring localization, if we are unable to compensate for head motion, then sound reproduction is tenuous at

best. When a listener turns their head 45 degrees to the side, we must be able to reflect that in their auditory

environment, or the soundscape will ring false.

VR headsets such as the Rift provide the ability to track a listener's head orientation (and, sometimes, position).

By providing this information to a sound package, we can project a sound in the listener's space, regardless of

their head position.

This assumes that the listener is wearing headphones. It is possible to mimic this with a speaker array, but it is

significantly less reliable, more cumbersome, and more difficult to implement, and thus impractical for most VR

applications.
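
As an illustration of compensating for head motion, the sketch below converts a source's world position into an azimuth relative to the direction the listener is facing. It is a simplified top-down 2D model that handles yaw only; the coordinate convention and function name are assumptions made for this example and are not part of any SDK.

```python
import math

def relative_azimuth_deg(listener_xy, listener_yaw_deg, source_xy):
    """Azimuth of a source relative to where the listener is facing.

    Top-down 2D view. Angles are counterclockwise from the +x axis, so a
    positive result means the source is to the listener's left and a
    negative result means it is to the right.
    """
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]
    world_bearing = math.degrees(math.atan2(dy, dx))
    relative = world_bearing - listener_yaw_deg
    # Wrap into the range [-180, 180).
    return (relative + 180.0) % 360.0 - 180.0

if __name__ == "__main__":
    source = (1.0, 0.0)
    for yaw in (0.0, 45.0, 90.0):
        az = relative_azimuth_deg((0.0, 0.0), yaw, source)
        print(f"head yaw {yaw:5.1f} deg -> source azimuth {az:6.1f} deg")
```

In this convention, when the listener turns 45 degrees to the left, a source that was straight ahead ends up 45 degrees to the right, and that relative direction is the one used to choose the HRTF.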

Distance Modeling

HRTFs help us identify a sound's direction, but they do not model our localization of distance. Humans use

several factors to infer the distance to a sound source. These can be simulated with varying degrees of accuracy

and cost in software:

- Loudness, our most reliable cue, is trivial to model with simple attenuation based on distance between the source and the listener.

- Initial Time Delay is significantly harder to model, as it requires computing the early reflections for a given set of geometry, along with that geometry's characteristics. This is both computationally expensive and awkward to implement architecturally (specifically, sending world geometry to a lower level API is often complex). Even so, several packages have made attempts at this, ranging from simple shoebox models to elaborate full scene geometric modeling.

- Direct vs. Reverberant Sound (or, in audio production, the wet/dry mix) is a natural byproduct of any system that attempts to accurately model reflections and late reverberations. Unfortunately, such systems tend to be very expensive computationally. With ad hoc models based on artificial reverberators, the mix setting can be adjusted in software, but these are strictly empirical models.

- Motion Parallax we get for free, because it is a byproduct of the velocity of a sound source.

- High Frequency Attenuation due to air absorption is a minor effect, but it is also reasonably easy to model by applying a simple low-pass filter, and by adjusting cutoff frequency and slope. In practice, HF attenuation is not very significant in comparison to the other distance cues.
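
The two cheapest cues in the list above, loudness and high frequency attenuation, can be sketched as follows. This is a minimal illustration under stated assumptions: an inverse-distance gain law with a 1 m reference distance, a one-pole low-pass filter, and a made-up mapping from distance to cutoff frequency. None of these choices are specified by this document.

```python
import math

def distance_gain(distance_m, reference_distance_m=1.0):
    """Inverse-distance gain: full volume inside the reference distance,
    then halved (about -6 dB) for every doubling of distance."""
    return reference_distance_m / max(distance_m, reference_distance_m)

def one_pole_lowpass(samples, cutoff_hz, sample_rate_hz):
    """Simple one-pole low-pass filter, y[n] = y[n-1] + a * (x[n] - y[n-1])."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate_hz)
    output, previous = [], 0.0
    for x in samples:
        previous += a * (x - previous)
        output.append(previous)
    return output

def apply_distance_cues(samples, distance_m, sample_rate_hz=48000):
    """Attenuate and gently dull a signal as its source moves away."""
    # Hypothetical mapping from distance to cutoff, chosen only for illustration.
    cutoff_hz = max(2000.0, 20000.0 - 15.0 * distance_m)
    gain = distance_gain(distance_m)
    filtered = one_pole_lowpass(samples, cutoff_hz, sample_rate_hz)
    return [gain * y for y in filtered]

if __name__ == "__main__":
    for d in (1.0, 2.0, 4.0, 8.0):
        level_db = 20.0 * math.log10(distance_gain(d))
        print(f"{d:4.1f} m: gain = {distance_gain(d):.3f} ({level_db:.1f} dB)")
```

Clamping the gain inside the reference distance keeps a source from becoming arbitrarily loud as it approaches the listener's position.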

Listening Devices

Traditionally, high quality audio reproduction has been the domain of multi-speaker systems, often

accompanied by one or more subwoofers. However, with the rise of online gaming and voice chat, many

players have transitioned to headsets (headphones with integrated microphones).

For modern VR, especially with head tracking and user movement, speaker arrays are an evolutionary dead

end. Headphone audio will be the standard for VR into the future, as it provides better isolation, privacy,

portability, and spatialization.

Headphones

Headphones offer several significant advantages over free-field speaker systems for virtual reality audio:

- Acoustic isolation from the listener's environment enhances realism and immersion.

- Head tracking is greatly simplified.

- HRTFs are more accurate since they don't suffer from the doubling down of HRTF effects (sounds modified from the simulated HRTF, and again by the listener's actual body geometry).

- Access to controls while wearing an HMD is far simpler when those controls are physically attached to the listener.

- Microphones are ideally placed and subject to much less echo/feedback.

Headphones are available in a variety of types with various trade-offs:

Closed Back Headphones

As a general rule of thumb, closed back headphones offer the most isolation and bass response. However,

the closed construction may lead to discomfort (due to heat and weight), and they tend to offer less accurate

reproduction due to internal resonance. Also, if placed on or over the ear, they cause the pinnae to impact

sound reproduction slightly.

While acoustic isolation can help with immersion, it cuts listeners off from their environment so they may be

unable to hear others entering the room, a cell phone ringing, the doorbell, and so on. Whether that is a good thing

or not is up to the individual.

Open Back Headphones

Open back headphones are generally more accurate and comfortable than closed-back headphones, but they

do not isolate listeners from the exterior environment, and broadcast to the surrounding environment as well.

These are suitable for quiet areas devoted to a VR experience, possibly in conjunction with a subwoofer.

As with closed back headphones, when placed on or over the ear, open back headphones allow the pinnae to

impact sound reproduction slightly.

Earbuds

Earbuds (such as those that ship with cell phones or portable music players) are cheap, lightweight, and

very portable, though they typically lack bass. Some models, such as Apple EarPods, have surprisingly good

frequency response, albeit with a steady roll-off of bass frequencies. Bass frequencies are mostly ignored for spatialization.

Most earbuds are poor at isolation.

In-Ear Monitors

In-ear monitors offer superior isolation from your environment, are very lightweight, and have excellent

frequency response over the entire range. They remove the effects of the listener's pinnae from sound (unlike

on-ear headphones). They have the downside of requiring insertion into the ear canal, which eliminates the

effects of the ear canal from sound reproduction entirely (since most HRTFs are captured with microphones

right outside the ear canal).

Impulse Responses

Headphones, like all transducers, impart their own characteristics on signals, and since HRTFs are frequency

sensitive, removing the headphone character from the signal will usually be beneficial. This can be

accomplished by deconvolving the output signal with the headphone's impulse response.
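
As a rough illustration of the deconvolution step, the sketch below pre-compensates a signal so that a known impulse response brings it back to the intended waveform. The function names and the three-tap response are hypothetical, and the time-domain recursion assumes an idealised, noise-free response whose first tap is nonzero; a practical system would more likely use a regularized frequency-domain inverse.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution: models the headphone colouring a signal."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def deconvolve(target, impulse_response, length):
    """Find `length` input samples that convolve with the response to `target`.

    Assumes impulse_response[0] != 0 (hypothetical, idealised case).
    """
    h = impulse_response
    result = []
    for n in range(length):
        acc = target[n]
        # Subtract the contribution of earlier samples through later taps.
        for k in range(1, min(n, len(h) - 1) + 1):
            acc -= h[k] * result[n - k]
        result.append(acc / h[0])
    return result


headphone_ir = [1.0, 0.5, 0.25]        # made-up headphone impulse response
dry = [0.0, 1.0, -0.5, 0.25, 0.0]      # signal the ear should receive
compensated = deconvolve(dry, headphone_ir, len(dry))
heard = convolve(compensated, headphone_ir)[:len(dry)]  # matches `dry`
```

Playing `compensated` through the modelled headphone reproduces `dry` over the compensated span, which is the effect the paragraph above describes.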

External Speaker Systems

Until recently, the most common way to provide sound immersion was to surround the listener with speakers,

such as a Dolby 5.1 or 7.1 speaker configuration. While partially effective for a fixed and narrow sitting position,

speaker array systems suffer from key drawbacks:

Imprecise imaging due to panning over large portions of the listening area.

No elevation cues; sounds only appear in a 360-degree circle around the listener.

Assumption of immobile listener; in particular, no head tracking.

Room effects such as reverberation and reflections impact the reproduced sound.

Poor isolation means that outside sounds can intrude on the VR experience.

It is doubtful that multi-speaker configurations will be common or effective for home VR applications, though

they may be viable for dedicated commercial installations.

Bluetooth

Bluetooth has become a popular method of wireless audio transmission. Unfortunately, modern

Bluetooth implementations often incur significant latency, sometimes as high as 500 milliseconds. As a result,

Bluetooth technology is not recommended for audio output.


Environmental Modeling

HRTFs in conjunction with attenuation provide an anechoic model of three dimensional sound, which exhibits

strong directional cues but tends to sound dry and artificial due to lacking room ambiance. To compensate for

this, we can add environmental modeling to mimic the acoustic effects of nearby geometry.

Reverberation and Reflections

As sounds travel through space, they reflect off of surfaces, creating a series of echoes. The initial distinct

echoes (early reflections) help us determine the direction and distance to a sound. As these echoes propagate,

diminish, and interact they create a late reverberation tail, which contributes to our sense of space.

We can model reverberation and reflection using several different methods.

Shoebox Model

Some 3D positional implementations layer simple shoebox room modeling on top of their HRTF

implementation. These consist of specifying the distance and reflectivity of six parallel walls (i.e., the

shoebox) and sometimes the listener's position and orientation within that room as well. With that basic

model, you can simulate early reflections from walls and late reverberation characteristics.

While far from perfect, it's much better than artificial or no reverberation.
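
One way to picture the early-reflection part of a shoebox model is the first-order image-source construction sketched below. This is not the SDK's implementation; the function name, the room layout (walls at 0 and at the room size on each axis), the inverse-distance gain, and the 343 m/s speed of sound are all assumptions made for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value for air at room temperature


def first_order_reflections(source, listener, room_size, reflectivity):
    """Return (delay_seconds, gain) for the six first-order wall reflections.

    The room spans [0, size] on each axis; `reflectivity` holds one
    coefficient per wall, ordered (x-min, x-max, y-min, y-max, z-min, z-max).
    """
    reflections = []
    for axis in range(3):
        for side, wall in enumerate((0.0, room_size[axis])):
            image = list(source)
            image[axis] = 2.0 * wall - source[axis]  # mirror source in the wall
            distance = math.dist(image, listener)
            delay = distance / SPEED_OF_SOUND
            gain = reflectivity[2 * axis + side] / max(distance, 1e-6)
            reflections.append((delay, gain))
    return reflections


taps = first_order_reflections(
    source=(1.0, 2.0, 1.5),
    listener=(3.0, 2.0, 1.5),
    room_size=(5.0, 4.0, 3.0),
    reflectivity=(0.8, 0.8, 0.8, 0.8, 0.5, 0.9),
)
```

Each tap could then be rendered as a delayed, attenuated copy of the source; late reverberation would need a separate model.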

Artificial Reverberations


Since modeling physical walls and late reverberations can quickly become computationally expensive,

reverberation is often introduced via artificial, ad hoc methods such as those used in digital reverb units of

the 80s and 90s. While less computationally intensive than physical models, they may also sound unrealistic,

depending on the algorithm and implementation, especially since they are unable to take the listener's

orientation into account.

Sampled Impulse Response Reverberation

Convolution reverbs sample the impulse response from a specific real-world location such as a recording

studio, stadium, or lecture hall. It can then be applied to a signal later, resulting in a signal that sounds as if it

were played back in that location. This can produce some phenomenally lifelike sounds, but there are some

drawbacks. Sampled impulse responses rarely match in-game synthetic environments; they represent a fixed

listener position and orientation; they are monophonic; and it is difficult to transition between them across different areas.

Even with these limitations, they still provide high-quality results in many situations.

World Geometry and Acoustics

The shoebox model attempts to provide a simplified representation of an environment's geometry. It

assumes no occlusion, equal frequency absorption on all surfaces, and six parallel walls at a fixed distance

from the listener's head. Needless to say, this is a heavy simplification for the sake of performance, and as VR

environments become more complex and dynamic, it may not scale properly.

Some solutions exist today to simulate diffraction and complex environmental geometry, but support is not

widespread and performance implications are still significant.

Environmental Transitions

Modeling a specific area is complex, but still relatively straightforward. Irrespective of choice of model,

however, there is a problem of audible discontinuities or artifacts when transitioning between areas. Some

systems require flushing and restarting the entire reverberator, and other systems introduce artifacts as

parameters are changed in real-time.

Presence and Immersion

By creating audio that is on par with high quality VR visuals, developers immerse the user in a true virtual world,

giving them a sense of presence.

Audio immersion is maximized when the listener is located inside the scene, as opposed to viewing it from

afar. For example, a 3D chess game in which the player looks down at a virtual board offers less compelling

spatialization opportunities than a game in which the player stands on the play field. By the same token, an

audioscape in which moving elements whiz past the listener's head with auditory verisimilitude is far more

compelling than one in which audio cues cut the listener off from the action by communicating that they're

outside of the field of activity.


Note: While the pursuit of realism is laudable, it is also optional, as we want

developers and sound designers to maintain creative control over the output.

Sound Design for Spatialization

Now that we've established how humans place sounds in the world and, more importantly, how we can fool

people into thinking that a sound is coming from a particular point in space, we need to examine how we must

change our approach to sound design to support spatialization.

Mono

Most spatialization techniques model sound sources as infinitely small point sources; that is, sound is treated

as if it were coming from a single point in space as opposed to a large area, or a pair of discrete speakers. As a

result, sounds should be authored as monophonic (single channel) sources.

Avoid Sine Waves

Pure tones such as sine waves lack harmonics or overtones, which presents several issues:

Pure tones do not commonly occur in the real world, so they often sound unnatural. This does not mean you

should avoid them entirely, since many VR experiences are abstract, but it is worth keeping in mind.


HRTFs work by filtering frequency content, and since pure tones lack that content, they are difficult to

spatialize with HRTFs.

Any glitches or discontinuities in the HRTF process will be more audible since there is no additional

frequency content to mask the artifacts. A moving sine wave will often bring out the worst in a spatialization

implementation.

Use Wide Spectrum Sources

For the same reasons that pure tones are poor for spatialization, broad spectrum sounds work well by providing

lots of frequencies for the HRTF to work with. They also help mask audible glitches that result from dynamic

changes to HRTFs, pan, and attenuation. In addition to a broad spectrum of frequencies, ensure that there is

significant frequency content above 1500 Hz, since this is used heavily by humans for sound localization.

Low frequency sounds are difficult for humans to locate - this is why home theater systems use a monophonic

subwoofer channel. If a sound is predominantly low frequency (rumbles, drones, shakes, et cetera), then you

can avoid the overhead of spatialization and use pan/attenuation instead.
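
The pan/attenuation fallback mentioned above can be sketched as a constant-power pan combined with a simple distance rolloff. The function name, the azimuth convention, and the inverse-distance curve are illustrative assumptions, not part of any Oculus API.

```python
import math


def pan_and_attenuate(sample, azimuth_deg, distance, min_distance=1.0):
    """Return (left, right) for a mono sample without any HRTF processing.

    azimuth_deg: -90 (hard left) to +90 (hard right); other values are clamped.
    distance: metres from the listener; inverse-distance rolloff beyond
    min_distance.
    """
    azimuth = max(-90.0, min(90.0, azimuth_deg))
    angle = (azimuth + 90.0) / 180.0 * (math.pi / 2.0)  # 0 .. pi/2
    gain = min_distance / max(distance, min_distance)
    return sample * gain * math.cos(angle), sample * gain * math.sin(angle)


left, right = pan_and_attenuate(1.0, azimuth_deg=0.0, distance=2.0)
```

The cosine/sine pair keeps the summed power constant as the sound moves across the stereo field, which avoids a perceived dip in loudness at the centre.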

Avoid Real-time Format Conversions

Converting from one audio format to another can be costly and introduce latency, so sounds should be

delivered in the same output format (sampling rate and bit depth) as the target device. For most PCs, this will

be 16-bit, 44.1 kHz PCM, but some platforms may have different output formats (e.g. 16-bit, 48 kHz on Gear

VR).

Spatialized sounds are monophonic and should thus be authored as a single channel to avoid stereo-to-mono

merging at run-time (which can introduce phase and volume artifacts).
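
To see why a run-time stereo-to-mono merge can cause phase and volume artifacts, consider the toy example below. The helper names and the test tone are hypothetical; the point is only that averaging two channels preserves level when they agree and cancels when they are out of phase.

```python
import math


def downmix_to_mono(left, right):
    """Average two channels into one, as a run-time stereo-to-mono merge would."""
    return [(l + r) * 0.5 for l, r in zip(left, right)]


def peak(samples):
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)


n = 64
tone = [math.sin(2.0 * math.pi * 4.0 * i / n) for i in range(n)]
inverted = [-s for s in tone]

in_phase = downmix_to_mono(tone, tone)          # channels agree: level preserved
out_of_phase = downmix_to_mono(tone, inverted)  # channels oppose: full cancellation
```

Real stereo assets fall somewhere between these two extremes, so the merged level varies with frequency and content; authoring in mono sidesteps the issue.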

If your title ships with non-native format audio assets, consider converting to native format at installation or load

time to avoid a hit at run-time.

Mixing Scenes for Virtual Reality

As with sound design, mixing a scene for VR is an art as well as a science, and the following recommendations

may include caveats.

Creative Control

Realism is not necessarily the end goal! Keep this in mind at all times. As with lighting in computer

environments, what is consistent and/or correct may not be aesthetically desirable. Audio teams must be

careful not to back themselves into a corner by enforcing rigid notions of correctness on a VR experience.

This is especially true when considering issues such as dynamic range, attenuation curves, and direct time of

arrival.

Accurate 3D Positioning of Sources

Sounds must now be placed carefully in the 3D sound field. In the past, a general approximation of location

was often sufficient since positioning was accomplished strictly through panning and attenuation. The default

location for an object might be its hips or where its feet meet the ground plane, and if a sound is played from

those locations it will be jarring with spatialization, e.g. footsteps heard at hip height or voices heard at foot level.


Directional Sources

The Oculus Audio SDK does not support directional sound sources (speakers, human voice, car horns, et

cetera). However, higher level SDKs often model these using angle-based attenuation that controls the

tightness of the direction. This directional attenuation should occur before the spatialization effect.
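
A minimal sketch of angle-based directional attenuation, applied to a sound before it is handed to the spatializer, might look like the following. The cone parameters and the function name are assumptions for illustration and do not correspond to a specific engine's API.

```python
import math


def cone_gain(source_forward, to_listener, inner_deg=60.0, outer_deg=180.0,
              outer_gain=0.25):
    """Gain for a directional source, applied before spatialization.

    Full level inside the inner cone, `outer_gain` outside the outer cone,
    linear interpolation in between. Cone angles are full widths in degrees.
    """
    dot = sum(a * b for a, b in zip(source_forward, to_listener))
    norm = math.hypot(*source_forward) * math.hypot(*to_listener)
    angle = math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))
    inner, outer = inner_deg / 2.0, outer_deg / 2.0
    if angle <= inner:
        return 1.0
    if angle >= outer:
        return outer_gain
    t = (angle - inner) / (outer - inner)
    return 1.0 + t * (outer_gain - 1.0)


facing = cone_gain((0.0, 0.0, 1.0), (0.0, 0.0, 1.0))    # listener in front
behind = cone_gain((0.0, 0.0, 1.0), (0.0, 0.0, -1.0))   # listener behind
```

Narrowing the inner and outer angles tightens the beam, which is the "tightness" control described above.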

Area Sources

The Oculus Audio SDK does not support area sound sources such as waterfalls, rivers, crowds, and so on.

Doppler Effect

The Doppler effect is the apparent change of a sound's pitch as its source approaches or recedes. VR

experiences can emulate this by altering the playback rate based on the relative speed of a sound source and the

listener; however, it is very easy to introduce artifacts inadvertently in the process.

The Oculus Audio SDK does not have native support for the Doppler effect, though some high-level SDKs do.
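
For applications that emulate the effect themselves, the classic Doppler ratio can be used to derive a playback-rate multiplier. The sketch below is one possible formulation; the function name, the clamping at 90% of the speed of sound, and the 343 m/s constant are assumptions.

```python
SPEED_OF_SOUND = 343.0  # m/s, approximate value for air at room temperature


def doppler_playback_rate(source_velocity, listener_velocity, source_to_listener):
    """Playback-rate multiplier for a moving source and listener.

    Velocities (m/s) and the source-to-listener vector (m) are 3D tuples.
    Speeds are clamped below the speed of sound to keep the ratio finite.
    """
    length = sum(c * c for c in source_to_listener) ** 0.5
    if length == 0.0:
        return 1.0
    direction = [c / length for c in source_to_listener]
    # Components of each velocity along the line from source to listener.
    v_source = sum(v * d for v, d in zip(source_velocity, direction))
    v_listener = sum(v * d for v, d in zip(listener_velocity, direction))
    limit = 0.9 * SPEED_OF_SOUND
    v_source = max(-limit, min(limit, v_source))
    v_listener = max(-limit, min(limit, v_listener))
    return (SPEED_OF_SOUND - v_listener) / (SPEED_OF_SOUND - v_source)


approaching = doppler_playback_rate((34.3, 0.0, 0.0), (0.0, 0.0, 0.0), (10.0, 0.0, 0.0))
receding = doppler_playback_rate((-34.3, 0.0, 0.0), (0.0, 0.0, 0.0), (10.0, 0.0, 0.0))
```

Smoothing the rate over time, rather than applying it abruptly per buffer, is one way to reduce the artifacts mentioned above.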

Sound Transport Time

In the real world, sound takes time to travel, so there is often a noticeable delay between seeing and hearing

something. For example, you would see the muzzle flash from a rifle fired at you 100 meters away roughly

290 ms before you would hear it. Modeling propagation time incurs some additional complexity and may

paradoxically make things seem less realistic, as we are conditioned by popular media to believe that loud

distant actions are immediately audible.

The Oculus Audio SDK supports time-of-arrival.

Non-Spatialized Audio

Not all sounds need to be spatialized. Plenty of sounds are static or head relative, such as:

User interface elements, such as button clicks, bleeps, transitions, and other cues

Background music

Narration

Body sounds, such as breathing or heart beats

Such sounds should be segregated during authoring as they will probably be stereo, and during mixing so they

are not inadvertently pushed through the 3D positional audio pipeline.

Performance

Spatialization incurs a performance hit for each additional sound that must be placed in the 3D sound field. This

cost varies, depending on the platform. For example, on a high end PC, it may be reasonable to spatialize 50+

sounds, while you may only be able to spatialize one or two sounds on a mobile device.
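
One simple way to respect a per-platform budget is to spatialize only the sources nearest the listener and fall back to pan/attenuation for the rest. The sketch below assumes distance is a good enough priority; the function name and the data layout are hypothetical.

```python
import math


def choose_spatialized(sources, listener, budget):
    """Split sources into (spatialized, panned) given a per-platform budget.

    sources: list of (name, position) pairs; the `budget` nearest to the
    listener are spatialized, the rest fall back to pan/attenuation.
    """
    ranked = sorted(sources, key=lambda s: math.dist(s[1], listener))
    return ranked[:budget], ranked[budget:]


sources = [("far", (10.0, 0.0, 0.0)), ("near", (1.0, 0.0, 0.0)), ("mid", (5.0, 0.0, 0.0))]
spatialized, panned = choose_spatialized(sources, (0.0, 0.0, 0.0), budget=2)
```

A real title might weight the ranking by loudness or gameplay importance instead of distance alone.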

Some sounds may not benefit from spatialization even if placed in 3D in the world. For example, very low

rumbles or drones offer poor directionality and could be played as standard stereo sounds with some panning

and attenuation.

Ambiance

Aural immersion with traditional non-VR games was often impossible since many gamers or PC users relied on

low-quality desktop speakers, home theaters with poor environmental isolation, or gaming headsets optimized

for voice chat.

With headphones, positional tracking, and full visual immersion, it is now more important than ever that sound

designers focus on the user's audio experience.


This means:

Properly spatialized sound sources

Appropriate soundscapes that are neither too dense nor too sparse

Avoidance of user fatigue

Suitable volume levels comfortable for long-term listening

Room and environmental effects

Audible Artifacts

As a 3D sound moves through space, different HRTFs and attenuation functions may become active, potentially

introducing discontinuities at audio buffer boundaries. These discontinuities will often manifest as clicks, pops

or ripples. They may be masked to some extent by reducing the speed of traveling sounds and by ensuring that

your sounds have broad spectral content.
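
Beyond the masking strategies above, a technique often used inside spatializers (and not something this document attributes to the Oculus SDK) is to crossfade between the old and new filter settings over one buffer. The sketch below assumes both renderings of the buffer are available; the function name is hypothetical.

```python
def crossfade_buffers(old_render, new_render):
    """Blend two renderings of the same buffer, linearly over its length.

    old_render: buffer processed with the previous HRTF/attenuation settings.
    new_render: the same input processed with the new settings.
    """
    n = len(old_render)
    if n != len(new_render):
        raise ValueError("buffers must have equal length")
    if n == 1:
        return list(new_render)
    out = []
    for i, (old, new) in enumerate(zip(old_render, new_render)):
        t = i / (n - 1)  # 0.0 at buffer start, 1.0 at buffer end
        out.append((1.0 - t) * old + t * new)
    return out


blended = crossfade_buffers([1.0] * 5, [0.0] * 5)
```

The output starts on the old rendering and ends on the new one, so there is no step at either buffer boundary, at the cost of processing the buffer twice.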

Latency

While latency affects all aspects of VR, it is often viewed as a graphical issue. However, audio latency can be

disruptive and immersion-breaking as well. Depending on the speed of the host system and the underlying

audio layer, the latency from buffer submission to audible output may be as short as 2 ms in high performance

PCs using high end, low-latency audio interfaces, or, in the worst case, as long as hundreds of milliseconds.

High system latency becomes an issue as the relative speed between an audio source and the listener's head

increases. In a relatively static scene with a slow moving viewer, audio latency is harder to detect.
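
The buffering component of output latency can be estimated from the buffer size, the number of queued buffers, and the sample rate. The figures below are illustrative assumptions chosen to land near the two ends of the range quoted above; they do not describe any particular audio interface.

```python
def buffer_latency_ms(frames_per_buffer, sample_rate_hz, buffers_in_flight=2):
    """Latency contributed by audio buffering alone, in milliseconds."""
    return 1000.0 * frames_per_buffer * buffers_in_flight / sample_rate_hz


low = buffer_latency_ms(48, 48000, buffers_in_flight=2)      # small buffers
high = buffer_latency_ms(4096, 44100, buffers_in_flight=3)   # large, deep queue
```

Driver and hardware delays add to these numbers, so they should be treated as a lower bound.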

Effects

Effects such as filtering, equalization, distortion, flanging, and so on can be an important part of the virtual

reality experience. For example, a low pass filter can emulate the sound of swimming underwater, where high

frequencies lose energy much more quickly than in air, or distortion may be used to simulate disorientation.
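
As a small example of such an effect, the sketch below implements a first-order low-pass filter of the kind that could stand in for an "underwater" treatment. The function name and the cutoff value are assumptions; production effects typically use steeper filters.

```python
import math


def one_pole_lowpass(samples, cutoff_hz, sample_rate_hz):
    """Filter `samples` with a first-order low-pass (exponential smoothing)."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate_hz)
    out, state = [], 0.0
    for x in samples:
        state += alpha * (x - state)  # move a fraction of the way to the input
        out.append(state)
    return out


# A signal alternating every sample is the highest frequency representable.
harsh = [1.0 if i % 2 == 0 else -1.0 for i in range(2000)]
muffled = one_pole_lowpass(harsh, cutoff_hz=500.0, sample_rate_hz=48000.0)
steady = one_pole_lowpass([1.0] * 2000, cutoff_hz=500.0, sample_rate_hz=48000.0)
```

High-frequency content is strongly reduced while slowly varying content passes through almost unchanged.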

VR Audio Glossary

Definitions of technical VR audio terms.

| Term | Definition |
| --- | --- |
| Anechoic | Producing no echoes; very low or no reverberation. |
| Attenuation | A loss of energy; in acoustics, typically a reduction in volume. |
| Direct Sound | Sound that has traveled directly to the listener without reflecting (versus reverberant sound). |
| Early Reflections | Reflected sounds that arrive relatively soon at a listener's location (i.e., before late reflections). |
| Head-Related Impulse Response (HRIR) | A formal characterization of the effect of sound interacting with the geometry of a particular human body. Used to create head-related transfer functions. |
| Head-Related Transfer Function (HRTF) | A transformation of an acoustic signal using a head-related impulse response. Used to simulate the effects of interaction of a sound originating from a specific direction with the geometry of a particular human body. |
| Head Shadowing | The attenuation of sound caused by the head lying between an ear and the sound source. |
| Initial Time Delay | The interval between the arrival of a direct sound and its first reflection. |
| Interaural Level Difference (ILD) | The difference in a sound's level or volume between the two ears. |
| Interaural Time Difference (ITD) | The length of the interval between when a sound arrives at the first ear and when it arrives at the second ear. |
| Late Reflections | Reflected sounds that arrive relatively late at a listener's location (i.e., after early reflections). |
| Motion Parallax | When moving objects are farther from a perceiver, their apparent speed of travel decreases; for example, a moving airplane on the horizon appears to be travelling more slowly than a nearby car. The apparent rate of travel of an object can therefore be used as a distance cue. |
| Pinnae | The visible portion of the ear that lies outside the head. |
| Reverberant Sound | Sound that has reflected or reverberated before arriving at a listener's location (versus direct sound). |
| Reverberation | The reflection of sound off of a surface, or the temporary persistence of sound in a space caused by such reflections. |
| Sound Localization | 1. The process of determining the location of a sound's origin; or 2. the suggestion of an object's location based on the manipulation of auditory cues. |
| Sound Spatialization | The representation of a sound within three-dimensional space. |


Oculus Audio SDK Guide

This document describes how to install, configure, and use the Oculus Audio SDK.

The Audio SDK consists of documentation, samples, plugins, source code, libraries, and tools to help

developers implement immersive audio in their VR apps.

Thus far, much of the discussion about virtual reality has centered on visual aspects such as pixel density,

latency, frame rate, and visual artifacts. However, audio characteristics reinforce visual immersion by providing

compelling auditory cues which indicate the positions of objects around the viewer, including those that are

outside their field of view.

We strongly recommend beginning with Introduction to Virtual Reality Audio for a technical discussion of the

key concepts and terminology.

SDK Contents and Features

The Oculus Audio SDK provides tools to help VR developers incorporate high-quality audio into their apps and

experiences by providing:

OculusHQ sound rendering path, supporting a large number of sound sources, providing strong directional

cues, early reflections and late reverberations

Plugins for game engines such as Unity3D

Plugins for popular audio middleware such as FMOD and Wwise

AAX Plugin for use with Avid's Pro Tools

VST plugin for Windows and OS X

Tutorials and white papers

A low-level C/C++ SDK that provides spatialization and (optional) reverberation is also available by request.

Please submit requests to the Oculus Audio Development Forum if you are interested.

Requirements

Head Tracking

By tracking the listener's head position and orientation, we can achieve accurate 3D sound spatialization. As

the listener moves or rotates their head, they perceive the sound as remaining at a fixed location in the virtual

world.

Developers may pass Oculus PC SDK ovrPosef structures to the Oculus Audio SDK for head tracking support.

Alternatively, they can pass listener-space sound positions and no pose information for the same effect.

Headphones

The Oculus Audio SDK assumes that the end user is wearing headphones, which provide better isolation,

privacy, portability, and spatialization than free-field speaker systems. When combined with head tracking and

spatialization technology, headphones deliver an immersive sense of presence. For more on the advantages

and disadvantages of headphones for virtual reality, please refer to Listening Devices in Introduction to Virtual

Reality Audio.


Features

This section describes the features supported by the Oculus Audio SDK.

Supported Features

This section describes supported features.

Spatialization

Spatialization is the process of transforming monophonic sound sources to make them sound as though they

originate from a specific desired direction. The Oculus Audio SDK uses head-related transfer functions

(HRTFs) to provide audio spatialization through the C/C++ SDK and plugins.

Note: It is important that all sounds passed to Oculus Audio Spatializers are monophonic - stereophonic

rendering will be handled by our spatialization, and must not be applied over additional stereophonic

rendering provided by your game engine or library.

Environmental Modeling

HRTFs provide strong directional cues, but without room effects, they often sound dry and lifeless. Some

environmental cues (e.g., early reflections and late reverberation) are also important in providing strong cues

about the distance to a sound source.

The Audio SDK supports early reflections and late reverberations using a simple 'shoebox model,' consisting

of a virtual room centered around the listener's head, with four parallel walls, a floor, and a ceiling at varying

distances, each with its own distinct reflection coefficient.

Unsupported Features

There are other aspects of a high quality audio experience; however, these are often more appropriately

implemented by the application programmer or a higher level engine.

Occlusion

Sounds interact with a user's environment in many ways. Objects and walls may obstruct, reflect, or propagate

a sound through the virtual world. The Oculus Audio SDK only supports direct reflections and does not factor in the

virtual world geometry. This problem needs to be solved at a higher level than the Oculus Audio SDK due to

the requirements of scanning and referencing world geometry.

Doppler Effect

The Doppler effect is the perceived change in pitch that occurs when a sound source is moving at a rapid rate

towards or away from a listener, such as the pitch change that is perceived when a car whooshes by. It is often

emulated in middleware with a simple change in playback rate by the sound engine.

Creative Effects

Effects such as equalization, distortion, flanging, and so on can be used to great effect in a virtual reality

experience. For example, a low pass filter can emulate the sound of swimming underwater, where high

frequencies lose energy much faster than in air, distortion may be used to simulate disorientation, a narrow

bandpass equalizer can give a 'radio' effect on sound sources, and so on.

The Oculus Audio SDK does not provide these effects. Typically, these would be applied by a higher level

middleware package either before or after the Audio SDK, depending on the desired outcome. For example, a


low-pass filter might be applied to the master stereo buffers to simulate swimming underwater, but a distortion

effect may be applied pre-spatialization for a broken radio effect in a game.

Area and Directional Sound Sources

The Oculus Audio SDK supports monophonic point sources. When a sound is specified, it is assumed that the

waveform data represents the sound as audible to the listener. It is up to the caller to attenuate the incoming

sound to reflect speaker directional attenuation (e.g. someone speaking while facing away from the listener)

and area sources such as waterfalls or rivers.

Sound Transport Time

Sound takes time to travel, which can result in a noticeable delay between seeing something occur and hearing

it (e.g., you would see the muzzle flash from a rifle fired 100 m away roughly 290 ms before you would hear it).

We do not model sound propagation time, as it would incur additional complexity, and it can have the

paradoxical effect of making things seem less realistic, as most people are conditioned by popular media

to believe that loud distant actions are immediately audible. Developers may delay a sound's onset

appropriately to simulate that effect, if desired.
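
A developer who wants to simulate travel time could compute the onset delay from the source distance, as in the sketch below. The function name, the 48 kHz sample rate, and the 343 m/s speed of sound are assumptions; the exact delay for a given distance depends on the speed of sound assumed.

```python
SPEED_OF_SOUND = 343.0  # m/s, approximate value for air at room temperature


def onset_delay_samples(distance_m, sample_rate_hz=48000):
    """Number of samples to delay a sound's onset to model travel time."""
    return round(distance_m / SPEED_OF_SOUND * sample_rate_hz)


delay = onset_delay_samples(100.0)       # rifle shot 100 m away
delay_ms = 1000.0 * delay / 48000        # a little under 300 ms
```

The delay would be recomputed as the source or listener moves; abrupt changes to it are themselves a source of artifacts.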

Pitfalls and Workarounds

Spatialization and room effects greatly contribute to realism, but they come at a cost.

Performance

Spatialization incurs a performance hit for each additional sound that must be placed in the 3D sound field. This

cost varies, depending on the platform. For example, on a high end PC, it may be reasonable to spatialize 50+

sounds, while you may only be able to spatialize one or two sounds on a mobile device.

Some sounds may not benefit from spatialization even if placed in 3D in the world. For example, very low

rumbles or drones offer poor directionality and could be played as standard stereo sounds with some panning

and attenuation.

Audible Artifacts

As a 3D sound moves through space, different HRTFs and attenuation functions may become active, potentially

introducing discontinuities at audio buffer boundaries. These discontinuities will often manifest as clicks, pops

or ripples. They may be masked to some extent by reducing the speed of traveling sounds and by ensuring that

your sounds have broad spectral content.

Latency

While latency affects all aspects of VR, it is often viewed as a graphical issue. However, audio latency can be

disruptive and immersion-breaking as well. Depending on the speed of the host system and the underlying

audio layer, the latency from buffer submission to audible output may be as short as 2 ms in high performance

PCs using high end, low-latency audio interfaces, or, in the worst case, as long as hundreds of milliseconds.

High system latency becomes an issue as the relative speed between an audio source and the listener's head

increases. In a relatively static scene with a slow moving viewer, audio latency is harder to detect.


Compatibility between VR and Non-VR Games

Few developers have the luxury of targeting VR headsets exclusively, and must support traditional, non-VR games using external speakers and without the benefit of positional tracking. Weighing quality versus

compatibility can be difficult and incur development time.

Platform Notes

The Oculus Audio SDK currently supports Windows 7+, Android (Gear VR), Mac OS X, and Linux. This section

covers issues that may arise with different versions.

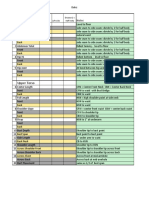

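| Component | Windows | Gear VR / Android | Mac | Linux |
| --- | --- | --- | --- | --- |
| C/C++ SDK | yes | yes | yes | yes |
| Wwise plugin | yes | TBD | TBD | no |
| Unity plugin | yes | yes | yes | TBD |
| FMOD plugin | yes | yes | yes | no |
| VST plugin | yes | no | yes | no |
| AAX plugin | soon! | no | soon! | no |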

Windows: The Oculus Audio SDK supports Windows 7 and later, both 32 and 64-bit.

Mac: The Oculus Audio SDK supports Mac OS X 10.9 and later, 32 and 64-bit.

Linux: The Oculus Audio SDK has been ported to Linux Ubuntu 14 (64-bit).

Android/Gear VR: Oculus Audio SDK supports Android phones on the Gear VR platform.

Middleware Support

Very few Oculus developers will use the Oculus Audio C/C++ SDK directly. Most developers use a middleware

framework, such as Audiokinetic Wwise or FMOD, and/or an engine such as Unity or Epic's Unreal. For this

reason, we support middleware packages and engines commonly used by developers.

Audiokinetic Wwise: Oculus provides a Wwise compatible plugin for Windows. More information about this

plugin can be found in the Oculus Spatializer for Wwise Integration Guide.

FMOD: The Oculus Audio SDK supports FMOD on the Windows, Mac and Android platforms. More

information about this plugin can be found in the Oculus Spatializer for FMOD Integration Guide.

Unity3D: The Oculus Audio SDK supports Unity 4.6 on Android, Mac OS X and Windows. More information

about this plugin can be found in the Oculus Spatializer for Unity Integration Guide.

Unreal Engine: Epic's Unreal Engine 4 supports numerous different audio subsystems. The Wwise

integration (available directly from Audiokinetic) has been tested with our Wwise Spatializer plugin (see

above).

Oculus Hardware Capabilities

Each Oculus hardware platform has different audio capabilities.


Note: All Oculus VR headsets require headphones.

DK1 does not provide any audio output and thus relies on the end user's system for audio output.

DK2 also relies on the end user's system for audio output, but adds positional tracking.

The Oculus CV1 HMD has a built-in DAC (audio over USB connected to the headset) and ADC (for

microphone). The audio subsystem is class compliant and supports Windows, Mac and Linux. The HMD has

integrated headphones and a microphone as well.

The Samsung Gear VR mobile product supports 16-bit, 48 kHz sound. Like DK1, it supports head orientation

but not positional tracking. Headphone choice is dictated by the end user.

Mobile performance is significantly lower than desktop PC performance, so try to minimize the number of audio

sources.


Oculus Native Spatializer for Unity

Welcome to this guide to using the Oculus Native Spatializer plugin in Unity!

Overview

This guide describes how to install and use the Oculus Native Spatializer plugin in Unity 5.2+ and in end-user

applications.

The Oculus Native Spatializer Plugin (ONSP) is an add-on plugin for Unity that allows monophonic sound

sources to be spatialized in 3D relative to the user's head location.

The Oculus Native Spatializer is built on Unity's Native Audio Plugin, which removes redundant spatialization

logic and provides a first-party HRTF. It supersedes our previous Oculus Spatializer Plugin for Unity (available

prior to Audio SDK 1.0), which used scripts to configure settings on an Audio Source.

Our ability to localize audio sources in three-dimensional space is a fundamental part of how we experience

sound. Spatialization is the process of modifying sounds to make them localizable, so they seem to originate

from distinct locations relative to the listener. It is a key part of creating a sense of presence in virtual reality

games and applications.

For a detailed discussion of audio spatialization and virtual reality audio, we recommend reviewing our

Introduction to Virtual Reality Audio guide before using the Oculus Native Spatializer. If you're unfamiliar with

Unity's audio handling, be sure to review the Unity Audio guide.

Note: Our previous OSP for Unity is now available as the Legacy Oculus Spatializer, and is intended

primarily for users of Unity 4.

Requirements and Setup

Requirements

Windows 7/8

Unity 5.2 Professional or Personal, or later. See Unity Compatibility and Requirements for details on our

recommended versions.

Note: The ONSP is not compatible with Unity 4. Projects built with Unity 4 should continue to use the

Legacy OSP for Unity.

Download and Setup

Note: We recommend removing any previously-imported versions of the OSP or ONSP before

importing a new plugin. See Updating to Oculus Native Spatializer for Unity from previous OSP for Unity

Versions below for instructions.

To download the ONSP and import it into a Unity project:

1. Download the Oculus Audio SDK Plugins package from the Oculus Downloads page.

2. Extract the zip.


3. Open your project in the Unity Editor, or create a new project.

4. Select Assets > Import Package > Custom Package.

5. Select OculusNativeSpatializer.unitypackage and import.

6. When the Importing Package dialog opens, leave all assets selected and click Import.

To turn on the Native Spatializer:

1. Go to Edit > Project Settings > Audio in the Unity Editor.

2. Select OculusSpatializer in the Spatializer Plugin drop-down setting in the AudioManager Inspector panel as

shown below.

We recommend setting DSP Buffer Size to Best latency to set up the minimum buffer size for the platform that

is supported, reducing overall audio latency.

Updating to Oculus Native Spatializer for Unity from previous OSP for Unity Versions

1. Note the settings used in OSPManager in your project.

2. Replace OSPAudioSource.cs (from previous OSP) on AudioSources with OculusSpatializerUserParams.cs in

<project>/Assets/OSP.

3. Set the appropriate values previously used in OSPManager in the plugin effect found on the mixer channel.

Note that the native plugin adds functionality, so you will need to adjust to this new set of parameters.

4. Remove OSPManager from the project by deleting OSPManager*.* from <project>/Assets/OSP except

your newly-added OculusSpatializerUserParams.cs.

5. Verify that OculusSpatializer is set in the Audio Manager and that Spatialization is enabled for that voice.

Functions such as Play and Stop that were provided by the previous OSP no longer exist. Instead, use the functions on AudioSource to start, stop, and modify sounds as required.

Exploring Oculus Native Spatializer with the Sample Scene

To get started, we recommend opening the supplied demonstration scene RedBallGreenBall, which provides a simple introduction to ONSP resources and examples of what the spatializer sounds like.

This simple scene includes a red ball and a green ball, which illustrate different spatializer settings. A looping

electronic music track is attached to the red ball, and a short human voice sequence is attached to the green

ball.

Launch the scene in the Unity Game View, navigate with the arrow keys, and control the camera orientation

with your mouse to quickly hear the spatialization effects.

To import and open RedBallGreenBall:

1. Create a new Unity project.

2. Import the OculusNativeSpatializer.unitypackage.

3. When the Importing Package dialog opens, leave all assets selected and click Import.

4. Enable the Spatializer as described in Download and Setup.

5. Open RedBallGreenBall in /Assets/scenes.

To preview the scene with a Rift:

1. Import and launch RedBallGreenBall as described above.

2. In Build Settings, verify that the PC, Mac and Linux Standalone option is selected under Platform.

3. In Player Settings, select Virtual Reality Supported.

4. Preview the scene normally in the Unity Game View.

To preview the scene in Gear VR (requires gamepad):

1. Be sure you are able to build and run projects on your Samsung phone (Debug Mode enabled, adb installed, etc.). See the Mobile SDK Setup Guide for more information.

2. Follow the setup steps at the top of this section.

3. In Build Settings:

   a. Select Android under Platform.

   b. Select Add Current to Scenes in Build.

4. In Player Settings, select Virtual Reality Supported.

5. Copy your osig to <unity-project>/Assets/Plugins/Android/assets.

6. Build and run your project.

7. Navigate the scene with a compatible gamepad.

Applying Spatialization

Attach the helper script ONSPAudioSource.cs, found in Assets/OSPNative/scripts, to an AudioSource. This

script accesses the extended parameters required by the Oculus Native Spatializer. Note that all parameters

native to an AudioSource are still available, though some values may not be used if spatialization is enabled on

the audio source.

In this example, we look at the script attached to the green sphere in our sample RedBallGreenBall:

OculusSpatializerUserParams Properties

Enable Spatialization

Effectively, a bypass button for spatialization. If disabled, the attached Audio Source

will act as a native audio source without spatialization. This setting is linked to the

corresponding parameter in the Audio Source expandable pane (collapsed in the above

capture).

Disable Reflections

Select to disable early reflections and reverb for the spatialized audio source.

To use early reflections and reverb, you must deselect this value and add an

OculusSpatializerReflection plugin to the channel where you send the AudioSource in

the Audio Mixer. See Audio Mixer Setup below for more details.

Gain

Adds up to 24 dB of gain to the audio source volume, with 0 dB equal to unity gain.
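
To give a sense of what the Gain range means in linear terms, the following sketch converts a decibel value to an amplitude multiplier using the standard 20 * log10 amplitude convention. The function name is hypothetical, and clamping to a lower bound of 0 dB is an assumption based on the description above; this is not the plugin's own code.

```python
def gain_db_to_linear(gain_db: float, max_gain_db: float = 24.0) -> float:
    """Convert a Gain setting in dB to a linear amplitude multiplier."""
    # Assumption: the setting only adds gain, so clamp to the 0..24 dB range.
    clamped_db = min(max(gain_db, 0.0), max_gain_db)
    # Standard amplitude convention: dB = 20 * log10(amplitude ratio).
    return 10.0 ** (clamped_db / 20.0)


for db in (0.0, 6.0, 12.0, 24.0):
    print(f"{db:4.1f} dB -> x{gain_db_to_linear(db):.2f} amplitude")
```

A setting of 0 dB leaves the source unchanged, and each additional 6 dB roughly doubles the amplitude.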

Inverse Square Attenuation Settings

Enable

If selected, the audio source will use an internal attenuation falloff curve controlled by

the Near and Far parameters. If deselected, the attenuation falloff will be controlled by

the authored Unity Volume curve within the Audio Source Inspector panel.

Note: We strongly recommend enabling internal attenuation falloff for a more accurate rendering of spatialization. The internal curve matches both how the direct audio falls off and how the early reflections and reverb are modeled.

Falloff Near

Sets the point at which the audio source starts attenuating, in meters. It also influences

the reflection/reverb system, whether or not internal inverse square attenuation is

enabled. Larger values will result in less noticeable attenuation when the listener is near

the sound source.

Falloff Far

Sets the point at which the audio source reaches full volume attenuation, in meters.

It also influences the reflection/reverb system, whether or not internal inverse square

attenuation is enabled. Larger values allow for loud sounds that can be heard from a

distance.
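
The interaction of Falloff Near and Falloff Far can be pictured with a simple model. The sketch below is an assumption for illustration only: the exact internal curve is not given here, so it uses a plain inverse-distance amplitude law (which corresponds to inverse square intensity), held at full volume inside the near distance and cut to silence at the far distance. The function name is hypothetical.

```python
def falloff_gain(distance_m: float, near_m: float, far_m: float) -> float:
    """Illustrative amplitude multiplier for a listener at distance_m.

    Assumed model, not the plugin's actual curve:
    full volume up to near_m, near_m / distance in between, silent from far_m on.
    """
    if near_m <= 0 or far_m <= near_m:
        raise ValueError("require 0 < near_m < far_m")
    if distance_m <= near_m:
        return 1.0  # no attenuation yet
    if distance_m >= far_m:
        return 0.0  # fully attenuated
    return near_m / distance_m


# A larger Near value keeps the source louder at the same distance.
for near in (1.0, 2.0):
    gains = [falloff_gain(d, near, 100.0) for d in (1.0, 4.0, 20.0, 100.0)]
    print(f"near={near} m:", [round(g, 3) for g in gains])
```

In this model, doubling the near distance doubles the amplitude heard at any point between the two limits, which matches the description that larger Near values make attenuation less noticeable close to the source.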

Audio Mixer Setup

Note: In Unity's terminology, a group is roughly the same as a channel. In this section, we use the terms interchangeably.

Unity 5 includes a flexible mixer architecture for routing audio sources. A mixer allows the audio engineer

to add multiple channels, each with their own volume and processing chain, and set an audio source to that

channel. For detailed information, see Unity's Mixer documentation.

Shared Reflection/Reverb

To allow for a shared reflection/reverb system within our Oculus Spatializer, you must create a mixer channel

and add the OculusSpatializerReflection effect to that channel.

1. Select the Audio Mixer tab in your Project View.

2. Select Add Effect in the Inspector window.

3. Select OculusSpatializerReflection.

4. Set the Output of your attached Audio Source to Master (SpatializerMixer).

5. Set reflection/reverberation settings to globally affect spatialized voices.

Table 1: Mixer Settings

E.Rflt On

Set to any non-zero value to enable the early reflection system.

E.Rflt Rev On

Set to any non-zero value to enable global reverb (requires early reflection (E.Rflt On) to

be enabled).

Room X/Y/Z

Sets the dimensions of the theoretical room used to calculate reflections, in meters. The

greater the dimensions, the further apart the reflections. Range: 0 - 200m.

Left, Right, Up, Down, Behind, Front

Sets the fraction of sound reflected by each respective wall, from 0 to 1.0. At 0, the sound is fully absorbed. At 1.0, the sound bounces from the wall without any absorption. Values are capped at 0.97 to avoid feedback.
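
The effect of the room and wall settings can be approximated with some simple arithmetic. The sketch below is illustrative only and makes several assumptions that are not part of the plugin: the source and listener both sit at the center of the room, sound travels at 343 m/s, and each bounce scales amplitude by the wall's reflection value. All function names are hypothetical.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed value for dry air at about 20 degrees C
MAX_REFLECTION = 0.97       # cap stated in the mixer settings table


def clamp_reflection(coefficient: float) -> float:
    """Clamp a wall reflection value to the 0..0.97 range."""
    return min(max(coefficient, 0.0), MAX_REFLECTION)


def first_reflection_delay_ms(room_dimension_m: float) -> float:
    """Delay of a first-order reflection along one room axis, in ms.

    Assumes source and listener are both at the room center, so the sound
    travels half the dimension to the wall and half back.
    """
    if not 0.0 <= room_dimension_m <= 200.0:
        raise ValueError("room dimension must be within 0..200 m")
    return 1000.0 * room_dimension_m / SPEED_OF_SOUND_M_S


def amplitude_after_bounces(coefficient: float, bounces: int) -> float:
    """Remaining amplitude after a number of bounces off identical walls."""
    return clamp_reflection(coefficient) ** bounces


for size in (5.0, 20.0, 200.0):
    print(f"{size:5.1f} m room axis -> {first_reflection_delay_ms(size):.1f} ms")
print("amplitude after 100 bounces at 1.0 (capped):",
      round(amplitude_after_bounces(1.0, 100), 4))
```

Larger dimensions push reflections further apart in time, and because the value is capped below 1.0, repeated bounces always decay rather than sustaining indefinitely.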

RedBallGreenBall Example

To see how this works in RedBallGreenBall, access the Audio Mixer by selecting the Audio Mixer tab in your

Project View. Then select Master under Groups as shown below.

Select the green sphere in your Scene View. Note that the Output of the attached Audio Source vocal1 is set to

our Master (SpatializerMixer):

You can now set reflection/reverberation settings to globally affect spatialized voices:

Legacy Oculus Spatializer for Unity Integration Guide

Welcome to this guide to using the Legacy Oculus Spatializer plugin in Unity!

Overview

The Legacy Oculus Spatializer Plugin (OSP) is an add-on plugin for the Unity tool set that allows monophonic

sound sources to be spatialized in 3D relative to the user's head location. This integration guide outlines how to

install and use OSP in the Unity editor and end-user application.

Note: Developers using Unity 5 should use the Oculus Native Spatializer Plugin. The Legacy Oculus

Spatializer Plugin is provided for Unity 4 development.

Version Compatibility

This integration has been tested with Unity 5.1 on Windows 7 / 8 (32 and 64-bit), as well as on Mac OS X

10.8.5 and Android 4.4.4 (KitKat). It is also known to work with Unity 4.5 and later.

Both Unity Professional and Free are supported.

General OSP Limitations

1. CPU usage increases when early reflections are turned on, and increases proportionately as room

dimensions become larger.

Reducing Android Latency: OpenSL Low-Latency Driver

Android audio latency is known to be high, generally 100-200 ms between the time a sound event is triggered and the time the sound is actually heard. Gear VR minimizes audio latency within Unity applications by switching to an optimized OpenSL driver.

Your Android application must be built with the proper AndroidManifest.xml file. This is accomplished by using

the official Gear VR Unity integration and following the relevant manifest instructions.

Use of the OpenSL driver is currently available in Unity v4.6.1p4 and higher. To find it:

1. Navigate to https://unity3d.com.

2. Click Download.

3. Click Looking for patch releases?

4. Select Unity v4.6.1p4 or higher.

It is also publicly available in Unity 5.

Note: Use of OpenSL through Unity on a VR-enabled device is still a work in progress. As such, please

know that it will be easier to overload the audio CPU usage with OpenSL over the higher-latency

Android drivers; you may hear more buffer over-run issues depending on the complexity of your audio

scene. We recommend treating OpenSL as a preview of low-latency audio on mobile VR.

For up-to-date information about OpenSL support in Unity, please refer to the Oculus Unity Integration Guide.

Installing into Unity

Unity OSP contents are found in the Unity distribution package OculusSpatializer.unitypackage. This package

contains all of the components required to install the OculusSpatializer into your Unity project.

To import the package into Unity:

1. Open the project in which you would like to install the plugin, or create a new one.

2. Double-click the OculusSpatializer.unitypackage file in your operating system's file browser to install all files into the project.

Note: OculusSpatializer.unitypackage contains a test scene called OSPTest, which uses the Unity

standard asset FirstPersonController. If you install the package into a project which already includes this

asset, you will have a resource conflict. To avoid this conflict, install everything from the unitypackage

except for the OSPTestScene directory.

Once installed, you will see the following folders in your project:

OSP

OSPTestScene

Plugins: includes the OculusSpatializerPlugins for various platforms.

How to Use the Oculus Spatializer in Unity

To get started, we recommend that you create a new Unity project and import OculusSpatializer.unitypackage,

and then have a look at the test scene OSPTest found in the folder OSPTestScene. This is a good way to

become familiar with the OSP resources and to hear what the spatializer sounds like.

To add spatialization to audio in Unity, start with the prefabs OSPManager and OSPAudioSource in /OSP/Prefabs.

OSPManager

Add OSPManager to a scene to initialize the spatialization engine. OSPManager contains global properties that