91.545

Machine Learning: Final Project Report

2015

Titanic - Machine Learning From Disaster

91.523 Machine Learning Final Project, UMass Lowell

Kunal Vyas, Zeshi Zheng, Lin Li

{kunal_vyas, zeshi_zheng, lin_li} @student.uml.edu

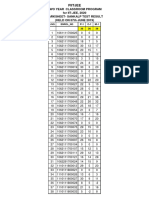

The survivor statistics by category in Figure 1 show that men fared worst in this disaster. The overall survival rate was 20% for men, 74% for women, and 52% for children. This result is uncommon in maritime disasters, and the rule of "women and children first" was strictly carried out.

Besides gender, other factors affected the outcome. According to the statistics, third class women were 41% more likely to survive than first class men, and third class men were twice as likely to survive as second class men. Class is therefore a far weaker variable in determining survival rate than gender or age. Indeed, most of the variance between first class and third class survival rates can be attributed to gender alone. The reason is simple: 44% of the first class passengers were women, while only 23% of the third class passengers were women. Because the survival rate for women was far greater than the survival rate for men, we would expect a much higher survival rate for first class passengers as a whole than for third class passengers as a whole.
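The gender-mix argument can be checked with a short calculation. The sketch below is only an illustration: it blends the overall survival rates quoted above (74% for women, 20% for men) by each class's share of women, and it ignores children and any effect of class itself. The function name is hypothetical.

```python
def expected_class_survival(share_women, rate_women=0.74, rate_men=0.20):
    """Blend the two gender survival rates by a class's gender mix."""
    share_men = 1.0 - share_women
    return share_women * rate_women + share_men * rate_men


# Shares of women per class as quoted in the text.
first_class = expected_class_survival(0.44)
third_class = expected_class_survival(0.23)

print(f"expected first class survival: {first_class:.4f}")
print(f"expected third class survival: {third_class:.4f}")
```

Under these assumptions the gender mix alone yields a visibly higher expected survival rate for first class than for third class, which is the point made in the paragraph.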

Abstract

RMS Titanic was a British passenger liner that sank in the North Atlantic Ocean after colliding with an iceberg. Although there was some element of luck involved in surviving the sinking, some groups of people were more likely to survive than others, such as women, children, and the upper class. In this project, three machine-learning techniques, a modified gender-based model, random forest, and support vector machines (SVM), are applied to predict passengers' likelihood of survival. For the modified gender-based model, class, fare, port, ticket, and family size are considered together with gender to reach a maximum accuracy of 0.78469; for the random forest algorithm, the combined influence of passenger class, ticket, fare, gender, port, age fill, family size, and age*class gives a maximum accuracy of 0.77990; for support vector machines, only strong indicators of survival (passenger class, gender, and port) are taken into account, which leads to a maximum accuracy of 0.76077. Our best accuracy gives a 2% increase over other models, and we also conclude that more features do not necessarily give a better result.

1. Introduction

The sinking of the RMS Titanic is one of the most

infamous shipwrecks in history. One of the reasons that

the shipwreck led to such loss of life was that there were

not enough lifeboats for the passengers and crew.

Although there was some element of luck involved in

surviving the sinking, some groups of people were more

likely to survive than others, such as women, children,

and the upper-class.

The task of this project is to predict whether a given

passenger survived the sinking of the Titanic based on

various attributes including age, gender, family size,

ticket, the fare they paid, and other information using

tools of machine learning. Solutions are evaluated by

comparing the percentage of correct answers on a test

dataset.

Figure 1. Percentage of passengers saved by category [1]

Three machine-learning techniques are selected to solve this problem. Gender seems to be a strong indicator of survival, with women having a much better chance, but gender alone is unlikely to give the best prediction. Our modified gender-based model is an improved version of the gender-based model, which we call the gender-class-fare-port-ticket-familysize model. It takes gender, class, fare, port, ticket, and family size into consideration to get an improved prediction.

Random forests and support vector machines (SVM) are also applied to predict passengers' likelihood of survival. Random forests are a combination of tree predictors such that each tree depends on the values of a random vector sampled independently and with the same distribution for all trees in the forest. Random forests correct for decision trees' habit of overfitting to their training set. A random forest is a classifier consisting of a collection of tree-structured classifiers $\{h(x, \Theta_k),\ k = 1, \ldots\}$, where the $\{\Theta_k\}$ are independent identically distributed random vectors and each tree casts a unit vote for the most popular class at input $x$.

An SVM is a supervised learning model with associated learning algorithms that analyze data and recognize patterns, used for classification and regression analysis.

Given a set of training examples, each marked as

belonging to one of two categories, an SVM training

algorithm builds a model that assigns new examples into

one category or the other, making it a non-probabilistic

binary linear classifier. An SVM model is a

representation of the examples as points in space,

mapped so that the examples of the separate categories

are divided by a clear gap that is as wide as possible.

New examples are then mapped into that same space and

predicted to belong to a category based on which side of

the gap they fall on. In addition to performing linear

classification, SVMs can efficiently perform a non-linear

classification using what is called the kernel trick,

implicitly mapping their inputs into high-dimensional

feature spaces.
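To make the kernel trick more concrete, here is a minimal sketch of a radial basis function (RBF) kernel and the kernelized decision value it feeds into. The support vectors, coefficients, bias, and `gamma` value are made-up toy numbers chosen for illustration, not values learned from the Titanic data.

```python
import math


def rbf_kernel(x, z, gamma=0.5):
    """RBF kernel: exp(-gamma * ||x - z||^2), a similarity in (0, 1]."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)


def decision_value(x, support_vectors, dual_coefs, bias, gamma=0.5):
    """Kernelized SVM score; its sign gives the predicted category."""
    score = sum(
        coef * rbf_kernel(sv, x, gamma)
        for sv, coef in zip(support_vectors, dual_coefs)
    )
    return score + bias


# Toy model (hypothetical): one support vector per category.
support_vectors = [(0.0, 0.0), (2.0, 2.0)]
dual_coefs = [-1.0, 1.0]  # signed weights, negative class first
bias = 0.0

for point in [(0.1, 0.2), (1.9, 2.2)]:
    side = 1 if decision_value(point, support_vectors, dual_coefs, bias) > 0 else 0
    print(point, "-> category", side)
```

The kernel only ever compares pairs of points, so the high-dimensional feature space is never built explicitly.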

These three machine-learning techniques are applied to predict which passengers survived the sinking of the Titanic. This project builds on code provided with the problem on www.kaggle.com [2]; as our contribution, we analyzed the dataset and derived useful insights into the problem. The detailed background and approach are described in the following sections.

scikit-learn, to predict the likelihood of survival of individual passengers. They trained and tested random forests on the data and reported a predictive accuracy of 78.47%. Yin [4] chose age, the location of the passenger's cabin on the ship, family members, the fare paid, and other information for the evaluation. His score is 77.99%, which is just above the benchmark that uses a random forest model with default parameters. Lam and Tang [5] used three different models to deal with the classification problem. The first is Naive Bayes [6], the second uses an SVM [7] to map selected features to a higher-dimensional space, and the third uses decision tree analysis [8] to find the optimal decision boundaries. There were no significant differences in accuracy between the three methods they used. Even using every combination of features, they were not able to produce an accuracy rate much different from that of a simple Naive Bayes classifier using only sex as a feature. They also tried separating the data based on other features, such as the number of siblings/spouses and the number of parents/children aboard, but these features did not provide good insight into survival rate.

Although these studies suggest that an appropriate combination of features with a suitable method can improve the prediction rate, none of them achieves sufficiently good performance. It would be interesting to continue the analysis with other possible features or with other machine learning algorithms.

3. Approach

3.1 Random Forest [9]

3.1.1. Introduction

The common element is that for the $k$th tree, a random vector $\Theta_k$ is generated, independent of the past random vectors $\Theta_1, \ldots, \Theta_{k-1}$ but with the same distribution; and a tree is grown using the training set and $\Theta_k$, resulting in a classifier $h(x, \Theta_k)$ where $x$ is an input vector. For instance, in bagging the random vector $\Theta$ is generated as the counts in $N$ boxes resulting from $N$ darts thrown at random at the boxes, where $N$ is the number of examples in the training set. In random split selection $\Theta$ consists of a number of independent random integers between 1 and $K$. The nature and dimensionality of $\Theta$ depends on its use in tree construction.

After a large number of trees are generated, they vote for

the most popular class. We call these procedures random

forests.

A random forest is a classifier consisting of a collection of tree-structured classifiers $\{h(x, \Theta_k),\ k = 1, \ldots\}$ where the $\{\Theta_k\}$ are independent identically distributed random

2. Background

Recently, many studies have examined this problem in order to compare and contrast different machine learning techniques. As an important aspect of prediction, the trade-off between different features has been addressed in several of these studies, which are summarized below.

Nguyen et al. [3] created a supervised learning model,

which used Random Forests in the R randomForest

package and the RandomForestClassifier package in


vectors and each tree casts a unit vote for the most

popular class at input x.

A random forest is an ensemble of decision trees that outputs a prediction value, in this case survival. Each decision tree is constructed using a random subset of the training data. After the forest has been trained, each test row can be passed through it to output a prediction. The Python function we used requires floats for the input variables, so all strings need to be converted and any missing data needs to be filled.
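A minimal sketch of that string-to-float conversion is shown below. The numeric codes, the field names of the input dictionary, and the choice of "S" as the fallback port for a missing value are all assumptions made for illustration; the report does not state which codes were used.

```python
# Hypothetical numeric codes for the two string-valued columns.
SEX_CODES = {"female": 0.0, "male": 1.0}
PORT_CODES = {"C": 0.0, "Q": 1.0, "S": 2.0}


def encode_row(row, default_port="S"):
    """Turn one raw passenger record (all strings) into a list of floats."""
    # An empty embarkation field is filled with an assumed default port.
    port = row.get("embarked") or default_port
    return [
        float(row["pclass"]),
        SEX_CODES[row["sex"]],
        PORT_CODES[port],
        float(row["fare"]),
    ]


raw = {"pclass": "3", "sex": "male", "embarked": "", "fare": "7.25"}
print(encode_row(raw))
```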

made over these for the best split. This procedure is

called Forest-RC.

3.2 Improved Gender Model

3.1.2. Using Random Features

To improve accuracy, the randomness injected has to minimize the correlation while maintaining strength. The forests studied here use randomly selected inputs or combinations of inputs at each node to grow each tree. The resulting forests give accuracy that compares favorably with Adaboost.

Each new training set is drawn, with replacement, from

the original training set. Then a tree is grown on the new

training set using random feature selection. The trees

grown are not pruned.
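The two ingredients described here, drawing each training set with replacement and letting the trees vote, can be sketched in a few lines. This is an illustrative sketch only: tree growing itself is omitted, and the helper names are hypothetical.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Draw len(rows) examples with replacement from the original training set."""
    return [rng.choice(rows) for _ in rows]


def majority_vote(votes):
    """Return the class that received the most unit votes from the trees."""
    # On a tie, Counter keeps the class that was seen first.
    return Counter(votes).most_common(1)[0][0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
training_rows = list(range(10))  # stand-ins for training examples
sample = bootstrap_sample(training_rows, rng)

print("bootstrap sample:", sample)
print("forest prediction:", majority_vote([1, 0, 1, 1, 0]))
```

Because sampling is with replacement, a bootstrap sample usually repeats some examples and leaves others out, which is what makes the trees differ from one another.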

Figure 2. Gender analysis

Intuitively, women are more likely to receive help when a disaster happens. In the Titanic problem, women had a much bigger chance of surviving than men: 74.2% versus 18.9%. It is therefore natural to add other features to this gender model, but the features we choose should have a strong connection with whether people died.

Fare and passenger class are good choices because both relate to money and status: rich and powerful people are usually more likely to receive privileges than poor people. The dataset shows that class is not always in direct proportion to fare, and a first class ticket can actually be cheaper than a third class one. We attribute this to powerful people obtaining special prices to get on board. For this combination, we built a 3-dimensional array as a survival table, indexed by gender, class, and fare, that records whether the passengers in each cell survived. Because of the large range of fares, we divided fare into four bins: 0~9 dollars, 10~19 dollars, 20~29 dollars, and 30~39 dollars. Every fare higher than 39 is set to 39. For each cell of this array we computed the fraction of passengers who survived and used 0.5 as the threshold: if the mean of the survived column in a cell is greater than 0.5 we predict that the passenger survived, otherwise that he/she died. However, this threshold is not selective enough, so we changed the gender-class model slightly: we only predict survival when the survival fraction is over 80% and death when it is below 20%, and we make no prediction at this step for passengers between the two percentages.
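The survival table described above can be sketched as follows. This is a minimal illustration under stated assumptions: it uses a dictionary keyed by (gender, class, fare bin) instead of a 3-dimensional array, the toy rows are invented, and the function names are hypothetical.

```python
from collections import defaultdict


def fare_bin(fare, width=10, n_bins=4):
    """Map a fare to one of four 10-dollar bins; higher fares go to the top bin."""
    return min(int(fare // width), n_bins - 1)


def build_survival_table(rows):
    """rows: (sex, pclass, fare, survived) tuples -> survival fraction per cell."""
    totals = defaultdict(lambda: [0, 0])  # cell -> [survivors, passengers]
    for sex, pclass, fare, survived in rows:
        cell = totals[(sex, pclass, fare_bin(fare))]
        cell[0] += survived
        cell[1] += 1
    return {key: s / n for key, (s, n) in totals.items()}


def predict(table, sex, pclass, fare, high=0.8, low=0.2):
    """Return 1 or 0 only for confident cells, otherwise None (undecided)."""
    rate = table.get((sex, pclass, fare_bin(fare)))
    if rate is None:
        return None
    if rate > high:
        return 1
    if rate < low:
        return 0
    return None


# Invented toy training rows, for illustration only.
toy_rows = [
    ("female", 1, 80.0, 1), ("female", 1, 95.0, 1),
    ("male", 3, 7.25, 0), ("male", 3, 8.05, 0), ("male", 3, 7.9, 0),
    ("female", 3, 15.0, 1), ("female", 3, 12.0, 0),
]
table = build_survival_table(toy_rows)
print(predict(table, "female", 1, 120.0))  # confident cell
print(predict(table, "female", 3, 14.0))   # between the thresholds
```

Returning `None` for the undecided cells leaves room for later rules to decide those passengers.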

The next step was to predict passengers who meet some very specific conditions in the table:

1) Extremely rich people survived.

2) Females who embarked from C survived, but males who embarked from Q/S died.

3) People whose tickets start with SOTON/A/W died.
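The listed conditions can be expressed as a small rule function. The sketch below is an assumption-laden illustration: the order in which the rules are checked, the dictionary field names, and the use of a fare above 263 as the meaning of "extremely rich" (the cutoff mentioned in the evaluation) are choices made here, not details confirmed for the original code.

```python
def special_case_prediction(passenger, rich_fare=263.0):
    """Return 1 (survived), 0 (died), or None when no special rule applies."""
    # Rule 1: extremely rich people survived.
    if passenger["fare"] > rich_fare:
        return 1
    # Rule 3: tickets starting with SOTON, A, or W died.
    if passenger["ticket"].startswith(("SOTON", "A", "W")):
        return 0
    # Rule 2: females from C survived, males from Q or S died.
    if passenger["sex"] == "female" and passenger["embarked"] == "C":
        return 1
    if passenger["sex"] == "male" and passenger["embarked"] in ("Q", "S"):
        return 0
    return None


examples = [
    {"sex": "male", "embarked": "C", "fare": 512.0, "ticket": "PC 17755"},
    {"sex": "male", "embarked": "S", "fare": 8.05, "ticket": "SOTON/O.Q. 3101"},
    {"sex": "female", "embarked": "S", "fare": 26.0, "ticket": "113803"},
]
for person in examples:
    print(person["ticket"], "->", special_case_prediction(person))
```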

3.1.3. Random forests using random input selection

The simplest random forest with random features is formed by selecting at random, at each node, a small group of input variables to split on. Grow the tree using CART (single trees) methodology to maximum size and do not prune. Denote this procedure by Forest-RI. The size F of the group is fixed. Two values of F were tried. The first used only one randomly selected variable, i.e., F = 1. The second took F to be the first integer less than $\log_2 M + 1$, where M is the number of inputs.

Random input selection can be much faster than either Adaboost or Bagging. A simple analysis shows that the ratio of RI compute time to the compute time of unpruned tree construction using all variables is $F \log_2(N) / M$, where F is the number of variables used in Forest-RI, N is the number of instances, and M is the number of input variables.
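Both quantities are easy to compute directly. The sketch below assumes one particular reading of "the first integer less than log2 M + 1", namely the largest integer strictly below that value; other readings differ only when M is an exact power of two. The function names and the example sizes are hypothetical.

```python
import math


def forest_ri_group_size(n_inputs):
    """Largest integer strictly less than log2(M) + 1 (assumed reading)."""
    return math.ceil(math.log2(n_inputs) + 1) - 1


def ri_time_ratio(group_size, n_instances, n_inputs):
    """Ratio F * log2(N) / M of Forest-RI compute time to full tree construction."""
    return group_size * math.log2(n_instances) / n_inputs


m_inputs = 64        # hypothetical number of input variables
n_instances = 1024   # hypothetical number of training instances

print("F for M = 9:", forest_ri_group_size(9))
print("ratio with F = 1:", ri_time_ratio(1, n_instances, m_inputs))
```

The ratio shows why the speed advantage is largest when there are many input variables relative to the group size F.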

3.1.4. Random forests using linear combinations

If there are only a few inputs, say M, taking F to be an appreciable fraction of M might lead to an increase in strength but higher correlation. Another approach consists of defining more features by taking random linear combinations of a number of the input variables. That is, a feature is generated by specifying L, the number of variables to be combined. At a given node, L variables are randomly selected and added together with coefficients that are uniform random numbers on [-1, 1].

F linear combinations are generated, and then a search is


5. Evaluation

4) People with a family size of 4/5/7/10 died.

Of the three methods, SVM and random forest did not perform as well as the gender-based model. SVM reached around 77% accuracy, random forest around 76%, and the gender-based model around 78%.

3.3 Support Vector Machines [10]

SVM is a supervised learning model with associated

learning algorithms that analyzes data and recognizes

patterns, used for classification and regression analysis.

Given a set of training examples, each marked as

belonging to one of two categories, an SVM training

algorithm builds a model that assigns new examples into

one category or the other, making it a nonprobabilistic binary linear classifier. An SVM model is a

representation of the examples as points in space,

mapped so that the examples of the separate categories

are divided by a clear gap that is as wide as possible.

New examples are then mapped into that same space and

predicted to belong to a category based on which side of

the gap they fall on.

5.1 Random Forest

We tested adding different features to the model and recorded the accuracy after each adjustment. Some features, such as fare, that we expected to affect the prediction did not improve the accuracy significantly.

| Added Feature | Accuracy |
| --- | --- |
| Gender*AgeFill*Fare | 0.75120 |
| Gender*AgeFill | 0.76077 |
| Gender*AgeFill*Pclass | 0.74641 |
| Gender*AgeFill, Gender*Pclass | 0.75598 |
| AgeFill*Pclass*Pclass | 0.73684 |
| Gender*Fare | 0.76077 |

Table 5.1. The accuracy of our model according to the feature added to the model
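The added features in the table are products of existing numeric columns. A minimal sketch of how such an interaction feature could be built is shown below; the helper name and the example values are hypothetical, and the encoding of Gender as 0/1 is an assumption.

```python
def add_interaction(row, names):
    """Return a copy of row with an extra column holding the product of `names`."""
    product = 1.0
    for name in names:
        product *= row[name]
    new_row = dict(row)
    new_row["*".join(names)] = product
    return new_row


# Hypothetical encoded passenger (Gender: 1 = male, 0 = female).
passenger = {"Gender": 1.0, "AgeFill": 22.0, "Pclass": 3.0, "Fare": 7.25}
with_feature = add_interaction(passenger, ["Gender", "AgeFill"])
print(with_feature["Gender*AgeFill"])
```

Repeating a name, as in `["AgeFill", "Pclass", "Pclass"]`, gives the squared term used in one of the rows.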

4. Dataset

The historical data has been split into two groups, a 'training set' and a 'test set'. The original data set was provided by kaggle.com as part of the problem: we had 891 rows of labelled training data, and using those we had to submit survival prediction labels for 418 rows of test data.

The features that the data has are:

| Variable | Description |
| --- | --- |
| survival | Survival (0 = No; 1 = Yes) |
| pclass | Passenger Class (1 = 1st; 2 = 2nd; 3 = 3rd) |
| name | Name |
| sex | Sex |
| age | Age |
| sibsp | Number of Siblings/Spouses Aboard |
| parch | Number of Parents/Children Aboard |
| ticket | Ticket Number |
| fare | Passenger Fare |
| cabin | Cabin |
| embarked | Port of Embarkation (C = Cherbourg; Q = Queenstown; S = Southampton) |

5.2 Improved gender model

| Observation on train sample | Analysis |
| --- | --- |
| People who meet the Fare > 150 condition have a 68.97% chance to survive, > 200 get 70%, > 250 get 77.78%, > 263 get a 100% survival chance. | Richer people are more likely to survive; in this case it is reasonable to set fare > 263 (stands for extremely rich) to survive. |
| Cabin data only accounts for 1/4 of the rows. | Too much missing data, so dropped. |
| Tickets that start with SOTON, A, W have lower than 0.15 survival; PC 17755 were all alive. | Should filter those people and predict died/survived. |
| Name title divided by Mr./Mrs./Miss/Dr./Master/Col/Rev. | Survival rates are all close to 50%. |
| Sex and Embarked are not numbers. | Convert to numbers. |
| Age (741/891) and Embarked (889/891) have missing data. | Fill in missing data; age is filled by the median. |
| Embarking from different ports gives different survival rates. | From C: overall 0.55, female 0.88, male 0.31. From Q: overall 0.39, female 0.75, male 0.07. From S: overall 0.34, female 0.69, male 0.17. |
| Family size affects the survival rate. | Family sizes of 4/5/7/10 have a survival rate lower than 0.2. |


5.4 Formal Evaluation

For the training set, Kaggle provides the outcome ('ground truth') for each passenger. We used this set to build the model that generates predictions for the test set. For each passenger in the test set, we must predict whether or not they survived the sinking (0 for deceased, 1 for survived). Our score is the percentage of passengers we correctly predict.
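The score described here is plain classification accuracy. A minimal sketch, with invented labels and a hypothetical function name:

```python
def accuracy(predicted, actual):
    """Fraction of passengers whose 0/1 survival label was predicted correctly."""
    if not actual or len(predicted) != len(actual):
        raise ValueError("predicted and actual must be non-empty and equally long")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Invented labels for illustration (1 = survived, 0 = deceased).
predicted = [1, 0, 1, 1]
actual = [1, 0, 0, 1]
print(f"accuracy: {accuracy(predicted, actual):.2%}")
```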

The Kaggle leaderboard has a public and a private component. 50% of our predictions for the test set have been randomly assigned to the public leaderboard (the same 50% for all users). Our score on this public portion is what appears on the leaderboard. At the end of the contest, Kaggle will reveal our score on the private 50% of the data, which will determine the final winner.

We plotted ROC curves to measure the performance of

our classifier model.
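For readers unfamiliar with ROC curves, the sketch below shows how the points of such a curve, and the area under it, can be computed from true labels and classifier scores. The labels and scores are invented, the function names are hypothetical, and the code assumes that both classes are present in the labels.

```python
def roc_points(labels, scores):
    """Return (false positive rate, true positive rate) pairs, one per threshold."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    # Lower the threshold step by step; everything scored >= threshold is
    # predicted as "survived".
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def area_under_curve(points):
    """Trapezoidal area under the ROC curve."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Invented example: 1 = survived, scores are predicted survival probabilities.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
curve = roc_points(labels, scores)
print(curve)
print("AUC:", area_under_curve(curve))
```

A curve that hugs the top-left corner (area close to 1) indicates a classifier that ranks survivors above non-survivors.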

Feature extraction is tricky, because each feature has a different effect on the model, and combining features through operations such as multiplication or squaring produces further effects. First of all, we observed every single feature.

Gender is clearly the most important element affecting survival rate, because seven out of ten men died while seven out of ten women survived. Fare also has a large effect in some ranges: when the fare is larger than 263 dollars, everyone who held that ticket survived. We attribute this to rich people receiving some privileges, so predicting rich people as survived is reasonable. But since most fares are between zero and sixty dollars, fare needs further treatment.

The cabin feature may contain some really useful data, but we still had to drop it because three fourths of its values are missing. Age is another feature with missing data, but only some values are missing, so we can fill them in. In this case, we used the median to fill in missing ages. After viewing the histogram of ages, we saw that almost all ages fall in the 20 to 30 bin and that 22 is the most frequent age, so we chose 22 as the median.
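A minimal sketch of median filling is given below. It computes the median from the known ages rather than hard-coding a value; the ages are invented and the function name is hypothetical.

```python
from statistics import median


def fill_missing_ages(ages):
    """Replace missing ages (None) with the median of the known ages."""
    known = [age for age in ages if age is not None]
    fill_value = median(known)
    return [fill_value if age is None else age for age in ages]


# Invented ages for illustration; None marks a missing value.
ages = [22, None, 38, 26, None]
print(fill_missing_ages(ages))
```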

Another important feature is the ticket name. Specifically, as shown above, passengers whose tickets start with SOTON/A/W barely survived. Family size is also a key feature, because we found that big families have lower survival rates.

After the above analysis and the corresponding predictions, we got 78.469% correct predictions on Kaggle.

5.3 Support Vector Machines (SVM)

We used Support Vector Machines with an RBF kernel. After spending some time with Random Forest, we wanted to try out another supervised model for our project. We tried SVM with various combinations of features, and also did feature scaling on Fare. We tried setting the missing values of Age by running a logistic regressor based on 'Embarked', 'Parch', 'SibSp', and 'Pclass' instead of the initial implementation that used the median of all available ages. Unfortunately, the results we got were unsatisfactory, i.e., in the 0.5-0.6 range.

However, when we tried reducing the features, we saw an increase in accuracy. We ended up using Passenger Class, Sex, and Embarkation point, and dropped features like parent/child ratio, age, and fare, which we would never have imagined to be relatively weaker indicators for the model. We got an accuracy of 0.77033, which was where the score peaked for this approach.

Figure: ROC curve for the Random Forest implementation

We tried various approaches to make the data more useful, such as:

1. Using Linear Regression to generate missing

values instead of using median method.

2. We noticed there was a huge irregularity in the

fare parameter, so we performed feature scaling

on that feature.
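The report does not say which scaling method was applied to the fare, so the sketch below uses min-max scaling purely as an assumed example; the fares and the function name are invented for illustration.

```python
def min_max_scale(values):
    """Rescale values linearly to [0, 1] (assumed method, for illustration)."""
    lowest, highest = min(values), max(values)
    if highest == lowest:
        # All values identical: map everything to 0.0 to avoid dividing by zero.
        return [0.0 for _ in values]
    return [(value - lowest) / (highest - lowest) for value in values]


# Invented fares spanning a wide range, as the fare column does.
fares = [0.0, 128.0, 512.0]
print(min_max_scale(fares))
```

Scaling matters for an RBF-kernel SVM because the kernel depends on distances, so an unscaled feature with a large range would dominate the others.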

Also, when we were trying out new features, we had a hard time figuring out why we were not achieving better accuracy than before, so we plotted learning curves to understand the bias-variance tradeoff. This is how we got the intuition to reduce the number of features for SVM.


To summarize, our results compared to approaches

people have followed before are:

| Method | Vyas, Zheng, Li | Lam, Tang [5] | Yang [11] |
| --- | --- | --- | --- |
| Modified Gender Based | 0.78469 | X | X |
| Random Forest | 0.76077 | X | 0.8134 |
| SVM | 0.77033 | 0.7799 | 0.7799 |
| Decision Tree | X | 0.7943 | 0.7946 |

Table 5.1: Comparison of results.

Figure: SVM with all the features that were used for

Random Forest. (failure)

6. Conclusion

According to our research, our best result reaches a higher accuracy, an improvement of about 2% over the results of the other models. In addition, during our study we found that using more features in the models does not necessarily give a better result.

7. Team roles

There are 3 members, Kunal Vyas, Zeshi Zheng, and Lin

Li, in this team working together to use different

machine learning methods to do the prediction:

Kunal Vyas - Problem analysis, SVM/random forest code implementation, dataset analysis, visualization, testing and debugging, writing.

Zeshi Zheng - Dataset analysis, improved gender model/random forest code implementations, visualization, algorithm, testing and debugging, writing.

Lin Li - Problem analysis, random forest code implementations, visualization, algorithm development, writing.

Figure: SVM with reduced features (best result)

Some things that we think would improve the performance of the model are:

a) Dimensionality reduction. We think this would be a useful addition to the model. That being said, we don't expect more than marginal improvements to the present model, since our data is small and simplified.

b) Naive Bayes and decision trees. These are the next most popular approaches that have been used to solve the problem, and they have given good results.

c) The number of ways we can experiment with the data is endless, so working more with it should give us better results. One idea that comes to mind is using keywords like Dr, Col, etc. from the Name field, since these would be influential and respectable people.

| Filename | Description | Author |
| --- | --- | --- |
| TitanicIter1.py (258 lines) | Main program, gender part | Zeshi Zheng |
| TitanicIter2.py (200 lines) | Fare part | Zeshi Zheng |
| TitanicIter3.py (222 lines) | Class part | Zeshi Zheng |
| svm.py (230 lines) | SVM implementation | Kunal Vyas |
| Randomforest.py (220 lines) | Experiments on the Random forest algorithm | Kunal Vyas |
| Titanic6NewFeaturesRF.py (146 lines) | Using random forest algorithm | Lin Li, Zeshi Zheng |

Table 1. Summary of Code

References

[1] http://www.anesi.com/titanic.htm

[2] https://www.kaggle.com/c/titanic

[3] https://seelio.com/w/dgd/titanic-machine-learningfrom-disaster

[4] https://rstudio-pubsstatic.s3.amazonaws.com/25401_74410a27a4cf41a3b257e42c50927d35.html

[5] http://cs229.stanford.edu/proj2012/LamTangTitanicMachineLearningFromDisaster.pdf

[6] A. Ng. CS229 Notes. Stanford University, 2012.

[7] Cortes, Corinna; and Vapnik, Vladimir N.; "Support-Vector Networks", Machine Learning, 20, 1995.

[8] Stuart J. Russell, Peter Norvig, Artificial Intelligence: A Modern Approach, Pearson Education, 2003, pp. 697-702.

[9] Breiman, L. 2001a. Random forests. Machine Learning 45:5-32.

[10] http://en.wikipedia.org/wiki/Support_vector_machine

[11] http://murphy.wot.eecs.northwestern.edu/~xto633/xiaodong/fullreport.pdf

