The Map of X-Risk-Preventing Organizations, People and Internet Resources

Uploaded by Turchin Alexei

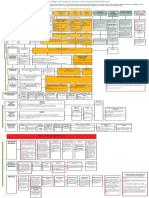

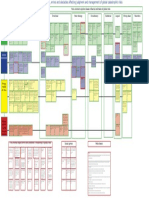

Organizations, sites and people involved in x-risk prevention

Known very well, and a large amount of work is done

MIRI (formerly the Singularity Institute)

E. Yudkowsky

link

Still exists!

Were famous in the 1970s when they produced The Limits to Growth. Link

OpenAI

Oxford Martin Programme

CSER

has been created, but not many people know them

Foundational Research Institute

Global Catastrophic Risk Institute,

Seth Baum

Phil Torres

Interesting articles by its main author, focusing on existential terrorism and religion

link

Interesting newsletter, many articles in scientific journals

link

Skoll Global Threats Fund

Global Challenges

X-risks net

Leverage Research

Alexei Turchin

Creating a full database on x-risks and a prevention plan

Elon Musk

Wants AI safety through OpenAI and humans on Mars as a backup plan

the site is almost empty now

link

link

The Center for International Security and Cooperation is Stanford University's hub for researchers tackling some of the world's most pressing security and international cooperation problems

Nuclear, cybersecurity, bio, antiterrorism, link

To safeguard humanity from global threats

Climate, water security, pandemics, nuclear proliferation, link

Currently, our research focuses on reducing risks of dystopian futures in the context of emerging technologies.

Interesting work on AI safety

Convergence

Lifeboat Foundation

Justin Shovelain

Collective think tank focused on mathematical modeling of x-risks

link

Stimson Center

The Stimson Center is a nonpartisan policy research center working to solve the world's greatest threats to security and prosperity.

Non-proliferation

link

Saving Humanity

Very large scientific board which doesn't actually do anything, but some useful discussion goes on in its mailing list

site

Public figures

Arctic news

Sam Carana

Irreversible global warming because of methane hydrate eruption

link

The Lawrence Livermore National Laboratory has a division called the Global Security Principal Directorate, which researches issues such as biosecurity and counter-terrorism on behalf of the government. Link

Forum about risks of flu pandemic

Supports the smartest minds and most effective organizations to reduce nuclear stockpiles, prevent new nuclear states, and increase global security

Link

Impact risks

Nano risks

Defusing nuclear threat

NASA

Foresight Institute

link

Has its own foundation and vision of global risks

link

link

Zoltan Istvan

Stephen Hawking

Warned about risks of aliens and AI

Writers

has its Emerging Pandemic Threats Program, which aims to prevent and contain naturally generated pandemics at their source.[129]

Ploughshares Fund

Bill Gates

Investor in x-risk-related projects, wiki

Invested in MIRI

includes a division called the Global Alert and Response (GAR), which monitors and responds to global epidemic crises. GAR helps member states with training and coordination of response to epidemics. link

flutrackers.com

Small one-person organisation without any actual work

link

Jaan Tallinn

Peter Thiel

Bill Joy

Wrote a famous article but now seems to have lost interest

World Health Organization (WHO)

The United States Agency for International Development (USAID)

from Homo Sapiens

mailing list, a good one

Sam Altman

Y Combinator, co-founded OpenAI

Intergovernmental Panel on Climate Change

Nuclear Threat Initiative, link

CISAC

link

The Global Challenges Foundation works to raise awareness of global catastrophic risks. It is primarily focused on climate change, other environmental degradation, and politically motivated violence, as well as how these threats are linked to poverty and rapid population growth, link

and important figures

GCRI

Famous Doomsday Clock

link

Bio-risks

IPCC

Cambridge Centre for the Study of Existential Risk

Martin Rees, link

on the Impacts of Future Technology, link

link

Laszlo Szombatfalvy

Investors

X-risks Institute

Bulletin of the Atomic Scientists

Club of Rome

Future of Humanity Institute

Oxford, link

Nick Bostrom

Elon Musk

wiki

Global Priorities Project

Nuclear

Future of Life Institute

Elon Musk

link

Effective altruism

EA forum

Created the Global Catastrophic Risk report 2016

Collaborates with the UK government

Dr. Toby Ord is a member

Connected with the EA movement

FHI

FLI

EA

Large and interesting research

General x-risks

AI risks

Size and level of influence

Global warming

Presidential candidate from the Transhumanist Party

Wrote about x-risks

Vernor Vinge

Greg Egan

writer

Permutation City

writer, created the Singularity idea

David Brin

John Barnes

writer, Existence

Mother of Storms

Holocene Impact Working Group

Estimates risks of recent impacts

link

Scientists and researchers

Open places for discussion

A. Sandberg

Adrian Kent

Participated in FHI and co-authored papers

LHC risks

Toby Ord

site

Existential Hope

Milan Ćirković

Stevenson probe, Anthropic shadow

Fermi paradox

Site

Bruce Tonn

Editor and writer

link

Max Tegmark

Wrote articles together with Bostrom

Norwegian transhumanists

LessWrong

Existential risks (Adam Ford)

Global Catastrophic Risks Research and Discussion (Evan Gaensbauer)

Global catastrophic risks

Stop existential risks

Robin Hanson

Blog

Societal collapse risks

Katja Grace

Willard Wells

R. Freitas

Fermi paradox and Doomsday Argument (DA)

blog

AI impacts

Author of Apocalypse When?

and prevention plan

Nanotech risks

X-risks on Reddit

ExistentialRisk

Control problem

Resource depletion risks

David Denkenberger

agricultural risks

Large group of people working on AI safety, including, but not limited to:

Steve Omohundro

Luke Muehlhauser

Stuart Armstrong

Roman Yampolskiy

Nate Soares

Vladimir Nesov

Kaj Sotala

Benja Fallenstein

Riva Melissa Tez

Jason Gaverick Matheny, wiki

Andrew Critch, blog

Paul Christiano

Carl Shulman

Anna Salamon

Dennis Meadows

Alexander Kononov

Coined the term "indestructibility of civilization"

R. Carrigan

Aaron Dar

Bill Napier

Risks of SETI

Risks of supernovas

Risks of dark comets

Longecity subforum

Wiki resources

link

LW-wiki

EA forum

link

Intelligent agents forum

Technical discussion on AI safety

link

Discussion in comments

IEET

Futureoflife

You might also like

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Resurrection of The Dead MapDocument1 pageResurrection of The Dead MapTurchin Alexei100% (1)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- Future SexDocument1 pageFuture SexTurchin AlexeiNo ratings yet

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- The Map of Agents Which May Create X-RisksDocument1 pageThe Map of Agents Which May Create X-RisksTurchin Alexei100% (1)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- The Map of Asteroids Risks and DefenceDocument1 pageThe Map of Asteroids Risks and DefenceTurchin AlexeiNo ratings yet

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

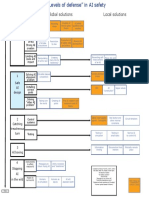

- AI Safety LevelsDocument1 pageAI Safety LevelsTurchin AlexeiNo ratings yet

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- AI For Immortality MapDocument3 pagesAI For Immortality MapTurchin AlexeiNo ratings yet

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- The Map of Shelters and Refuges From Global Risks (Plan B of X-Risks Prevention)Document1 pageThe Map of Shelters and Refuges From Global Risks (Plan B of X-Risks Prevention)Turchin AlexeiNo ratings yet

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- The Map of Natural Global Catastrophic RisksDocument1 pageThe Map of Natural Global Catastrophic RisksTurchin AlexeiNo ratings yet

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- The Map of BiasesDocument1 pageThe Map of BiasesTurchin AlexeiNo ratings yet

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- The Map of Ideas About IdentityDocument1 pageThe Map of Ideas About IdentityTurchin AlexeiNo ratings yet

- The Map of MontenegroDocument1 pageThe Map of MontenegroTurchin AlexeiNo ratings yet

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The Map of Ideas of Global Warming PreventionDocument1 pageThe Map of Ideas of Global Warming PreventionTurchin AlexeiNo ratings yet

- The Map of Ideas About P-ZombiesDocument1 pageThe Map of Ideas About P-ZombiesTurchin AlexeiNo ratings yet

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- The Map of Ideas How Universe Appeared From NothingDocument1 pageThe Map of Ideas How Universe Appeared From NothingTurchin Alexei100% (1)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (73)

- Life Extension MapDocument1 pageLife Extension MapTurchin Alexei100% (1)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- The Map of Risks of AliensDocument1 pageThe Map of Risks of AliensTurchin AlexeiNo ratings yet

- Bio Risk MapDocument1 pageBio Risk MapTurchin AlexeiNo ratings yet

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

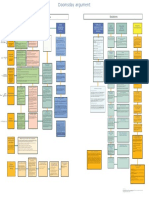

- Doomsday Argument MapDocument1 pageDoomsday Argument MapTurchin AlexeiNo ratings yet

- The Map of Methods of OptimisationDocument1 pageThe Map of Methods of OptimisationTurchin AlexeiNo ratings yet

- Global Catastrophic Risks Connected With Nuclear Weapons and Nuclear EnergyDocument5 pagesGlobal Catastrophic Risks Connected With Nuclear Weapons and Nuclear EnergyTurchin AlexeiNo ratings yet

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- Simulation MapDocument1 pageSimulation MapTurchin AlexeiNo ratings yet

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- Double Scenarios of A Global Catastrophe.Document1 pageDouble Scenarios of A Global Catastrophe.Turchin AlexeiNo ratings yet

- (Plan C From The Immortality Roadmap) Theory Practical StepsDocument1 page(Plan C From The Immortality Roadmap) Theory Practical StepsTurchin Alexei100% (1)

- The Roadmap To Personal ImmortalityDocument1 pageThe Roadmap To Personal ImmortalityTurchin Alexei100% (1)

- Typology of Human Extinction RisksDocument1 pageTypology of Human Extinction RisksTurchin AlexeiNo ratings yet

- AI Failures Modes and LevelsDocument1 pageAI Failures Modes and LevelsTurchin AlexeiNo ratings yet

- Russian Naive and Outsider Art MapDocument1 pageRussian Naive and Outsider Art MapTurchin AlexeiNo ratings yet

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- G4 Decimals #3Document2 pagesG4 Decimals #3Simply SaltNo ratings yet

- Wilo-Star RS, RSD: Pioneering For YouDocument136 pagesWilo-Star RS, RSD: Pioneering For YouKirill KNo ratings yet

- The Role of Underground Hydrogen Storage in Europe 1706118289Document47 pagesThe Role of Underground Hydrogen Storage in Europe 1706118289guillermo peralesNo ratings yet

- Curriculum Vitae Name: Sex: Marital Status: Date of Birth: Address: Telephone: Email Address: ObjectiveDocument2 pagesCurriculum Vitae Name: Sex: Marital Status: Date of Birth: Address: Telephone: Email Address: ObjectiveCandice LynchNo ratings yet

- 新托福百日百句百篇(第一册)Document274 pages新托福百日百句百篇(第一册)张子楚No ratings yet

- Sefirot - The GuidebookDocument28 pagesSefirot - The GuidebookOscarC2No ratings yet

- Theories On Human DevelopmentDocument42 pagesTheories On Human DevelopmentHANS CHRISTIAN DELOS REYESNo ratings yet

- Basal Energy ExpenditureDocument1 pageBasal Energy ExpenditureSucipto HartonoNo ratings yet

- 2015.31463.gazetteer of The Hazara District 1907 TextDocument458 pages2015.31463.gazetteer of The Hazara District 1907 TextMustar OulakhNo ratings yet

- Chapter 2 Preliminaries WorkDocument32 pagesChapter 2 Preliminaries WorkAfeeq Rosli100% (1)

- Faculty Science and Techonlogy: Matriculation No: Identity Card No.: Telephone No.: E-Mail: Learning CentreDocument21 pagesFaculty Science and Techonlogy: Matriculation No: Identity Card No.: Telephone No.: E-Mail: Learning CentreNatasha YusofNo ratings yet

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- 0 BibliografíaDocument3 pages0 BibliografíaDaniel Espinosa RodriguezNo ratings yet

- 11galilean RelativityDocument5 pages11galilean Relativitycastillo61No ratings yet

- Simon E3 CatalogueDocument16 pagesSimon E3 CataloguemarketingsneNo ratings yet

- CS-114 Fundamentals of Computer Programming: Control Statements - IIIDocument34 pagesCS-114 Fundamentals of Computer Programming: Control Statements - IIIAbdullah RiasatNo ratings yet

- Lesson From Seveso ItalyDocument48 pagesLesson From Seveso ItalyBilal ZamanNo ratings yet

- Coding & Decoding (Presentation)Document67 pagesCoding & Decoding (Presentation)SANSKAR GUPTANo ratings yet

- Introduction Computational Ingineering MatlabDocument439 pagesIntroduction Computational Ingineering MatlabJose CapablancaNo ratings yet

- Efficacy of Ayurvedic Interventions in Hypothyroidism: A Comprehensive ReviewDocument7 pagesEfficacy of Ayurvedic Interventions in Hypothyroidism: A Comprehensive ReviewMikel MillerNo ratings yet

- Material Specification For Preformed Plastic Pavement Marking TapeDocument10 pagesMaterial Specification For Preformed Plastic Pavement Marking TapeTony ParkNo ratings yet

- Plant ModuleDocument26 pagesPlant ModuleHitesh JainNo ratings yet

- Block 7Document113 pagesBlock 7KK ArticlesNo ratings yet

- Mobile Phones and RadiationDocument6 pagesMobile Phones and RadiationGehanShabanNo ratings yet

- 178 Capstone: - DigitalDocument1 page178 Capstone: - DigitalAbdul Nafi SarwariNo ratings yet

- 3.4 Analytical Exposition and 3.5 Passive Voice - SELF EALUATION 3.4 Analytical ExpositionDocument2 pages3.4 Analytical Exposition and 3.5 Passive Voice - SELF EALUATION 3.4 Analytical Expositionsugar gliderNo ratings yet

- Worm Gear OilDocument2 pagesWorm Gear OilDavidNo ratings yet

- Difference Between Face To Face Communication and Telephone Conversation (With Table)Document5 pagesDifference Between Face To Face Communication and Telephone Conversation (With Table)Beki Ye Biriye LijNo ratings yet

- Agathias The HistoriesDocument101 pagesAgathias The HistoriesFrançois88% (24)

- Nygård 2019Document9 pagesNygård 2019Wágner B SilvaNo ratings yet

- Jmse 10 01965 v3Document26 pagesJmse 10 01965 v3waa123sNo ratings yet