Taylor Associates ApS

The QRAQ Project

Quality of Risk Assessment for

Process Plant

Volume 1

Introduction

Version 1 Issue 1

January 2005

Taylor Associates ApS

© Taylor Associates ApS 2005 1

QRAQ 1 The quality of risk analysis

The QRAQ project

Quality of Risk Assessment for Process Plant

Vol 1 Introduction

Taylor Associates ApS,

Prunusvej 39,

3450 Allerød,

Denmark

Issue Date Author Approval Release

V1I1 Jan 05

Preface

This report is the first of several volumes intended to provide a sound basis for

risk assessment calculations to be used for land use planning and emergency

planning. Studies by others have shown that risk analysis results can vary by as

much as a factor 10, even when high quality methods are applied and can vary

by as much as a factor 100 in benchmark studies. This study is intended to

determine why this is so, and provide guidance on choice of method and

choice of parameters.

Updating history

Issue Date Affected Change

Contents

1. The Quality of Risk Analysis

1.1 Some successes of QRA

1.2 Some problems with the state of the art in QRA

1.3 Problems with existing methodologies, and some solutions

2. Quality Criteria for Risk Assessment

3. The QRAQ project

4. Earlier work

References and Project Bibliography

Studies carried out as part of the QRA Quality project

1. The Quality of Risk Analysis

Quantitative risk assessment has become an important tool for process plant design, approval,

and for land use planning around the world. The techniques for such analysis have risen in

sophistication since the early 1970’s, and the methods are now built into the regulations for

health and safety approval and land use planning for a large number of countries. Most major

oil companies and several major chemical companies have adopted quantitative risk

assessment as a key part of their design procedures. Nevertheless there still exists much

scepticism about the results of QRA studies. As will be seen, much of this scepticism is

justified. It is still possible for two professional analysis teams to arrive at very

different results, even when following nominally identical methodologies.

At the outset of the author’s own use of QRA, starting in 1972, the dangers of using risk

assessment were apparent. In particular, the possibility of overlooking a source of risk, and

thereby contributing to causing an accident, was an important motivation. The question of

choice of methodology became acute when the author, together with O. Platz, was requested

to make a comparative risk analysis for all of the Danish plants falling under the major

hazards directive (Seveso I directive). Concerns about the possible oversights and errors have

led to a series of studies over a period of 30 years, the results of which are summarised in

these volumes.

1.1 Some successes of QRA

At its best, quantitative risk assessment can produce really satisfying results. Practical proof

of the value, in the form of lives actually saved, is in fact rarely experienced. A few cases

from the author’s own experience can be given though:

- A group of persons at a ferry terminal saved by being in a safe haven shelter, when

a chlorine release occurred. The release was predicted after hazard identification,

and the cost of the safe haven justified by QRA. In all 12 lives were saved.

- A reduction in the number of serious accidents at a chemical waste treatment plant,

from one per year to one in twenty years, after an extensive risk assessment and

remediation project. The reduction cannot be proved to have arisen from the QRA,

but the correlation is highly suggestive.

- Justification of a pipeline to transport LPG after a risk assessment, with an LPG

tank truck BLEVE occurring during the pipeline construction period, fortunately

outside the villages the pipeline was intended to protect.

Less clear, but still quite a good demonstration of the value of the techniques are cases in

which clear and obvious improvements in safety have been justified by means of risk

assessment. Examples from my own country are the introduction of advanced passive fire

protection for LPG storage tanks, and the replacement of a large cryogenic ammonia storage

tank in a city centre by a cryogenic ammonia pipeline.

QRA is especially useful where large impact hazards exist, which require large investments in

safety equipment or other safety measures. Out of about 100 land use planning projects

undertaken, over half were “difficult cases”, where fairly large investments in safety needed to

be justified. (The remainder resulted in simpler decisions, such as safety distances which

were well within the feasible range, and projects where large reductions in risk were achieved

with fairly simple and inexpensive means. None produced results where no risk reduction

was necessary.) Safety decisions costing over a million dollars are today only rarely made

without the use of some form of risk assessment, nor should they be.

1.2 Some problems with the state of the art in QRA

Despite the successes, the current state of the art in risk assessment is far from problem free.

Risk analysis has long been an art, with wide variations in results arrived at by different

practitioners. As the saying goes, it requires a strong moral character to sell elastic by the

meter. Unfortunately, “favourably weighted” results are produced not only by analyses which

are less than honest. Favourable results can also be produced by analysts who are too

inexperienced to recognise important hazards, or insufficiently competent to recognise that

standard methods are in a particular case inappropriate. Of course such problems can in some

cases give needlessly unfavourable results in a land use planning project, as well as giving

unjustifiably optimistic ones. As an example of this, two of the standard gas release

dispersion programs made available by well respected authorities lead to differences in safety

zone distances of over a factor of 2. These differences apply even when the programs are used

correctly. It is still apparent that the state of the art is very dependent on the artist.

In order to be useful in the legal systems of land use planning, the methods need to be:

- Reproducible, so that one set of analysis results is not subject to immediate

challenge by counter expertise from an alternative set of experts.

- Reasonably reflective of actual risk conditions, so that analyses do not result in

counterintuitive or directly and obviously inappropriate recommendations. A

certain factor of uncertainty is acceptable, but not when it leads to a reversal of

ranking orders of preferred planning solutions, and definitely not when it leads to

safety planning distances being described as “between one and three kilometres”.

- Transparent, so that ordinary persons can see that the analyses are reasonable, and

detect the cases where the analysis does not reflect the conditions on the ground.

The needs for reproducibility can be met by providing a fixed algorithm for the analyses, as

for example in the Dutch Yellow, Green and Purple Book system (ref. 2), the RISKAT

analysis method (ref. 3) or the collection of methods given in the CCPS guide (ref. 4). The

desire for transparency can be met by supplementing the algorithm by means of a good

pedagogic reporting practice, and by illustrating the analyses with examples of earlier

accidents (there are unfortunately plenty of them). However, the use of fixed algorithms

means at the same time a fairly rigid system, which can lead to counter intuitive results,

especially when the project lies outside the originally intended area of application of the

algorithm and data base.

1.3 Problems with existing methodologies, and some solutions

Lacunae

Some types of hazards are not covered in existing standard risk assessment methodologies

(e.g. ref. 7, 14, 32). Examples are:

- Explosions in chemical reactors

- Explosions in sewers

- Splashing and spray releases of volatile liquids

- Large continuing flows from pipelines

- Fire induced tank explosions (this type of incident is not even described in the

available text books or handbooks, but there are many examples in the accident

data bases)

- Toxic smoke

- Lagoon wall collapse

- Toxic dust releases

Examples of major accidents which have occurred both within and outside the scope of

standard risk assessment methodologies are given in Volume 4. The solution to this problem

is fairly straightforward – add the problem types to the required list of accident types to be

considered in land use planning assessments.

Relevance of frequency data

The release frequency values available in data bases or standard references have been

collected in the best fashion possible at the time when the methods were established. The

accuracy of the data, though, is less important than the question of appropriateness of the data

in a specific case. Some examples can be given of just how important this question can be:

- Risk assessments for road transport of hazardous goods very often refer to the

authoritative study carried out on behalf of the UK HSC (ref.5). Most of the

frequency values in this report are based on collections of data which are directly

relevant to British hazardous goods transport and are well supported by data from

the actual systems concerned. Just one number in the report has a doubtful

pedigree, namely that for road transport pressurised tank truck accidents. The

value given was originally derived from US LPG transport data, and adapted to

apply to British transport, on one specially chosen route, using unhardened carbon

steel tanks. The adjustment from the US data reduced frequencies by a factor of

400 (see ref. 6 for similar data). When a full set of data were investigated, in

connection with a particular study, it was found that road tanker accident

frequencies with large releases could be a factor 1000 higher, depending on traffic

and road type, number of rail crossings, and on use of thin hardened steel tanks.

The question here is not one of uncertainty – most of the data sets compared were

both extensive and relatively free of confounding factors. The question is one of

use of appropriate data.

- Calculations were made for the frequency of large oil releases from piping in a

refinery. A fairly good, and well supported, value for piping failure rates is 30 × 10⁻⁶

per metre-year. (Literature values vary by a factor of up to 20 below this. The value

given in the Rijnmond study, ref. 2, for example, is 5 × 10⁻⁶ per metre-year.) When

this rate was applied to a refinery, a value of 6 × 10⁻² major releases from piping per

year was calculated. The company pointed out that this value is very optimistic,

and that they had 2 major releases in 10 years. Investigation showed that the two

releases arose due to an erroneous delivery of steel elbows, of the wrong quality,

for a highly loaded pump. The question then arises – to what extent are problems

such as design error, wrong choice of steel, poor pipe support etc. included into

the data bases used for risk calculations? Certainly not sufficiently to cover this

kind of case.
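
The arithmetic behind the refinery example can be sketched as follows. This is only an illustration: it assumes that major releases follow a Poisson process at the calculated rate, which the report does not state, and the piping length is not given in the text but back-calculated from the two quoted figures.

```python
import math

# Figures quoted in the text.
FAILURE_RATE_PER_M_YEAR = 30e-6      # generic piping failure rate
PREDICTED_RELEASES_PER_YEAR = 6e-2   # value calculated for the refinery
OBSERVED_RELEASES = 2                # reported by the company
OBSERVATION_YEARS = 10

# Piping length implied by the two quoted figures (derived, not stated in the text).
implied_length_m = PREDICTED_RELEASES_PER_YEAR / FAILURE_RATE_PER_M_YEAR

# Expected number of major releases over the observation period.
expected_releases = PREDICTED_RELEASES_PER_YEAR * OBSERVATION_YEARS

# Ratio of the observed release rate to the predicted one.
observed_rate = OBSERVED_RELEASES / OBSERVATION_YEARS
underprediction = observed_rate / PREDICTED_RELEASES_PER_YEAR


def poisson_prob_at_least(k, mean):
    """P(N >= k) for a Poisson-distributed count N with the given mean."""
    below = sum(math.exp(-mean) * mean**i / math.factorial(i) for i in range(k))
    return 1.0 - below


prob_two_or_more = poisson_prob_at_least(OBSERVED_RELEASES, expected_releases)

print(f"implied piping length: {implied_length_m:.0f} m")
print(f"expected releases in {OBSERVATION_YEARS} years: {expected_releases:.2f}")
print(f"observed / predicted rate: {underprediction:.1f}")
print(f"P(at least {OBSERVED_RELEASES} releases): {prob_two_or_more:.3f}")
```

Under these assumptions the observed rate is a little over three times the predicted one, and two or more releases in ten years would be expected in roughly one case in eight. The generic rate is therefore not flatly contradicted by the plant record, but it gives no hint of the specific cause (the wrong steel quality) that produced both releases.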

Multi company sites

One problem which occurs quite often in land use planning is that in industrial areas, there

may be several companies, each contributing to risk, with their risk “footprints” overlapping.

Should each company be allowed to contribute to the maximum of the risk acceptance limit?

In this case the individual risk for neighbours could grow, in some practical cases by as much

as 10 times that set as the original limit. Or should there be some kind of “risk rationing” in

an industrial area? In this case, should a very small formulating and repackaging company be

allowed to have the same size of risk footprint as a large refinery?
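
The two options can be compared with a minimal sketch. The acceptance limit of 10⁻⁶ per year and the count of ten companies are hypothetical values chosen for illustration, and adding the contributions directly is a rare-event approximation that holds only while each contribution is small.

```python
RISK_LIMIT_PER_YEAR = 1e-6   # hypothetical individual risk acceptance limit
N_COMPANIES = 10             # hypothetical number of overlapping footprints


def cumulative_risk(contributions):
    """Individual risk at one location as the sum of small contributions."""
    return sum(contributions)


def equal_ration(limit, n_companies):
    """Per-company allowance if the limit is shared equally ("risk rationing")."""
    return limit / n_companies


# Option 1: every company is allowed to use the full limit.
each_at_limit = [RISK_LIMIT_PER_YEAR] * N_COMPANIES
total_unrationed = cumulative_risk(each_at_limit)
exceedance = total_unrationed / RISK_LIMIT_PER_YEAR

# Option 2: the limit is rationed equally between the companies.
allowance = equal_ration(RISK_LIMIT_PER_YEAR, N_COMPANIES)
total_rationed = cumulative_risk([allowance] * N_COMPANIES)

print(f"unrationed total: {total_unrationed:.1e} per year ({exceedance:.0f} x limit)")
print(f"rationed allowance per company: {allowance:.1e} per year")
print(f"rationed total: {total_rationed:.1e} per year")
```

Equal rationing is only one possible rule. It treats the small repackaging company and the large refinery alike, which is exactly the question raised above.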

Safety management

As a final issue, consider the impact of safety management on risk. Poor safety management

can increase risk by factors of many orders of magnitude. As an example, in one plant the

maintenance manager was reluctant to have anything to do with risk analysis, and also lacked

a good deal of knowledge about safety systems design. Several valves introduced as a result

of hazops were removed from the plant, to avoid the need for maintenance. As a result, an

explosion occurred just five years after plant commissioning. The original calculated

frequency of such explosions was 4 × 10⁻⁵. The direct cause was lack of the valves, the root

cause lack of knowledge and poor safety attitude. No risk assessment has any validity if

problems of this kind exist.
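
The size of the discrepancy in this example can be made concrete with a short calculation. It assumes that the calculated frequency is per year and constant in time (the text gives no unit), and it treats a single explosion in five years as a very crude estimate of the actual rate.

```python
import math

CALCULATED_FREQUENCY = 4e-5   # from the text; "per year" is an assumption
YEARS_TO_EXPLOSION = 5        # explosion occurred five years after commissioning


def prob_event_within(rate_per_year, years):
    """Probability of at least one event in the period, for a constant rate."""
    return 1.0 - math.exp(-rate_per_year * years)


# Chance of an explosion within five years if the original analysis had held.
p_as_designed = prob_event_within(CALCULATED_FREQUENCY, YEARS_TO_EXPLOSION)

# Crude point estimate of the actual rate from one event in five years.
crude_observed_rate = 1 / YEARS_TO_EXPLOSION
implied_increase = crude_observed_rate / CALCULATED_FREQUENCY

print(f"P(explosion within {YEARS_TO_EXPLOSION} years, as designed): {p_as_designed:.1e}")
print(f"crude observed rate: {crude_observed_rate:.1f} per year")
print(f"implied increase: about {implied_increase:.0f} times "
      f"({math.log10(implied_increase):.1f} orders of magnitude)")
```

With the valves in place, an explosion this early would have had a probability of about 2 in 10,000. One event is a weak basis for a rate estimate, but the gap of more than three orders of magnitude is consistent with the point made in the text.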

In order to take this kind of dependency into account, some groups have introduced a

“management factor” into the risk assessment calculation (ref. 6, 7). Whether these factors

can cover the full range of variation seen around the world can be doubted, but at least the

problem is acknowledged, and some weighting given to it. Others have argued that the

problems of poor safety management should be solved, rather than calculated. From study of

accident records from the US RMP data base it appears though that safety management issues

dominate the pattern of risk, even for plants in the USA, where plants have been subject to US

OSHA/EPA regulations on safety management for ten years. (see fig 1). One could perhaps,

on the basis of the data, take standard equipment release rates and multiply by a factor 3, to

ensure that safety management is taken into account. This approach though overlooks

the wide swings in both engineering design and safety management standards which exist, as

will be seen below.

[Figure 1: bar chart titled “Cause percentages for offsite anhydrous ammonia releases”. Cause categories: Other, Management, Weather, Materials, Design, Maintenance, Bypassing, Procedures, Human error, Equipment. Percentage axis from 0 to 35.]

Figure 1. Cause profile for anhydrous ammonia releases.

The “Other” category covers loss of power and start up faults.

Insensitivity to the standard of safety engineering

One problem with all of the currently published risk assessment methodologies is that they do

not take into account the efforts made by plant engineers in reducing risks, except in a few

highly standardised areas such as fitting of emergency shutdown valves, or mounding of LPG

vessels. Current published methodologies do not provide methods which allow for high

quality piping, for risk based inspection(!), for high states of readiness in fire fighting, for

fixed fire protection systems, for water curtains, and many other small details. It is hard to

describe how frustrating engineers find this. Trying to improve safety, and then to be given no

credit for the result, results in a very negative attitude. One could ignore this frustration in

regulation, of course, but the really bad result is that companies direct their attention

elsewhere, often to less effective methods of risk reduction for which they can be given

credit.

At the worst, this can lead to an increase in risk. An example is the insistence of some

methodologies on giving credit only for passive safety measures. The US RMP guidance on

offsite consequence assessment, for example, bypasses this issue in its worst case

calculations, by requiring scenarios with total release of vessel inventory within 10 minutes.

This ignores the fact that a good modern ESD system, designed and maintained to SIL 2

standards, reduces risk by two orders of magnitude. Even worse, it ignores the fact that

reducing piping diameter by a factor of two reduces hazard distances typically also by a factor

of two, for nearly all realistic scenarios having effects outside the plant.
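
A rough scaling argument shows why both effects matter. The sketch below rests on two assumptions that are not taken from the report: that the release rate from a full bore rupture scales with the pipe cross section (diameter squared), and that the hazard distance scales with roughly the square root of the release rate. Real dispersion models give exponents that depend on the substance, the weather and the model, so the exponent is left as a parameter.

```python
SIL2_RISK_REDUCTION = 100.0   # "two orders of magnitude", as stated in the text


def release_rate_ratio(diameter_ratio):
    """Release rate relative to the base case, assuming rate ~ diameter**2."""
    return diameter_ratio ** 2


def hazard_distance_ratio(diameter_ratio, distance_exponent=0.5):
    """Hazard distance relative to the base case.

    Assumes distance ~ release_rate**distance_exponent; the default of 0.5
    is an illustrative assumption, not a value from the report.
    """
    return release_rate_ratio(diameter_ratio) ** distance_exponent


def frequency_with_esd(base_frequency, risk_reduction=SIL2_RISK_REDUCTION):
    """Frequency of the unisolated release when an ESD system is credited."""
    return base_frequency / risk_reduction


halved = 0.5   # piping diameter reduced by a factor of two
print(f"release rate ratio: {release_rate_ratio(halved):.2f}")
print(f"hazard distance ratio: {hazard_distance_ratio(halved):.2f}")
print(f"frequency with ESD credited: {frequency_with_esd(1e-4):.1e} per year")
```

With these assumptions, halving the diameter quarters the release rate and halves the hazard distance, in line with the rule of thumb quoted above. A worst case rule that fixes the release at the full inventory in ten minutes gives credit for neither the smaller pipe nor the shutdown system.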

It is highly desirable to have methodologies which encourage good safety engineering.

2. Quality Criteria for Risk Assessment

The prime requirement for a risk assessment is that it should be repeatable. That is, at least if

the same analyst, using the same data, makes a new analysis, the results should be the same.

Ideally, if two analysts make the same analysis using the same procedure, the results will be

very similar.

Unfortunately, it is rare that such repeatability can be achieved. The reason is that there is a

very large number of assumptions involved in any risk analysis, from the basic data

concerning vessel contents (Is the tank always full, or most often nearly empty?) through to

details such as the type of soil and its water content, important for conductivity calculations.

While all critical assumptions could in principle be recorded and checked, the number of

these in a high quality analysis is so large as to make such recording impractical.

In order to overcome this difficulty during the present investigations, a computer program,

QRA Pro, was written. This program allows each parameter in every model to be recorded,

and allows the underlying assumptions behind each choice to be recorded. A large number of

standard choices is provided, in order to keep the work load in recording to a minimum. The

program also allows all of the calculations to be carried out automatically, guaranteeing

reproducibility (ref. 30). (This kind of reproducibility has been demonstrated in practice. It

turns out to be extremely useful in making comparisons, for example for before and after

assessment of risk reduction measures). The program provides a very large number of

different models and input parameters, and can be extended with special purpose sub

programs, so that flexibility in analysis is not restricted to any large extent.
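
The idea of recording every parameter together with its underlying assumption can be illustrated with a small data structure. This is a hypothetical sketch and not the design of QRA Pro: the class name, field names and example values are all invented for illustration. A hash over the full parameter set gives a simple check that two runs used identical inputs.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class RecordedParameter:
    """One model input together with the assumption behind it."""
    model: str        # which model uses the value
    name: str         # parameter name
    value: float
    unit: str
    assumption: str   # why this value was chosen


def fingerprint(parameters):
    """Hash of the whole parameter set, independent of recording order."""
    rows = sorted((asdict(p) for p in parameters),
                  key=lambda row: (row["model"], row["name"]))
    payload = json.dumps(rows, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Illustrative values only; they are not taken from the report.
baseline = [
    RecordedParameter("release", "tank_fill_fraction", 0.9, "-",
                      "tank assumed nearly full most of the time"),
    RecordedParameter("ground_conductivity", "soil_water_content", 0.08, "-",
                      "dry sandy soil assumed"),
]

# The same inputs recorded in a different order give the same fingerprint.
rerun = list(reversed(baseline))
same_inputs = fingerprint(baseline) == fingerprint(rerun)

# Changing one assumption (tank usually nearly empty) changes the fingerprint.
changed = [replace(baseline[0], value=0.2,
                   assumption="tank assumed nearly empty most of the time"),
           baseline[1]]
different_inputs = fingerprint(baseline) != fingerprint(changed)

print(same_inputs, different_inputs)
```

Storing the assumption next to the value is what makes a before and after comparison meaningful: a difference in results can be traced to a named change in input rather than to an unrecorded choice by the analyst.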

Another approach to achieving reproducibility is to provide a very complete guideline for

analysis. An example of what can be achieved in this direction is given in Volume 5 of this

report.

Once a reasonable degree of reproducibility can be achieved in risk assessment, it becomes

desirable also to achieve accuracy. There are limitations on the extent to which accuracy can

be achieved however – both frequency and consequence calculations are associated with

uncertainties, often with uncertain values being multiplied. We should therefore not expect a

very high degree of accuracy, even though such accuracy is desirable. At present, if frequency

and consequence calculations can be made accurate to within a factor of two, most risk

analysts would be very happy. As will be seen in Chapters 3 and 4, such accuracy can be

achieved for some calculations, but it requires a great deal of effort.
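
The effect of multiplying uncertain values can be shown with a short calculation. Two ways of combining the factors are sketched: a worst case in which both errors act in the same direction, and a combination that assumes the two uncertainties are independent and lognormally distributed, with the "factor of two" read as an error factor at the same confidence level for both. The lognormal treatment is an assumption made here, not a method taken from the report.

```python
import math


def worst_case_factor(*factors):
    """Combined factor if every error acts in the same direction."""
    return math.prod(factors)


def combined_lognormal_factor(*factors):
    """Combined error factor for a product of independent lognormal values.

    The logarithms of the error factors add in quadrature.
    """
    return math.exp(math.sqrt(sum(math.log(f) ** 2 for f in factors)))


frequency_factor = 2.0     # frequency accurate to within a factor of two
consequence_factor = 2.0   # consequence accurate to within a factor of two

worst = worst_case_factor(frequency_factor, consequence_factor)
typical = combined_lognormal_factor(frequency_factor, consequence_factor)

print(f"worst case factor on risk: {worst:.1f}")
print(f"independent lognormal factor on risk: {typical:.2f}")
```

Even when both inputs are known to within a factor of two, the resulting risk figure is uncertain by a factor of between about 2.7 and 4 under these assumptions. A real analysis chains many more than two uncertain values.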

A further issue here is what we mean by accuracy. A usual criterion for a scientifically based

model is that results calculated with the model should agree with experiment or observation.

In risk assessment, we cannot carry out experiments on full scale accidents, and we certainly

cannot investigate a full range of accident scenarios in this way. We are forced to rely on

observations from accidents which have actually occurred. Only a few of these however have

been documented in depth, so our assessments of accuracy and uncertainty will themselves be

uncertain. Nevertheless, there are some cases in which risk assessments can be validated, as

will be seen in later volumes.

Comparing risk calculations made with industry average data against actual accident

frequencies means that we are aiming at “accuracy on average”. If we have a procedure

which provides good results of this type, then we will be able to predict the average accident

rate for a number of similar plants, and find that this agrees with experience.

Unfortunately, this kind of accuracy is rather unsatisfactory. As inspection audits and accident

records show, no two plants are ever identical, and risk in two nominally identical plants can

vary in practice by a factor as large as 100 (see e.g. ref. 33). Ideally, we should have “plant

specific accuracy”. This means that if the same (unchanging) plant is observed over a

number of years, the observed accident frequency should agree with predictions. Such a test

could obviously never be carried out in practice, if only because accident frequencies increase

with plant age, and fall as a result of the risk analysis process itself in any properly organised

company. Nevertheless, some assessments of the plant specific accuracy of risk estimates can be

made, especially if causal analyses are made of near misses and potential accident initiating

events.

Plant specific accuracy is desirable because it is often the weak points in safety defences of a

plant which actually give rise to accidents. A risk assessment which is “accurate on average”

may fail to identify weaknesses, or even worse, serve to reassure when reassurance is

unjustified. Risk analysis should not become a cushion to sleep on.

Achieving plant specific accuracy requires significant extension to current risk analysis

practices. Some factors which will be important in this sense are:

• Age effects need to be taken into account in the analyses

• Inspection and maintenance need to be taken into account explicitly

• Design and construction standards, such as corrosion allowances, need to be taken

into account

In addition to these kinds of factors, which can be determined straightforwardly, safety

management factors should ideally be taken into account. Unfortunately, present approaches,

while providing in many cases an index value, by which different plants can be compared, do

not provide numbers which correlate well with objective measures of risk. Some steps in this

direction are described in volume 6 of this report.

3. The QRAQ project

Because of the real need for a degree of certainty in risk assessment, a number of projects

have been undertaken. The main approach has been to study real life situations, with full

scale risk assessments, and compare the results of different methodologies.

The first studies undertaken were those for completeness of hazard identification, starting in

the 1970’s with comparison studies of HAZOP analyses carried out by different persons and

using different approaches (ref. 33). These were followed up with a review of a large number

of HAZOP studies carried out by various teams of consultants and company staff in the early

1990’s (ref. ). These studies have been followed up with a review of the currently most

effective methods, with results reported in volume 2 of this report.

The data used for risk assessment, particularly release frequencies and hole size distributions,

is critical to the performance of risk assessment. High quality data has become available

during recent years for offshore plant, but most data for chemical and petrochemical plant

onshore has been derived by engineering judgement, and often is secondary data which can

be traced back to studies carried out in the 1970’s.

In order to solve this problem, an exhaustive in depth study was carried out of release data,

covering about 12000 plant years of experience. A causal analysis was also made, so that the

effect of changes in engineering and integrity management standards can be calculated. QRA

results using this data have been compared with results using data which are drawn from the

literature. These results are discussed in volume 5 of this report.

There is still a wide uncertainty in the choice of consequence models used in risk assessment.

This in part reflects experimental uncertainties, but it was found in this study that many

models used in present day risk assessments are actually inconsistent with well established

experimental evidence. In order to assess the impact of this, a full scale risk calculation

package was written, in which alternative models can be chosen. A sensitivity study was then

carried out, to determine the importance of the choice of model in determining risk analysis

results. A study was also carried out comparing the results of calculations with those from

experiments, and with observations from actual accidents. The results of these studies are

given in volume 3 of this report.

The actual methodology used for risk assessment affects the results. In particular, there are

the following differences between standard published methodologies:

- The actual choice of items to be analysed, in particular the inventory threshold

above which analyses are required to be made.

- The frequency data to be used (if any)

- The hole size distribution to be used

- The ignition probability calculation methodology

- The way of accounting for safety measures

- The types of accident scenarios included and excluded

- Whether emergency action is taken into account

- Whether domino effects and escalation are accounted for.

- The way in which public and employee exposure is assessed.

- The way in which safety management standards are accounted for.

In order to investigate these aspects, an extended sensitivity analysis was

carried out. Six methodologies were rigorously defined, and applied to six different “virtual”

plants. This allowed detailed sensitivity analysis to be carried out, both on variations in

methodology, and variations in plant design. The plants were a 300,000 bbl per day refinery, a

fertilizer plant with ammonia and sulphuric acid production, a speciality chemicals plant, a

pharmaceuticals plant, a chemicals warehouse, and an LPG storage terminal. Flow sheets,

piping and instrumentation diagrams and plant layout were developed for these. Three

different sitings were investigated for the plants, one typical of Central and South America,

one typical of the Middle East, and one European. These different sites affect the distance to

other industry and to population centres, average temperatures, and wind speeds.

The methodologies chosen for investigation were: one based on the Dutch “Purple Book”; a

methodology based on deterministic criteria; one which is based on quantified hazard and

operability analysis; one based on the CCPS guideline; and an upgraded QRA methodology

which uses US statistics for plant accidents collected under the RMP rule. The methodologies

differ in the degree to which they use plant failure rate data. In some methodologies accident

frequency data is only given for “vessels, tanks, hoses”, i.e. a limited list. In the last

methodology, accident frequency data were obtained for 60 different equipment types.

Upgraded consequence models were also used, based on reports published by UK HSE and

by Shell (see Vol 3 for a full list). Results were checked using four different consequence

calculation packages, the main calculations being done using the author’s QRA Pro

consequence calculation suite, which was upgraded for the project, in order to allow a range

of different consequence calculation methods to be investigated. The results of these studies

are given in vol. 4 of this report.

In order to investigate the effect of safety engineering, each plant was analyzed with three

assumptions about engineering standards. The first used practices based on US and

international standards from the 1970’s, the second made use of modern US and international

standards and especially modern oil company standards. The third made use of “high integrity

engineering” principles. Each of these sets of assumptions was rigorously defined, by means

of a design handbook. Plant layout and spacing were also investigated. The effects of these are

considered in vol 5 of this report.
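
The size of the resulting study matrix can be sketched by enumerating the combinations. The plant, siting and engineering standard labels below are paraphrased from the text. That every combination was actually analysed is an assumption made for illustration, and the methodologies are represented only by the count of six given above.

```python
from itertools import product

PLANTS = [
    "refinery",
    "fertilizer plant",
    "speciality chemicals plant",
    "pharmaceuticals plant",
    "chemicals warehouse",
    "LPG storage terminal",
]
SITINGS = ["Central and South America", "Middle East", "Europe"]
ENGINEERING_STANDARDS = [
    "1970s standards",
    "modern standards",
    "high integrity engineering",
]
N_METHODOLOGIES = 6   # count stated in the text

# Every plant at every siting under every engineering assumption.
cases = list(product(PLANTS, SITINGS, ENGINEERING_STANDARDS))
runs_if_fully_crossed = len(cases) * N_METHODOLOGIES

print(f"cases per methodology: {len(cases)}")
print(f"runs if fully crossed: {runs_if_fully_crossed}")
print("first case:", cases[0])
```

A matrix of this size is only manageable when all runs share one analysis framework, so that a single factor can be changed while the others are held fixed.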

The effect of differing safety management standards was investigated by means of a detailed

model, based on an extensive review of accident cases, and on data from safety audits at a

large number of plants of the kind studied. The effects of these aspects are considered in vol.

6 of this report.

Because the plants investigated are “virtual”, i.e. do not exist in reality, it is possible to review

the analysis results openly, without problems of commercial security arising. The examples

can therefore be investigated and reviewed by specialists quite openly.

4. Earlier work

Suokas and his colleagues carried out a series of studies in the late 1970’s and early 1980’s

on the completeness of hazard identification (ref. 31). These studies were parallel to, and

used similar approaches to, those reported in volume 2, and resulted in a short monograph by

Suokas and Taylor on the pitfalls of risk assessment (ref. 34).

There have been several earlier critical reviews of consequence calculation methods. Two

which are of special interest here are the review of the use of heavy gas dispersion models by

Hanna, Rivas and Chang, and the review of gas dispersion by Kaiser. Descriptions of, and

references for these, are given in volume 3 of this report. A series of reviews by Deaves, Rew

and their colleagues, and by the Health and Safety Laboratory, commissioned by the UK

Health and Safety Executive, provide an up to date review of a large range of models, which

have been used in this study as a basis for comparisons with the standard models, as given for

example in the Dutch Purple Book (ref. 14). The models described in these reviews have been

investigated alongside the standard models, in Ch. 3, and have been used as alternatives in the

full scale risk assessments in Ch. 4.

The published project most relevant to this study is the benchmark study carried out by a

consortium of specialist groups under the auspices of the European Community (ref. 6). This

project established a “virtual” process plant, in a similar way to the present study. The plant

(an ammonia fertiliser plant) was analysed independently by all the groups. The risk analysis

was carried out by in all nine teams, and their results compared. The initial set of results

varied widely, with some results deviating in critical parameters varied widely. The number

of fatalities for accidents occurring at 10-6 per year varied from 300 to 30000, and the

frequency for 100 person accidents varied from 5*10-6 to 2*10-4 per year. The ratio between

the largest safety distances (distance to 10-6 per year fatality risk) varied less, with a factor of

2 between the smallest and largest safety distances. In a second phase of the project, the

differences were investigated, and as far as possible resolved. (ref. 4, 5)
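The size of these spreads is easier to compare when each range is expressed as a ratio and as a number of orders of magnitude. The following is a minimal sketch of that arithmetic, using only the figures quoted above; the function and variable names are illustrative assumptions and do not come from the benchmark study.

```python
import math

# Ranges quoted in the text for the initial benchmark results.
# The dictionary keys are descriptive labels chosen for this sketch.
reported_ranges = {
    "fatalities for accidents at 1e-6 per year": (300, 30000),
    "frequency of 100 person accidents (per year)": (5e-6, 2e-4),
}


def spread(low, high):
    """Return the high/low ratio and the same spread in orders of magnitude."""
    ratio = high / low
    return ratio, math.log10(ratio)


for name, (low, high) in reported_ranges.items():
    ratio, decades = spread(low, high)
    print(f"{name}: factor {ratio:.0f}, about {decades:.1f} orders of magnitude")
```

On these figures the fatality estimates span a factor of 100 and the frequency estimates a factor of 40, against the factor of 2 quoted for the safety distances.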

The results in the benchmark study have served as a guide to the present study. Even with

seven comparable studies, it is not possible to investigate all aspects of variation in a risk

analysis. The present study is intended to complement the benchmark, by providing a single

framework, in which many significant parameters can be varied independently.

Unfortunately, the benchmark studies did not provide a definitive answer to the questions of repeatability and accuracy; or rather, they provided negative results, showing that the uncertainties in analysis gave variations of up to five orders of magnitude. Such a result is useless in engineering. The earlier studies, however, have for the most part been flawed by including unvalidated methods in the comparisons.

The QRAQ project makes use of a different approach. A fairly wide range of chemical plants were analysed using a wide range of methods, but all within the same analysis framework. This allows individual features of the analysis methodology to be investigated and sensitivity

analyses to be carried out. Also, many of the results could be compared with worldwide

experience of large accidents, so that the overall performance could be validated.
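The idea of varying one feature of the analysis at a time within a fixed framework can be sketched as follows. This is an illustration only: the toy risk model, the parameter names and all numerical values are hypothetical assumptions introduced here, and are not QRAQ models or QRAQ data.

```python
# Hypothetical toy model (an assumption for illustration, not a QRAQ model):
# risk at a point = release frequency x probability that the release is
# directed towards the point x probability of fatality given exposure.
def toy_risk(params):
    return (params["release_frequency"]
            * params["direction_probability"]
            * params["fatality_probability"])


# Hypothetical baseline choices and alternative choices for each parameter.
baseline = {
    "release_frequency": 1e-4,      # per year
    "direction_probability": 0.1,
    "fatality_probability": 0.5,
}
alternatives = {
    "release_frequency": [1e-5, 1e-3],
    "direction_probability": [0.05, 0.2],
    "fatality_probability": [0.25, 1.0],
}


def one_at_a_time(model, baseline, alternatives):
    """Vary each parameter alone, holding the others at baseline.

    Returns, per parameter, the ratio between the largest and smallest
    model result obtained (the baseline result is included).
    """
    base_result = model(baseline)
    spreads = {}
    for name, values in alternatives.items():
        results = [base_result]
        for value in values:
            trial = dict(baseline)   # copy, so the baseline is not modified
            trial[name] = value
            results.append(model(trial))
        spreads[name] = max(results) / min(results)
    return spreads


for name, factor in one_at_a_time(toy_risk, baseline, alternatives).items():
    print(f"{name}: results vary by a factor of {factor:.0f}")
```

Because every run shares the same framework, any difference in the result can be attributed to the single choice that was changed, which is not possible when independent teams differ in many choices at once.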

It may seem strange that an in-depth study of QRA methodology and validity is made now, after QRA has been in use for over 30 years. However, there has so far been little published work of this kind producing anything but negative results. An exception is the RIVM benchmark study (ref. 35), which demonstrated reproducibility to within a factor of 2 when using a fixed procedure and fixed set of models.

References and Project Bibliography

[1] J.R.Taylor, C.G.Petersen, J Kampmann, L. Schepper, EK Kragh, RS Selig, P.Becher,

K.E.Petersen, Quantitative and Qualitative Criteria for Risk Assessment, Miljøprojekt

112, Danish Environmental Agency 1989

[2] J.R.Taylor and E Vangsted A Comparative Evaluation of Safety Features Based on

Risk Analysis for 25 Plants. Int Symp Loss Prevention and Safety Promotion in the

Process Industries, Taormina, 1992

[3] Office federal de l’environment, des forêts et du paysage, Critères d’appreciation

pour l’ordonnance sur les accidents majeurs OPAM, 1996

[4] Int conf. Ammonia Transportation Risk, Haifa 1999

[5] A.Amendola, Contini, Ziomas, Uncertainties in Chemical Risk Assessment: Results of a European benchmark exercise, J. Hazardous Materials, 1992

[6] A.Amendola. Presentation to Danish Engineering Society, RISK, 2000

[7] Technica Ltd. Techniques for Assessing Industrial Hazards, technical paper 55, The

World Bank,

[8] Interprovincial Overleg. Guidelines for the Preparation of Off site Safety Reports,

Report IPO A-73, 1994

[9] Hurst, Hankin, Wilkinson, Nussey, and Williams, Failure Rate and Incident Data Bases for Major Hazards, Int Symp Loss Prevention and Safety Promotion in the Process Industries, Taormina, 1992

[10] Munday, Phillips, Singh and Windebank, Instantaneous Fractional Annual Loss,

Loss prevention and Safety Promotion in the Process Industries, 3rd Int Symp. Basle

1980

[11] Bellamy and Geyer, Organisational, Management and Human Factors in Quantified

Risk Assessment, HSE CSR No. 33/1992

[12] C. Matthiessen, Current status of the RMP reporting. Workshop presentation, Int conf

and Workshop on Modeling the Consequences of Accidental Releases of hazardous

materials AIChE 1999

[13] Cox, Ang, and Lees in Hazardous Area Classification, I Chem E. Although the purpose of this publication was to derive a better basis for hazardous area classification, it provides one of the few risk analyses for a complete plant in which all the basic assumptions are explained clearly, and it is, in addition to this, a piece of work of high quality.

[14] B Ale et al. Guidelines for quantitative risk assessment, Purple Book, Director

General for Social Affairs and Employment, Holland 1999

[15] Rijnmond public Authority Risk Analysis of Six potentially hazardous Objects in The

Rijnmond Area, Reidel, 1982

[16] J.R.Taylor Review of Release Frequency data for Risk Assessment 2nd edition, Taylor

Associates ApS, 1999

[17] J.R.Taylor A process plant release frequency data base, Taylor Associates, 2004

[18] J.R.Taylor Process Safety References, Taylor Associates 1994-2000.

[19] J.R.Taylor Risk analysis Methodology for Process Plants. 3rd Edition 1999

[20] J.R.Taylor. Guidelines for application of failure rate data, 1998

[21] J.R.Taylor Process Safety Engineering Manual, 2nd Edition, 1999

[22] J.R.Taylor. Comparative study of Process Plant Risk Assessments, 2000

[23] J.R.Taylor Quality Standards for Risk Assessment, 1996

[24] J.R.Taylor , Hazardous Materials Accidents in Train Marshalling, 2000

[25] J.R.Taylor, Review of Road transport Accident frequencies, 1999

[26] J.R.Taylor, Review of Component Failure Rates for Risk Analyses, 1998

[27] J.R.Taylor, A Deterministic Approach to Risk Assessment for land use planning, 2000

[28] J.R.Taylor, Application of Risk Acceptance Criteria and QRA Methodologies for Land Use Planning in Four Countries, Int. Conf. on Risk Acceptance Criteria, São Paulo, 2000

[29] J.R.Taylor, The QRAQ Project – Quality of Risk Assessments for Land Use Planning,

Int Conf on Land Use Planning, Lille, 2003

[30] CCPS, Guidelines for Chemical Process Quantitative Risk Analysis, AIChE, 2000

[31] J Suokas, P Pyy, Evaluation of the Validity of four hazard Identification methods,

VTT research report 516, 1988

[32] J.R.Taylor, QRA Pro – Users Manual Version 7.4, 2006

[33] J.R.Taylor, Beurteilung, Vollständigkeit und Nutzen von Risikoanalyseverfahren (Quality, completeness and benefit of Risk Analysis), in Ermittlung und Bewertung Industrieller Risiken, S.Lange, Springer Verlag 1984

[34] J Suokas, J.R.Taylor, Take Care, Pitfalls in the use of risk assessment, Yrkeslitteratur 1981

[35] Ale, Golbach, Goos, Ham Hansen, Shield, Benchmark risk analysis models, RIVM

report 610066015, 2001

Studies carried out as part of the QRA Quality project

Study: Frequency of releases of hazardous materials
Description: An in-depth study of release data from 5 years of operating experience from 2600 plants. The data is now available in the RELBASE data base, and is the basis for the European Union effort to develop basis data for risk calculation for land use planning risk assessments. To be published Spring 2006 (preliminary version available, Frequency of Releases of Hazardous Material Vol 1 to 3, Version 6, 2004). This study provides actual hole size distributions, based on data from 540 plants.

Study: Quality and completeness of hazard identification
Description: A study of Hazop and operator error analysis techniques is provided, with data on performance of the techniques, in particular an assessment of the completeness of hazard identification in 30 risk analysis studies.

Study: Comparison of two phase release models
Description: A study of the models currently in use, from Fauske, Roberts, Ermak, Webber, Kukkonen (Yellow Book), Leung, and comparison with experimental data. Shows that release amounts can be predicted reliably, but only a few models predict initial velocity reliably, so that initial dispersion in the jet is inaccurate for some models.

Study: Effect of pipe length and hole size on two phase release scenario
Description: Examines the effect of choosing different locations for releases on overall risk assessment.

Study: Effect of choice of hole size categories on risk assessment
Description: Examines the effect of choice of hole sizes and number of hole size categories on risk assessment, by carrying out 6 full QRAs with different choice of hole size selection methodology.

Study: Effect of rain out on risk assessment
Description: Compares rain out models (Yellow Book, CCPS and FLIE), and compares with actual accidents. Shows the effect of rain out on risk for ammonia, propane and chlorine releases. New models for impinging jets. Shows the effect of building high basins around storage vessels.

Study: Comparison of jet dispersion models
Description: Comparison of the Yellow Book (Chen and Rodi), simple momentum jet, Quest, and Hoot, Meroney and Peterka models with experimental data, and sensitivity study of the effect of choice of jet model on risk analysis.

Study: Comparison of blow up models
Description: Comparison of the models available for initial dispersion of liquefied gases with actual observations from accidents, and sensitivity of risk analyses to the choice of model.

Study: Comparison of plume dispersion models
Description: Comparison of the widely used models, Cox Carpenter, SLAB, Degadis, HG system, and UML models and a newly developed model, TAPlume, with actual accident data. The study investigates not just the dispersion models themselves, but also the source term (release rate) and initial jet dispersion model used. Considers the effect of new models for turbulence velocity in industrial and urban locations. The study investigates the effect of choice of model on risk assessment, land use planning, and on emergency planning.

Study: Gas dispersion in industrial and urban locations
Description: Compares widely used gas dispersion models with the results from CFD modelling, in order to assess near field effects. Provides phenomenological models for impinging and semi confined jets.

Study: Selection of gas toxicity criteria
Description: Investigates the effect of choice of criterion, LC50 only, a full range of LC criteria, and of the use of time scaling of toxic gas exposure on risk assessment. Investigates the use of AEGL, ERPG, and IDLH data on emergency planning.

Study: Effect of choice of wind speed categories
Description: In risk analyses it is usual to choose one or two wind speeds, and typically two or three stability categories for risk assessments. This study provides a sensitivity study on the effect of the choice on risk assessment.

Study: Pool spread model selection
Description: The study compares the effect of different models, and different choice of pool limitation assumptions, on the rate of evaporation of volatile materials. A comparison is made with observation from actual spills, and the effect of this on risk assessment is assessed.

Study: Very low wind speed dispersion
Description: This study implements the recommendations from the UK HSE review of low wind speed dispersion, and determines the effect of this on land use planning and emergency planning.

Study: Indoor release
Description: The study investigates models for release of gases indoors, such as ammonia from refrigeration systems, and the choice of plume dispersion source terms. Provides comparison with actual accident data, and evaluation of the effect of the modelling on land use planning and emergency planning.

Study: Ignition probability models
Description: This study compares five different approaches to determining ignition probabilities (Cox Lees and Ang, IFAL, Purple Book, JIP and UK HSE Atkins (Rew) models). Results are compared with actual accident experience, and the usefulness of the models is assessed.

Study: Pool fire model selection
Description: The study compares pool fire models with observations from actual fires, and in particular, the effect of heat radiation on emergency personnel fighting fires. Provides conclusions on proper location for installation of fire water monitors.

Study: Jet fire model selection
Description: The study compares pool fire models with observations from actual fires, and in particular, the effect of heat radiation on emergency personnel fighting fires. Provides conclusions on proper location for installation of fire water monitors, and on the value of upgraded emergency shutdown systems and of passive fire protection.

Study: Unconfined vapour cloud explosion modelling
Description: Compares TNT equivalence type models, the multi energy model in its Yellow Book form, the UK HSE GAME upgrades to the multi energy model, and phenomenological models. Compares the model predictions with actual accident data (Flixborough, Milford Haven, Copenhagen), and determines the effect of choice of model on land use planning and on control room building design.

Study: Effect of water curtains, steam curtains and water sprays from hoses on heavy gas dispersion
Description: Describes the available models, and their effects on plume sizes. Investigates the effect on risk.

Study: Effect of walls, berms and slopes on heavy gas dispersion
Description: Investigates heavy gas flow and the way this can be obstructed or channelled. Provides conclusions about the value of fitting protective walls for the case of low wind speed.

Study: Risk calculation for transport of hazardous material
Description: Provides a study of the accuracy of different methods of calculating risk along road transport routes.

Study: Effect of safety engineering design standards on risk
Description: Compares the effect of traditional design with modern high integrity design on risk for major hazards plants. Compares the effect of modern emergency measures on emergency planning.

© Taylor Associates ApS 2005 18

You might also like

- 30 Hour Construction Final Exam Answer KeyDocument74 pages30 Hour Construction Final Exam Answer KeyJames Warden79% (24)

- Cmaa #78 PDFDocument41 pagesCmaa #78 PDFrafael1978100% (3)

- Aws D8.1M 2013Document40 pagesAws D8.1M 2013vishesh dharaiya100% (3)

- Rweteba Nelson Risk Assessment WorkDocument26 pagesRweteba Nelson Risk Assessment WorkKofi Sam100% (8)

- Accuracy in QRADocument8 pagesAccuracy in QRAJohm Robert taylor100% (1)

- Conduct Effective Quantitative Risk Assessment (QRA) - StepsDocument14 pagesConduct Effective Quantitative Risk Assessment (QRA) - Stepsamal118No ratings yet

- FMDS0834Document38 pagesFMDS0834RafaelNo ratings yet

- SITXWHS003 - Implement and Monitor Work Health and Safety PracticesDocument14 pagesSITXWHS003 - Implement and Monitor Work Health and Safety PracticesALI HYDERNo ratings yet

- Windrive - 2001 Assembly InstructionDocument45 pagesWindrive - 2001 Assembly Instructionname family100% (1)

- QraDocument10 pagesQrauserscribd2011No ratings yet

- COMAH and Safety Case RegulationsDocument16 pagesCOMAH and Safety Case RegulationsAdeel RazaNo ratings yet

- ALARP JustificationDocument8 pagesALARP JustificationAli RizviNo ratings yet

- Kenexis: Understanding Fire and Gas Mapping Software and EffigyDocument34 pagesKenexis: Understanding Fire and Gas Mapping Software and EffigyShakeel AhmedNo ratings yet

- Comparison of Methane and Ethane Gas Release Using ALOHADocument1 pageComparison of Methane and Ethane Gas Release Using ALOHAShashank TiwariNo ratings yet

- Risk Based Process Safety DesignDocument13 pagesRisk Based Process Safety DesignVan SotNo ratings yet

- Chapter 1 - Chemical Process QRADocument56 pagesChapter 1 - Chemical Process QRANitesh KirnakeNo ratings yet

- EERA SampleDocument48 pagesEERA SampleAnggun Rush100% (1)

- F EI Calculation WorkbookDocument51 pagesF EI Calculation WorkbookWu DangNo ratings yet

- Comparison of Pha Methods PDFDocument10 pagesComparison of Pha Methods PDFQayyum KhanNo ratings yet

- Bow TieDocument2 pagesBow TieAnonymous ocCa18RNo ratings yet

- Efficient PHA of Non-Continuous Operating ModesDocument25 pagesEfficient PHA of Non-Continuous Operating ModesShakirNo ratings yet

- The Evaluation of Safety Barriers Using The Method LopaDocument7 pagesThe Evaluation of Safety Barriers Using The Method LopaDiegoNo ratings yet

- Paper 2Document8 pagesPaper 2Nitesh Kirnake100% (1)

- Risktec Essence of The Accuracy and Acceptability of Failure Rate DataDocument1 pageRisktec Essence of The Accuracy and Acceptability of Failure Rate DataKrishna KusumaNo ratings yet

- 5.5 Semi QRADocument11 pages5.5 Semi QRAscrbdgharaviNo ratings yet

- References QRADocument5 pagesReferences QRAMusafir LaluNo ratings yet

- Process Safety Mangement v4Document6 pagesProcess Safety Mangement v4Argha Kusumah ReiNo ratings yet

- HSE Methodology For Sociatal RiskDocument27 pagesHSE Methodology For Sociatal RiskshaileshgadbailNo ratings yet

- WWW - Hse.gov - Uk Comah Buncefield Bstgfinalreport PDFDocument118 pagesWWW - Hse.gov - Uk Comah Buncefield Bstgfinalreport PDFhulmanNo ratings yet

- ASI Performance Standard V2 Guidance Dec2017 PDFDocument117 pagesASI Performance Standard V2 Guidance Dec2017 PDFBayu AkbarNo ratings yet

- Hemp RaddDocument78 pagesHemp RaddMuhammad.SaimNo ratings yet

- Depressurization WebinarDocument15 pagesDepressurization WebinarMuhammad.SaimNo ratings yet

- Proposal Event TreeDocument8 pagesProposal Event TreeMufti Sinergi SolusiNo ratings yet

- Structural Integrity Management Cranfield UniversityDocument4 pagesStructural Integrity Management Cranfield UniversityAndreasNo ratings yet

- Fera Full Topic Assessment TemplateDocument19 pagesFera Full Topic Assessment TemplateasdasdNo ratings yet

- Consequenc Assessment Model For Incidents Involving Release From LNG CarrierDocument128 pagesConsequenc Assessment Model For Incidents Involving Release From LNG CarrierRichard HollidayNo ratings yet

- Improved Integration of LOPA With HAZOP Analyses: Dick Baum, Nancy Faulk, and P.E. John Pe RezDocument4 pagesImproved Integration of LOPA With HAZOP Analyses: Dick Baum, Nancy Faulk, and P.E. John Pe RezJéssica LimaNo ratings yet

- Offshore Installations (Safety Case) Regulations 2005 (PDF 181KB)Document12 pagesOffshore Installations (Safety Case) Regulations 2005 (PDF 181KB)Jaewan LeeNo ratings yet

- CC H 05 L3 - Asset IntegrityDocument35 pagesCC H 05 L3 - Asset Integrityhayder ahmedNo ratings yet

- Safety Manual: Risk Ranking MatrixDocument3 pagesSafety Manual: Risk Ranking MatrixMohammed Zubair100% (1)

- Consequence Modeling Using AlohaDocument40 pagesConsequence Modeling Using AlohaNageswar MakalaNo ratings yet

- Asset Integrity - The Key To Managing Major Incident Risks (Ogp)Document20 pagesAsset Integrity - The Key To Managing Major Incident Risks (Ogp)Martín Diego MastandreaNo ratings yet

- QuanRiskAssessment and Consequence Modelling-Student MaterialDocument108 pagesQuanRiskAssessment and Consequence Modelling-Student MaterialBedalo BesanlaNo ratings yet

- 1 RiskNIS-FinalReportDocument193 pages1 RiskNIS-FinalReportRomeo BoiiNo ratings yet

- Chemical Exposure IndexDocument23 pagesChemical Exposure IndexwankartikaNo ratings yet

- Gas Detection As A Risk Mitigation TechniqueDocument8 pagesGas Detection As A Risk Mitigation TechniqueFede Martinez100% (1)

- TN 015 Overrides 15Document8 pagesTN 015 Overrides 15Luis SantosNo ratings yet

- Fire Explosion Hazard ManagementDocument11 pagesFire Explosion Hazard ManagementEbenezer AaronNo ratings yet

- Prediction of Propane Release Consequences at Different Time - Scales From Different Sources Using ALOHADocument7 pagesPrediction of Propane Release Consequences at Different Time - Scales From Different Sources Using ALOHAShashank TiwariNo ratings yet

- Bowtie ExplicadoDocument10 pagesBowtie ExplicadoVan SotNo ratings yet

- GAPS Guidelines: Measuring Management of Change PerformanceDocument2 pagesGAPS Guidelines: Measuring Management of Change PerformanceAsad KhanNo ratings yet

- Busby Risk Matrix Pitfalls 180302Document12 pagesBusby Risk Matrix Pitfalls 180302Ronald Aranha100% (2)

- HASILT - An - Intelligent Software Platform For HAZOP - LOPA - SRS and SIL VerificationDocument9 pagesHASILT - An - Intelligent Software Platform For HAZOP - LOPA - SRS and SIL VerificationRonyNo ratings yet

- Quantitative Risk AssessmentDocument113 pagesQuantitative Risk AssessmentJay JayNo ratings yet

- Dust ExplosionDocument4 pagesDust ExplosionAtul TripathiNo ratings yet

- HAZID PresentationDocument11 pagesHAZID PresentationAlvian FachrurroziNo ratings yet

- Classification of PSM Barriers I Travers P McCullochDocument9 pagesClassification of PSM Barriers I Travers P McCullochNhut NguyenNo ratings yet

- Guidance On Performing Transportation Risk Analysis of Hazardous MaterialsDocument221 pagesGuidance On Performing Transportation Risk Analysis of Hazardous MaterialsDidik SupriyadiNo ratings yet

- Module 9 - ALARP 20150305Document32 pagesModule 9 - ALARP 20150305Muhammad.SaimNo ratings yet

- The Quantitative Assessment of Domino EfDocument14 pagesThe Quantitative Assessment of Domino EfAndrzej BąkałaNo ratings yet

- Fire and Explosion Hazard Management Rev 1Document36 pagesFire and Explosion Hazard Management Rev 1fxgbNo ratings yet

- Threat And Risk Assessment A Complete Guide - 2020 EditionFrom EverandThreat And Risk Assessment A Complete Guide - 2020 EditionNo ratings yet

- Risk Management of Complex Inorganic Materials: A Practical GuideFrom EverandRisk Management of Complex Inorganic Materials: A Practical GuideViolaine VerougstraeteNo ratings yet

- Corrective And Preventative Action A Complete Guide - 2020 EditionFrom EverandCorrective And Preventative Action A Complete Guide - 2020 EditionNo ratings yet

- Recognizing and Responding to Normalization of DevianceFrom EverandRecognizing and Responding to Normalization of DevianceNo ratings yet

- Fundamentals of Risk Management for Process Industry EngineersFrom EverandFundamentals of Risk Management for Process Industry EngineersNo ratings yet

- Essential Practices for Creating, Strengthening, and Sustaining Process Safety CultureFrom EverandEssential Practices for Creating, Strengthening, and Sustaining Process Safety CultureNo ratings yet

- ML 012600417Document140 pagesML 012600417RafaelNo ratings yet

- FM Global Property Loss Prevention Data Sheets: List of FiguresDocument12 pagesFM Global Property Loss Prevention Data Sheets: List of FiguresRafaelNo ratings yet

- Publication 1 - 92 - A Study of Tank Farm Fires in KuwaitDocument122 pagesPublication 1 - 92 - A Study of Tank Farm Fires in KuwaitRafaelNo ratings yet

- 0 Merton Bunker NFPA 72 Changes 2019 - AFAA NJDocument72 pages0 Merton Bunker NFPA 72 Changes 2019 - AFAA NJRafaelNo ratings yet

- IP-15 (Part 1)Document69 pagesIP-15 (Part 1)RafaelNo ratings yet

- Bowtie Pro™: Customising Diagrams in Version 3.3Document10 pagesBowtie Pro™: Customising Diagrams in Version 3.3RafaelNo ratings yet

- 12 Field Hydraulic ExerciseDocument11 pages12 Field Hydraulic ExerciseRafaelNo ratings yet

- (Perspectives on Individual Differences) Lawrence C. Hartlage (Auth.), Lawrence C. Hartlage, Cathy F. Telzrow (Eds.)-The Neuropsychology of Individual Differences_ a Developmental Perspective-Springer (1)Document335 pages(Perspectives on Individual Differences) Lawrence C. Hartlage (Auth.), Lawrence C. Hartlage, Cathy F. Telzrow (Eds.)-The Neuropsychology of Individual Differences_ a Developmental Perspective-Springer (1)Rafael100% (1)

- Types and Origins of Substation FiresDocument6 pagesTypes and Origins of Substation FiresRafael100% (1)

- ARFFChapter 9Document40 pagesARFFChapter 9Rafael100% (1)

- Safety Plan TemplateDocument22 pagesSafety Plan TemplateIsabelle LunaNo ratings yet

- HCR910 DS F105eDocument368 pagesHCR910 DS F105ejulioNo ratings yet

- Contractor's HSE RequirementsDocument1 pageContractor's HSE RequirementsAkd DeshmukhNo ratings yet

- DOMEMSP004 - R03 - HSE Management System ManualDocument27 pagesDOMEMSP004 - R03 - HSE Management System ManualCarolyne Ndeverah KaloNo ratings yet

- ATV630 650 Installation Manual en EAV64301 03Document117 pagesATV630 650 Installation Manual en EAV64301 03Mário EscadaNo ratings yet

- Fire and Safety in Edible Oil Industries To Be AdoptedDocument68 pagesFire and Safety in Edible Oil Industries To Be AdoptedRushiram Reddy60% (5)

- HSE CV DraftDocument11 pagesHSE CV DraftTata BelvizNo ratings yet

- Bfe Company ProfileDocument32 pagesBfe Company ProfileDaniel Edward OmondiNo ratings yet

- 8A. FDAS - Presentation - IIEE-CRCSA 1st Technical Webinar - March 25, 2022Document61 pages8A. FDAS - Presentation - IIEE-CRCSA 1st Technical Webinar - March 25, 2022Nels OdrajafNo ratings yet

- YVL E.3e PDFDocument62 pagesYVL E.3e PDFBhavani PrasadNo ratings yet

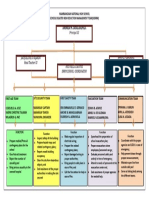

- DRRMDocument1 pageDRRMlesterNo ratings yet

- Longquiz Q1Document2 pagesLongquiz Q1Joyce PolintanNo ratings yet

- INTERZINC 22 Part B - SDSDocument7 pagesINTERZINC 22 Part B - SDSjorgekarlosprNo ratings yet

- Gender Into CBDRMDocument20 pagesGender Into CBDRMLorna VictoriaNo ratings yet

- MSDS этилмагнийбромидDocument7 pagesMSDS этилмагнийбромидJenia KachanNo ratings yet

- MSDS - PIDICRYL 4260 A - CAS No.Document4 pagesMSDS - PIDICRYL 4260 A - CAS No.Miguel Polanco BastidasNo ratings yet

- Volunteer Appointment Letter Template inDocument4 pagesVolunteer Appointment Letter Template inDhexter Villa67% (3)

- Sta. Fe National High SchoolDocument10 pagesSta. Fe National High SchoolCris Fredrich AndalizaNo ratings yet

- Topic 02 - OSH LEGISLATIONDocument47 pagesTopic 02 - OSH LEGISLATIONbyron eugenioNo ratings yet

- Advantest R3267 Opt66 BluetoothDocument149 pagesAdvantest R3267 Opt66 BluetoothDrewNo ratings yet

- ISOfocus 127 enDocument27 pagesISOfocus 127 ennusaNo ratings yet

- Liposomal Vitamin CDocument2 pagesLiposomal Vitamin CZahid MahmoodNo ratings yet

- Letter For BarrangayDocument12 pagesLetter For BarrangaystamonicafsNo ratings yet

- Sports InjuriesDocument128 pagesSports Injuriesagxibaih1100% (5)