Professional Documents

Culture Documents

1146 Full

Uploaded by

AndaOriginal Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

1146 Full

Uploaded by

AndaCopyright:

Available Formats

R ES E A RC H

SOCIAL SCIENCE support their positions as unreliable or fake news,

whereas sources that support their positions are

labeled reliable or not fake, the term has lost all

The spread of true and false connection to the actual veracity of the informa-

tion presented, rendering it meaningless for use

news online in academic classification. We have therefore ex-

plicitly avoided the term fake news throughout

this paper and instead use the more objectively

Soroush Vosoughi,1 Deb Roy,1 Sinan Aral2* verifiable terms “true” or “false” news. Although

the terms fake news and misinformation also

We investigated the differential diffusion of all of the verified true and false news stories imply a willful distortion of the truth, we do not

distributed on Twitter from 2006 to 2017. The data comprise ~126,000 stories tweeted by make any claims about the intent of the purveyors

~3 million people more than 4.5 million times. We classified news as true or false using of the information in our analyses. We instead

information from six independent fact-checking organizations that exhibited 95 to 98% focus our attention on veracity and stories that

agreement on the classifications. Falsehood diffused significantly farther, faster, deeper, and have been verified as true or false.

more broadly than the truth in all categories of information, and the effects were more We also purposefully adopt a broad definition

pronounced for false political news than for false news about terrorism, natural disasters, of the term news. Rather than defining what

science, urban legends, or financial information. We found that false news was more novel than constitutes news on the basis of the institutional

true news, which suggests that people were more likely to share novel information. Whereas source of the assertions in a story, we refer to any

false stories inspired fear, disgust, and surprise in replies, true stories inspired anticipation, asserted claim made on Twitter as news (we de-

sadness, joy, and trust. Contrary to conventional wisdom, robots accelerated the spread fend this decision in the supplementary materials

Downloaded from http://science.sciencemag.org/ on March 10, 2018

of true and false news at the same rate, implying that false news spreads more than the truth section on “reliable sources,” section S1.2). We

because humans, not robots, are more likely to spread it. define news as any story or claim with an asser-

F

tion in it and a rumor as the social phenomena

oundational theories of decision-making Current work analyzes the spread of single of a news story or claim spreading or diffusing

(1–3), cooperation (4), communication (5), rumors, like the discovery of the Higgs boson through the Twitter network. That is, rumors are

and markets (6) all view some concep- (13) or the Haitian earthquake of 2010 (14), and inherently social and involve the sharing of claims

tualization of truth or accuracy as central multiple rumors from a single disaster event, like between people. News, on the other hand, is an

to the functioning of nearly every human the Boston Marathon bombing of 2013 (10), or it assertion with claims, whether it is shared or not.

endeavor. Yet, both true and false information develops theoretical models of rumor diffusion A rumor cascade begins on Twitter when a

spreads rapidly through online media. Defining (15), methods for rumor detection (16), credibility user makes an assertion about a topic in a tweet,

what is true and false has become a common evaluation (17, 18), or interventions to curtail the which could include written text, photos, or links

political strategy, replacing debates based on spread of rumors (19). But almost no studies com- to articles online. Others then propagate the

a mutually agreed on set of facts. Our economies prehensively evaluate differences in the spread rumor by retweeting it. A rumor’s diffusion pro-

are not immune to the spread of falsity either. of truth and falsity across topics or examine cess can be characterized as having one or more

False rumors have affected stock prices and the why false news may spread differently than the cascades, which we define as instances of a rumor-

motivation for large-scale investments, for ex- truth. For example, although Del Vicario et al. spreading pattern that exhibit an unbroken re-

ample, wiping out $130 billion in stock value (20) and Bessi et al. (21) studied the spread of tweet chain with a common, singular origin. For

after a false tweet claimed that Barack Obama scientific and conspiracy-theory stories, they example, an individual could start a rumor cas-

was injured in an explosion (7). Indeed, our re- did not evaluate their veracity. Scientific and cade by tweeting a story or claim with an assertion

sponses to everything from natural disasters conspiracy-theory stories can both be either true in it, and another individual could independently

(8, 9) to terrorist attacks (10) have been disrupted or false, and they differ on stylistic dimensions start a second cascade of the same rumor (per-

by the spread of false news online. that are important to their spread but orthogonal taining to the same story or claim) that is com-

New social technologies, which facilitate rapid to their veracity. To understand the spread of pletely independent of the first cascade, except

information sharing and large-scale information false news, it is necessary to examine diffusion that it pertains to the same story or claim. If they

cascades, can enable the spread of misinformation after differentiating true and false scientific stories remain independent, they represent two cascades

(i.e., information that is inaccurate or misleading). and true and false conspiracy-theory stories and of the same rumor. Cascades can be as small as size

But although more and more of our access to controlling for the topical and stylistic differences one (meaning no one retweeted the original tweet).

information and news is guided by these new between the categories themselves. The only study The number of cascades that make up a rumor is

technologies (11), we know little about their con- to date that segments rumors by veracity is that of equal to the number of times the story or claim was

tribution to the spread of falsity online. Though Friggeri et al. (19), who analyzed ~4000 rumors independently tweeted by a user (not retweeted).

considerable attention has been paid to anecdotal spreading on Facebook and focused more on how So, if a rumor “A” is tweeted by 10 people separate-

analyses of the spread of false news by the media fact checking affects rumor propagation than on ly, but not retweeted, it would have 10 cascades,

(12), there are few large-scale empirical investiga- how falsity diffuses differently than the truth (22). each of size one. Conversely, if a second rumor

tions of the diffusion of misinformation or its social In our current political climate and in the “B” is independently tweeted by two people and

origins. Studies of the spread of misinformation academic literature, a fluid terminology has arisen each of those two tweets is retweeted 100 times,

are currently limited to analyses of small, ad hoc around “fake news,” foreign interventions in the rumor would consist of two cascades, each

samples that ignore two of the most important U.S. politics through social media, and our under- of size 100.

scientific questions: How do truth and falsity standing of what constitutes news, fake news, Here we investigate the differential diffusion

diffuse differently, and what factors of human false news, rumors, rumor cascades, and other of true, false, and mixed (partially true, partially

judgment explain these differences? related terms. Although, at one time, it may have false) news stories using a comprehensive data

been appropriate to think of fake news as refer- set of all of the fact-checked rumor cascades that

ring to the veracity of a news story, we now spread on Twitter from its inception in 2006 to

1

believe that this phrase has been irredeemably 2017. The data include ~126,000 rumor cascades

Massachusetts Institute of Technology (MIT), the Media Lab,

E14-526, 75 Amherst Street, Cambridge, MA 02142, USA. 2MIT,

polarized in our current political and media cli- spread by ~3 million people more than 4.5 million

E62-364, 100 Main Street, Cambridge, MA 02142, USA. mate. As politicians have implemented a political times. We sampled all rumor cascades investigated

*Corresponding author. Email: sinan@mit.edu strategy of labeling news sources that do not by six independent fact-checking organizations

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 1 of 6

R ES E A RC H | R E PO R T

(snopes.com, politifact.com, factcheck.org, truthor- depth (the number of retweet hops from the end of 2016, corresponding to the last U.S. presi-

fiction.com, hoax-slayer.com, and urbanlegends. origin tweet over time, where a hop is a retweet dential election (Fig. 1C). The data also show

about.com) by parsing the title, body, and verdict by a new unique user), size (the number of users clear increases in the total number of false polit-

(true, false, or mixed) of each rumor investigation involved in the cascade over time), maximum ical rumors during the 2012 and 2016 U.S. presi-

reported on their websites and automatically breadth (the maximum number of users involved dential elections (Fig. 1E) and a spike in rumors

collecting the cascades corresponding to those in the cascade at any depth), and structural vi- that contained partially true and partially false

rumors on Twitter. The result was a sample of rality (23) (a measure that interpolates between information during the Russian annexation of

rumor cascades whose veracity had been agreed content spread through a single, large broadcast Crimea in 2014 (Fig. 1E). Politics was the largest

on by these organizations between 95 and 98% of and that which spreads through multiple gen- rumor category in our data, with ~45,000 cas-

the time. We cataloged the diffusion of the rumor erations, with any one individual directly respon- cades, followed by urban legends, business, terror-

cascades by collecting all English-language replies sible for only a fraction of the total spread) (see ism, science, entertainment, and natural disasters

to tweets that contained a link to any of the the supplementary materials for more detail on (Fig. 1F).

aforementioned websites from 2006 to 2017 and the measurement of rumor diffusion). When we analyzed the diffusion dynamics of

used optical character recognition to extract text As a rumor is retweeted, the depth, size, max- true and false rumors, we found that falsehood

from images where needed. For each reply tweet, imum breadth, and structural virality of the cas- diffused significantly farther, faster, deeper, and

we extracted the original tweet being replied to cade increase (Fig. 1A). A greater fraction of false more broadly than the truth in all categories of

and all the retweets of the original tweet. Each rumors experienced between 1 and 1000 cascades, information [Kolmogorov-Smirnov (K-S) tests are

retweet cascade represents a rumor propagating whereas a greater fraction of true rumors experi- reported in tables S3 to S10]. A significantly greater

on Twitter that has been verified as true or false enced more than 1000 cascades (Fig. 1B); this was fraction of false cascades than true cascades

by the fact-checking organizations (see the sup- also true for rumors based on political news (Fig. exceeded a depth of 10, and the top 0.01% of false

plementary materials for more details on cascade 1D). The total number of false rumors peaked at cascades diffused eight hops deeper into the

Downloaded from http://science.sciencemag.org/ on March 10, 2018

construction). We then quantified the cascades’ the end of both 2013 and 2015 and again at the Twittersphere than the truth, diffusing to depths

Fig. 1. Rumor cascades. (A) An example rumor cascade collected by our that diffused on Twitter between 2006 and 2017, annotated with example rumors

method as well as its depth, size, maximum breadth, and structural virality over in each category. (D) The CCDFs of true, false, and mixed political cascades.

time. “Nodes” are users. (B) The complementary cumulative distribution (E) Quarterly counts of all true, false, and mixed political rumor cascades that

functions (CCDFs) of true, false, and mixed (partially true and partially false) diffused on Twitter between 2006 and 2017, annotated with example rumors in

cascades, measuring the fraction of rumors that exhibit a given number of each category. (F) A histogram of the total number of rumor cascades in our

cascades. (C) Quarterly counts of all true, false, and mixed rumor cascades data across the seven most frequent topical categories.

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 2 of 6

R ES E A RC H | R E PO R T

Fig. 2. Complementary cumulative distribution functions (CCDFs) of users reached at every depth and (H) the mean breadth of true and

Downloaded from http://science.sciencemag.org/ on March 10, 2018

true and false rumor cascades. (A) Depth. (B) Size. (C) Maximum false rumor cascades at every depth. In (H), plot is lognormal. Standard

breadth. (D) Structural virality. (E and F) The number of minutes it errors were clustered at the rumor level (i.e., cascades belonging to

takes for true and false rumor cascades to reach any (E) depth and (F) the same rumor were clustered together; see supplementary materials

number of unique Twitter users. (G) The number of unique Twitter for additional details).

Fig. 3. Complementary cumulative distribution functions (CCDFs) of any (E) depth and (F) number of unique Twitter users. (G) The number

false political and other types of rumor cascades. (A) Depth. (B) Size. of unique Twitter users reached at every depth and (H) the mean breadth

(C) Maximum breadth. (D) Structural virality. (E and F) The number of of these false rumor cascades at every depth. In (H), plot is lognormal.

minutes it takes for false political and other false news cascades to reach Standard errors were clustered at the rumor level.

greater than 19 hops from the origin tweet (Fig. It took the truth about six times as long as litical news also diffused deeper more quickly

2A). Falsehood also reached far more people than falsehood to reach 1500 people (Fig. 2F) and (Fig. 3E) and reached more than 20,000 people

the truth. Whereas the truth rarely diffused to 20 times as long as falsehood to reach a cascade nearly three times faster than all other types of

more than 1000 people, the top 1% of false-news depth of 10 (Fig. 2E). As the truth never diffused false news reached 10,000 people (Fig. 3F). Al-

cascades routinely diffused to between 1000 and beyond a depth of 10, we saw that falsehood though the other categories of false news reached

100,000 people (Fig. 2B). Falsehood reached more reached a depth of 19 nearly 10 times faster than about the same number of unique users at depths

people at every depth of a cascade than the truth, the truth reached a depth of 10 (Fig. 2E). Falsehood between 1 and 10, false political news routinely

meaning that many more people retweeted false- also diffused significantly more broadly (Fig. 2H) reached the most unique users at depths greater

hood than they did the truth (Fig. 2C). The spread and was retweeted by more unique users than the than 10 (Fig. 3G). Although all other categories

of falsehood was aided by its virality, meaning truth at every cascade depth (Fig. 2G). of false news traveled slightly more broadly at

that falsehood did not simply spread through False political news (Fig. 1D) traveled deeper shallower depths, false political news traveled

broadcast dynamics but rather through peer-to- (Fig. 3A) and more broadly (Fig. 3C), reached more more broadly at greater depths, indicating that

peer diffusion characterized by a viral branching people (Fig. 3B), and was more viral than any other more-popular false political news items exhibited

process (Fig. 2D). category of false information (Fig. 3D). False po- broader and more-accelerated diffusion dynamics

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 3 of 6

R ES E A RC H | R E PO R T

Downloaded from http://science.sciencemag.org/ on March 10, 2018

Fig. 4. Models estimating correlates of news diffusion, the novelty of content of replies to true (green) and false (red) rumor tweets across

true and false news, and the emotional content of replies to news. seven dimensions categorized by the NRC. (E) Mean and variance

(A) Descriptive statistics on users who participated in true and false rumor of the IU, KL, and BD of true and false rumor tweets compared to the

cascades as well as K-S tests of the differences in the distributions of these corpus of prior tweets the user has seen in the 60 days before seeing the

measures across true and false rumor cascades. (B) Results of a logistic rumor tweet as well as K-S tests of their differences across true and false

regression model estimating users’ likelihood of retweeting a rumor as a rumors. (F) Mean and variance of the emotional content of replies to

function of variables shown at the left. coeff, logit coefficient; z, z score. true and false rumor tweets across seven dimensions categorized

(C) Differences in the information uniqueness (IU), scaled Bhattacharyya by the NRC as well as K-S tests of their differences across true and

distance (BD), and K-L divergence (KL) of true (green) and false (red) false rumors. All standard errors are clustered at the rumor level,

rumor tweets compared to the corpus of prior tweets the user was exposed and all models are estimated with cluster-robust standard errors at

to in the 60 days before retweeting the rumor tweet. (D) The emotional the rumor level.

(Fig. 3H). Analysis of all news categories showed diffused farther and faster than the truth despite cial status on one that is “in the know” or has

that news about politics, urban legends, and science these differences, not because of them. access to unique “inside” information (26)]. We

spread to the most people, whereas news about When we estimated a model of the likelihood therefore tested whether falsity was more novel

politics and urban legends spread the fastest of retweeting, we found that falsehoods were than the truth and whether Twitter users were

and were the most viral in terms of their struc- 70% more likely to be retweeted than the truth more likely to retweet information that was

tural virality (see fig. S11 for detailed comparisons (Wald chi-square test, P ~ 0.0), even when con- more novel.

across all topics). trolling for the account age, activity level, and To assess novelty, we randomly selected ~5000

One might suspect that structural elements of number of followers and followees of the origi- users who propagated true and false rumors and

the network or individual characteristics of the nal tweeter, as well as whether the original tweet- extracted a random sample of ~25,000 tweets

users involved in the cascades explain why falsity er was a verified user (Fig. 4B). Because user that they were exposed to in the 60 days prior

travels with greater velocity than the truth. Per- characteristics and network structure could not to their decision to retweet a rumor. We then

haps those who spread falsity “followed” more explain the differential diffusion of truth and specified a latent Dirichlet Allocation Topic model

people, had more followers, tweeted more often, falsity, we sought alternative explanations for (27), with 200 topics and trained on 10 million

were more often “verified” users, or had been on the differences in their diffusion dynamics. English-language tweets, to calculate the in-

Twitter longer. But when we compared users in- One alternative explanation emerges from in- formation distance between the rumor tweets

volved in true and false rumor cascades, we formation theory and Bayesian decision theory. and all the prior tweets that users were exposed

found that the opposite was true in every case. Novelty attracts human attention (24), con- to before retweeting the rumor tweets. This

Users who spread false news had significant- tributes to productive decision-making (25), and generated a probability distribution over the

ly fewer followers (K-S test = 0.104, P ~ 0.0), encourages information sharing (26) because 200 topics for each tweet in our data set. We then

followed significantly fewer people (K-S test = novelty updates our understanding of the world. measured how novel the information in the true

0.136, P ~ 0.0), were significantly less active on When information is novel, it is not only surpris- and false rumors was by comparing the topic

Twitter (K-S test = 0.054, P ~ 0.0), were verified ing, but also more valuable, both from an infor- distributions of the rumor tweets with the topic

significantly less often (K-S test = 0.004, P < 0.001), mation theoretic perspective [in that it provides distributions of the tweets to which users were

and had been on Twitter for significantly less time the greatest aid to decision-making (25)] and exposed in the 60 days before their retweet. We

(K-S test = 0.125, P ~ 0.0) (Fig. 4A). Falsehood from a social perspective [in that it conveys so- found that false rumors were significantly more

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 4 of 6

R ES E A RC H | R E PO R T

novel than the truth across all novelty metrics, Second, a selection bias may arise from the news spreads farther, faster, deeper, and more

displaying significantly higher information restriction of our sample to tweets fact checked broadly than the truth because humans, not ro-

uniqueness (K-S test = 0.457, P ~ 0.0) (28), by the six organizations we relied on. Fact checking bots, are more likely to spread it.

Kullback-Leibler (K-L) divergence (K-S test = may select certain types of rumors or draw addi- Finally, more research on the behavioral ex-

0.433, P ~ 0.0) (29), and Bhattacharyya distance tional attention to them. To validate the robust- planations of differences in the diffusion of true

(K-S test = 0.415, P ~ 0.0) (which is similar to the ness of our analysis to this selection and the and false news is clearly warranted. In par-

Hellinger distance) (30). The last two metrics generalizability of our results to all true and false ticular, more robust identification of the factors

measure differences between probability distri- rumor cascades, we independently verified a sec- of human judgment that drive the spread of true

butions representing the topical content of the ond sample of rumor cascades that were not ver- and false news online requires more direct inter-

incoming tweet and the corpus of previous tweets ified by any fact-checking organization. These action with users through interviews, surveys, lab

to which users were exposed. rumors were fact checked by three undergrad- experiments, and even neuroimaging. We encour-

Although false rumors were measurably more uate students at Massachusetts Institute of Tech- age these and other approaches to the investiga-

novel than true rumors, users may not have per- nology (MIT) and Wellesley College. We trained tion of the factors of human judgment that drive

ceived them as such. We therefore assessed users’ the students to detect and investigate rumors with the spread of true and false news in future work.

perceptions of the information contained in true our automated rumor-detection algorithm run- False news can drive the misallocation of re-

and false rumors by comparing the emotional ning on 3 million English-language tweets from sources during terror attacks and natural disas-

content of replies to true and false rumors. We 2016 (34). The undergraduate annotators inves- ters, the misalignment of business investments,

categorized the emotion in the replies by using tigated the veracity of the detected rumors using and misinformed elections. Unfortunately, although

the leading lexicon curated by the National Re- simple search queries on the web. We asked them the amount of false news online is clearly in-

search Council Canada (NRC), which provides a to label the rumors as true, false, or mixed on the creasing (Fig. 1, C and E), the scientific under-

comprehensive list of ~140,000 English words basis of their research and to discard all rumors standing of how and why false news spreads is

Downloaded from http://science.sciencemag.org/ on March 10, 2018

and their associations with eight emotions based previously investigated by one of the fact-checking currently based on ad hoc rather than large-scale

on Plutchik’s (31) work on basic emotion—anger, organizations. The annotators, who worked in- systematic analyses. Our analysis of all the ver-

fear, anticipation, trust, surprise, sadness, joy, dependently and were not aware of one another, ified true and false rumors that spread on Twitter

and disgust (32)—and a list of ~32,000 Twitter agreed on the veracity of 90% of the 13,240 rumor confirms that false news spreads more pervasively

hashtags and their weighted associations with cascades that they investigated and achieved a than the truth online. It also overturns conven-

the same emotions (33). We removed stop words Fleiss’ kappa of 0.88. When we compared the tional wisdom about how false news spreads.

and URLs from the reply tweets and calculated diffusion dynamics of the true and false rumors Though one might expect network structure

the fraction of words in the tweets that related to that the annotators agreed on, we found results and individual characteristics of spreaders to

each of the eight emotions, creating a vector of nearly identical to those estimated with our favor and promote false news, the opposite is

emotion weights for each reply that summed to main data set (see fig. S17). False rumors in the true. The greater likelihood of people to re-

one across the emotions. We found that false robustness data set had greater depth (K-S test = tweet falsity more than the truth is what drives

rumors inspired replies expressing greater sur- 0.139, P ~ 0.0), size (K-S test = 0.131, P ~ 0.0), max- the spread of false news, despite network and

prise (K-S test = 0.205, P ~ 0.0), corroborating the imum breadth (K-S test = 0.139, P ~ 0.0), structural individual factors that favor the truth. Further-

novelty hypothesis, and greater disgust (K-S test = virality (K-S test = 0.066, P ~ 0.0), and speed more, although recent testimony before con-

0.102, P ~ 0.0), whereas the truth inspired replies (fig. S17) and a greater number of unique users gressional committees on misinformation in the

that expressed greater sadness (K-S test = 0.037, at each depth (fig. S17). When we broadened the United States has focused on the role of bots in

P ~ 0.0), anticipation (K-S test = 0.038, P ~ 0.0), analysis to include majority-rule labeling, rather spreading false news (36), we conclude that

joy (K-S test = 0.061, P ~ 0.0), and trust (K-S test = than unanimity, we again found the same results human behavior contributes more to the differ-

0.060, P ~ 0.0) (Fig. 4, D and F). The emotions (see supplementary materials for results using ential spread of falsity and truth than automated

expressed in reply to falsehoods may illuminate majority-rule labeling). robots do. This implies that misinformation-

additional factors, beyond novelty, that inspire Third, although the differential diffusion of containment policies should also emphasize be-

people to share false news. Although we cannot truth and falsity is interesting with or without havioral interventions, like labeling and incentives

claim that novelty causes retweets or that novel- robot, or bot, activity, one may worry that our to dissuade the spread of misinformation, rather

ty is the only reason why false news is retweeted conclusions about human judgment may be than focusing exclusively on curtailing bots. Un-

more often, we do find that false news is more biased by the presence of bots in our analysis. derstanding how false news spreads is the first

novel and that novel information is more likely We therefore used a sophisticated bot-detection step toward containing it. We hope our work in-

to be retweeted. algorithm (35) to identify and remove all bots spires more large-scale research into the causes

Numerous diagnostic statistics and manipula- before running the analysis. When we added and consequences of the spread of false news as

tion checks validated our results and confirmed bot traffic back into the analysis, we found that well as its potential cures.

their robustness. First, as there were multiple none of our main conclusions changed—false

cascades for every true and false rumor, the var- news still spread farther, faster, deeper, and more

REFERENCES AND NOTES

iance of and error terms associated with cascades broadly than the truth in all categories of infor-

1. L. J. Savage, J. Am. Stat. Assoc. 46, 55–67 (1951).

corresponding to the same rumor will be cor- mation. The results remained the same when we

2. H. A. Simon, The New Science of Management Decision (Harper

related. We therefore specified cluster-robust removed all tweet cascades started by bots, includ- & Brothers Publishers, New York, 1960).

standard errors and calculated all variance statis- ing human retweets of original bot tweets (see 3. R. Wedgwood, Noûs 36, 267–297 (2002).

tics clustered at the rumor level. We tested the supplementary materials, section S8.3) and when 4. E. Fehr, U. Fischbacher, Nature 425, 785–791 (2003).

robustness of our findings to this specification we used a second, independent bot-detection 5. C. E. Shannon, Bell Syst. Tech. J. 27, 379–423 (1948).

by comparing analyses with and without clustered algorithm (see supplementary materials, sec- 6. S. Bikhchandani, D. Hirshleifer, I. Welch, J. Polit. Econ. 100,

992–1026 (1992).

errors and found that, although clustering reduced tion S8.3.5) and varied the algorithm’s sensitivity

7. K. Rapoza, “Can ‘fake news’ impact the stock market?” Forbes,

the precision of our estimates as expected, the threshold to verify the robustness of our analy- 26 February 2017; www.forbes.com/sites/kenrapoza/2017/

directions, magnitudes, and significance of our sis (see supplementary materials, section S8.3.4). 02/26/can-fake-news-impact-the-stock-market/.

results did not change, and chi-square (P ~ 0.0) Although the inclusion of bots, as measured by 8. M. Mendoza, B. Poblete, C. Castillo, in Proceedings of the First

and deviance (d) goodness-of-fit tests (d = 3.4649 × the two state-of-the-art bot-detection algorithms Workshop on Social Media Analytics (Association for Computing

Machinery, ACM, 2010), pp. 71–79.

10–6, P ~ 1.0) indicate that the models are well we used in our analysis, accelerated the spread 9. A. Gupta, H. Lamba, P. Kumaraguru, A. Joshi, in Proceedings

specified (see supplementary materials for more of both true and false news, it affected their of the 22nd International Conference on World Wide Web

detail). spread roughly equally. This suggests that false (ACM, 2010), pp. 729–736.

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 5 of 6

R ES E A RC H | R E PO R T

10. K. Starbird, J. Maddock, M. Orand, P. Achterman, R. M. Mason, for false rumors. Although these results are important, they Research Institute and Senior Fellow at the Center for Cyber

in iConference 2014 Proceedings (iSchools, 2014). are not definitive owing to the smaller sample size of the study; and Homeland Security at George Washington University—

11. J. Gottfried, E. Shearer, “News use across social media platforms,” the early timing of the sample, which misses the rise of false given during the U.S. Senate Select Committee on Intelligence

Pew Research Center, 26 May 2016; www.journalism.org/ news after 2013; and the fact that more shares per rumor do not hearing on “Disinformation: A Primer in Russian Active

2016/05/26/news-use-across-social-media-platforms-2016/. necessarily equate to deeper, broader, or more rapid diffusion. Measures and Influence Campaigns” on 30 March 2017;

12. C. Silverman, “This analysis shows how viral fake election news 23. S. Goel, A. Anderson, J. Hofman, D. J. Watts, Manage. Sci. 62, www.intelligence.senate.gov/sites/default/files/documents/

stories outperformed real news on Facebook,” BuzzFeed News, 180–196 (2015). os-cwatts-033017.pdf.

16 November 2016; www.buzzfeed.com/craigsilverman/viral- 24. L. Itti, P. Baldi, Vision Res. 49, 1295–1306 (2009).

fake-election-news-outperformed-real-news-on-facebook/. AC KNOWLED GME NTS

25. S. Aral, M. Van Alstyne, Am. J. Sociol. 117, 90–171 (2011).

13. M. De Domenico, A. Lima, P. Mougel, M. Musolesi, Sci. Rep. 3, We are indebted to Twitter for providing funding and access to

26. J. Berger, K. L. Milkman, J. Mark. Res. 49, 192–205 (2012).

2980 (2013). the data. We are also grateful to members of the MIT research

14. O. Oh, K. H. Kwon, H. R. Rao, in Proceedings of the International 27. D. M. Blei, A. Y. Ng, M. I. Jordan, J. Mach. Learn. Res. 3,

993–1022 (2003). community for invaluable discussions. The research was approved

Conference on Information Systems (International Conference

by the MIT institutional review board. The analysis code is freely

on Information Systems, ICIS, paper 231, 2010). 28. S. Aral, P. Dhillon, “Unpacking novelty: The anatomy of vision

available at https://goo.gl/forms/AKIlZujpexhN7fY33. The entire

15. M. Tambuscio, G. Ruffo, A. Flammini, F. Menczer, in Proceedings advantages,” Working paper, MIT–Sloan School of

data set is also available, from the same link, upon signing an

of the 24th International Conference on World Wide Web Management, Cambridge, MA, 22 June 2016; https://papers.

access agreement stating that (i) you shall only use the data set

(ACM, 2015), pp. 977–982. ssrn.com/sol3/papers.cfm?abstract_id=2388254.

for the purpose of validating the results of the MIT study and for no

16. Z. Zhao, P. Resnick, Q. Mei, in Proceedings of the 24th 29. T. M. Cover, J. A. Thomas, Elements of Information Theory

other purpose; (ii) you shall not attempt to identify, reidentify, or

International Conference on World Wide Web (ACM, 2015), (Wiley, 2012).

otherwise deanonymize the data set; and (iii) you shall not further

pp. 1395–1405. 30. T. Kailath, IEEE Trans. Commun. Technol. 15, 52–60

17. M. Gupta, P. Zhao, J. Han, in Proceedings of the 2012 share, distribute, publish, or otherwise disseminate the data set.

(1967).

Society for Industrial and Applied Mathematics International Those who wish to use the data for any other purposes can contact

31. R. Plutchik, Am. Sci. 89, 344–350 (2001). and make a separate agreement with Twitter.

Conference on Data Mining (Society for Industrial and Applied 32. S. M. Mohammad, P. D. Turney, Comput. Intell. 29, 436–465

Mathematics, SIAM, 2012), pp. 153–164. (2013).

18. G. L. Ciampaglia et al., PLOS ONE 10, e0128193 (2015).

19. A. Friggeri, L. A. Adamic, D. Eckles, J. Cheng, in Proceedings of 33. S. M. Mohammad, S. Kiritchenko, Comput. Intell. 31, 301–326 SUPPLEMENTARY MATERIALS

the International Conference on Weblogs and Social Media (2015).

www.sciencemag.org/content/359/6380/1146/suppl/DC1

Downloaded from http://science.sciencemag.org/ on March 10, 2018

(Association for the Advancement of Artificial Intelligence, 34. S. Vosoughi, D. Roy, in Proceedings of the 10th International

Materials and Methods

AAAI, 2014) AAAI Conference on Weblogs and Social Media (AAAI, 2016),

Figs. S1 to S20

20. M. Del Vicario et al., Proc. Natl. Acad. Sci. U.S.A. 113, 554–559 pp. 707–710.

Tables S1 to S39

(2016). 35. C. A. Davis, O. Varol, E. Ferrara, A. Flammini, F. Menczer, in

References (37–75)

21. A. Bessi et al., PLOS ONE 10, e0118093 (2015). Proceedings of the 25th International Conference Companion on

22. Friggeri et al. (19) do evaluate two metrics of diffusion: depth, World Wide Web (ACM, 2016), pp. 273–274.

which shows little difference between true and false rumors, 36. For example, this is an argument made in recent testimony by 14 September 2017; accepted 19 January 2018

and shares per rumor, which is higher for true rumors than it is Clint Watts—Robert A. Fox Fellow at the Foreign Policy 10.1126/science.aap9559

Vosoughi et al., Science 359, 1146–1151 (2018) 9 March 2018 6 of 6

The spread of true and false news online

Soroush Vosoughi, Deb Roy and Sinan Aral

Science 359 (6380), 1146-1151.

DOI: 10.1126/science.aap9559

Lies spread faster than the truth

There is worldwide concern over false news and the possibility that it can influence political, economic, and social

well-being. To understand how false news spreads, Vosoughi et al. used a data set of rumor cascades on Twitter from

2006 to 2017. About 126,000 rumors were spread by ∼3 million people. False news reached more people than the truth;

the top 1% of false news cascades diffused to between 1000 and 100,000 people, whereas the truth rarely diffused to

Downloaded from http://science.sciencemag.org/ on March 10, 2018

more than 1000 people. Falsehood also diffused faster than the truth. The degree of novelty and the emotional reactions

of recipients may be responsible for the differences observed.

Science, this issue p. 1146

ARTICLE TOOLS http://science.sciencemag.org/content/359/6380/1146

SUPPLEMENTARY http://science.sciencemag.org/content/suppl/2018/03/07/359.6380.1146.DC1

MATERIALS

RELATED http://science.sciencemag.org/content/sci/359/6380/1094.full

CONTENT

REFERENCES This article cites 32 articles, 1 of which you can access for free

http://science.sciencemag.org/content/359/6380/1146#BIBL

PERMISSIONS http://www.sciencemag.org/help/reprints-and-permissions

Use of this article is subject to the Terms of Service

Science (print ISSN 0036-8075; online ISSN 1095-9203) is published by the American Association for the Advancement of

Science, 1200 New York Avenue NW, Washington, DC 20005. 2017 © The Authors, some rights reserved; exclusive

licensee American Association for the Advancement of Science. No claim to original U.S. Government Works. The title

Science is a registered trademark of AAAS.

You might also like

- Forna (Force-Directed RNA) : Simple and Effective Online RNA Secondary Structure DiagramsDocument3 pagesForna (Force-Directed RNA) : Simple and Effective Online RNA Secondary Structure DiagramsAndaNo ratings yet

- Bak-Clafer Unifying Class-2014 PDFDocument35 pagesBak-Clafer Unifying Class-2014 PDFAndaNo ratings yet

- Nielsen Genetic Circuit Design 2016Document13 pagesNielsen Genetic Circuit Design 2016AndaNo ratings yet

- Karr A Whole Cell Computational Model 2012Document25 pagesKarr A Whole Cell Computational Model 2012AndaNo ratings yet

- Cardinale-Contextualizing Context For Synthetic-2012Document11 pagesCardinale-Contextualizing Context For Synthetic-2012AndaNo ratings yet

- Dalchau Computational Design of Reaction Diffusion 2014Document16 pagesDalchau Computational Design of Reaction Diffusion 2014AndaNo ratings yet

- Johnsen Integrating Deployment Architectures 2015Document25 pagesJohnsen Integrating Deployment Architectures 2015AndaNo ratings yet

- Pedersen Towards Programming Languages 2009Document14 pagesPedersen Towards Programming Languages 2009AndaNo ratings yet

- The Yellow House: A Memoir (2019 National Book Award Winner)From EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Rating: 4 out of 5 stars4/5 (98)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceFrom EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceRating: 4 out of 5 stars4/5 (895)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeFrom EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeRating: 4 out of 5 stars4/5 (5794)

- The Little Book of Hygge: Danish Secrets to Happy LivingFrom EverandThe Little Book of Hygge: Danish Secrets to Happy LivingRating: 3.5 out of 5 stars3.5/5 (400)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaFrom EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaRating: 4.5 out of 5 stars4.5/5 (266)

- Shoe Dog: A Memoir by the Creator of NikeFrom EverandShoe Dog: A Memoir by the Creator of NikeRating: 4.5 out of 5 stars4.5/5 (537)

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureFrom EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureRating: 4.5 out of 5 stars4.5/5 (474)

- Never Split the Difference: Negotiating As If Your Life Depended On ItFrom EverandNever Split the Difference: Negotiating As If Your Life Depended On ItRating: 4.5 out of 5 stars4.5/5 (838)

- Grit: The Power of Passion and PerseveranceFrom EverandGrit: The Power of Passion and PerseveranceRating: 4 out of 5 stars4/5 (588)

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryFrom EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryRating: 3.5 out of 5 stars3.5/5 (231)

- The Emperor of All Maladies: A Biography of CancerFrom EverandThe Emperor of All Maladies: A Biography of CancerRating: 4.5 out of 5 stars4.5/5 (271)

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyFrom EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyRating: 3.5 out of 5 stars3.5/5 (2259)

- On Fire: The (Burning) Case for a Green New DealFrom EverandOn Fire: The (Burning) Case for a Green New DealRating: 4 out of 5 stars4/5 (73)

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersFrom EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersRating: 4.5 out of 5 stars4.5/5 (344)

- Team of Rivals: The Political Genius of Abraham LincolnFrom EverandTeam of Rivals: The Political Genius of Abraham LincolnRating: 4.5 out of 5 stars4.5/5 (234)

- The Unwinding: An Inner History of the New AmericaFrom EverandThe Unwinding: An Inner History of the New AmericaRating: 4 out of 5 stars4/5 (45)

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreFrom EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreRating: 4 out of 5 stars4/5 (1090)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)From EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Rating: 4.5 out of 5 stars4.5/5 (121)

- Her Body and Other Parties: StoriesFrom EverandHer Body and Other Parties: StoriesRating: 4 out of 5 stars4/5 (821)

- Ebook Stackoverflow For ItextDocument336 pagesEbook Stackoverflow For ItextAnonymous cZTeTlkag9No ratings yet

- Department of Education: Republic of The PhilippinesDocument1 pageDepartment of Education: Republic of The PhilippinesKlaribelle VillaceranNo ratings yet

- 5G NR Essentials Guide From IntelefyDocument15 pages5G NR Essentials Guide From IntelefyUzair KhanNo ratings yet

- Catch Up RPHDocument6 pagesCatch Up RPHபிரதீபன் இராதேNo ratings yet

- Eaai S 23 02045 PDFDocument28 pagesEaai S 23 02045 PDFAnjali JainNo ratings yet

- Matrices and Vectors. - . in A Nutshell: AT Patera, M Yano October 9, 2014Document19 pagesMatrices and Vectors. - . in A Nutshell: AT Patera, M Yano October 9, 2014navigareeNo ratings yet

- DXFtoGerberConversionGuide Rev2!12!10 13Document8 pagesDXFtoGerberConversionGuide Rev2!12!10 13Tomasz BarwińskiNo ratings yet

- Operator'S Manual PM20X-X-X-BXX: 2" Diaphragm PumpDocument12 pagesOperator'S Manual PM20X-X-X-BXX: 2" Diaphragm PumpOmar TadeoNo ratings yet

- Poka-Yoke or Mistake Proofing: Historical Evolution.Document5 pagesPoka-Yoke or Mistake Proofing: Historical Evolution.Harris ChackoNo ratings yet

- Chalcedony Value, Price, and Jewelry Information - International Gem SocietyDocument8 pagesChalcedony Value, Price, and Jewelry Information - International Gem Societyasset68No ratings yet

- Ce Licensure Examination Problems Rectilinear Translation 6Document2 pagesCe Licensure Examination Problems Rectilinear Translation 6Ginto AquinoNo ratings yet

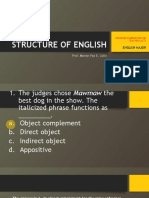

- LET-English-Structure of English-ExamDocument57 pagesLET-English-Structure of English-ExamMarian Paz E Callo80% (5)

- TRAVEL POLICY CARLO URRIZA OLIVAR Standard Insurance Co. Inc - Travel Protect - Print CertificateDocument4 pagesTRAVEL POLICY CARLO URRIZA OLIVAR Standard Insurance Co. Inc - Travel Protect - Print CertificateCarlo OlivarNo ratings yet

- Partnership LiquidationDocument46 pagesPartnership LiquidationSewale Abate79% (19)

- CO 101 Introductory Computing CO 102 Computing LabDocument17 pagesCO 101 Introductory Computing CO 102 Computing Labadityabaid4No ratings yet

- Manual TV Hyundai HYLED3239iNTMDocument40 pagesManual TV Hyundai HYLED3239iNTMReinaldo TorresNo ratings yet

- Adding and Subtracting FractionsDocument4 pagesAdding and Subtracting Fractionsapi-508898016No ratings yet

- Mini Project A-9-1Document12 pagesMini Project A-9-1santhoshrao19No ratings yet

- Newcastle University Dissertation FormatDocument6 pagesNewcastle University Dissertation FormatWriteMyEnglishPaperForMeSterlingHeights100% (1)

- Introduction of ProtozoaDocument31 pagesIntroduction of ProtozoaEINSTEIN2D100% (2)

- Iroquois Clothes and WampumDocument3 pagesIroquois Clothes and Wampumapi-254323856No ratings yet

- Teambinder Product BrochureDocument7 pagesTeambinder Product BrochurePrinceNo ratings yet

- Phenomenology of The SelfDocument5 pagesPhenomenology of The SelfGuilherme CastelucciNo ratings yet

- Paper 4 Material Management Question BankDocument3 pagesPaper 4 Material Management Question BankDr. Rakshit Solanki100% (2)

- Tutorial 6Document3 pagesTutorial 6Lai Qing YaoNo ratings yet

- Characteristics of Trochoids and Their Application To Determining Gear Teeth Fillet ShapesDocument14 pagesCharacteristics of Trochoids and Their Application To Determining Gear Teeth Fillet ShapesJohn FelemegkasNo ratings yet

- Multi-Media Approach To Teaching-LearningDocument8 pagesMulti-Media Approach To Teaching-LearningswethashakiNo ratings yet

- Alto Hotel Melbourne GreenDocument2 pagesAlto Hotel Melbourne GreenShubham GuptaNo ratings yet

- Honeywell Rondostat Hr20 SpesificationDocument2 pagesHoneywell Rondostat Hr20 Spesificationfrox123No ratings yet

- Weekly Lesson Plan: Pry 3 (8years) Third Term Week 1Document12 pagesWeekly Lesson Plan: Pry 3 (8years) Third Term Week 1Kunbi Santos-ArinzeNo ratings yet