PUBLIC

2018-06-07

SAP Cloud Platform

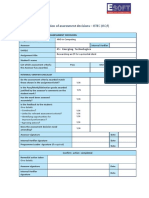

Content

1 What is SAP Cloud Platform. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 8

1.1 Accounts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .10

User Accounts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

Global Accounts and Subaccounts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

1.2 Environments. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 16

Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 18

When to Use Which Environment?. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 18

1.3 Regions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

Regions and API Endpoints Available for the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . 22

Regions and Hosts Available for the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

1.4 Capabilities. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

Analytics. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

Business Services. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .25

Collaboration. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

Data & Storage. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

DevOps. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 27

Integration. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 28

Internet of Things. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

Machine Learning. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 30

Mobile Services. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 30

Runtimes & Containers. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 31

Security. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 31

User Experience. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 32

1.5 Connectivity. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 32

Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .35

Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

Cloud Connector. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 253

Connectivity via Reverse Proxy. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 427

Connectivity Support. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 428

Archive: What's New for Connectivity (2017). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 429

1.6 Document Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 433

Consuming the Document Service (Java). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 435

Consume Document Service (HTML5 Applications). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 483

Managing Repositories in the Cockpit. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 484

Managing a Repository with Console Client Commands. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 487

SAP Cloud Platform

2 PUBLIC Content

Terminate an Account and Export Data. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 487

1.7 Feedback Service (Beta). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 488

Consuming the Feedback Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .489

Getting Feedback for Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 500

1.8 Gamification. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 505

Getting Started. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 506

Gamification Development Cycle. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 516

Gamification Workbench. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 518

Security. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 522

Managing Apps. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .525

Configuring Game Mechanics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 530

Integrating Gamification into a Target Application. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 564

Analyzing Gamification Concepts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 573

Case Study: Gamified Help Desk Application. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 575

1.9 Git Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 595

Managing Repositories. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 596

Working with Repositories. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 601

Security. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .605

Best Practices. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 607

Troubleshooting. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 609

1.10 Messaging Concepts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 611

SAP Enterprise Messaging Architecture. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .613

Configuring SAP Enterprise Messaging. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 615

1.11 Monitoring Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 618

Monitoring Service Response for Java Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 618

Tutorial: Implement a Dashboard Application. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 621

Tutorial: Implement a Notification Application. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 624

1.12 Remote Data Sync Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 627

Get Access to the Remote Data Sync Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 630

Provide a MobiLink Server in Your Subaccount. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 631

Develop Client-Initiated Synchronization. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 633

MobiLink Server Logs. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 640

Configure Authentication for a MobiLink Server. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 643

Connecting SQL Anywhere Tools to MobiLink Servers. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 644

Monitor the Availability of the MobiLink Server. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .647

Performance and Scalability of the MobiLink Server. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 648

About This Guide. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 650

SAP HANA Service in the Cloud Foundry Environment (Before Update). . . . . . . . . . . . . . . . . . . . . 651

SAP HANA Service in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 701

Data Protection and Privacy. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .890

Archive - Release Notes. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 891

1.13 Tools. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .897

SAP Cloud Platform SDK for Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 898

SAP Cloud Platform SDK for iOS. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 900

SAP Cloud Platform Cockpit. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 900

Eclipse Tools. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 903

SAP Web IDE. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 904

Maven Plugin. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 905

Console Client for the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 905

Cloud Foundry Command Line Interface. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 906

1.14 Product Prerequisites and Restrictions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 906

1.15 Related Information. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 908

2 Getting Started. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .909

2.1 Getting Started with a Trial Account in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . .909

Try It Out: 3 Easy Steps to Get You Started With the Cloud Foundry Environment. . . . . . . . . . . . . . 909

About Trial Accounts in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 913

Get Started with a Trial Account: Workflow in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . 914

2.2 Getting Started with a Trial Account in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 918

Try It Out: 3 Easy Steps to Get You Started With the Neo Environment. . . . . . . . . . . . . . . . . . . . . . 918

About Trial Accounts in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 924

Get Started with a Trial Account: Workflow in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . 925

2.3 Getting Started with a Customer Account: Workflow in the Cloud Foundry Environment. . . . . . . . . . . . 927

2.4 Getting Started with a Customer Account: Workflow in the Neo Environment. . . . . . . . . . . . . . . . . . . . 931

2.5 Getting a Global Account. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 935

Purchase a Customer Account. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 935

Join the Partner Program. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .935

2.6 Setting Up a Global Account. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 936

Log On to Your Global Account. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 936

Add Global Account Members. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 937

Create Subaccounts Using the Cockpit. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 938

Add Quotas to Subaccounts Using the Cockpit. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 944

Create Cloud Foundry Spaces Using the Cockpit. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 946

Configuring Your Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 951

Configuring Your Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 963

2.7 Getting Started with Business Application Subscriptions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 967

Subscribing to Business Applications in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . 968

Getting Started with Business Applications Subscriptions in the Neo Environment. . . . . . . . . . . . . 972

2.8 Tutorials. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 980

2.9 Glossary. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 983

3 Development. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .990

3.1 Applications in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 990

Developing SAP HANA in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 991

Developing Java in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 993

Developing Node.js in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1037

Developing Python in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1049

Developing SAPUI5 in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1060

Business Application Pattern. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1062

Using Services in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1066

Configure Application Router . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1075

Deploy Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1118

3.2 Applications in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1119

Using Services in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1119

Using Multiple Subaccounts for Staged Application Development. . . . . . . . . . . . . . . . . . . . . . . . . 1122

Java: Development. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1124

SAP HANA: Development. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1222

HTML5: Development. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1259

Creating Your First Cloud Application. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1287

API Documentation. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1288

3.3 Multi-Target Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1292

Multi-Target Applications for the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . 1294

Multi-Target Applications for the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1340

3.4 Business Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1438

Getting Started Tutorial. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1441

Best Practices. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1453

Core Data and Services (CDS) Language Reference. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1465

Adding Custom Logic. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1486

Localization. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1554

References. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1559

4 Extensions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1610

4.1 Basic Concepts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1612

Extension Application Front End. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1612

Extension Application Back End. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1615

4.2 Extending SAP Hybris Cloud for Customer. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1620

Create an Integration Token for SAP Hybris Cloud for Customer. . . . . . . . . . . . . . . . . . . . . . . . . .1622

4.3 Extending SAP SuccessFactors. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1624

Create an Integration Token for SAP SuccessFactors. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1627

Installing and Configuring Extension Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1628

5 Administration. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1658

5.1 Account Operations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1658

Change Global Account Display Name. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1658

Managing Subaccounts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1659

Managing Spaces. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1664

Managing Quotas. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1666

Managing Members. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1668

View Resource Consumption. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1684

Configuring and Executing End-to-End Trace Analysis. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1687

Account Termination. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1689

Configure Legal Information. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1690

5.2 Application Operations in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1691

Using Multiple Subaccounts for Staged Application Development. . . . . . . . . . . . . . . . . . . . . . . . .1691

Java: Application Operations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1693

SAP HANA: Application Operations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1731

HTML5: Application Operations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1732

Configuring Application URLs. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1743

Configuring Application Access via On-Premise Reverse Proxy. . . . . . . . . . . . . . . . . . . . . . . . . . . 1759

5.3 Virtual Machines. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1761

Manage Virtual Machines. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1764

Manage Network Communication for SAP Cloud Platform Virtual Machines. . . . . . . . . . . . . . . . . 1770

Manage Volumes. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1780

Manage Volume Snapshots. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1782

Consume an SAP HANA Database From a Virtual Machine. . . . . . . . . . . . . . . . . . . . . . . . . . . . . .1785

Virtual Machine Tutorials. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1785

Get Started: Virtual Machines API (Neo Environment). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1786

5.4 Console Client for the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1792

Using the Console Client. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1792

Verbose Mode of the Console Commands Output. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1795

Machine-Readable Command Output. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1796

Console Client Commands. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1799

Exit Codes. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2003

5.5 Cloud Foundry Command Line Interface. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2005

Download and Install the Cloud Foundry Command Line Interface. . . . . . . . . . . . . . . . . . . . . . . . 2006

CF CLI: Plug-ins. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2006

6 Security. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2031

6.1 Authorization and Trust Management. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2031

Authorization and Trust Management in the Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . 2032

Authorization and Trust Management in the Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . 2116

6.2 Platform Identity Provider. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2200

Overview. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2200

1. Create Trust with the Identity Authentication Tenant . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2201

2. Add Global Account Members from the Identity Authentication Tenant User Base. . . . . . . . . . . 2202

3. Add Subaccount Members from the Identity Authentication Tenant User Base. . . . . . . . . . . . . 2203

(Optional) 4. Configure the Identity Authentication Tenant for the Required Scenarios. . . . . . . . . .2204

Accessing the Cockpit with the Tenant User Base. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2205

Using the Console Client with the Tenant User Base. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2206

6.3 OAuth 2.0 Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2206

OAuth 2.0 Authorization Code Grant. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2208

OAuth 2.0 Client Credentials Grant. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2214

OAuth 2.0 Configuration. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2215

Principal Propagation to OAuth-Protected Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2223

6.4 Keystore Service. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2229

Keystore API. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2229

Keys and Certificates. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2231

Enabling Client Certificate Authentication. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2245

Enable Strong Encryption in Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2255

Storing Passwords. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2256

6.5 Protection from Web Attacks. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2258

Protection from Cross-Site Scripting (XSS). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2259

Protection from Cross-Site Request Forgery. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2262

6.6 Data Protection and Privacy. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2269

Glossary for Data Protection and Privacy. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2271

Change Logging and Read-Access Logging. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2272

Information Report. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2277

Erasure. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2278

Consent. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2279

7 Getting Support. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2280

7.1 Gather Support Information. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2282

7.2 Platform Updates and Notifications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2283

7.3 Operating Model. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2283

7.4 Request Extraction of Audit Logs. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2291

Cloud Foundry Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .2292

Neo Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2292

8 Archive - Release Notes for Platform Core 2017. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2293

1 What is SAP Cloud Platform

SAP Cloud Platform is an enterprise platform-as-a-service (enterprise PaaS) that provides comprehensive application development services and capabilities that let you build, extend, and integrate business applications in the cloud.

SAP Cloud Platform is SAP's innovative cloud development and deployment platform. It is supported by multiple cloud infrastructure providers and enables technologies such as the Internet of Things, machine learning, artificial intelligence, and big data, helping you achieve business agility and accelerate digital transformation across your business. SAP Cloud Platform offers different development environments, including the Cloud Foundry and Neo environments, and provides a broad choice of programming languages.

Scenarios

At its core, SAP Cloud Platform enables you to do the following:

● Extend on-premise and cloud solutions

Personalize and extend your existing applications, regardless of whether they run on-premise or in the cloud, to achieve agility and go to market faster.

● Integrate on-premise and cloud solutions

Bring together disparate systems and IT landscapes to streamline your business processes and increase

efficiency.

● Develop new cloud applications

Create new cloud applications with a small capital investment in software and hardware.

Environments

Environments constitute the actual platform-as-a-service offering of SAP Cloud Platform and allow for the development and administration of business applications. Each environment provides at least one application runtime and comes with its own domain model, user and role management logic, and tools (for example, a command-line utility). SAP Cloud Platform provides two environments: Cloud Foundry and Neo. For a detailed overview of the features and capabilities of each environment, see Environments [page 16].

Regions

You can deploy applications in different regions. Each region represents the location of a data center, the physical location (for example, Europe, US East) where applications, data, or services are hosted. These data centers are operated either by SAP or by third-party data center providers such as Amazon Web Services (AWS) or Microsoft Azure. You can optimize application performance (response time, latency) by selecting a region close to your users. For more information, see Regions [page 21] and Global Accounts: Enterprise versus Trial [page 11].


Capabilities and Services

Depending on your preferred development environment and your use cases, you can consume a set of services provided by SAP Cloud Platform. For more information, see Capabilities [page 24] and Availability of SAP Cloud Platform Services.

Integration with SAP and Non-SAP Software

SAP Cloud Platform facilitates secure integration with on-premise systems that are running software from SAP and other vendors. Using platform services such as the connectivity service, applications can establish secure connections to on-premise solutions, enabling integration scenarios with your cloud-based applications. For more information about the connectivity service, see Connectivity [page 32].

Secure Data

The comprehensive, multilevel security measures built into SAP Cloud Platform are engineered to protect your mission-critical business data and assets and to provide the necessary industry-standard compliance certifications.

Quality Certificates

Third-party certification bodies provide independent confirmation that SAP meets the requirements of international standards. You can find all certificates at https://www.sap.com/corporate/en/company/quality.html.

Free Trial

Get a free SAP Cloud Platform trial license that also gives you access to our community and all the technical resources, tutorials, blogs, and support you need. Visit the SAP Cloud Platform Developer Center at https://cloudplatform.sap.com/developers.html or the SAP Cloud Platform cockpit at https://account.hanatrial.ondemand.com/.

Related Information

Environments [page 16]

Accounts [page 10]

Regions [page 21]


Getting Started [page 909]

1.1 Accounts

Learn more about the different types of accounts on SAP Cloud Platform and how they relate to each other.

1.1.1 User Accounts

User accounts enable users to log on to SAP Cloud Platform, access subaccounts, and use services according to the permissions given to them.

There are two types of users on SAP Cloud Platform: platform users and business users. Platform users are usually developers, administrators, or operators who deploy, administer, and troubleshoot applications and services. Business users are those who use the applications that are deployed to SAP Cloud Platform.

A user account corresponds to a particular user in the SAP ID service and consists, for example, of a user ID and password. You can also integrate your own identity management systems to manage business users in both the Cloud Foundry environment and the Neo environment. However, managing platform users through your own SAP Cloud Platform Identity Authentication Service tenant is possible only in the Neo environment. For more information, see Platform Identity Provider [page 2200].

A user account can be assigned to one or more global accounts, subaccounts, and Cloud Foundry spaces. As a user, you can view a list of all global accounts, subaccounts, and Cloud Foundry spaces that are available to you, and access them using the cockpit. A user with administrative permissions can create subaccounts and Cloud Foundry spaces, add users to subaccounts and Cloud Foundry spaces, and assign roles to users for the subaccount or Cloud Foundry space in question.

1.1.2 Global Accounts and Subaccounts

Global accounts are hosted environments that represent the scope of the functionality and the level of support based on a customer or partner’s entitlement to platform resources and services.

The global account is the realization of the commercial contract with SAP. You can choose an enterprise global account or a trial global account. The type you choose determines pricing, conditions of use, resources, and services available. For more information, see Global Accounts: Enterprise versus Trial [page 11].

A global account can contain one or more subaccounts in which you deploy applications, use services, and manage your subscriptions.


1.1.2.1 Global Accounts: Enterprise versus Trial

SAP Cloud Platform provides two types of global accounts: enterprise and trial. The type you choose determines pricing, conditions of use, resources, available services, and hosts.

An enterprise account is usually associated with one SAP customer or partner and contains their purchased entitlements to platform resources and services. It groups together different subaccounts that an administrator makes available to users for deploying applications. Administrators can assign the available quotas to the different subaccounts and move them between subaccounts that belong to the same enterprise account.

A trial account lets you try out SAP Cloud Platform for free. Access is open to everyone. Trial accounts are intended for personal exploration, not for production use or team development. They allow restricted use of the platform resources and services. The trial period varies depending on the environment.

A trial account in the Cloud Foundry environment can contain multiple subaccounts. In the Neo environment, you can manage only one trial subaccount.

Whether you choose a free trial account or a paid enterprise account depends on your use case. You may want to start out with an SAP Cloud Platform trial account, which also gives you access to our community, including free technical resources such as tutorials and blogs. If you plan to use your global account in productive mode, you must purchase a paid enterprise account. Keep these differences in mind when you plan and set up your account model.

The main features of each global account type are described in the following tables:

Enterprise Accounts

Customer Account

Use case: A customer account is a global account that enables you to host productive, business-critical applications with 24/7 support. You can purchase a customer account just like any other SAP software.

Benefits: Support for productive applications.

Services available: Productive services.

Limitations: Resources according to your contract.

Registration: For more information, see https://hcp.sap.com/pricing.html. Contact us on SAP Cloud Platform or via an SAP sales representative.

Available regions: See Regions [page 21].

Partner Account

Use case: A partner account is a global account that enables you to build applications and to sell them to your customers.

Benefits:
● Includes SAP Application Development licenses that enable you to get started with scenarios across cloud and on-premise applications.
● Offers the opportunity to certify applications and receive the SAP partner logo package with usage policies.
● Lets you advertise and sell applications via the SAP Store.

Services available: Productive and beta services.

Limitations: Predefined resources according to your partner package. You can purchase additional resources if necessary.

Registration: To join the partner program, sign up at the SAP Application Development Partner Center.

Available regions: See Regions [page 21].

Trial Accounts

Cloud Foundry Environment

Use case: A trial account enables you to explore the basic functionality of the Cloud Foundry environment for 90 days. Access is open to everyone.

Benefits:
● Free of charge
● Self-service registration
● Usage possible for 30 days. You can extend the trial period to a maximum of 90 days, after which your account is automatically deleted.
The cockpit shows the time left in a free trial. Once a trial is suspended, you can still log on to it, but you won't be able to use applications or services. Between 30 and 90 days after the creation of the trial, you can renew the trial by using the Extend Free Trial button in the cockpit.
After your trial account has been deleted, you can create a new trial account in the Cloud Foundry environment or purchase an enterprise account.

Services available: Productive and beta services.

Limitations:
● One trial account for a trial user
● Subaccount creation is possible
● Trial account allows for member management
● 1 GB of memory for applications
● 2 GB of instance memory
● 20 total routes
● 20 total services
● Two configured on-premise systems with the Cloud connector
● No service level agreement with regard to the availability of the platform
● Usage of HDI containers in a shared SAP HANA database

Registration: Get a Free Trial Account in the Cloud Foundry Environment [page 910]

Available regions: See Regions [page 21].

Neo Environment

Use case: A trial account enables you to explore the basic functionality of the Neo environment for a noncommittal and unlimited period. Access is open to everyone.

Benefits:
● Free of charge
● Self-service registration
● Usage possible for an unlimited period of time
● A trial tenant database on a shared HANA MDC system that you can use for 12 hours.
Restriction
After 12 hours, it is shut down automatically to free resources (see ). If you do not use the tenant database for 7 days, it is automatically deleted.
● Multiple deployed Java applications

Services available: Productive and beta services.

Limitations:
● One trial account for a trial user
● No subaccount creation allowed
● No member management: only one user per trial account
● 1 GB of memory for applications
● 1 GB of database storage
● 1 GB of document storage
● One SAP HANA MDC tenant database
● 100 MB of memory for all Git repositories
● Two configured on-premise systems with the Cloud connector
● Cloud connector supported only for Java and HTML5 applications
● No service level agreement with regard to the availability of the platform

Registration: Get a Free Trial Account in the Neo Environment [page 919]

Available regions: See Regions [page 21].
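The Cloud Foundry trial lifecycle in the table above can be summarized as a small state function. This is a simplified sketch, not SAP's implementation: the function names are hypothetical, the `extended` flag stands in for having used the Extend Free Trial button, and it assumes an extended trial stays active up to the 90-day maximum, because the exact extension granularity is not described here.

```python
from datetime import date
from enum import Enum

# Values taken from the Cloud Foundry trial description above.
INITIAL_DAYS = 30  # usage possible for 30 days
MAX_DAYS = 90      # account deleted automatically after at most 90 days


class TrialState(Enum):
    ACTIVE = "active"        # applications and services can be used
    SUSPENDED = "suspended"  # logon possible, applications and services unusable
    DELETED = "deleted"      # a new trial account can be created


def cf_trial_state(created: date, today: date, extended: bool = False) -> TrialState:
    """Hypothetical model of a Cloud Foundry trial account's state.

    Assumption: extended=True means the trial was renewed with the
    Extend Free Trial button and stays active until the 90-day maximum.
    """
    age = (today - created).days
    if age >= MAX_DAYS:
        return TrialState.DELETED
    if age < INITIAL_DAYS or extended:
        return TrialState.ACTIVE
    return TrialState.SUSPENDED


def can_extend(created: date, today: date) -> bool:
    # Renewal is offered between 30 and 90 days after the trial was created
    # (boundary handling here is an assumption).
    return INITIAL_DAYS <= (today - created).days < MAX_DAYS
```

For example, `cf_trial_state(date(2018, 6, 1), date(2018, 7, 15))` reports a suspended trial, which `can_extend` shows is still eligible for renewal.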

1.1.2.2 Relationship Between Global Accounts and Subaccounts

A global account can group together different subaccounts that an administrator makes available to users. Administrators can assign the available quotas of a global account to its different subaccounts and move them between subaccounts that belong to the same global account.

The hierarchical structure of global accounts and subaccounts lets you define an account model that accurately fits your business and development needs. For example, if you want to set up different environments for development, testing, and productive usage, you can create a subaccount for each of these scenarios in your global account. You can also create subaccounts for different development teams or departments in your organization. Depending on the environment you work in, additional elements can be available in this hierarchy. In the Cloud Foundry environment, for example, each subaccount comprises exactly one organization, which can contain one or more spaces.

Subaccounts in a global account are independent of each other. Consider this with respect to security, member management, data migration and management, integration, and so on, when you plan your landscape and overall architecture.

Each subaccount is associated with a particular region, which is the physical location where applications, data, or services are hosted. Since the Cloud Foundry and Neo environments run in different regions, each subaccount comprises exactly one of these development environments. The specific region associated with a subaccount is relevant when you deploy applications (region host) and access the SAP Cloud Platform cockpit (cockpit URL).

The region assigned to your subaccount doesn't have to be directly related to your location. You could be located in the United States, for example, but operate your subaccount in Europe. For more information, see Regions [page 21].
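To make the account hierarchy concrete, here is a minimal Python data model of a global account, its subaccounts, and, for Cloud Foundry subaccounts, the single org and its spaces. All class and field names are hypothetical and this is not an SAP API; quota is reduced to a single integer unit purely for illustration. The model encodes the rules stated above: each subaccount has exactly one environment and region, a Cloud Foundry subaccount has exactly one org with the same name, and quota can be moved only between subaccounts of the same global account.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CloudFoundryOrg:
    name: str
    spaces: List[str] = field(default_factory=list)


@dataclass
class Subaccount:
    name: str
    environment: str  # exactly one: "cloudfoundry" or "neo"
    region: str       # technical key, e.g. "cf-eu10" or "neo-eu1"
    quota: int = 0    # hypothetical single quota unit
    org: Optional[CloudFoundryOrg] = None

    def __post_init__(self) -> None:
        # A Cloud Foundry subaccount comprises exactly one org with the same name.
        if self.environment == "cloudfoundry" and self.org is None:
            self.org = CloudFoundryOrg(name=self.name)


@dataclass
class GlobalAccount:
    name: str
    unassigned_quota: int  # entitlements not yet assigned to a subaccount
    subaccounts: Dict[str, Subaccount] = field(default_factory=dict)

    def add_subaccount(self, sub: Subaccount) -> None:
        self.subaccounts[sub.name] = sub

    def assign_quota(self, sub_name: str, amount: int) -> None:
        # Quota assigned to a subaccount comes from the global account.
        if amount > self.unassigned_quota:
            raise ValueError("not enough unassigned quota in the global account")
        self.unassigned_quota -= amount
        self.subaccounts[sub_name].quota += amount

    def move_quota(self, source: str, target: str, amount: int) -> None:
        # Quota can only move between subaccounts of the same global account.
        if source not in self.subaccounts or target not in self.subaccounts:
            raise KeyError("both subaccounts must belong to this global account")
        if amount > self.subaccounts[source].quota:
            raise ValueError("source subaccount does not have enough quota")
        self.subaccounts[source].quota -= amount
        self.subaccounts[target].quota += amount
```

A development, test, and production split would then be three `Subaccount` objects in one `GlobalAccount`, each possibly in a different region.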


You can enable a subaccount to use beta features, including services and applications, which SAP occasionally makes available for SAP Cloud Platform. This option is not selected by default and is available only to administrators of your enterprise account.

Caution

You should not use SAP Cloud Platform beta features in subaccounts that belong to productive enterprise accounts. Any use of beta functionality is at the customer's own risk, and SAP shall not be liable for errors or damages caused by the use of beta features.

For more information, see Using Beta Features in Subaccounts [page 16].

Cloud Foundry Environment Versus Neo Environment

For enterprise global accounts, you can create multiple subaccounts in either the Cloud Foundry or the Neo environment.

Subaccounts in Enterprise Global Accounts

Every trial user must have a subaccount in the Neo environment. There is no global account associated with this trial account. For a Cloud Foundry trial, you get a trial global account in addition to your Neo trial. Within your trial global account, you can have multiple subaccounts in the Cloud Foundry environment.


Structure of a Neo Trial Subaccount

When you create a subaccount in a trial account in the Cloud Foundry environment, the system creates a Cloud Foundry org automatically.

Note

The subaccount and the org have a 1:1 relationship. They have the same name and therefore also the same navigation level in the cockpit.

Within that Cloud Foundry org, you can create spaces. Spaces enable you to further break down your account model and use services and functions in the Cloud Foundry environment.

Structure of a Cloud Foundry Trial Global Account

Related Information

Environments [page 16]


Regions [page 21]

1.1.2.3 Using Beta Features in Subaccounts

SAP may offer, and a customer may choose to accept, access to functionality, such as a service or application, that is not generally available and has not been validated and quality assured in accordance with SAP standard processes. Such functionality is defined as a beta feature.

Beta features let customers, developers, and partners test new features on SAP Cloud Platform. The beta features have the following characteristics:

● SAP may require that customers accept additional terms to use beta features.
● Beta features are released for enterprise accounts, trial accounts, or both.
● To allow the use of beta features in the subaccounts available to you in the SAP Cloud Platform cockpit, you need to set the Enable beta features option in the subaccount's details.
● No personal data may be processed by beta functionality in the context of contractual data processing without additional written agreement.

Caution

You should not use SAP Cloud Platform beta features in subaccounts that belong to productive enterprise accounts. Any use of beta functionality is at the customer's own risk, and SAP shall not be liable for errors or damages caused by the use of beta features.

Related Information

Accounts [page 10]

Regions [page 21]

Change Subaccount Details [page 1660]

Managing Quotas [page 1666]

Create Subaccounts Using the Cockpit [page 938]

1.2 Environments

SAP Cloud Platform provides different development environments, for example, the Cloud Foundry environment and the Neo environment.

The environments are open source and based on open standards. The availability of different environments provides choices for technologies, runtimes, and services when using SAP Cloud Platform, allowing for great flexibility in your development process. You can enhance SAP products, integrate business applications, and develop entirely new enterprise applications based on services and business APIs that are hosted on SAP Cloud Platform.


Each of your subaccounts is associated with a particular environment.

Related Information

Relationship Between Global Accounts and Subaccounts [page 13]

When to Use Which Environment? [page 18]

1.2.1 Cloud Foundry Environment

The Cloud Foundry environment contains the Cloud Foundry Application Runtime, which is based on the open-source application platform managed by the Cloud Foundry Foundation.

You can deploy your Cloud Foundry applications in different regions, each of which represents the location of a data center. For more information on regional availability of the Cloud Foundry environment, see Regions and API Endpoints Available for the Cloud Foundry Environment [page 22].

You can leverage a multitude of buildpacks, including community innovations and self-developed buildpacks. The environment also integrates with SAP HANA extended application services, advanced model (SAP HANA XSA). This runtime platform enables you to develop and deploy web applications, supporting multiple runtimes, programming languages, libraries, and services.

The following table shows which Cloud Foundry features are supported by the Cloud Foundry environment on SAP Cloud Platform and which aren't.

Supported Features

● Diego runtime. See https://docs.cloudfoundry.org/concepts/diego/diego-architecture.html
● SSH. See https://docs.cloudfoundry.org/devguide/deploy-apps/app-ssh-overview.html
● Docker. See https://docs.cloudfoundry.org/adminguide/docker.html
● Running Tasks. See https://docs.cloudfoundry.org/devguide/using-tasks.html
● Zipkin Tracing. See https://docs.cloudfoundry.org/adminguide/zipkin_tracing.html
● Websockets. See https://docs.cloudfoundry.org/adminguide/supporting-websockets.html
● Space-Scoped Service Brokers. See https://docs.cloudfoundry.org/services/managing-service-brokers.html
● Route Services (only user-provided and fully-brokered services). See https://docs.cloudfoundry.org/services/route-services.html

Unsupported Features

● Container-to-Container Networking. See https://docs.cloudfoundry.org/concepts/understand-cf-networking.html
● Isolation Segments. See https://docs.cloudfoundry.org/adminguide/isolation-segments.html
● TCP Routing. See https://docs.cloudfoundry.org/adminguide/enabling-tcp-routing.html
● Custom Domains. See https://docs.cloudfoundry.org/devguide/deploy-apps/routes-domains.html#domains
● Sharing Service Instances. See https://docs.cloudfoundry.org/devguide/services/sharing-instances.html

For more information about Cloud Foundry, see the official Cloud Foundry documentation at https://www.cloudfoundry.org/.


1.2.2 Neo Environment

The Neo environment lets you develop HTML5, Java, and SAP HANA extended application services (SAP HANA XS) applications. You can also use the UI Development Toolkit for HTML5 (SAPUI5) to develop rich user interfaces for modern web-based business applications.

The Neo environment also allows you to deploy solutions on SAP Cloud Platform. In the context of SAP Cloud Platform, a solution is made up of various application types and configurations created with different technologies, designed to implement a certain scenario or task flow. You can deploy solutions by using the Change and Transport System (CTS+) tool, the console client, or the SAP Cloud Platform cockpit, which also lets you monitor your solutions. The SAP multitarget application (MTA) model encompasses and describes application modules, dependencies, and interfaces in an approach that facilitates validation, orchestration, maintenance, and automation of the application throughout its life cycle.

The Neo environment lets you use virtual machines, allowing you to install and maintain your own applications in scenarios that aren't covered by the platform. A virtual machine is a virtualized hardware resource (CPU, RAM, disk space, installed OS) that blurs the line between Platform-as-a-Service and Infrastructure-as-a-Service.

You can deploy applications developed in the Neo environment to various SAP data centers around the world. For more information about regional availability of the Neo environment, see Regions and Hosts Available for the Neo Environment [page 23].

1.2.3 When to Use Which Environment?

Choose the development environment that is most suitable for your business needs.

Use Cases for the Cloud Foundry Environment

Application developers can use the Cloud Foundry environment to enhance SAP products and to integrate business applications, as well as to develop entirely new enterprise applications based on business APIs that are hosted on SAP Cloud Platform. The Cloud Foundry environment allows you to use multiple programming languages such as Java, Node.js, and community/bring-your-own language options. We recommend that you use the Cloud Foundry environment for 12-factor and/or microservice-based applications, for Internet of Things and machine learning scenarios, and for developing applications using SAP HANA extended application services, advanced model (SAP HANA XSA).

Use Cases for the Neo Environment

Neo is a feature-rich and easy-to-use development environment, allowing you to develop Java, SAP HANA XS, and HTML5 applications. We recommend that you use the Neo environment to develop HTML5 and complex Java applications and for complex integration and extension scenarios.


Comparing the Cloud Foundry and the Neo Environment

The following table provides an overview of the features, capabilities, and restrictions of each environment:

Best used for
Cloud Foundry environment: 12-factor and/or microservice-based applications and services, IoT and machine learning scenarios, and XSA applications. Allows you to use multiple programming languages such as Java, Node.js, and community/bring-your-own language options.
Neo environment: HTML5-based, SAP HANA XS, and complex Java applications.

Available regions
Cloud Foundry environment: See Regions [page 21].
Neo environment: See Regions [page 21].

Services
Cloud Foundry environment: See Capabilities [page 24].
Neo environment: See Capabilities [page 24].

Buildpacks/programming languages supported by SAP
Cloud Foundry environment: SAP-Java, Node.js.
Neo environment: Java, HTML5, SAP HANA XS.

Additional buildpacks
Cloud Foundry environment:
● Java. See https://github.com/cloudfoundry/java-buildpack
● Python. See https://github.com/cloudfoundry/python-buildpack
● Staticfile. See https://github.com/cloudfoundry/staticfile-buildpack
● Ruby. See https://github.com/cloudfoundry/ruby-buildpack
● Go. See https://github.com/cloudfoundry/go-buildpack
● PHP. See https://github.com/cloudfoundry/php-buildpack
● Binary. See https://github.com/cloudfoundry/binary-buildpack
● Bring your own buildpack. See http://docs.cloudfoundry.org/buildpacks/developing-buildpacks.html#create-buildpacks
Note
Support for additional buildpacks is limited. SAP regularly upgrades to new versions of the Cloud Foundry environment. Any fixes provided in updated versions of the buildpacks are available after the relevant upgrade. You can report issues with these buildpacks to SAP. Issues detected in the buildpacks are forwarded to the relevant community; SAP only fixes issues that are related to SAP Cloud Platform itself.
Neo environment: Not available.

Maximum memory consumption of a single application instance
Cloud Foundry environment: 4 GB
Neo environment: 16 GB

SAP HANA programming model
Cloud Foundry environment: SAP HANA extended application services, advanced model (SAP HANA XSA).
Neo environment: SAP HANA extended application services, classic model (SAP HANA XS).

Virtual machines
Cloud Foundry environment: Not available.
Neo environment: Available in selected regions.

Docker support
Cloud Foundry environment: Docker with Diego. For more information, see the Cloud Foundry environment documentation at http://docs.cloudfoundry.org/adminguide/docker.html.
Neo environment: Not available.

Certification
Cloud Foundry environment: Not available.
Neo environment: ISO 27001, SOC1 Type 2, SOC2 Type 2.

On-premise connectivity
Cloud Foundry environment: HTTP on-premise connectivity is available.
Neo environment:
● HTTP on-premise connectivity
● Service channels on-premise to cloud
● LDAP integration
● RFC on-premise connectivity

Extension development
Cloud Foundry environment: Only selected scenarios.
Neo environment: Available for SAP SuccessFactors and SAP S/4HANA.
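The per-instance memory limits in the comparison can be checked before you deploy. The following is a minimal sketch, assuming memory values are written with M/MB or G/GB suffixes (for example `512M` or `2G`, as Cloud Foundry manifests commonly use) and that 1 GB equals 1024 MB. The function names are hypothetical helpers, not part of any SAP or Cloud Foundry tool.

```python
import re

# Maximum memory of a single application instance, from the comparison above.
MAX_INSTANCE_MB = {
    "cloudfoundry": 4 * 1024,  # 4 GB
    "neo": 16 * 1024,          # 16 GB
}

# Accepts values such as "512M", "512MB", "2G", or "2GB" (case-insensitive).
_MEMORY = re.compile(r"^\s*(\d+)\s*(M|MB|G|GB)\s*$", re.IGNORECASE)


def to_megabytes(value: str) -> int:
    """Convert a memory string such as '512M' or '2G' to megabytes."""
    match = _MEMORY.match(value)
    if match is None:
        raise ValueError(f"unrecognized memory value: {value!r}")
    amount, unit = int(match.group(1)), match.group(2).upper()
    return amount * 1024 if unit.startswith("G") else amount


def fits_instance_limit(memory: str, environment: str) -> bool:
    """Return True if one instance with this memory fits the environment's limit."""
    return to_megabytes(memory) <= MAX_INSTANCE_MB[environment]
```

For example, an instance that needs 8 GB exceeds the Cloud Foundry limit but fits within the Neo limit.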

1.3 Regions

Depending on the type of your global account and the environment you're using, you can deploy applications in different regions.

Each region represents the location of a data center, the physical location (for example, Europe, US East) where applications, data, or services are hosted. Application performance (response time, latency) can be optimized by selecting a region close to the users. When deploying applications, consider that a subaccount is associated with a particular region and that this is independent of your own location. You may be located in the United States, for example, but operate your subaccount in a region in Europe.

To deploy an application in more than one region, execute the deployment separately for each host.

Both development environments, Cloud Foundry and Neo, are available in different regions. All regions that are available for the Neo environment are exclusively provided by SAP, whereas regions that are available for the Cloud Foundry environment might also be provided by third-party data center providers such as Amazon or Microsoft. These third-party data center providers operate the infrastructure layer of the regions, whereas SAP operates the platform layer and Cloud Foundry.


1.3.1 Regions and API Endpoints Available for the Cloud Foundry Environment

Regions and API Endpoints Available for the Cloud Foundry Environment

Enterprise account
● Brazil (São Paulo), running on Amazon Web Services: technical key cf-br10; API endpoint api.cf.br10.hana.ondemand.com

Enterprise and trial account
● Europe (Frankfurt), running on Amazon Web Services: technical key cf-eu10; API endpoint api.cf.eu10.hana.ondemand.com
● Europe (Netherlands), running on Microsoft Azure: technical key cf-eu20; API endpoint api.cf.eu20.hana.ondemand.com
● US East (VA), running on Amazon Web Services: technical key cf-us10; API endpoint api.cf.us10.hana.ondemand.com
● US Central (IA), running on Google Cloud Platform: technical key cf-us30; API endpoint api.cf.us30.hana.ondemand.com

Trial account
● US West (CA) Beta, running on Microsoft Azure: technical key cf-us20; API endpoint api.cf.us20.hana.ondemand.com

Caution

Some customer contracts include EU access, which means that we only use European subprocessors to access personal data in cloud services, such as when we provide support. We currently cannot guarantee EU access in the Cloud Foundry environment. If your contract includes EU access, we cannot move services to the Cloud Foundry environment without changing your contract.

Tip

To log on to the cockpit and navigate to a space in the Cloud Foundry environment, choose any of the cockpit URLs provided in the following table for the Neo environment, then choose a Cloud Foundry region.
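Because each Cloud Foundry region has its own API endpoint, deploying to several regions means targeting each endpoint in turn. The sketch below derives the endpoint from the technical key (the pattern api.cf.&lt;region&gt;.hana.ondemand.com holds for every row in the table above, and the https:// scheme is an assumption) and builds, but does not run, one `cf login -a` and `cf push` pair per region. The app name and helper names are hypothetical; in practice you would also select an org and space when logging on.

```python
from typing import Dict, List

# Technical keys from the table above, mapped to their infrastructure provider.
CF_REGIONS: Dict[str, str] = {
    "cf-br10": "Amazon Web Services",
    "cf-eu10": "Amazon Web Services",
    "cf-eu20": "Microsoft Azure",
    "cf-us10": "Amazon Web Services",
    "cf-us30": "Google Cloud Platform",
    "cf-us20": "Microsoft Azure",  # trial accounts only (beta)
}


def api_endpoint(technical_key: str) -> str:
    """Derive the API endpoint URL from a technical key such as 'cf-eu10'."""
    if technical_key not in CF_REGIONS:
        raise KeyError(f"unknown Cloud Foundry region: {technical_key}")
    region = technical_key[len("cf-"):]  # "cf-eu10" -> "eu10"
    return f"https://api.cf.{region}.hana.ondemand.com"


def deployment_commands(app_name: str, regions: List[str]) -> List[List[str]]:
    """Build a separate login-and-push sequence for each target region."""
    commands: List[List[str]] = []
    for key in regions:
        commands.append(["cf", "login", "-a", api_endpoint(key)])
        commands.append(["cf", "push", app_name])
    return commands
```

On a machine with the cf CLI installed, each command list could be passed to `subprocess.run` in order; keeping command construction separate from execution makes the per-region plan easy to review first.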


1.3.2 Regions and Hosts Available for the Neo Environment

Regions, Hosts, and IP Ranges Available for the Neo Environment

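All regions for the Neo environment are operated by SAP.

Enterprise account
● Australia (Sydney): technical key neo-ap1; host ap1.hana.ondemand.com; cockpit logon: Link; IP ranges 210.80.140.0/24 and 157.133.96.0/23
● Brazil (São Paulo): technical key neo-br1; host br1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.246.0/24
● Canada (Toronto): technical key neo-ca1; host ca1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.54.0/24
● Europe (Amsterdam): technical key neo-eu3; host eu3.hana.ondemand.com; cockpit logon: Link; IP range 157.133.141.0/24
● Europe (Rot): technical key neo-eu1; hosts hana.ondemand.com and eu1.hana.ondemand.com; cockpit logon: Link; IP range 155.56.128.0/17
● Japan (Tokyo): technical key neo-jp1; host jp1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.150.0/24
● Kingdom of Saudi Arabia (Riyadh): technical key neo-sa1; host sa1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.93.0/24
● Russia (Moscow): technical key neo-ru1; host ru1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.2.0/24
● UAE (Dubai): technical key neo-ae1; host ae1.hana.ondemand.com; cockpit logon: Link; IP range 157.133.85.0/24
● US East (Ashburn): technical key neo-us1; host us1.hana.ondemand.com; cockpit logon: Link; IP ranges 65.221.12.0/24 and 206.112.73.0/24
● US West (Chandler): technical key neo-us2; host us2.hana.ondemand.com; cockpit logon: Link; IP ranges 64.95.110.0/24 and 64.95.111.0/26
● US East (Sterling): technical key neo-us3; host us3.hana.ondemand.com; cockpit logon: Link; IP range 169.145.118.0/24

Trial account
● Europe (Rot) - Trial: technical key neo-eu1-trial; host hanatrial.ondemand.com; cockpit logon: Link; IP range 155.56.128.0/17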
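The IP ranges in the table above are given in CIDR notation, so Python's standard `ipaddress` module can test whether a given address belongs to a Neo region, for example, when checking an address that appears in your own network logs. This is a minimal sketch: the helper name is hypothetical, and the table does not state what the ranges are used for. Note that neo-eu1 and neo-eu1-trial share the same range, so the function returns a list rather than a single key.

```python
import ipaddress
from typing import Dict, List

# IP ranges per Neo technical key, copied from the table above.
NEO_IP_RANGES: Dict[str, List[str]] = {
    "neo-ap1": ["210.80.140.0/24", "157.133.96.0/23"],
    "neo-br1": ["157.133.246.0/24"],
    "neo-ca1": ["157.133.54.0/24"],
    "neo-eu3": ["157.133.141.0/24"],
    "neo-eu1": ["155.56.128.0/17"],
    "neo-jp1": ["157.133.150.0/24"],
    "neo-sa1": ["157.133.93.0/24"],
    "neo-ru1": ["157.133.2.0/24"],
    "neo-ae1": ["157.133.85.0/24"],
    "neo-us1": ["65.221.12.0/24", "206.112.73.0/24"],
    "neo-us2": ["64.95.110.0/24", "64.95.111.0/26"],
    "neo-us3": ["169.145.118.0/24"],
    "neo-eu1-trial": ["155.56.128.0/17"],
}

# Parse each CIDR string once into a network object.
_NETWORKS = {
    key: [ipaddress.ip_network(cidr) for cidr in cidrs]
    for key, cidrs in NEO_IP_RANGES.items()
}


def regions_for_ip(address: str) -> List[str]:
    """Return every Neo technical key whose IP ranges contain the address."""
    ip = ipaddress.ip_address(address)
    return [key for key, nets in _NETWORKS.items() if any(ip in net for net in nets)]
```

An address outside every listed range yields an empty list; a /26 such as 64.95.111.0/26 covers only 64.95.111.0 through 64.95.111.63.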


Related Information

Accounts [page 10]

Environments [page 16]

Availability of SAP Cloud Platform Services

1.4 Capabilities

SAP Cloud Platform provides a rich set of capabilities that group together different technical components, such as services, tools, and runtimes.

The following list gives an overview of the available capabilities. For detailed information about the regional availability of services, see Availability of SAP Cloud Platform Services.

● Analytics [page 24]

● Business Services [page 25]

● Collaboration [page 26]

● DevOps [page 27]

● Integration [page 28]

● Internet of Things [page 29]

● Mobile Services [page 30]

● Runtimes & Containers [page 31]

● Security [page 31]

● Data & Storage [page 26]

● Machine Learning [page 30]

● User Experience [page 32]

1.4.1 Analytics

Embeds advanced analytics into application solutions, empowering you to identify, combine, and manage multiple sources of data and build advanced analytics models within business applications for personalized, contextual, real-time applications. This capability includes the following services: Predictive service, SAP Analytics Cloud, SAP Smart Business, and Streaming Analytics.

● https://help.sap.com/viewer/p/PREDICTIVE_SERVICE
● https://help.sap.com/viewer/product/SAP_BusinessObjects_Cloud/release/en-US
● https://help.sap.com/viewer/352c8328eab24b80be4bf876355d340c/Cloud/en-US
● https://help.sap.com/viewer/p/Streaming_Analytics

1.4.2 Business Services

SAP Business Services allows for the fast development of business applications and services for the cloud and powers an open marketplace for new business apps, including SAP, hybris, and other third-party applications. This includes pre-packaged applications for customer service and e-commerce, micro business services, and a marketplace of services for quickly creating business-ready applications. This capability includes the following services: SAP Data Quality Management, microservices for location data; SAP RealSpend; and SAP Localization Hub, tax service.

● https://help.sap.com/viewer/d95546360fea44988eb614718ff7e959/Cloud/en-US

● https://help.sap.com/viewer/p/SAP_RealSpend

● https://help.sap.com/viewer/SLH_tax_service


1.4.3 Collaboration

Brings people together with secure access to shared business content, information, applications, and processes to drive results and increase team productivity. This capability includes the following services: Gamification, SAP Live Link 365, SAP Document Center, and SAP Jam.

● https://help.sap.com/viewer/850b6386f85d49699cfa908a5bc99d99/Cloud/en-US

● https://livelink.sapmobileservices.com/documentation/

● https://help.sap.com/viewer/p/SAP_Document_Center

● https://help.sap.com/viewer/product/SAP_JAM_COLLABORATION/en-US

1.4.4 Data & Storage

By eliminating the division between transactions and analytics, SAP HANA lets you answer any business question, anywhere, in real time. By combining spatial processing and data virtualization on the same architecture, SAP HANA simplifies and accelerates innovation with big data. With SAP Adaptive Server Enterprise (SAP ASE), customers can achieve faster, more reliable transaction processing at lower cost. SAP ASE is an affordable relational database management system designed for high-performance, transaction-based applications involving massive volumes of data and thousands of concurrent users. This capability includes the following services: Document service, SAP HANA, SAP ASE, MongoDB, Object Store, PostgreSQL, and Redis.


● https://help.sap.com/viewer/product/DOCUMENT_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/product/HANA_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/product/ASE_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/82f8a493cc6e4672a23a7e46bb11d4fa/Cloud/en-US

● https://help.sap.com/viewer/2ee77ef7ea4648f9ab2c54ee3aef0a29/Cloud/en-US

● https://help.sap.com/viewer/6be7ed96ddeb4e158c2107c434142545/Cloud/en-US

● https://help.sap.com/viewer/d6429ae8d9384822939bf809078d8ff2/Cloud/en-US

1.4.5 DevOps

Development and IT Operations (DevOps) services let you develop and manage applications, including complete life-cycle management. This capability includes the following services: Application Autoscaler, Corporate Git Link for SAP Web IDE, Debugging service, Feature Flags service, Git service, Java Apps Lifecycle Management, Job Scheduler (Beta), Logging services, Monitoring service, Profiling service, SAP Translation Hub, SAP Web IDE, SAP Web IDE Full-Stack, and Solutions Lifecycle Management.

● https://help.sap.com/viewer/825270ffffe74d9f988a0f0066ad59f0/Cloud/en-US/b8427ec16ae64347b97d2d46fb28f7cd.html

● https://help.sap.com/viewer/product/DEBUGGING_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/p/DISASTER_RECOVERY

● https://help.sap.com/viewer/product/GIT_SERVICE/Cloud/en-US

● https://api.hana.ondemand.com/lifecycle/v1/documentation

● https://help.sap.com/viewer/product/LOGGING_SERVICES/Cloud/en-US

● https://help.sap.com/viewer/product/MONITORING_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/product/PROFILING_SERVICE/Cloud/en-US

● https://help.sap.com/viewer/ed6ce7a29bdd42169f5f0d7868bce6eb/Cloud/en-US

● https://help.sap.com/viewer/825270ffffe74d9f988a0f0066ad59f0/Cloud/en-US

● https://help.sap.com/viewer/825270ffffe74d9f988a0f0066ad59f0/CF/en-US

● Multi-Target Applications for the Neo Environment [page 1340]

1.4.6 Integration

Improves business agility while preventing data and application silos by seamlessly and securely integrating cloud applications into business landscapes. Securely collaborate with customers and partners at scale to improve efficiency and gain real-time insights from sensors, devices, and social sentiment. This capability includes the following services: API Management, Business Rules, Integration, Connectivity, Destination service, Enterprise Messaging, OData Provisioning, RabbitMQ, and Workflow.


● https://help.sap.com/viewer/66d066d903c2473f81ec33acfe2ccdb4/Cloud/en-US

● https://help.sap.com/viewer/9d7cfeaba766433eaea8a29fdb8a688c/Cloud/en-US

● https://help.sap.com/viewer/product/CLOUD_INTEGRATION/Cloud/en-US

● https://help.sap.com/viewer/product/CP_CONNECTIVITY/Cloud/en-US

● https://help.sap.com/viewer/cca91383641e40ffbe03bdc78f00f681/Cloud/en-US/7e306250e08340f89d6c103e28840f30.html

● https://help.sap.com/viewer/product/SAP_ENTERPRISE_MESSAGING/Cloud/en-US

● https://help.sap.com/viewer/product/ODATA_PROVISIONING/Cloud/en-US

● https://help.sap.com/viewer/15a22358e1984002b6b8ecd55960f49f/Cloud/en-US/9b68fdd62c064fe99d7bcce7f5f77a8c.html

● https://help.sap.com/viewer/product/WORKFLOW_SERVICE/Cloud/en-US

1.4.7 Internet of Things

Provides the ability to quickly develop, deploy, and manage real-time IoT, machine-to-machine, and remote data sync applications. Onboard and manage connected remote devices, get real-time predictive analysis to improve intelligence and decision-making at the edge of the network, and optimize business processes at the core of any business. This capability includes the following services: Internet of Things, SAP IoT Application Enablement, and Remote Data Sync.

● https://help.sap.com/viewer/product/SAP_CP_IOT_CF/Cloud/en-US

● https://help.sap.com/viewer/product/SAP_CP_IOT_NEO/Cloud/en-US

● https://help.sap.com/viewer/p/SAP_IOT_APPLICATION_SERVICES

● https://help.sap.com/viewer/ee5a2592b2884ea795b7cb1ed96299c7/Cloud/en-US


1.4.8 Machine Learning

SAP Leonardo Machine Learning Foundation, built on SAP Cloud Platform, provides advanced machine learning capabilities that help applications recognize patterns and correlations in data. It offers instantly consumable services that let you learn from data and extract knowledge that was previously inaccessible to computers.

● https://help.sap.com/viewer/p/SAP_LEONARDO_MACHINE_LEARNING_FOUNDATION

1.4.9 Mobile Services

Deliver enterprise-grade native and hybrid mobile apps. The mobile portfolio delivers key capabilities such as multiple authentication methods, secure access to on-premises and cloud-based systems, offline synchronization, remote logging control and retrieval, automatic application updates for hybrid applications, and one-to-one and one-to-many push notifications. This capability includes the following services: Agentry, App & Device Management, Development & Operations, SAP Fiori Mobile, and SAP Live Link 365.

● https://help.sap.com/viewer/38dbd9fbb49240f3b4d954e92335e670/Cloud/en-US/642d3a98d510496a99ab6bbb48910762.html

● https://help.sap.com/viewer/p/MOBILE_SERVICE_FOR_APP_AND_DEVICE_MANAGEMENT

● https://help.sap.com/viewer/p/SAP_CLOUD_PLATFORM_MOBILE_SERVICE_FOR_DEVELOPMENT_AND_OPERATIONS

● https://help.sap.com/viewer/p/MOBILE_SERVICE_FOR_SAP_FIORI

● https://livelink.sapmobileservices.com/documentation/

1.4.10 Runtimes & Containers

SAP Cloud Platform supports different programming languages and models and offers standards-based development. SAP Cloud Platform Virtual Machine gives you full control over virtualized hardware resources, enabling you to install everything you need to complement your cloud applications. The HTML5 Application Repository service (Beta) enables you to centrally store and provision HTML5 applications in the Cloud Foundry environment.

● Virtual Machines [page 1761]


1.4.11 Security

SAP Cloud Platform's closely integrated security services include authentication, single sign-on, on-premises integration, and self-services such as registration and password reset for employees, customers, partners, and consumers. This capability includes the following services: Authorization & Trust Management, Identity Authentication, Identity Provisioning, Keystore Service, and OAuth 2.0 Service.

● https://help.sap.com/viewer/product/CP_AUTHORIZ_TRUST_MNG/Cloud/en-US

● https://help.sap.com/viewer/product/IDENTITY_AUTHENTICATION/Cloud/en-US [https://help.sap.com/