I Electronic Devices & Circuits: Mahaveer Institute of Science and Technology

Uploaded by Sriram Reddy


MAHAVEER INSTITUTE OF SCIENCE AND TECHNOLOGY Page 1

Chapter 1

Semiconductor Diode

The semiconductor diode is formed by simply bringing p-type and n-type materials together (constructed from the same base, Ge or Si), as shown in Fig 1.1. At the instant the two materials are “joined”, the electrons and holes in the region of the junction will combine, resulting in a lack of carriers in the region near the junction.

This region of uncovered positive and negative ions is called the depletion region due to the depletion of carriers in this region.

Since the diode is a two-terminal device, the application of a voltage across its terminals leaves three possibilities: no bias (V = 0 V), forward bias (V > 0 V), and reverse bias (V < 0 V). Each is a condition that will result in a response that the user must clearly understand if the device is to be applied effectively.

Fig 1.1 P-N junction diode under no bias condition

No Applied Bias (V= 0 V)

Under no-bias (no applied voltage) conditions, any minority carriers (holes) in the n-type

material that find themselves within the depletion region will pass directly into the p-type

material. The closer the minority carrier is to the junction, the greater the attraction for the

layer of negative ions and the less the opposition of the positive ions in the depletion region

of the n-type material. For the purposes of future discussions we shall assume that all the

minority carriers of the n-type material that find themselves in the depletion region due to

their random motion will pass directly into the p-type material. Similar discussion can be

applied to the minority carriers (electrons) of the p-type material. This carrier flow has been

indicated in Fig 1.1 for the minority carriers of each material. The majority carriers


(electrons) of the n-type material must overcome the attractive forces of the layer of positive

ions in the n-type material and the shield of negative ions in the p-type material to migrate

into the area beyond the depletion region of the p-type material. However, the number of

majority carriers is so large in the n-type material that there will invariably be a small number

of majority carriers with sufficient kinetic energy to pass through the depletion region into the

p-type material. Again, the same type of discussion can be applied to the majority carriers

(holes) of the p-type material. The resulting flow due to the majority carriers is also shown in

Fig 1.1.

A close examination of Fig 1.1 will reveal that the relative magnitudes of the flow vectors are

such that the net flow in either direction is zero. This cancellation of vectors has been

indicated by crossed lines. The length of the vector representing hole flow has been drawn

longer than that for electron flow to demonstrate that the magnitude of each need not be the

same for cancellation and that the doping levels for each material may result in an unequal

carrier flow of holes and electrons. In summary, therefore:

In the absence of an applied bias voltage, the net flow of charge in any one

direction for a semiconductor diode is zero.

Reverse-Bias Condition (V<0 V)

If an external potential of V volts is applied across the p-n junction such that the positive terminal is connected to the n-type material and the negative terminal is connected to the p-type material, the number of uncovered positive ions in the depletion region of the n-type material will increase due to the large number of “free” electrons drawn to the positive potential of the applied voltage. For similar reasons, the number of uncovered negative ions will increase in the p-type material. The net effect, therefore, is a widening of the depletion region. This widening of the depletion region will establish too great a barrier for the majority carriers to overcome, effectively reducing the majority carrier flow to zero. The minority carriers that enter the depletion region are not held back by this barrier, so a small minority-carrier current remains.

The current that exists under reverse-bias conditions is called the reverse saturation current and is represented by Is.

Fig 1.2 P N Junction Diode


1.2 V-I Characteristics in forward bias

Fig 1.3 V-I characteristics of a forward bias diode

The application of a forward-bias potential VD will “pressure” electrons in the n-type material

and holes in the p-type material to recombine with the ions near the boundary and reduce the

width of the depletion region. The resulting minority-carrier flow of electrons from the p-type

material to the n-type material (and of holes from the n-type material to the p-type material)

has not changed in magnitude (since the conduction level is controlled primarily by the

limited number of impurities in the material), but the reduction in the width of the depletion

region has resulted in a heavy majority flow across the junction. An electron of the n-type

material now “sees” a reduced barrier at the junction due to the reduced depletion region and

a strong attraction for the positive potential applied to the p-type material. As the applied bias

increases in magnitude the depletion region will continue to decrease in width until a flood of

electrons can pass through the junction, resulting in an exponential rise in current as shown in

the forward-bias region of the characteristics of Fig 1.3. Note that the vertical scale of Fig 1.3

is measured in milliamperes (although some semiconductor diodes will have a vertical scale

measured in amperes) and the horizontal scale in the forward-bias region has a maximum of 1

V. Typically, therefore, the voltage across a forward-biased diode will be less than 1 V. Note

also how quickly the current rises beyond the knee of the curve.

Some elements are linear (resistors, capacitors, inductors), which means that doubling the applied signal (say, a voltage) produces a doubling of the response (say, a current). They are also passive: they do not have a built-in source of power. They are two-terminal devices, which is self-explanatory.

The diode is also a two-terminal, passive device, but it is non-linear. Fig. 1.4 shows the diode.


Fig.1.4. Diode.

Fig.1.5. Diode voltage-current curve (U-I curve).

Fig. 1.5 shows the U-I (voltage-current) characteristic of the diode. The diode arrow, at the anode terminal, shows the direction of forward current flow. If the diode is in a circuit in which a current of 10 mA = $10 \times 10^{-3}$ A is flowing from anode to cathode, then the anode is approximately 0.5 V more positive than the cathode. We call this the forward voltage drop. The reverse current is measured in nanoamperes (1 nA = $1 \times 10^{-9}$ A). It is so small in comparison to the milliampere-level forward current that it can be neglected until we reach the reverse breakdown voltage. Typically the breakdown voltage is approximately 75 V, and normally we never subject a diode to a voltage large enough to cause reverse breakdown.

Similarly, the forward voltage drop, which is about 0.5 to 0.8 V, is of little concern. For these reasons we treat the diode as a good approximation of an ideal one-way conductor.

1.3 Diode Equations

1. $I_D = I_S\left(e^{kV_D/T_K} - 1\right)$

where

$I_S$ = reverse saturation current

$k = 11{,}600/\eta$, with $\eta = 1$ for Ge and $\eta = 2$ for Si for relatively low levels of diode current

$T_K = T_C + 273^\circ$
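The diode equation can be evaluated numerically to see how sharply the current rises with forward voltage. The following is only a minimal sketch: the function name `diode_current` and the sample values (a 10 nA saturation current, silicon with η = 2, 25°C) are assumptions chosen for illustration, not values taken from these notes.

```python
import math


def diode_current(v_d, i_s, eta, temp_c):
    """Diode current from I_D = I_S * (exp(k * V_D / T_K) - 1).

    v_d    : diode voltage in volts (negative for reverse bias)
    i_s    : reverse saturation current in amperes
    eta    : 1 for Ge, 2 for Si (at relatively low current levels)
    temp_c : junction temperature in degrees Celsius
    """
    k = 11600.0 / eta        # k = 11,600 / eta, as defined in the text
    t_k = temp_c + 273.0     # T_K = T_C + 273
    return i_s * (math.exp(k * v_d / t_k) - 1.0)


if __name__ == "__main__":
    I_S = 10e-9  # assumed saturation current (10 nA), for illustration only
    for v in (-1.0, 0.0, 0.3, 0.5, 0.7):
        i = diode_current(v, I_S, eta=2, temp_c=25.0)
        print(f"V_D = {v:+.1f} V  ->  I_D = {i:.3e} A")
```

For reverse voltages of more than a few tenths of a volt the exponential term becomes negligible and the result settles at approximately −I_S, which matches the description of the reverse saturation current above.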

Chapter 2


ZENER DIODE

2.1 Zener Region

Even though the scale is in tens of volts in the negative region, there is a point where the

application of too negative a voltage will result in a sharp change in the characteristics, as

shown in Fig. 2.1.

Fig 2.1 Zener Region

The current increases at a very rapid rate in a direction opposite to that of the positive voltage region. The reverse-bias potential that results in this dramatic change in characteristics is called the Zener potential and is given the symbol VZ. As the voltage across the diode increases in the reverse-bias region, the velocity of the minority carriers responsible for the reverse saturation current Is will also increase. Eventually, their velocity and associated kinetic energy ($W_K = \frac{1}{2}mv^2$) will be sufficient to release additional carriers through collisions with otherwise stable atomic structures. That is, an ionization process will result whereby valence electrons absorb sufficient energy to leave the parent atom. These additional carriers can then aid the ionization process to the point where a high avalanche current is established and the avalanche breakdown region determined.

The avalanche region (VZ) can be brought closer to the vertical axis by increasing the doping levels in the p- and n-type materials. However, as VZ decreases to very low levels, such as -5 V, another mechanism, called Zener breakdown, will contribute to the sharp change in the characteristic. It occurs because there is a strong electric field in the region of the junction that can disrupt the bonding forces within the atom and “generate” carriers. Although the Zener breakdown mechanism is a significant contributor only at lower levels of VZ, this sharp change in the characteristic at any level is called the Zener region, and diodes employing this unique portion of the characteristic of a p-n junction are called Zener diodes.

The Zener region of the semiconductor diode described must be avoided if the response of a system is not to be completely altered by the sharp change in characteristics in this reverse-voltage region.

The maximum reverse-bias potential that can be applied before entering the Zener region is called the peak inverse voltage (referred to simply as the PIV rating) or the peak reverse voltage (denoted by PRV rating).

If an application requires a PIV rating greater than that of a single unit, a number of diodes of the same characteristics can be connected in series. Diodes are also connected in parallel to increase the current-carrying capacity.

Fig 2.2 Zener diode and semiconductor Diode

This region of unique characteristics is employed in the design of Zener diodes, which have the graphic symbol appearing in Fig. 2.2. Both the semiconductor diode and Zener diode are presented side by side in Fig. 2.2 to ensure that the direction of conduction of each is clearly understood together with the required polarity of the applied voltage. For the semiconductor diode the “on” state will support a current in the direction of the arrow in the symbol. For the Zener diode the direction of conduction is opposite to that of the arrow in the symbol, as pointed out in the introduction to this section. Note also that the polarities of VD and VZ are the same as would be obtained if each were a resistive element.

The location of the Zener region can be controlled by varying the doping levels. An increase in doping, producing an increase in the number of added impurities, will decrease the Zener potential. Zener diodes are available having Zener potentials of 1.8 to 200 V with power ratings from ¼ W to 50 W. Because of its higher temperature and current capability, silicon is usually preferred in the manufacture of Zener diodes. The term nominal associated with VZ indicates that it is a typical average value.

Since this is a 20% diode, the Zener potential can be expected to vary as 10 V ± 20%, or from 8 to 12 V, in its range of application. Also available are 10% and 5% diodes with the same specifications. The test current IZT is the current defined by the ¼ power level, and ZZT is the dynamic impedance at this current level. The maximum knee impedance occurs at the knee current of IZK. The reverse saturation current is provided at a particular potential level, and IZM is the maximum current for the 20% unit.

The temperature coefficient reflects the percent change in VZ with temperature. It is defined by the equation

$T_C = \dfrac{\Delta V_Z}{V_Z (T_1 - T_0)} \times 100\ \%/^\circ\mathrm{C}$

where $\Delta V_Z$ is the resulting change in Zener potential due to the temperature variation. A positive value would reflect an increase in VZ with an increase in temperature, while a negative value would result in a decrease in value with an increase in temperature. The 24-V, 6.8-V, and 3.6-V levels refer to three Zener diodes having these nominal values within the same family of Zeners. The curve for the 10-V Zener would naturally lie between the curves of the 6.8-V and 24-V devices. Returning to the equation above, $T_0$ is the temperature at which VZ is provided (normally room temperature, 25°C), and $T_1$ is the new level.
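Rearranging the temperature-coefficient equation for ΔVZ gives the expected drift of the Zener potential at a new temperature. The sketch below assumes a hypothetical 10-V Zener with a coefficient of 0.072 %/°C; both numbers, and the function name `zener_voltage_change`, are illustrative assumptions rather than data from these notes.

```python
def zener_voltage_change(t_c, v_z, t1, t0=25.0):
    """Change in Zener potential, from T_C = dVz / (Vz * (T1 - T0)) * 100.

    t_c : temperature coefficient in percent per degree Celsius
    v_z : nominal Zener potential at t0, in volts
    t1  : new temperature in degrees Celsius
    t0  : temperature at which v_z is specified (room temperature by default)
    """
    return t_c * v_z * (t1 - t0) / 100.0


if __name__ == "__main__":
    # Assumed example values, for illustration only.
    delta = zener_voltage_change(t_c=0.072, v_z=10.0, t1=100.0)
    print(f"Change in VZ: {delta:.3f} V, new VZ: {10.0 + delta:.3f} V")
```

A negative coefficient passed to the same function yields a negative change, which corresponds to VZ decreasing as the temperature rises.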

Chapter 3

LIGHT-EMITTING DIODES


The increasing use of digital displays in calculators, watches, and all forms of instrumentation has contributed to the current extensive interest in structures that will emit light when properly biased. The two types in common use today to perform this function are the light-emitting diode (LED) and the liquid-crystal display (LCD).

As the name implies, the light-emitting diode (LED) is a diode that will give off visible light when it is energized. In any forward-biased p-n junction there is, within the structure and primarily close to the junction, a recombination of holes and electrons. This recombination requires that the energy possessed by the unbound free electron be transferred to another state. In all semiconductor p-n junctions some of this energy will be given off as heat and some in the form of photons. In silicon and germanium the greater percentage is given up in the form of heat and the emitted light is insignificant. In other materials, such as gallium arsenide phosphide (GaAsP) or gallium phosphide (GaP), the number of photons of light energy emitted is sufficient to create a very visible light source.

The process of giving off light by applying an electrical source of energy is called electroluminescence.

Fig 3.1 Graphic symbol of a diode

As shown in Fig. 3.1 with its graphic symbol, the conducting surface connected to the p-material is much smaller, to permit the emergence of the maximum number of photons of light energy. Note in the figure that the recombination of the injected carriers due to the forward-biased junction results in emitted light at the site of recombination. There may, of course, be some absorption of the packages of photon energy in the structure itself, but a very large percentage are able to leave, as shown in the figure.

Two quantities yet undefined appear under the heading Electrical/Optical Characteristics at TA = 25°C. They are the axial luminous intensity (IV) and the luminous efficacy (ηv). Light intensity is measured in candela. One candela emits a light flux of 4π lumens and establishes an illumination of 1 foot-candle on a 1-ft² area 1 ft from the light source. Even though this description may not provide a clear understanding of the candela as a unit of measure, its level can certainly be compared between similar devices. The term efficacy is, by definition, a measure of the ability of a device to produce a desired effect. For the LED this is the ratio of the number of lumens generated per applied watt of electrical energy. The relative intensity of each color versus wavelength appears in Fig. 3.2.

Fig 3.2 Color Intensity vs wavelength

Since the LED is a p-n junction device, it will have a forward-biased characteristic similar to the diode response curves. Note the almost linear increase in relative luminous intensity with forward current.


Fig 3.3 Maximum peak current vs. pulse duration

Figure 3.3 reveals that the longer the pulse duration at a particular frequency, the lower the permitted peak current (after you pass the break value of tp). LED displays are available today in many different sizes and shapes. The light-emitting region is available in lengths from 0.1 to 1 in. There are also two-lead LED lamps that contain two LEDs, so that a reversal in biasing will change the color from green to red, or vice versa. LEDs are presently available in red, green, yellow, orange, and white, and white with blue soon to be commercially available. In general, LEDs operate at voltage levels from 1.7 to 3.3 V, which makes them completely compatible with solid-state circuits. They have a fast response time (nanoseconds) and offer good contrast ratios for visibility. The power requirement is typically from 10 to 150 mW with a lifetime of 100,000+ hours. Their semiconductor construction adds a significant ruggedness factor.

APPLICATIONS

1. Indicators and signs

2. Lighting

3. Light sources for machine vision systems (LED televisions)

4. Light Source in Fiber Optic Communications


Chapter 4

DIODE APPLICATION

1. Rectification

2. Power supply filter(regulator)

3. Specific Applications of diodes

° Signal rectifier

° Diode gate

° Diode clamps

° Limiter

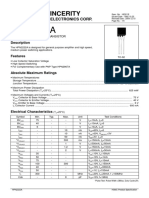

Commercially available diodes are also described by other important characteristics, e.g. maximum forward current, capacitance (measured in pF), leakage current, and reverse recovery time (measured in nanoseconds, 0-2-4-5000).

4.1 Rectification

A rectifier changes ac (alternating current) to dc (direct current). This is the most

important application of diodes. Diodes are sometimes called rectifiers.

The basic circuit is shown in Fig. 4.1.

Fig.4.1. Half-wave rectifier.

The ac symbol represents a source of ac voltage. It can be a transformer or just the ac sine-wave power line. For a sine-wave input of amplitude much larger than the forward voltage drop, the output will look as shown in Fig. 4.2.

Fig.4.2. Voltage across Rload in Fig.4.1.


We call both the process and the circuit a half-wave rectifier, because only half of the input waveform is used.

Fig. 4.3 shows a full-wave rectifier and Fig. 4.4 shows the voltage across the load. The small gaps around zero voltage occur because of the forward voltage drop.

Fig.4.3. Full-wave bridge rectifier.

Fig.4.4. Voltage across Rload in Fig.4.3.
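The behaviour of the two rectifiers can be sketched with a simple piecewise model in which a conducting diode drops a fixed voltage and a non-conducting diode passes no current. This is an idealized illustration only: the 0.6 V drop, the 10 V peak input, and the function names are assumptions, and a real diode follows the exponential characteristic of Chapter 1.

```python
import math

V_F = 0.6  # assumed constant forward drop per diode, in volts


def half_wave(v_in, v_f=V_F):
    """Load voltage of a half-wave rectifier: only the positive half passes."""
    return max(v_in - v_f, 0.0)


def full_wave_bridge(v_in, v_f=V_F):
    """Load voltage of a bridge rectifier: two diodes conduct in series,
    so the load sees the input magnitude minus two forward drops."""
    return max(abs(v_in) - 2.0 * v_f, 0.0)


if __name__ == "__main__":
    peak = 10.0  # assumed peak input voltage
    for step in range(9):
        angle = step * math.pi / 4.0          # one full cycle in 45-degree steps
        v = peak * math.sin(angle)
        print(f"vin = {v:+7.3f} V   half-wave = {half_wave(v):6.3f} V   "
              f"bridge = {full_wave_bridge(v):6.3f} V")
```

Near the zero crossings both functions return zero while the input is still smaller than the forward drop, which is the origin of the small gaps mentioned for Fig. 4.4.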

4.2 Power supply filtering

The rectified wave of Fig. 4.4 is not yet usable as a supply: it is dc only in the sense that it does not change polarity. It does not have a constant value and has plenty of ripple, i.e. small waves or undulations. It has to be smoothed out in order to obtain genuine direct current. This can be done by means of a low-pass filter, as shown in Fig. 4.5.

Fig.4.5. Full-wave bridge with RC filter.

The full-wave bridge diodes prevent current from flowing back out of the capacitor. The capacitor is an energy storage element. The energy stored in a capacitor is $E = \frac{1}{2}CU^2$. For C in F (farads) and U in V (volts), E comes out in J (joules), where 1 J = 1 W·s. The capacitor value is chosen so that $R_{load}C \gg 1/f$, where f is the ripple frequency. For a full-wave rectified 50 Hz power-line sine wave it is 2 × 50 Hz = 100 Hz. This ensures small ripple by making the time constant for discharge much longer than the time between recharging (the capacitor charges very quickly, while it discharges very slowly).


It is quite easy to calculate the approximate ripple voltage (see Fig. 4.6). Let us assume

that the load current stays constant (it will, for small ripples). The load causes the capacitor to

discharge somewhat between cycles. The capacitor will lose some voltage, let us say ∆U . In

this case, we have:

$\Delta U = \dfrac{I}{C}\,\Delta t$ (from $I = C\,\dfrac{dU}{dt}$)

Fig.4.6. Power-supply ripple calculation.

Instead of $\Delta t$ we use $1/f$ for half-wave rectification and $1/(2f)$ for full-wave rectification. Finally we obtain the approximate ripple voltage:

° for half-wave: $\Delta U = \dfrac{I_{load}}{fC}$,

° for full-wave: $\Delta U = \dfrac{I_{load}}{2fC}$.

If one wanted to do an exact calculation (with no approximation), one would use the exact exponential discharge formula (see lecture Capacitors, RC circuits). Sometimes this may be necessary.
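The approximate ripple formulas are easy to evaluate for a candidate capacitor. The following sketch assumes a 100 mA load, a 50 Hz line and a 1000 µF capacitor purely as example values; the function name `ripple_voltage` is likewise only illustrative.

```python
def ripple_voltage(i_load, f_line, c, full_wave=True):
    """Approximate peak-to-peak ripple, assuming a constant load current.

    i_load    : load current in amperes
    f_line    : line (input sine-wave) frequency in hertz
    c         : filter capacitance in farads
    full_wave : True  -> dU = I / (2 f C)
                False -> dU = I / (f C)
    """
    recharges_per_cycle = 2 if full_wave else 1
    return i_load / (recharges_per_cycle * f_line * c)


if __name__ == "__main__":
    # Assumed example values, for illustration only.
    i_load, f_line, c = 0.1, 50.0, 1000e-6
    print(f"half-wave ripple: {ripple_voltage(i_load, f_line, c, False):.2f} V")
    print(f"full-wave ripple: {ripple_voltage(i_load, f_line, c, True):.2f} V")
```

For the same capacitor and load, the full-wave circuit gives half the ripple of the half-wave circuit, because the capacitor is recharged twice per input cycle.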

A dc power supply using the bridge circuit looks (in the USA) as shown in Fig.4.7.

Fig.4.7. Bridge rectifier circuit.

The curved electrode indicates a polarized capacitor, which must never be connected with the opposite polarity.


4.3 Signal rectifier

If the input is not a sine wave, we usually do not think of the process as rectification in the sense used for a power supply. For instance, we might want to have a series of pulses corresponding to the rising edge of a square wave (see Fig. 4.8, which shows the waveforms on the left-hand side and on the right-hand side of the capacitor C). After differentiation by the CR circuit, pulses for both the rising and the falling edges are present. The simplest way to keep only the rising-edge pulses is to rectify the differentiated wave.

Fig.4.8. A series of pulses' rectifier.

We should keep the forward voltage drop of the diode in mind: this circuit gives no output for input signals smaller than the forward drop, let us say 0.5 V pp (peak to peak). If this is a problem, there are various tricks that help to overcome this limitation. For instance:

1. use Schottky diodes with smaller forward drop voltage (approximately 0.2V),

2. use so called circuit solution, which means modifying the circuit structure and

compensating the drop,

3. use matched-pair compensation, use transistors, FETs.

4.4 Diode clamps

Sometimes it is necessary to limit the range of signal (for instance not to exceed certain

voltage limit and not to destroy a device). The circuit in Fig. 4.9 will accomplish this.

Fig.4.9. Diode voltage clamp.


The diode prevents the output from exceeding ≅ 5.6V, with no effect on voltages smaller

than this, including negative voltages. The only limitation is that the input must not be so

negative that the reverse breakdown voltage is exceeded. Diode clamps are the standard

equipment on all inputs in the CMOS family of digital logic (Complementary Metal Oxide

Semiconductor). Without them, the delicate input circuits are easily destroyed by static

electricity.
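The clamp can be described by an ideal piecewise model: the output follows the input until the diode starts to conduct, and is held at the clamp level above that. The sketch below assumes that the clamp level of about 5.6 V comes from a +5 V reference plus a 0.6 V diode drop; the circuit of Fig. 4.9 is not reproduced here, so this split, like the function name `diode_clamp`, is an assumption.

```python
def diode_clamp(v_in, v_ref=5.0, v_f=0.6):
    """Ideal voltage clamp.

    The output equals the input while the diode is off, and is limited to
    v_ref + v_f once the diode conducts. Reverse breakdown is not modelled,
    so very large negative inputs are outside the validity of this sketch.
    """
    return min(v_in, v_ref + v_f)


if __name__ == "__main__":
    for v in (-3.0, 0.0, 4.0, 5.6, 8.0, 12.0):
        print(f"vin = {v:+5.1f} V  ->  vout = {diode_clamp(v):+5.1f} V")
```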

4.5 Limiter

The circuit in Fig.4.10 limits the output swing to one diode drop, roughly 0.6V.

Fig.4.10 Diode limiter.

This might seem very small, but if the next stage is an amplifier with large voltage gain, its input always has to stay near zero volts; otherwise the output saturates. For instance, take an op amp with a gain of 1000 that operates from a supply voltage of ±15 V (sometimes it can be ±12 V or ±18 V or something in between). It will never give an output voltage bigger than the supply voltage, i.e. ±15 V. This means that an input signal of ±15 mV (±15 V/1000) or bigger will saturate the output. This particular amplifier gives an output proportional to the input (with a proportionality factor of 1000) only for input signals in the interval (-15 mV, +15 mV).

This diode limiter is often used as input protection for high-gain amplifiers.
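The numbers in this section can be checked with two small idealized models: a limiter that holds its output within one diode drop of zero, and an amplifier whose output cannot exceed its supply rails. Both functions are sketches under the stated assumptions (ideal diodes with a fixed 0.6 V drop, an ideal amplifier that clips exactly at the supply voltage); the names are hypothetical.

```python
def limiter(v_in, v_f=0.6):
    """Ideal diode limiter: output swing restricted to +/- one diode drop."""
    return max(-v_f, min(v_in, v_f))


def amplifier(v_in, gain=1000.0, v_supply=15.0):
    """Ideal amplifier whose output saturates at the supply rails."""
    return max(-v_supply, min(gain * v_in, v_supply))


def max_linear_input(gain=1000.0, v_supply=15.0):
    """Largest input magnitude for which the output is still proportional."""
    return v_supply / gain


if __name__ == "__main__":
    print(f"linear input range: +/- {max_linear_input() * 1e3:.0f} mV")
    for v in (0.005, 0.015, 0.1, 5.0):
        protected = limiter(v)
        print(f"vin = {v:6.3f} V  limiter out = {protected:5.3f} V  "
              f"amplifier out = {amplifier(protected):5.1f} V")
```

The limiter does not keep the amplifier in its linear range; it only ensures that the amplifier input never sees more than about one diode drop, which is why it serves as input protection.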


Chapter 5

BJT TRANSISTOR

A transistor is an active semiconductor device which has the property of transfer of resistance. It is used to amplify and switch electronic signals. A BJT is a three-terminal, three-region, two-junction bipolar device. It consists of two back-to-back P-N junctions. Fig 5.1 shows the two types of transistor, which differ in the polarity of the majority and minority charge carriers in the semiconductor materials.

Fig 5.1 PNP & NPN Transistor

These two junctions give rise to three regions as follows:

(a) EMITTER

It is on the left-hand side of the transistor. It is a heavily doped region because its main function is to emit majority charge carriers (either electrons or holes) into the base.

(b) BASE

It is the middle region of the transistor. It is very thin (about $10^{-6}$ m) compared to either the emitter or collector and is very lightly doped.

(c) COLLECTOR

It is on the right-hand side of the transistor. It is moderately doped and its main function is to collect majority charge carriers through the base.

The four basic guideposts for any transistor circuit are:

(1) Conventional current flows along the arrow whereas electrons flow against it.

(2) E/B junction is always forward-biased.

(3) C/B junction is always reverse-biased.

(4) IE = IB + IC

A junction transistor is simply a sandwich of one type of semiconductor material between two layers of the other type.

(a) PNP Transistor: N-type material sandwiched between two layers of P-type material.

(b) NPN Transistor: P-type material sandwiched between two layers of N-type material.

The symbols employed for PNP and NPN transistors are shown. The arrowhead is always at the emitter (not at the collector) and in each case its direction indicates the conventional direction of current flow. For a PNP transistor, the arrowhead points from emitter to base, meaning that the emitter is positive with respect to the base (and also with respect to the collector). For an NPN transistor, it points from base to emitter, meaning that the base (and the collector as well) is positive with respect to the emitter.

The emitter, base and collector are provided with terminals which are labelled as E, B and C.

The two junctions are:

(a) Emitter-Base (E/B) junction and

(b) Collector-Base (C/B) junction.

It may be noted, in passing, that transistors are made by growing, alloying or diffusing

processes.

5.1 TRANSISTOR BIASING

For proper working of a transistor, it is essential to apply voltages of correct polarity across

its two junctions. It is worthwhile to remember that for normal operation

(a)Emitter-Base junction is always forward-biased, and

(b) Collector-Base junction is always reverse-biased.


Fig 5.2 Transistor Biasing

Fig 5.2 shows properly biased PNP and NPN transistors. In the figure, two batteries provide the dc emitter supply voltage VEE and the collector supply voltage VCC, respectively, for properly biasing the two junctions of the transistor. In fig (a), the positive terminal of VEE is connected to the P-type emitter in order to repel or push holes into the base. The negative terminal of VCC is connected to the collector so that it may attract or pull holes through the base. Similar considerations apply to the NPN transistor of fig (b).


Chapter 6

TRANSISTOR CIRCUIT CONFIGURATIONS

Basically, there are three types of circuit connections (called configurations) for operating a

transistor.

(1) Common-base (CB),

(2) Common-emitter (CE),

(3) Common – collector (CC),

The term ‘common’ is used to denote the electrode that is common to the input and output

circuits. Because the common electrode is generally grounded, these modes of operation are

frequently referred to as grounded-base, grounded-emitter and grounded-collector

configurations.

Since a transistor is a 3-terminal device, one of its terminals has to be common to the input and

output circuits.

6.1 CB CONFIGURATION

In this configuration, emitter current IE is the input current and collector current IC is the output current. The input signal is applied between the emitter and base whereas the output is taken from the collector and base. The common-base configuration is shown in Fig 6.1.


Fig 6.1 Common Base Configuration

6.2 CC CONFIGURATION

In this case, input signal is applied between base and collector and output signal is taken out

from emitter-collector circuit. Here IB is the input current and IE is the output current. The

common collector Configuration is as shown in the following Figure 6.2.

Fig 6.2 Common Collector Configuration

6.3 CE CONFIGURATION

Fig 6.3 Common Emitter configuration


The common-emitter configuration is shown in Fig 6.3. Here, the input is applied between the base and emitter and the output signal is taken from the collector-emitter circuit. IB is the input current and IC is the output current.

The CE configuration provides high voltage gain, high power gain and high current gain. It has moderate input impedance and high output impedance. As in the other configurations, the terminal currents satisfy the fourth guidepost for identifying the transistor:

IE = IB + IC

6.4 USING A TRANSISTOR AS A SWITCH

Fig 6.4 Transistor as a switch

The Transistor working as a switch is shown in the figure 6.4. When a transistor is

used as a switch it must be either OFF or fully ON. In the fully ON state the voltage VCE

across the transistor is almost zero and the transistor is said to be saturated because it cannot

pass any more collector current Ic. The output device switched by the transistor is usually

called the 'load'.

The power developed in a switching transistor is very small:

• In the OFF state: power = Ic × VCE, but Ic = 0, so the power is zero.

• In the fully ON state: power = Ic × VCE, but VCE = 0 (almost), so the power is very small.
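A short calculation makes the two cases concrete. The values below (a 12 V supply, a 100 mA load current, and a saturation voltage of 0.2 V) are assumptions chosen for illustration; they do not come from Fig 6.4.

```python
def transistor_power(i_c, v_ce):
    """Power developed in the transistor: P = Ic * VCE."""
    return i_c * v_ce


if __name__ == "__main__":
    v_supply = 12.0    # assumed supply voltage, in volts
    i_c_on = 0.1       # assumed collector current when fully ON, in amperes
    v_ce_sat = 0.2     # assumed VCE when saturated, in volts

    p_off = transistor_power(0.0, v_supply)        # OFF: no collector current
    p_on = transistor_power(i_c_on, v_ce_sat)      # ON: almost no voltage drop
    p_load = i_c_on * (v_supply - v_ce_sat)        # power delivered to the load

    print(f"OFF state: {p_off:.3f} W")
    print(f"ON state:  {p_on:.3f} W in the transistor, {p_load:.2f} W in the load")
```

In both states one of the two factors in Ic × VCE is close to zero, so nearly all of the switched power is developed in the load rather than in the transistor.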


Chapter 7

FIELD EFFECT TRANSISTORS

The field effect transistor is a three terminal unipolar solid-state device in which

current is controlled by an electric field as is done in vacuum tubes.

Broadly speaking, there are two types of FETs:

(a) Junction field effect transistor (JFET)

(b) Metal-oxide semiconductor FET (MOSFET).

It is also called insulated-gate FET (IGFET).

It may be further subdivided into:

(i) Depletion – enhancement MOSFET i.e., DE MOSFET

(ii) Enhancement-only MOSFET i.e., E-only MOSFET.

Both of these can be either P-channel or N-channel devices.

7.1 JUNCTION FET (JFET)


Fig 7.1 FET symbol

The symbols of both the n-channel and p-channel FET are shown in Fig 7.1. A JFET can be fabricated with either an N-channel or a P-channel, though the N-channel is generally preferred. For fabricating an N-channel JFET, first a narrow bar of N-type semiconductor material is taken and then two P-type junctions are diffused on opposite sides of its middle part. These junctions form two P-N diodes or gates, and the area between these gates is called the channel. The two P-regions are internally connected and a single lead is brought out, which is called the gate terminal.

Ohmic (direct electrical) contacts are made at the two ends of the bar: one lead is called the source terminal S and the other the drain terminal D. When a potential difference is established between drain and source, current flows along the length of the bar through the channel located between the two P-regions. The current consists of only majority carriers which, in the present case, are electrons. The P-channel FET is similar in construction except that it uses a P-type bar and two N-type junctions. The majority carriers are holes, which flow through the channel located between the two N-regions or gates. The structures of the N-channel JFET and P-channel JFET are shown in Fig 7.2.


Fig 7.2 N-channel and P-Channel JFET

The following FET notation is worth remembering:

(1) SOURCE: It is the terminal through which majority carriers enter the bar. Since carriers come from it, it is called the source.

(2) DRAIN: It is the terminal through which majority carriers leave the bar. The drain-to-

source voltage VDS drives the drain current ID.

(3) GATE: These are two internally-connected heavily-doped regions which form two P-

N junctions. The gate-source voltage VGS reverse-biases the gates.

(4) CHANNEL: It is the space between two gates through which majority carriers pass

from source to drain when VDS is applied.

It must be kept in mind that gate arrow always points to N-type material.

7.2 MOSFET or IGFET

The metal–oxide–semiconductor field-effect transistor (MOSFET) is a device used for amplifying or switching electronic signals. The basic principle of the MOSFET is that the source-to-drain current (SD current) is controlled by the gate voltage or, more precisely, by the gate electric field. The electric field induces charge (the field effect) in the semiconductor at the semiconductor-oxide interface. Thus the MOSFET is a voltage-controlled current source. The channel can be of n-type or p-type, and the device is accordingly called an nMOS or a pMOS transistor. The MOSFET is used in digital CMOS logic, which uses P- and N-channel MOSFETs as building blocks.

IGFET is a related term meaning insulated-gate field-effect transistor, and is almost

synonymous with MOSFET, though it can refer to FETs with a gate insulator that is not

oxide. Another synonym is MISFET for metal–insulator–semiconductor FET.

It could be further subdivided as follows:

(i) ENHANCEMENT - ONLY MOSFET

As the name indicates, this MOSFET operates only in the enhancement mode and has no depletion mode. It conducts only when VGS is sufficiently large. It differs in construction from the DE MOSFET in that structurally there exists no channel between the drain and source. Hence, it does not conduct when VGS = 0. That is why it is called a normally-OFF MOSFET.

(ii) DEPLETION-ENHANCEMENT MOSFET or DE MOSFET

This MOSFET is so called because it can be operated in both the depletion mode and the enhancement mode by changing the polarity of VGS. When a negative gate-to-source voltage is applied, the N-channel DE MOSFET operates in the depletion mode. With a positive gate voltage, however, it operates in the enhancement mode. Since a channel exists between drain and source, ID flows even when VGS = 0. That is why the DE MOSFET is known as a normally-ON MOSFET.
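The depletion and enhancement behaviour of an N-channel DE MOSFET can be illustrated with a short numerical sketch. It assumes the usual square-law (Shockley) approximation ID = IDSS(1 - VGS/VGS(off))^2, which is not derived in these notes; the values of IDSS and VGS(off) below are hypothetical and chosen only for illustration.

```python
def drain_current(v_gs, i_dss, v_gs_off):
    """Square-law estimate of drain current for an N-channel DE MOSFET.

    i_dss    : drain current at VGS = 0, in amperes (assumed device value)
    v_gs_off : gate-source cutoff voltage, negative for N-channel, in volts
    """
    if v_gs <= v_gs_off:
        return 0.0  # channel fully depleted, no conduction
    return i_dss * (1.0 - v_gs / v_gs_off) ** 2


I_DSS = 10e-3     # hypothetical: 10 mA
V_GS_OFF = -4.0   # hypothetical: -4 V

for v_gs in (-4.0, -2.0, 0.0, 1.0):
    if v_gs < 0:
        mode = "depletion"
    elif v_gs == 0:
        mode = "zero bias"
    else:
        mode = "enhancement"
    i_d = drain_current(v_gs, I_DSS, V_GS_OFF)
    print(f"VGS = {v_gs:+.1f} V ({mode}): ID = {i_d * 1e3:.3f} mA")
```

Under these assumptions the current at VGS = 0 equals IDSS (the normally-ON behaviour), falls for negative VGS and rises above IDSS for positive VGS.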

7.3 SCHEMATIC SYMBOLS

Fig 7.3 Schematic Symbols

Figure 7.3 shows the different symbolic representations of N-channel and P-channel FETs.

7.4 DISADVANTAGES OF FETS

(1) Small gain-bandwidth product.

(2) Greater susceptibility to damage during handling.

7.5 FET APPLICATIONS

FETs can be used in almost every application in which bipolar transistors can be used.

However, they have certain applications which are exclusive to them:

(1) As input amplifiers in oscilloscopes, electronic voltmeters and other measuring and testing equipment, because their high input resistance rin reduces the loading effect to a minimum.

(2) In logic circuits, where the FET is kept OFF when there is zero input and is turned ON with very little input power.

(3) For mixer operation in FM and TV receivers.

(4) As voltage-variable resistors (VVR) in operational amplifiers, tone controls, etc.

(5) In large-scale integration (LSI) and computer memories, because of their very small size.

Chapter 8

RECTIFIERS

Electronic circuits require a D.C. source of power. A transistor A.C. amplifier circuit, for example, requires a D.C. supply for biasing. The input signal can be A.C., and so the output signal will be an amplified A.C. signal, but without biasing from a D.C. supply the circuit will not work. So more or less all electronic A.C. instruments require D.C. power. D.C. batteries can be used to provide it, but they run down quickly and replacing them every time is a costly affair.

A rectifier is a circuit which offers low resistance to current in one direction and high resistance in the opposite direction.

A rectifier converts a sinusoidal signal into a unidirectional flow, not pure D.C.

A filter converts the unidirectional flow into pure D.C.

If the input to the rectifier is a pure sinusoidal wave, the average value of such a wave is zero, since the positive half cycle and negative half cycle are exactly equal.

8.1 HALF-WAVE RECTIFIER

If this signal is given to a rectifier circuit, say a half-wave rectifier circuit, the output will be as shown in Fig. 8.1.

Fig 8.1 Half-wave rectified output

Now the average value of this waveform is not zero, since there is no negative half cycle. Hence a rectifier circuit converts an A.C. signal with zero average value into a unidirectional waveform with non-zero average value.

The rectifying devices are semiconductor diodes for low-voltage signals and vacuum diodes for high-voltage circuits. A basic half-wave rectifier circuit is shown in Fig. 8.2.

Fig 8.2 Half-wave rectifier circuit

The A.C. input is normally the A.C. mains supply. Since the mains voltage is 230 V, and such a high voltage cannot be applied to the semiconductor diode, a step-down transformer should be used. If a large D.C. voltage is required, vacuum tubes should be used. The output voltage is taken across the load resistor RL. Since the peak value of the A.C. signal is much larger than the diode cut-in voltage Vγ, we neglect Vγ in the analysis.

The purpose of a rectifier circuit is to convert A.C. to D.C. But the simple circuit

shown before will not achieve this. Rectifier converts A.C. to unidirectional flow and not

D.C. So filters are used to get pure D.C. Filters convert unidirectional flow into D.C.

8.2 Ripple Factor

Ripple factor is a measure of the fluctuating components present in the output of a rectifier circuit.

Ripple factor, γ = RMS value of A.C. components / Average value of waveform

With some simplifying assumptions, the ripple factor of a half-wave rectifier is 1.21.

The ripple factor of a full-wave rectifier is 0.482.
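The quoted ripple factors can be checked numerically. The sketch below assumes ideal diodes and a purely resistive load, samples one cycle of a unit-amplitude sine wave, and applies the definition above; the function name and the sample count are illustrative choices, not part of the notes.

```python
import math


def ripple_factor(samples):
    """Ripple factor = RMS of the A.C. component / average (D.C.) value."""
    n = len(samples)
    v_dc = sum(samples) / n
    v_rms = math.sqrt(sum(v * v for v in samples) / n)
    # The A.C. component is what remains after removing the D.C. level.
    v_ac_rms = math.sqrt(v_rms ** 2 - v_dc ** 2)
    return v_ac_rms / v_dc


N = 100_000  # samples over one full input cycle
theta = [2 * math.pi * k / N for k in range(N)]
half_wave = [max(math.sin(t), 0.0) for t in theta]  # negative half removed
full_wave = [abs(math.sin(t)) for t in theta]       # negative half inverted

hw_avg = sum(half_wave) / N  # should be close to Vm/pi with Vm = 1
print(f"half-wave average = {hw_avg:.4f} (1/pi = {1 / math.pi:.4f})")
print(f"half-wave ripple  = {ripple_factor(half_wave):.3f}")
print(f"full-wave ripple  = {ripple_factor(full_wave):.3f}")
```

Under these idealisations the results should land close to the 1.21 and roughly 0.48 quoted above; small differences in the third decimal place come from rounding in the quoted figures.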

8.3 TRANSFORMER UTILIZATION FACTOR

Transformer Utilization Factor (TUF) = DC power delivered to the load/AC rating of

transformer secondary

Transformer Utilization Factor for Half Wave Rectifier is 0.287

Transformer Utilization Factor for Full Wave Rectifier is 0.693

8.4 FULL WAVE RECTIFIER (FWR)

Since the half-wave rectifier circuit has a poor ripple factor (the ripple voltage is greater than the D.C. voltage), it cannot be used as such. So we now analyze a full-wave rectifier circuit. The circuit is shown in Fig. 8.3.

Fig 8.3 Full Wave Rectifier and Waveforms

8.5 BRIDGE RECTIFIERS

The circuit is shown in Fig. 8.4. During the positive half cycle, D1 and D2 are forward biased, while D3 and D4 are open. So current flows through D1 first, then through RL, and then through D2 back to the ground. During the negative half cycle, D3 and D4 are forward biased and they conduct. The current flows from D3 through RL to D4. Hence the direction of the current through RL is the same in both half cycles, and we get a full-wave rectified output. In the bridge rectifier circuit there is no need for a centre-tapped transformer. So the transformer secondary line-to-line voltage should be one half of that used for the FWR circuit employing two diodes.

Fig 8.4 Bridge rectifier Circuit

The D.C. load current is

Idc = 2Im/π

where

Im = Vm/(Rs + 2Rf + RL)

and the D.C. output voltage is

Vdc = 2Vm/π - Idc(Rs + 2Rf)

Vm = Peak value of the transformer secondary voltage

Rs = Resistance of transformer secondary

Rf = Forward resistance of a diode

RL = Load resistance

2Rf is used since two diodes in series conduct at the same time. The ripple factor and ratio of rectification are the same as for the full-wave rectifier.
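The bridge-rectifier relations for Im, Idc and Vdc can be evaluated directly. The following is a minimal sketch; the component values are hypothetical and serve only to show that the two expressions for the D.C. output agree.

```python
import math


def bridge_rectifier_dc(v_m, r_s, r_f, r_l):
    """Return (Im, Idc, Vdc) for a bridge rectifier feeding a resistive load."""
    i_m = v_m / (r_s + 2 * r_f + r_l)                   # peak current
    i_dc = 2 * i_m / math.pi                            # average load current
    v_dc = 2 * v_m / math.pi - i_dc * (r_s + 2 * r_f)   # D.C. output voltage
    return i_m, i_dc, v_dc


# Hypothetical example values: 17 V peak secondary, 2 ohm winding,
# 1 ohm diode forward resistance, 100 ohm load.
V_M, R_S, R_F, R_L = 17.0, 2.0, 1.0, 100.0
i_m, i_dc, v_dc = bridge_rectifier_dc(V_M, R_S, R_F, R_L)

print(f"Im  = {i_m * 1e3:.2f} mA")
print(f"Idc = {i_dc * 1e3:.2f} mA")
print(f"Vdc = {v_dc:.3f} V (Idc * RL = {i_dc * R_L:.3f} V)")
```

The last line prints Vdc next to Idc·RL; the two should match, since the expression for Vdc is simply the no-load value 2Vm/π less the drop across Rs + 2Rf.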

8.6 ADVANTAGES OF BRIDGE RECTIFIER

1. The peak inverse voltage (PIV) across each diode is Vm and not 2Vm as in the case of

FWR. Hence the Voltage rating of the diodes can be less.

2. Centre tapped transformer is not required.

3. There is no D.C. current flowing through the transformer since there is no centre tapping

and the return path is to the ground. So the transformer utilization factor is high.

8.7 DISADVANTAGES

1. Four diodes are to be used.

2. There is some voltage drop across each diode and so output voltage will be slightly less

compared to FWR. But these factors are minor compared to the advantages.

Bridge rectifiers are available in a package with all the 4 diodes incorporated in one

unit. It will have two terminals for A.C. Input and two terminals for DC output. Selenium

rectifiers are also available as a package.

8.8 COMPARISON OF RECTIFIER CIRCUITS

Chapter 9

AMPLIFIERS

9.1 Introduction

Any system, whether electrical, mechanical, hydraulic or pneumatic, may be considered to have at least one input and one output. If the system is to perform smoothly, we must be able to measure or control the output. If the input is 10 mV and the gain of the amplifier is 100, the output will be 1 V. If the input deviates to 9 mV or 11 mV, the output will be 0.9 V or 1.1 V. So there is no control over the output. But by introducing feedback between the output and the input, there can be control over the output. With a link between the output and the input, a change at the output can be made to adjust the signal applied at the input. By providing feedback, the input can be made to depend on the output.

Basic definitions

Ideally an amplifier should reproduce the input signal with a change in magnitude and with or without a change in phase. But some of the shortcomings of an amplifier circuit are:

1. Change in the value of the gain due to variation in supply voltage, temperature or components.

2. Distortion in the waveform due to nonlinearities in the operating characteristics of the amplifying device.

3. The amplifier may introduce noise (undesired signals).

The above drawbacks can be minimized by introducing feedback.

9.2 Voltage amplifier

This circuit is a 2-port network and it represents an amplifier (see Fig 9.1). Suppose Ri >> Rs; then the drop across Rs is very small and

Vi ≈ Vs

Fig 9.1 Equivalent circuit of a voltage amplifier

Similarly, if RL >> Ro, then Vo ≈ Av Vi, so that

Vo ≈ Av Vs

The output voltage is proportional to the input voltage. The constant of proportionality Av does not depend on the source or load impedances. Such a circuit is called a voltage amplifier. Therefore, for an ideal voltage amplifier,

Ri = ∞

Ro = 0

Av = Vo/Vi, with RL = ∞

Av represents the open-circuit voltage gain. For an ideal voltage amplifier, the output voltage is proportional to the input voltage and the constant of proportionality is independent of Rs and RL.
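The effect of finite input and output resistance can be sketched numerically. The code below assumes the usual two-port model (source Vs with series Rs, input resistance Ri, a controlled source Av·Vi with series Ro, and load RL), so that the overall gain is Av multiplied by two voltage-divider ratios; the resistor values are hypothetical.

```python
def loaded_voltage_gain(a_v, r_s, r_i, r_o, r_l):
    """Overall gain Vo/Vs of the two-port voltage-amplifier model."""
    input_divider = r_i / (r_i + r_s)    # Vi/Vs: fraction of Vs reaching the input
    output_divider = r_l / (r_l + r_o)   # fraction of Av*Vi reaching the load
    return a_v * input_divider * output_divider


A_V = 100.0  # open-circuit voltage gain (assumed)

# A practical case: Ri and RL only ten times larger than Rs and Ro.
practical = loaded_voltage_gain(A_V, r_s=1e3, r_i=10e3, r_o=1e3, r_l=10e3)

# Approaching the ideal case: Ri very large, Ro very small.
near_ideal = loaded_voltage_gain(A_V, r_s=1e3, r_i=1e9, r_o=1e-3, r_l=10e3)

print(f"practical overall gain : {practical:.2f}")
print(f"near-ideal overall gain: {near_ideal:.4f}")
```

As Ri grows and Ro shrinks, the overall gain approaches Av and stops depending on Rs and RL, which is the ideal voltage-amplifier condition stated above.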

9.3. COMPARATOR OR MIXER NETWORK

This is usually a differential amplifier. It has two inputs and gives a single output which is the difference of the two inputs.

Vo = Output voltage of the basic amplifier before sampling [see the block diagram of feedback]

Vi = Input voltage to the basic amplifier

Av = Voltage amplification = Vo/Vi

AI = Current amplification = Io/Ii

GM = Transconductance of basic amplifier = Io/Vi

RM = Transresistance = Vo/Ii

Here Io and Ii are the corresponding output and input currents. All four quantities, Av, AI, GM and RM, represent the transfer gains of the basic amplifier without feedback (though GM and RM are not actually gains). So the symbol 'A' is used to represent these quantities. Af is used to represent the ratio of the output to the input with feedback. This is called the transfer gain of the amplifier with feedback.

Chapter 10

CLASSIFICATION OF FEEDBACK NETWORKS

10.1 Feedback Concept

A sampling network samples the output voltage or current and this signal is applied to

the input through a feedback two port network. The block diagram representation is as shown

in Fig. 10.1.

Fig 10.1 Block Diagram of a feedback Network

Signal Source

It can be a voltage source Vs or a current source Is.

Feedback Network

It is a passive two-port network. It may contain resistors, capacitors or inductors, but usually a resistance is used as the feedback element. Here the output current is sampled and fed back. The feedback network is connected in series with the output. This is called current sampling or loop sampling.

Voltage feedback is distinguished from current feedback in this way: for voltage feedback, the feedback element (resistor) is in parallel with the output; for current feedback, the element is in series.

Feedback amplifiers are classified as shown below.

10.2 TYPES OF FEEDBACK

Feedback means that a portion of the output of the amplifier circuit is sent back, or fed back, to the input terminals. By this mechanism the characteristics of the amplifier circuit can be changed. Hence feedback is employed in circuits. There are two types of feedback:

1. Positive Feedback

2. Negative Feedback

Negative feedback is also called degenerative feedback, because the feedback signal opposes the input signal. But there are many advantages with negative feedback.

Advantages of Negative Feedback

1. Input impedance can be increased.

2. Output impedance can be decreased.

3. Transfer gain Af can be stabilized against variations in the h-parameters of the transistor with temperature etc., i.e. stability is improved.

4. Bandwidth is increased.

5. Linearity of operation is improved.

6. Distortion is reduced.

7. Noise is reduced.

Based on series/shunt and voltage/current, the four different types of feedback are:

1. Voltage series feedback.

2. Voltage shunt feedback.

3. Current series feedback.

4. Current shunt feedback.

Figure 10.2 below shows the different types of feedback

Fig 10.2 Different types of feedback

10.3 Improvement of Stability with Feedback

Stability here means the stability of the voltage gain. The voltage gain must have a stable value with frequency. Let the fractional change in Av without feedback be represented by S, and the fractional change in the gain with feedback, Av', by S':

S = dAv/Av

S' = dAv'/Av' = S/(1 + βAv)

For negative feedback, the denominator (1 + βAv) > 1, so S' < S,

i.e., the variation (% change) in Av' is less with negative feedback.

∴ Stability is improved.
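The desensitising effect of negative feedback can be seen with a small numerical sketch. It assumes the standard closed-loop relation Af = A/(1 + βA), consistent with the return difference (1 + βA) used in the summary of this chapter; the gain, feedback factor and 10 % drift below are hypothetical.

```python
def gain_with_feedback(a, beta):
    """Closed-loop gain for negative feedback: Af = A / (1 + beta * A)."""
    return a / (1.0 + beta * a)


A = 1000.0        # open-loop gain (assumed)
BETA = 0.1        # feedback factor (assumed)
A_DRIFT = 900.0   # open-loop gain after a 10 % fall

s_open = (A_DRIFT - A) / A  # fractional change without feedback

af = gain_with_feedback(A, BETA)
af_drift = gain_with_feedback(A_DRIFT, BETA)
s_closed = (af_drift - af) / af  # fractional change with feedback

print(f"open-loop change  : {s_open * 100:.2f} %")
print(f"closed-loop change: {s_closed * 100:.3f} %")
print(f"reduction factor  : {s_open / s_closed:.1f}")
```

With these values the closed-loop gain changes by roughly a hundredth of the open-loop change, in line with division by the return difference.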

10.4 Summary

An amplifier circuit is meant to provide voltage gain or current gain, or both in the form of power gain. But the other desirable characteristics of amplifier circuits are high input impedance Zi, low output impedance Zo, large bandwidth, low distortion, low noise and high stability. To achieve these characteristics, a part of the output signal is fed back and coupled to the input so as to oppose the input signal Vi in phase. So it is negative feedback. Though the gain reduces due to negative feedback, it is employed in amplifier circuits to get the other advantages.

• Feedback factor β = Vf/Vo. The product βA is called the return ratio. (1 + βA) is called the return difference D or desensitivity factor.

• The different types of feedback are (i) voltage series (ii) current series (iii) voltage shunt and (iv) current shunt.

• With voltage feedback (series or shunt) output resistance Ro decreases.

• With current feedback (series or shunt) Ro increases.

• With series feedback (current or voltage) input resistance Ri increases.

• With shunt feedback (current or voltage) Ri decreases.

• With negative feedback, distortion, noise and gain are reduced by a factor (1 + βA). Bandwidth, upper cutoff frequency f2 and stability improve by (1 + βA).

• If the feedback signal is proportional to voltage, it is voltage feedback. If the feedback signal is proportional to current, it is current feedback. If the feedback signal Vf comes in series with the input signal Vi, it is series feedback. If Vf is in parallel with Vi, it is shunt feedback.

• If the feedback signal Vf is out of phase with Vi, opposing it, it is negative feedback. If Vf is in phase with Vi, adding to or aiding it, it is positive feedback.

Chapter 11

OSCILLATORS

11.1 Introduction

An oscillator is a source of AC voltage or current: we get an AC output from the oscillator circuit. In alternators (AC generators), mechanical energy, itself often derived from thermal energy, is converted to electric energy at 50 Hz. In the oscillator circuits described here, electric energy in the form of DC is converted into electric energy in the form of AC. Inverters in electrical engineering also convert DC to AC, but there only the output power is the criterion and not the actual shape of the waveform.

An amplifier differs from an oscillator in that an amplifier requires some AC input, which is amplified, whereas an oscillator does not need any external AC signal. This is shown in Fig. 11.1 below:

Fig 11.1 The difference between an amplifier and an oscillator

For an amplifier, the additional power due to amplification is derived from the DC bias supply. So an amplifier effectively converts DC to AC, but it needs an AC input: without AC input, there is no AC output. In oscillator circuits DC power is also converted to AC, but there is no AC input signal. So the difference between an amplifier and an oscillator is that in amplifier circuits the conversion of DC power to AC is controlled by the AC input signal, while in oscillators it is not. There are two types of oscillator circuits:

1. Harmonic Oscillators

2. Relaxation Oscillators

Harmonic oscillators produce sine waves. Relaxation oscillators produce sawtooth waves, square waves, etc. Oscillator circuits employ both active and passive devices. Active devices convert the DC power to AC. Passive components determine the frequency of oscillation.

11.2 PERFORMANCE MEASURES OF OSCILLATOR CIRCUITS:

1. Stability: This is determined by the passive components. R, C and L determine the frequency of oscillation. Capacitors should be of high quality with low leakage, so silver mica and ceramic capacitors are widely used.

2. Amplitude stability: To get a large output voltage, amplification is required.

3. Output power: Class A, B and C operation can be used. Class C gives the largest output power but the harmonics are higher. Class A gives less output power but the harmonics are low.

4. Harmonics: Harmonics are undesirable frequency components.

11.3 SINUSOIDAL OSCILLATORS

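Figure 11.2 shows an amplifier, a feedback network and an input mixing circuit not yet connected to form a closed loop. The amplifier provides an output signal Xo as a consequence of the signal Xi applied directly to the amplifier input terminal. The output of the feedback network is Xf = βXo = AβXi, and the output of the mixing circuit, which here simply inverts the feedback signal, is Xf' = -Xf = -AβXi.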

Fig 11.2 Sinusoidal Amplifier

11.4 BARKHAUSEN CRITERION

We assume that the circuit operates only in the linear region and that the amplifier or feedback network contains reactive elements. For a sinusoidal waveform, Xf' = Xi means that the amplitude, phase and frequency of Xi and Xf' are identical. The frequency of a sinusoidal oscillator is determined by the condition that the loop-gain phase shift is zero at that frequency. For oscillator circuits positive feedback must be present, i.e., Vf must be in phase with Vi so that it adds to Vi. When the active device (BJT or FET) gives a 180° phase shift, the feedback network must produce another 180° phase shift so that the net phase shift is 0° or 360° and Vf is in phase with Vi, making it positive feedback. Oscillations will not be sustained if, at the oscillator frequency, the magnitude of the product of the transfer gain of the amplifier and of β is less than unity.

The condition -Aβ = 1 is called the Barkhausen criterion,

i.e. |βA| = 1 and phase of -Aβ = 0

11.5 R - C PHASE-SHIFT OSCILLATOR (USING JFET)

This is voltage series feedback. FET amplifier is followed by three cascaded

arrangements of a capacitor C, resistor R. The output of the last RC combination is returned

to the gate. This forms the feedback connection. The FET amplifier shifts the phase of

voltage appearing at the gate by 180°. The RC network shifts the phase by additional amount.

At some frequency, the phase-shift introduced by this network will be exactly 180°. The total

phase-shift at this frequency, from the gate around the circuit and back to the gate is + 180 -

180 = 0°. At this particular frequency, the circuit will oscillate.
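The frequency at which the three-section RC network contributes exactly 180° can be found numerically. The sketch below models each section as a series capacitor followed by a shunt resistor, ignores loading by the FET, and cascades the sections with 2x2 transmission (ABCD) matrices. The component values are hypothetical, and the closed-form result f = 1/(2πRC√6) with a network attenuation of 1/29 is the standard textbook value for this idealised ladder rather than something derived in these notes.

```python
import cmath
import math


def matmul2(m, n):
    """Multiply two 2x2 matrices given as nested tuples."""
    return (
        (m[0][0] * n[0][0] + m[0][1] * n[1][0],
         m[0][0] * n[0][1] + m[0][1] * n[1][1]),
        (m[1][0] * n[0][0] + m[1][1] * n[1][0],
         m[1][0] * n[0][1] + m[1][1] * n[1][1]),
    )


def ladder_beta(freq_hz, r, c, sections=3):
    """Vout/Vin of an unloaded ladder of identical series-C, shunt-R sections."""
    z_c = 1 / (1j * 2 * math.pi * freq_hz * c)
    section = ((1 + z_c / r, z_c), (1 / r, 1))  # ABCD matrix of one section
    total = ((1, 0), (0, 1))
    for _ in range(sections):
        total = matmul2(total, section)
    return 1 / total[0][0]  # open-circuit output: Vout/Vin = 1/A


R, C = 10e3, 10e-9  # hypothetical values: 10 kilohm, 10 nF
f_osc = 1 / (2 * math.pi * R * C * math.sqrt(6))
beta = ladder_beta(f_osc, R, C)
amp_gain = 1 / beta  # inverting amplifier gain that makes the loop gain unity

print(f"f_osc             = {f_osc:.1f} Hz")
print(f"|beta|            = {abs(beta):.5f} (1/29 = {1 / 29:.5f})")
print(f"phase of beta     = {abs(math.degrees(cmath.phase(beta))):.2f} degrees")
print(f"required amp gain = {amp_gain.real:.2f}")
```

At this frequency the network shifts the phase by 180° and attenuates by 29, so an inverting amplifier with a gain of magnitude 29 or more satisfies the Barkhausen condition for this idealised circuit.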

11.6 Hartley and Colpitts Oscillators

The Hartley and Colpitts Oscillators can be studied from the figure 11.3 given below

Fig 11.3 Colpitts and Hartley Oscillators Circuit Diagram

FOR HARTLEY OSCILLATOR

f = [2π√(Lt C)]^-1

Lt = L1 + L2 (mutual inductance neglected)

FOR COLPITTS OSCILLATOR

f = [2π√(L Ct)]^-1

Ct = C1C2/(C1 + C2)
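Both oscillators resonate at the frequency of their LC tank, f = 1/(2π√(LC)). As a minimal sketch, the code below assumes that the Hartley tank uses the two inductors in series (mutual inductance neglected) and that the Colpitts tank uses the series combination Ct of the two capacitors; the component values are hypothetical.

```python
import math


def lc_frequency(l_total, c_total):
    """Resonant frequency of an LC tank: f = 1 / (2*pi*sqrt(L*C))."""
    return 1 / (2 * math.pi * math.sqrt(l_total * c_total))


def hartley_frequency(l1, l2, c):
    """Hartley: tapped inductor, total inductance L1 + L2 (no mutual coupling)."""
    return lc_frequency(l1 + l2, c)


def colpitts_frequency(l, c1, c2):
    """Colpitts: tapped capacitor, Ct = C1*C2 / (C1 + C2)."""
    c_t = c1 * c2 / (c1 + c2)
    return lc_frequency(l, c_t)


# Hypothetical values chosen so both tanks see 100 uH and 1 nF in total.
f_hartley = hartley_frequency(50e-6, 50e-6, 1e-9)
f_colpitts = colpitts_frequency(100e-6, 2e-9, 2e-9)

print(f"Hartley : {f_hartley / 1e3:.1f} kHz")
print(f"Colpitts: {f_colpitts / 1e3:.1f} kHz")
```

Because the two example tanks have the same total L and C, both functions return the same frequency, a little above 500 kHz.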

11.7 WIEN BRIDGE OSCILLATORS

In this circuit, a balanced bridge is used as the feedback network. The active element is an operational amplifier. It employs a lead-lag network. The frequency fo can be varied in the ratio of 10:1, compared to 3:1 in other oscillator circuits. The external voltage Vo is applied between points 3 and 4, as shown in Fig. 11.4.

Fig 11.4 Wien Bridge Oscillator

11.8 CRYSTAL OSCILLATORS

When certain solid materials are deformed, they generate an electric charge within them. This effect is reversible in that, if a charge is applied, the material will mechanically deform in response. This is called the piezoelectric effect.

Naturally available materials: 1. Quartz 2. Rochelle salt.

Synthetic materials: 1. Lithium sulphate 2. Ammonium dihydrogen phosphate, PZT (Lead Zirconate Titanate), BaTiO3 (Barium Titanate).

If the crystal is properly mounted, deformations take place within the crystal, and an electromechanical system is formed which will vibrate when properly excited. The resonant frequency and Q depend upon the crystal dimensions etc. With these, frequencies from a few kHz up to the MHz range and Q in the range from several thousand to 100,000 can be obtained. Since Q is high and, for quartz, the characteristics are extremely stable with respect to time, temperature etc., very stable oscillators can be designed. The frequency stability will be ± 0.001 %. This is the same as ± 10 parts per million (10 ppm).

Values for a 90 kHz crystal are L = 137 H, C = 0.023 pF and R = 15 kΩ, corresponding to Q = 5,500. The dimensions of such a crystal will be 30 x 4 x 1.5 mm. C' is the electrostatic capacitance between the electrodes with the crystal as the dielectric; C' = 3.5 pF and is much larger than C. When crystal slabs are cut in the proper directions with regard to the crystal axes, a potential difference exists between the faces of the slab when pressure is brought to bear on them. Conversely, if the slab is placed in an electrostatic field, it undergoes deformation (Fig. 11.5).
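The quoted crystal values can be cross-checked with a short calculation. The sketch below assumes the usual electrical equivalent circuit of a crystal (a series R-L-C branch in parallel with the electrode capacitance C'), which gives a series resonance at 1/(2π√(LC)) and a slightly higher parallel resonance; Q is estimated as ωL/R at series resonance.

```python
import math

L = 137.0           # henries, from the text
C = 0.023e-12       # farads, from the text
R = 15e3            # ohms, from the text
C_SHUNT = 3.5e-12   # farads, electrode capacitance C' from the text

# Series resonance of the R-L-C branch.
f_series = 1 / (2 * math.pi * math.sqrt(L * C))

# Parallel resonance: C and C' act in series around the loop.
f_parallel = f_series * math.sqrt(1 + C / C_SHUNT)

# Quality factor estimated at series resonance.
q = 2 * math.pi * f_series * L / R

print(f"series resonance  : {f_series / 1e3:.2f} kHz")
print(f"parallel resonance: {f_parallel / 1e3:.2f} kHz")
print(f"estimated Q       : {q:.0f}")
```

The series resonance comes out very close to 90 kHz, and the two resonances differ by only a fraction of a percent because C' is so much larger than C. The Q estimate from ωL/R is of the same order as the 5,500 quoted above; the small difference is presumably due to rounding in the quoted component values.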

If the electric field is an alternating one, with a frequency which sets the slab into mechanical resonance, the slab will physically vibrate vigorously. Such a crystal can be employed to maintain an oscillation of great frequency stability. When the L-C circuit in the plate circuit is tuned close to the crystal resonant frequency, steady oscillations will be established. These are maintained by C, whose value is small. By placing the crystal between the gate and source of the FET amplifier, and feeding back a small A.C. voltage from the output to keep the crystal vibrating, the circuit becomes an oscillator with precise stability. The accuracy is approximately 0.01 % and the frequency range is 0.1 to 20 MHz.

By keeping the crystal in an oven, the accuracy can be improved to 0.001 %.

Fig 11.5 Crystal Oscillator

II

MICROWAVE

ENGINEERING

Chapter 1

INTRODUCTION

1.1 Microwave Frequencies

The term microwave is used to describe electromagnetic waves with wavelengths ranging from 1 cm to 1 m. The corresponding frequency range is 300 MHz for 1-m-wavelength waves up to 30 GHz for 1-cm-wavelength waves. Electromagnetic waves with wavelengths ranging from 1 to 10 mm are called millimetre waves. The infrared radiation spectrum comprises electromagnetic waves with wavelengths in the range 1 μm (10^-6 m) up to 1 mm. Beyond the infrared range are the visible optical spectrum, the ultraviolet spectrum, and finally x-rays.
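The wavelength and frequency limits quoted here follow from f = c/λ for propagation in free space. A minimal sketch of the conversion is given below; the function names are illustrative.

```python
C0 = 299_792_458.0  # speed of light in vacuum, m/s


def freq_from_wavelength(wavelength_m):
    """Free-space frequency in Hz for a given wavelength in metres."""
    return C0 / wavelength_m


def wavelength_from_freq(freq_hz):
    """Free-space wavelength in metres for a given frequency in Hz."""
    return C0 / freq_hz


for label, wavelength in (("1 m", 1.0), ("1 cm", 1e-2), ("1 mm", 1e-3)):
    f = freq_from_wavelength(wavelength)
    print(f"wavelength {label:>4}: {f / 1e9:.3f} GHz")

for f_ghz in (1.0, 100.0):
    lam = wavelength_from_freq(f_ghz * 1e9)
    print(f"{f_ghz:5.0f} GHz: wavelength {lam * 100:.2f} cm")
```

The first loop reproduces the 300 MHz and 30 GHz band edges to within rounding; the second gives the wavelengths at 1 GHz and 100 GHz, roughly 30 cm and 3 mm.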

Several different classification schemes are in use to designate frequency bands in the

electromagnetic spectrum. These classification schemes are summarized in Tables 1.1 and

1.2. The radar band classification came into use during World War II and is still in common

use today even though the new military band classification is the recommended one. In the

UHF band up to around a frequency of 1 GHz, most communications circuits are constructed

using lumped-parameter circuit components. In the frequency range from 1 up to 100 GHz,

lumped circuit elements are usually replaced by transmission-line and waveguide

components. Thus by the term microwave engineering we shall mean generally the

engineering and design of information-handling systems in the frequency range from 1 to 100

GHz corresponding to wavelengths as long as 30 cm and as short as 3 mm. At shorter

wavelengths we have what can be called optical engineering since many of the techniques

used are derived from classical optical techniques. The characteristic feature of microwave

engineering is the short wavelengths involved, these being of the same order of magnitude as

the circuit elements and devices employed.

Table 1.1 shows the various microwave frequency bands, their designations, and the general applications of each band in modern science and technology.

Table 1.1 Different bands of Frequencies and their applications

The short wavelengths involved in turn mean that the propagation time for electrical

effects from one point in a circuit to another point is comparable with the period of the

oscillating currents and charges in the system. As a result, conventional low-frequency circuit

analysis based on Kirchhoff's laws and voltage-current concepts no longer suffices for an

adequate description of the electrical phenomena taking place. It is necessary instead to carry

out the analysis in terms of a description of the electric and magnetic fields associated with

the device. In essence, it might be said, microwave engineering is applied electromagnetic

fields engineering. For this reason the successful engineer in this area must have a good

working knowledge of electromagnetic field theory.

Table 1.2 represents modern labelling of frequency bands according to IEEE

standards.

Table 1.2 IEEE Frequency band Designation

There is no distinct frequency boundary at which lumped-parameter circuit elements

must be replaced by distributed circuit elements. With modern technological processes it is

possible to construct printed-circuit inductors that are so small that they retain their lumped-

parameter characteristics at frequencies as high as 10 GHz or even higher. Likewise, optical

components, such as parabolic reflectors and lenses, are used to focus microwaves with

wavelengths as long as 1 m or more. Consequently, the microwave engineer will frequently

employ low-frequency lumped-parameter circuit elements, such as miniaturized inductors

and capacitors, as well as optical devices in the design of a microwave system.

1.2 Microwave History of Development

The great interest in microwave frequencies arises for a variety of reasons. Basic

among these is the ever-increasing need for more radio-frequency-spectrum space and the

rather unique uses to which microwave frequencies can be applied. When it is noted that the frequency range 10^9 to 10^12 Hz contains a thousand sections like the frequency spectrum from 0 to 10^9 Hz, the value of developing the microwave band as a means of increasing the available usable frequency spectrum may be readily appreciated.

In more recent years microwave frequencies have also come into widespread use in

communication links, generally referred to as microwave links. Since the propagation of

microwaves is effectively along line-of-sight paths, these links employ high towers with

reflector or lens-type antennas as repeater stations spaced along the communication path.

Such links are a familiar sight to the motorist traveling across the country because of their

frequent use by highway authorities, utility companies, and television networks. A further

interesting means of communication by microwaves is the use of satellites as microwave

relay stations. The first of these, the Telstar, launched in July 1962, provided the first

transmission of live television programs from the United States to Europe.

At the present time most communication systems are shifting to the use of digital

transmission, i.e., analogue signals are digitized before transmission. Microwave digital

communication system development is progressing rapidly. In the early systems simple

modulation schemes were used and resulted in inefficient use of the available frequency

spectrum. The development of 64-state quadrature amplitude modulation (64-QAM) has

made it possible to transmit 2,016 voice channels within a single 30-MHz RF channel. This is

competitive with FM analog modulation schemes for voice.

1.3 Microwave Applications

Even though such uses of microwaves are of great importance, the applications of

microwaves and microwave technology extend much further, into a variety of areas of basic

and applied research, and including a number of diverse practical devices, such as microwave

ovens that can cook a small roast in just a few minutes.

Waveguides periodically loaded with shunt susceptance elements support slow waves

having velocities much less than the velocity of light, and are used in linear accelerators.

These produce high-energy beams of charged particles for use in atomic and nuclear

research. The slow-traveling electromagnetic waves interact very efficiently with charged-

particle beams having the same velocity, and thereby give up energy to the beam. Another possibility is for the energy in an electron beam to be given up to the electromagnetic wave, with resultant amplification.

Sensitive microwave receivers are used in radio astronomy to detect and study the

electromagnetic radiation from the sun and a number of radio stars that emit radiation in this

band. Such receivers are also used to detect the noise radiated from plasmas (an

approximately neutral collection of electrons and ions, e.g., a gas discharge). The information

obtained enables scientists to analyze and predict the various mechanisms responsible for

plasma radiation. Microwave radiometers are also used to map atmospheric temperature

profiles, moisture conditions in soils and crops, and for other remote-sensing applications as

well.

The development of the laser, a generator of essentially monochromatic (single-

frequency) coherent-light waves, has stimulated a great interest in the possibilities of

developing communication systems at optical wavelengths. This frequency band is

sometimes referred to as the ultra-microwave band. With some modification, a good deal of

the present microwave technology can be exploited in the development of optical systems.

For this reason, familiarity with conventional microwave theory and devices provides a good

background for work in the new frontiers of the electromagnetic spectrum.

The domestic microwave oven operates at 2,450 MHz and uses a magnetron tube with

a power output of 500 to 1000 W. For industrial heating applications, such as drying grain,

manufacturing wood and paper products, and material curing, the frequencies of 915 and

2,450 MHz have been assigned. Microwave radiation has also found some application for

medical hyperthermia or localized heating of tumours.

Chapter 2

WAVEGUIDES –I

2.1 Modes of Propagation

For a large variety of waveguides of practical interest it turns out that all the boundary

conditions can be satisfied by fields that do not have all components present. Specifically, for

transmission lines, the solution of interest is a transverse electromagnetic wave with

transverse components only, that is, Ez = Hz = 0, whereas for waveguides, solutions with Ez =

0 or Hz = 0 are possible. Because of the widespread occurrence of such field solutions, the

following classification of solutions is of particular interest.

1. Transverse electromagnetic (TEM) waves. For TEM waves, Ez = Hz = 0. The electric

field may be found from the transverse gradient of a scalar function Φ(x, y), which is a

function of the transverse coordinates only and is a solution of the two-dimensional

Laplace equation.

2. Transverse electric (TE), or H, modes. These solutions have Ez = 0, but Hz ≠ 0. All the

field components may be derived from the axial component Hz of magnetic field.

3. Transverse magnetic (TM), or E, modes. These solutions have Hz = 0, but Ez ≠ 0. The

field components may be derived from Ez.

In some cases it will be found that a TE or TM mode by itself will not satisfy all the

boundary conditions. However, in such cases linear combinations of TE and TM modes may

be used, since such linear combinations always provide a complete and general solution.

Although other possible types of wave solutions may be constructed, the above three types

are the most useful in practice and by far the most commonly used ones.

The appropriate equations to be solved to obtain TEM, TE, or TM modes will be

derived below by placing Ez and Hz, Ez, and Hz, respectively, equal to zero in Maxwell's

equations.

2.2 Rectangular vs Circular Waveguides

Hollow-pipe waveguides do not support a TEM wave. In hollow-pipe waveguides the waves

are of the TE and TM variety. The waveguide with a rectangular cross section is the most

widely used one. It is available in sizes for use at frequencies from 320 MHz up to 333 GHz.

The WR-2300 waveguide for use at 320 MHz has internal dimensions of 58.42 cm by 29.21 cm

and is a very large duct. By contrast, the WR-3 waveguide for use at 333 GHz has internal

dimensions of 0.034 in by 0.017 in and is a very miniature structure. The standard WR-90 X-

band waveguide has internal dimensions of 0.9 in by 0.4 in and is used in the frequency range

of 8.2 to 12.5 GHz. The rectangular waveguide is widely used to couple transmitters and

receivers to the antenna. For high-power applications the waveguide is filled with an inert gas

such as nitrogen and pressurized in order to increase the voltage breakdown rating.

Circular waveguides are not as widely used as rectangular waveguides but are

available in diameters of 25.18 in down to 0.239 in to cover the frequency range 800 MHz up

to 116 GHz.

2.3 Rectangular Waveguides

Fig 2.1 Rectangular Waveguide

Modes of Propagation

The rectangular waveguide with a cross section as illustrated in Fig. 2.1 is an example of

a wave guiding device that will not support a TEM wave. Consequently, it turns out that

unique voltage and current waves do not exist, and the analysis of the waveguide properties

has to be carried out as a field problem rather than as a distributed-parameter-circuit problem.

In a hollow cylindrical waveguide a transverse electric field can exist only if a time-

varying axial magnetic field is present. Similarly, a transverse magnetic field can exist only if

either an axial displacement current or an axial conduction current is present, as Maxwell's

equations show. Since a TEM wave does not have any axial field components and there is no

center conductor on which a conduction current can exist, a TEM wave cannot be propagated

in a cylindrical waveguide.

The types of waves that can be supported (propagated) in a hollow empty waveguide are

the TE and TM modes. The essential properties of all hollow cylindrical waveguides are the

same, so that an understanding of the rectangular guide provides insight into the behavior of

other types as well. As for the case of the transmission line, the effect of losses is initially

neglected. The attenuation is computed later by using the perturbation method given earlier,

together with the loss-free solution for the currents on the walls.

The essential properties of empty loss-free waveguides, which the detailed analysis to follow

will establish, are that there is a double infinity of possible solutions for both TE and TM

waves. These waves, or modes, may be labeled by two identifying integer subscripts n and

m, for example, TEmn.

The integers n and m pertain to the number of standing-wave interference maxima

occurring in the field solutions that describe the variation of the fields along the two

transverse coordinates. It will be found that each mode has associated with it a characteristic

cut-off frequency fc,mn below which the mode does not propagate and above which the mode

does propagate.

The TE10 mode is the dominant mode in rectangular waveguides.
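
The cut-off behaviour described above can be illustrated numerically. The sketch below assumes the standard closed-form result for an empty, loss-free rectangular guide, fc = (c/2) sqrt((m/a)^2 + (n/b)^2), which is not derived in these notes. It also assumes that m counts field variations across the width a and n across the height b. The function name cutoff_frequency_hz is purely illustrative. The dimensions are those quoted earlier for the WR-90 guide.

```python
import math

C0 = 299_792_458.0  # speed of light in vacuum, m/s
INCH = 0.0254       # metres per inch


def cutoff_frequency_hz(m, n, a, b):
    """Cut-off frequency of the (m, n) mode of an empty rectangular guide.

    Assumption: m counts half-wave variations across the width a and
    n across the height b; a and b are given in metres.
    """
    if m == 0 and n == 0:
        raise ValueError("m and n cannot both be zero")
    return 0.5 * C0 * math.hypot(m / a, n / b)


# WR-90 internal dimensions as quoted in the text: 0.9 in by 0.4 in.
a, b = 0.9 * INCH, 0.4 * INCH
for m, n in [(1, 0), (2, 0), (0, 1), (1, 1)]:
    fc = cutoff_frequency_hz(m, n, a, b)
    print(f"mode {m}{n}: cut-off {fc / 1e9:.3f} GHz")
```

Of the modes listed, the (1, 0) mode has the lowest cut-off frequency, which is what makes TE10 the dominant mode.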

For a rectangular waveguide with a width a equal to twice the height b, the maximum

bandwidth of operation over which only the dominant TE10 mode propagates is a 2:1 band.

For some system applications it is necessary to have a waveguide that operates with only a

single mode of propagation over much larger bandwidths. A transmission line supporting

only a TEM mode can fulfil this requirement but must then have cross-sectional dimensions

that are small relative to the shortest wavelength of interest. A coaxial transmission line will

support higher-order TE and TM modes in addition to the TEM mode. Thus, to avoid

excitation of a higher-order mode of propagation, the outer radius must be kept small relative

to the wavelength. The small cross section implies a relatively large attenuation; so some

other form of waveguide is needed.
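
The 2:1 single-mode band quoted above for a = 2b can be checked with a short calculation. This is only a sketch: it assumes the standard cut-off formula for an empty rectangular guide, and the helper names cutoff_hz and single_mode_band are hypothetical. It finds the two lowest distinct cut-off frequencies; the dominant mode propagates alone between them.

```python
import math

C0 = 299_792_458.0  # speed of light in vacuum, m/s


def cutoff_hz(m, n, a, b):
    """Assumed cut-off formula for an empty rectangular guide (a, b in metres)."""
    return 0.5 * C0 * math.hypot(m / a, n / b)


def single_mode_band(a, b, max_index=3):
    """Return (lowest cut-off, next higher cut-off) in Hz.

    Only the index pair (0, 0) is excluded, so the search covers the TE
    index pairs; TM modes need both indices nonzero and therefore lie
    at or above the values found here.
    """
    cutoffs = sorted({
        round(cutoff_hz(m, n, a, b), 3)  # rounding merges degenerate modes
        for m in range(max_index + 1)
        for n in range(max_index + 1)
        if (m, n) != (0, 0)
    })
    return cutoffs[0], cutoffs[1]


a = 0.02286            # example width in metres
low, high = single_mode_band(a, a / 2)
print(f"single-mode band: {low / 1e9:.3f} GHz to {high / 1e9:.3f} GHz")
print(f"ratio: {high / low:.3f}")
```

With b = a/2 the TE20 and TE01 cut-offs coincide at twice the TE10 cut-off, which is why the band cannot exceed 2:1.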

Chapter 3

WAVEGUIDE COMPONENTS-1

3.1 ATTENUATORS

Attenuators may be of the fixed or the variable type; the first is used only if a fixed

amount of attenuation is to be provided. For bridge setups used to measure transmission

coefficients, the variable attenuator is used. There are many ways of constructing a variable

attenuator; only one type, the rotary attenuator, is considered in detail here.

A simple form of attenuator consists of a thin tapered resistive card, of the type used for matched

terminations, whose depth of penetration into the waveguide is adjustable.

Perhaps the most satisfactory precision attenuator developed is the rotary attenuator,

which we now examine in some detail. The basic components of this instrument consist of two

rectangular-to-circular waveguide tapered transitions, together with an intermediate section of

circular waveguide that is free to rotate. A thin tapered resistive card is placed at the output

end of each transition section and oriented parallel to the broad walls of the rectangular

guide. A similar resistive card is located in the intermediate circular-guide section. The

incoming TE10 mode in rectangular guide is transformed into the TE11 mode in the circular

guide with negligible reflection by means of the tapered transition. The polarization of the

TE11 mode is such that the electric field is perpendicular to the thin resistive card in the

transition section. As such, this resistive card has a negligible effect on the TE11 mode. Since

the resistive card in the centre section can be rotated, its orientation relative to the electric

field of the incoming TE11 mode can be varied so that the amount by which this mode is

attenuated is adjustable.
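
The dependence of attenuation on card angle can be sketched numerically. The law used below is the idealized textbook result and is not stated in these notes, so treat it as an assumption. Let theta be the rotation of the centre card away from the position where it is parallel to the end cards. The centre card absorbs the field component parallel to it, and the output card then absorbs the component parallel to itself, so the transmitted field scales as cos^2(theta). The attenuation is then -40 log10(cos theta) dB. The function name is hypothetical.

```python
import math


def rotary_attenuation_db(theta_deg):
    """Ideal rotary-attenuator attenuation in dB for a centre-card angle theta.

    Assumption: the transmitted field is proportional to cos^2(theta),
    with perfectly absorbing cards and no residual insertion loss.
    """
    c = abs(math.cos(math.radians(theta_deg)))
    if c < 1e-12:
        return math.inf  # card parallel to the field: ideally total absorption
    return 40.0 * math.log10(1.0 / c)


for theta in (0, 15, 30, 45, 60, 75):
    print(f"theta = {theta:2d} deg -> {rotary_attenuation_db(theta):6.2f} dB")
```

In this idealized model the attenuation depends only on the rotation angle and not on frequency, which is one reason the rotary attenuator is suited to precision work.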

3.2 Electronically Controlled Attenuators

For applications in various microwave systems, it is desirable to have an attenuator

whose attenuation can be controlled by the application of a suitable signal, such as a dc

voltage or a bias current. Two devices that are suitable for use in an electronically controlled

attenuator are the PIN diode and a field-effect transistor. These devices can be used as

variable resistors whose resistance is controlled by the applied signal.
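
As a rough illustration of how a variable resistance sets the attenuation, the sketch below treats the controlled device as a pure resistance R connected in shunt across a matched line of characteristic impedance Z0. That topology is an assumption made here for simplicity; the notes do not specify a circuit, and practical PIN-diode attenuators use more elaborate networks to stay matched. For this case |S21| = 2R / (2R + Z0), so the attenuation is 20 log10(1 + Z0 / (2R)) dB. The function name and the 50-ohm default are illustrative.

```python
import math


def shunt_attenuation_db(r_ohm, z0_ohm=50.0):
    """Attenuation in dB of a shunt resistance on a matched line.

    Assumption: ideal resistor, source and load both equal to z0_ohm.
    """
    if r_ohm <= 0:
        raise ValueError("resistance must be positive")
    return 20.0 * math.log10(1.0 + z0_ohm / (2.0 * r_ohm))


# Lower resistance (for a PIN diode, higher forward bias) gives more attenuation.
for r in (1000.0, 100.0, 25.0, 5.0):
    print(f"R = {r:6.1f} ohm -> {shunt_attenuation_db(r):5.2f} dB")
```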

3.3 PHASE SHIFTERS

A phase shifter is an instrument that produces an adjustable change in the phase angle of the

wave transmitted through it. Ideally, it should be perfectly matched to the input and output lines

and should produce zero attenuation. These requirements can be met to within a reasonable

degree of approximation. There are a variety of designs for phase shifters of the mechanically

adjustable type. The rotary phase shifter is generally regarded as the best of these.

Phase shifters are used to change the transmission phase angle (phase of S21) of a network.

Ideal phase shifters provide low insertion loss, and equal amplitude (or loss) in all phase

states. While the loss of a phase shifter is often overcome using an amplifier stage, the less

loss, the less power that is needed to overcome it. Most phase shifters are reciprocal

networks, meaning that they work effectively on signals passing in either direction. Phase

shifters can be controlled electrically, magnetically or mechanically. Most of the phase

shifters described here are passive reciprocal networks; we will concentrate

mainly on those that are electrically-controlled.

While the applications of microwave phase shifters are numerous, perhaps the most important

application is within a phased array antenna system (a.k.a. electrically steerable array, or

ESA), in which the phase of a large number of radiating elements is controlled to force the

electromagnetic wave to add up at a particular angle to the array. The total phase variation of

a phase shifter need only be 360 degrees to control an ESA of moderate bandwidth. Networks

that stretch phase by more than 360 degrees are often called line stretchers, and are constructed

similarly to the switched-line phase shifters to be described below.
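
The remark that 360 degrees of phase range suffices can be illustrated with a small calculation for a uniform linear array. The sketch assumes the usual narrowband steering relation, in which element k needs a phase of -360 k (d / lambda) sin(theta0) degrees to steer the beam to angle theta0 from broadside. The sign convention and the function name are assumptions made for this example. Each required phase is then wrapped into the range 0 to 360 degrees.

```python
import math


def element_phases_deg(num_elements, spacing_wavelengths, steer_deg):
    """Phase-shifter settings in degrees, wrapped to [0, 360), per element.

    Assumptions: uniform linear array, narrowband operation, steering
    angle measured from broadside, progressive phase taken as negative.
    """
    step = -360.0 * spacing_wavelengths * math.sin(math.radians(steer_deg))
    return [(k * step) % 360.0 for k in range(num_elements)]


# Eight elements at half-wavelength spacing, beam steered 30 degrees off broadside.
for k, phase in enumerate(element_phases_deg(8, 0.5, 30.0)):
    print(f"element {k}: {phase:7.2f} deg")
```

Wrapping discards whole multiples of 360 degrees, which is harmless at a single frequency but produces beam-pointing errors as the bandwidth grows. This is consistent with the statement above that a 360-degree range serves arrays of moderate bandwidth.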