Professional Documents

Culture Documents

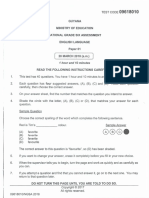

Db2cert7014 A4

Uploaded by

api-3731933Original Title

Copyright

Available Formats

Share this document

Did you find this document useful?

Is this content inappropriate?

Report this DocumentCopyright:

Available Formats

Db2cert7014 A4

Uploaded by

api-3731933Copyright:

Available Formats

Monitoring DB2 Activity

Presented by DB2 Developer Domain

http://www7b.software.ibm.com/dmdd/

Table of Contents

1. Introduction

2. Capturing snapshots

3. Capturing event monitor data

4. Analyzing SQL with Explains

5. Using the Health Center and Memory Visualizer

6. Leveraging the DB2 Governor and the Query Patroller

7. Conclusion

Section 1. Introduction

What this tutorial is about

Tuning and configuring a DB2® database can be a complex process that sometimes

overwhelms new DBAs. There are, however, a great number of tools, functions, and

applications included with DB2 that make this task much simpler. The release of DB2

Universal Database™ V8.1 includes new tools and information and improves the

usability of existing performance resources.

This tutorial is designed to assist you with your preparation for the DB2 V8.1 for Linux,

UNIX®, and Windows™ Database Administration exam (#701). It focuses on Part 4 of

the exam, entitled "Monitoring DB2 Activity." The tutorial covers the different options a

DBA has for tuning and monitoring their DB2 environments. It is broken down into a

series of sections with each focusing on available information or configurations. At the

beginning of each section is a list of objectives that you should achieve before you

move on to the next section.

All references to DB2 in this tutorial refer to DB2 UDB V8.1 for Linux, UNIX, and

Windows, and do not apply to other platforms.

Who should take this tutorial

You should take this tutorial if you want to learn more about measuring and tuning the

performance of DB2.

This tutorial is written for DB2 database administrators who have a basic understanding

of DB2. It will also be useful for developers who want to be able to understand

introductory performance and monitoring information for DB2. Both groups of

individuals should have a good knowledge of SQL.

Software requirements

To complete the steps in this tutorial, you will need to have a version of DB2 UDB V8.1

installed on a system. Many of the tools and functions described in the tutorial are new

to V8.1, so DB2 V7.1 or V7.2 cannot be used.

You can download a free trial version of DB2 Universal Database V8.1 Personal

Edition.

The SAMPLE database should also be created. An application called db2sampl can

be found in the DB2 home directory db2HomeDirectory/bin. Running this

application will create the database SAMPLE and populate it with base data.

Documentation

There are references to documentation in Adobe Acrobat PDF format throughout the

tutorial. You can download the documentation separately from the Personal Edition.

This documentation can also be downloaded as a series of HTML files. Check out the

Resources on page 57 section to see where the different DB2 documents can be found

in the PDF files.

About the author

Drew Bradstock works as a DB2 consultant for

IBM. His department is responsible for the

migration of applications from other RDBMSs to

DB2. He has previously worked with the DB2

Performance department and specializes in the

design and performance tuning of large-scale

benchmarks and proofs of concept. When not

getting the best out of DB2, he's training for his first

triathlon. You can reach Drew at

drewkb@ca.ibm.com.

Section 2. Capturing snapshots

What are snapshots?

DB2 snapshots are point-in-time views into what DB2 is doing and how it is performing.

They are used in the performance tuning of instances, databases, and applications

running on DB2. The information returned from a snapshot is a combination of

cumulative information and data that applies only to a single moment.

So why should I use them?

For much of DB2's behavior, snapshots are the only way to see what is happening. V8.1 includes built-in tools that track the memory usage of instances and databases, but these tools often do not give you enough detail. The third-party analysis tools that run on DB2 all rely on snapshots as well: they simply take snapshots constantly and parse the data into an easily viewed format.

What types of snapshots are there?

Each type of snapshot captures a different set of data about DB2:

• Database

• Table space

• Tables

• Buffer pools

• Locks

• Database Manager

• Applications

These are covered in detail later in this section.

Objectives for this section:

1. Understand what snapshots are

2. Learn how to take a snapshot

3. Know what types of snapshots are available

4. Understand when to take which type of snapshot

5. Practice working with snapshot information

How are snapshots taken?

A snapshot can be taken using an API call in a program, or from the command line.

API call

Snapshot information can be returned from a function call in a program. This

information can then be parsed and used by an application. The syntax for all the API

snapshot calls is beyond the scope of this tutorial. Further information on the API calls

can be found in the DB2 System Monitor Guide and Reference. Chapter 3, entitled

"Using the snapshot monitor," has a section entitled "Snapshot Monitor API request

types" that has all the information you should need.

Command line

Normally, the snapshot data is called from the command line and dumped into a text

file for future analysis. Often, people will write scripts to take snapshots on regular

intervals to observe the behavior of a database, or to assist in performance tuning.
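As a rough illustration of such a script, the Python sketch below builds a get snapshot command of the kind described in this section and writes each snapshot to its own timestamped file. It assumes the db2 command is available on the PATH of an environment set up for the DB2 command line processor; the function names, file-naming scheme, and interval are illustrative choices, not part of DB2.

```python
import subprocess
import time
from datetime import datetime


def snapshot_command(snapshot_type, database):
    """Build the argument list for 'db2 get snapshot for <type> on <database>'."""
    return ["db2", "get", "snapshot", "for", *snapshot_type.split(), "on", database]


def output_name(snapshot_type, database, taken_at):
    """Name the output file after the snapshot type, database, and time taken."""
    stamp = taken_at.strftime("%Y%m%d-%H%M%S")
    return f"{snapshot_type.replace(' ', '_')}.{database}.{stamp}.txt"


def capture(snapshot_type, database, count, interval_s):
    """Take `count` snapshots, `interval_s` seconds apart, one file per snapshot."""
    for i in range(count):
        path = output_name(snapshot_type, database, datetime.now())
        with open(path, "w") as out:
            # Assumption: a working DB2 command line environment is present.
            subprocess.run(snapshot_command(snapshot_type, database), stdout=out, check=True)
        if i < count - 1:
            time.sleep(interval_s)
```

Under those assumptions, calling capture("database", "drew_db", count=6, interval_s=600) would collect six database snapshots ten minutes apart, which can then be compared file by file.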

Here's the command syntax:

Let's look at some example snapshot commands to see how this syntax works. Here's

a command for a database snapshot:

db2 get snapshot for database on drew_db

Here's a command for a dynamic SQL snapshot, redirected to a file:

db2 get snapshot for dynamic sql on drew_db > dynSnap.drew_db

Here's a command for an application snapshot for all applications:

db2 get snapshot for all applications

Note: This snapshot will be taken on all applications currently connected to the

instance and not just those for a particular database -- even if you are currently

connected to one.

Here's a command for a buffer pool snapshot on the fourth node in a partitioned

database:

db2 get snapshot for bufferpools on drew_db at dbpartitionnum 3

Note: The dbpartitionnum field is used to specify the partition at which the

snapshot should be taken. Even if you have connected to a partition other than 0, the

snapshot will still be taken for the 0 node unless otherwise specified.

What memory is used?

The MON_HEAP_SZ parameter in the database manager configuration specifies how much memory is set aside for the snapshots and event monitors.

Turning snapshots on and off

The monitoring of DB2 is not turned on by default, but instead has to be set at the

connection or instance level. There are a series of monitor switches that determine if a

type of data element is monitored. There is also a memory heap set aside for

containing the information stored for monitoring.

Method 1: Setting monitor switches at the instance level

Setting the monitor switches at the instance level will affect all users connected to any

databases in that instance.

Here are the monitor switches:

• DFT_MON_STMT: Statement monitor (used for dynamic SQL)

• DFT_MON_TABLE: Table monitors

• DFT_MON_LOCK: Lock monitor

• DFT_MON_BUFPOOL: Buffer pool monitors

• DFT_MON_SORT: Sort monitoring

• DFT_MON_UOW: Unit of work information

• DFT_MON_TIMESTAMP: Tracks timestamp information

The values of these switches are stored in the database manager configuration

information. Here are the database manager configuration parameters:

The monitor switches are set just like any other instance configuration parameter:

db2 update dbm cfg using monitorSwitch [ON | OFF]

Here's an example:

db2 update dbm cfg using DFT_MON_SORT ON

Method 2: Setting the monitor switches at an application level

The monitor switches can also be set at an application level; setting the switches in this

way will only apply to a particular application. If you use the method listed below at a

command prompt or Command Window, then the changes will only be applicable to

that particular prompt window.

Here are the switch names:

• Bufferpool

• Lock

• Sort

• Statement

• Table

• Timestamp

• UOW

Use this command to list the switches:

db2 get monitor switches

And use this command to set the switches:

db2 update monitor switches using switchName [ON | OFF]

Here's a comparison of the scope of the changes of the switches:

Note: You may have noticed that the syntax of setting the monitor switches is very

similar to that for setting the instance and database parameters. The vast majority of

the configuration commands have identical syntax, with parameters being the only

difference.

Scope of the snapshots

It is important to understand what information is being returned to you with the

snapshots and when the monitoring begins. The switches can be set dynamically at

both instance and application levels, and both affect the monitoring of connections

differently.

If the monitor switches are turned on after an application has already connected, information is captured only for actions that occur after the switches were turned on, as the following diagram illustrates:

In this example, Application A has already connected to the database before the

monitor switches are turned on. When the first snapshot, Snap1, is taken, no

information is returned by the database, as the monitor switches have not been turned

on. When the second snapshot, Snap2, is taken, information on both Application A and

Application B is captured.

Resetting the monitor counters

The monitor counters can be reset to null or 0 for all values by executing the following command. Resetting the counters does not change the ON or OFF setting of the switches themselves.

db2 reset monitor [ALL | for database databaseName] [at dbpartitionnum partitionNum]

Where databaseName is the name of the database and partitionNum is the node

number.

Here's an example:

db2 reset monitor for database drew_db

Database snapshots

The snapshots have been broken up into separate topics but there is still a fair amount

of information that is repeated in each. The most commonly used snapshots are the

database and dynamic SQL snapshots. (For more on the latter, see Dynamic SQL

snapshots on page 14.)

The database snapshot captures summary information about the database. The

information in this snapshot contains an aggregate of almost all the data in all the other

snapshots. The snapshot's data is a summary of activity since the monitor counters were last reset.

The database snapshot is the most frequently used snapshot, and most performance

problems are obvious from the information in it. It contains information on:

• Connections

• DB2 agents

• Locks

• Sorting

• Total buffer pool activity

• SQL activity

• SQL statement volume

• Log usage

• Cache usage

• Hash joins

Here's the command for this type of snapshot:

db2 get snapshot for database on databaseName

And here's some typical output:

Connection Information

High water mark for connections = 1

Application connects = 1

Secondary connects total = 0

Applications connected currently = 1

DB2 Agents

Appls. executing in db manager currently = 0

Agents associated with applications = 1

Maximum agents associated with applications = 1

Maximum coordinating agents = 1

Locks

Locks held currently = 0

Lock waits = Not Collected

Time database waited on locks (ms) = Not Collected

Lock list memory in use (Bytes) = 792

Deadlocks detected = 0

Lock escalations = 0

Exclusive lock escalations = 0

Agents currently waiting on locks = 0

Lock Timeouts = 0

Sorting

Total Private Sort heap allocated = 0

Total Shared Sort heap allocated = 0

Shared Sort heap high water mark = 0

Total sorts = 0

Total sort time (ms) = 0

Sort overflows = 0

Active sorts = 0

Total Bufferpool Activity

Buffer pool data logical reads = 25

Buffer pool data physical reads = 8

Asynchronous pool data page reads = 0

Buffer pool data writes = 0

Asynchronous pool data page writes = 0

Buffer pool index logical reads = 43

Buffer pool index physical reads = 23

Asynchronous pool index page reads = 0

Buffer pool index writes = 0

Asynchronous pool index page writes = 0

Total buffer pool read time (ms) = 162

Total buffer pool write time (ms) = 0

SQL Activity

Commit statements attempted = 0

Rollback statements attempted = 0

Dynamic statements attempted = 0

Static statements attempted = 0

Failed statement operations = 0

Select SQL statements executed = 0

Update/Insert/Delete statements executed = 0

DDL statements executed = 0

SQL statement volume

Rows deleted = 0

Rows inserted = 0

Rows updated = 0

Rows selected = 0

Rows read = 9

Log Usage

Log space available to the database (Bytes) = 7140000

Log space used by the database (Bytes) = 0

Maximum secondary log space used (Bytes) = 0

Maximum total log space used (Bytes) = 0

Secondary logs allocated currently = 0

Log pages read = 0

Log pages written = 0

Cache Usage

Package cache lookups = 0

Package cache inserts = 0

Package cache overflows = 0

Package cache high water mark (Bytes) = 118968

Application section lookups = 0

Application section inserts = 0

Catalog cache lookups = 2

Catalog cache inserts = 2

Catalog cache overflows = 0

Catalog cache high water mark = 0

Hash Joins

Number of hash joins = 0

Number of hash loops = 0

Number of hash join overflows = 0

Number of small hash join overflows = 0
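Because every data line in this output has the form name = value, it is straightforward to turn a saved snapshot into a lookup table for scripted analysis. The Python sketch below is one minimal way to do that; the function name parse_snapshot and the choice to keep non-numeric values such as Not Collected as text are assumptions made for illustration.

```python
def parse_snapshot(text):
    """Map each 'name = value' line of snapshot output to a dictionary entry."""
    fields = {}
    for line in text.splitlines():
        name, sep, value = line.partition(" = ")
        if not sep:
            continue  # section headings and blank lines contain no ' = '
        value = value.strip()
        # Keep counters as integers; leave text such as 'Not Collected' as-is.
        fields[name.strip()] = int(value) if value.isdigit() else value
    return fields


sample = """\
Locks
Locks held currently = 0
Lock waits = Not Collected
Lock list memory in use (Bytes) = 792
Sorting
Total sorts = 0
"""
snapshot = parse_snapshot(sample)
print(snapshot["Lock list memory in use (Bytes)"])
print(snapshot["Lock waits"])
```

If the same field name appears more than once in a file, the last occurrence wins in this sketch, so snapshots that list several objects (such as table or lock snapshots) would need to be split per object first.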

Database manager snapshots

The database manager snapshot captures information specific to the instance level.

The information centers on the total amount of memory allocated to the instance and

the number of agents that are currently active on the system.

Here's the command for this type of snapshot:

db2 get snapshot for database manager

A partial list of the fields available is included in this sample output:

Agent Info

High water mark for agents registered = 6

High water mark for agents waiting for a token = 0

Agents registered = 6

Agents waiting for a token = 0

Idle agents = 5

Sort Information

Private Sort heap allocated = 0

Private Sort heap high water mark = 256

Post threshold sorts = 0

Piped sorts requested = 6

Piped sorts accepted = 6

Table snapshots

The table snapshot contains information on the usage and creation of all tables. This

information is quite useful in determining how much work is being run against a table

and how much the table data changes. This information can then be used to decide

how your data should be laid out physically.

Here's the command for this type of snapshot:

db2 get snapshot for tables on drew_db

And here's some typical table snapshot output:

Table List

Table Schema = DREWKB

Table Name = SYSI

Table Type = User

Rows Read = 3

Rows Written = 0

Overflows = 0

Page Reorgs = 0

Table space and buffer pool snapshots

The table space and buffer pool snapshots contain similar information. The table space

snapshot returns information on the layout of the table space and how much space is

being used. The buffer pool snapshot contains information on how much space is

currently allocated for the buffer pool and how much space will be allocated when the

database is next restarted. Both snapshots contain a summary of the way in which data is

accessed from the database. This access could be done from a buffer pool, direct from

tables on disk, or through a direct read or write for LOBs or LONG objects.

Here are the commands for these types of snapshots:

db2 get snapshot for tablespaces on drew_db

db2 get snapshot for bufferpools on drew_db

And here's some typical output:

Tablespace Information

Tablespace name = STORAGE1

Tablespace ID = 3

Tablespace Type = System managed space

Tablespace Content Type = Any data

Tablespace Page size (bytes) = 4096

Tablespace Extent size (pages) = 8

Tablespace Prefetch size (pages) = 8

Buffer pool ID currently in use = 1

Buffer pool ID next startup = 1

Tablespace State = 0x'00000000'

Detailed explanation:

Normal

Total number of pages = 159

Number of usable pages = 159

Number of used pages = 159

Minimum Recovery Time =

Number of quiescers = 0

Number of containers = 1

Bufferpool Information

Node number = 0

Tablespaces using bufferpool = 4

Alter bufferpool information:

Pages left to remove = 0

Current size = 250

Post-alter size = 250

Common Information

The common information given on data usage is very complex and is not required

unless advanced performance tuning is being performed.

Buffer pool data logical reads = 39

Buffer pool data physical reads = 14

Buffer pool data writes = 0

Buffer pool index logical reads = 63

Buffer pool index physical reads = 37

This information can be used to determine the buffer pool hit ratio, as illustrated in the

figure below:

The ratio indicates how often the data is found in the buffer pools, instead of having to be read from a table saved on disk.
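As a worked example, the Python sketch below applies the commonly used hit ratio formula, (1 - physical reads / logical reads) x 100, to the data and index read counters from the sample output above. The function name is illustrative, and the formula is stated here as an assumption about what the original figure showed.

```python
def buffer_pool_hit_ratio(data_logical, data_physical, index_logical, index_physical):
    """Return the percentage of page requests satisfied without a physical read."""
    logical = data_logical + index_logical
    if logical == 0:
        return None  # no page requests yet, so the ratio is undefined
    physical = data_physical + index_physical
    return (1 - physical / logical) * 100


# Sample values: 39 data and 63 index logical reads, 14 data and 37 index physical reads.
ratio = buffer_pool_hit_ratio(39, 14, 63, 37)
print(f"{ratio:.1f}%")  # 50.0%
```

With 102 logical reads and 51 physical reads, half of the page requests in the sample were satisfied from the buffer pool.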

Lock snapshot

The lock snapshot is very useful in determining what locks an application currently is

holding or what locks another application is waiting on. The snapshot lists all

applications on the system and the locks that each is holding. Each lock, and each

application, is given a unique identifier number.

Here's the command for this type of snapshot:

db2 get snapshot for locks on drew_db

And here's a sample of the lock snapshot output:

Application Information

Application handle = 26

Application ID = *LOCAL.DB2.00B807181746

Sequence number = 0006

Application name = db2bp.exe

Authorization ID = DREWKB

Application status = UOW Waiting

Status change time = 03-28-2003 00:39:57.259727

Application code page = 1252

Locks held = 2

Total wait time (ms) = 125

Lock Information

Lock Name = 0x02000200000000000000000054

Lock Attributes = 0x00000000

Release Flags = 0x00000001

Lock Count = 1

Hold Count = 0

Lock Object Name = 2

Object Type = Table

Tablespace Name = USERSPACE1

Table Schema = DREWKB

Table Name = LOCKTAB

Mode = S

Dynamic SQL snapshots

The dynamic SQL snapshot is used extensively to determine how well SQL statements

are performing. This snapshot summarizes the behavior of the different dynamic SQL

statements that are run. The snapshot does not capture static SQL statements, so

anything that was prebound will not show up in this list.

The snapshot is an aggregate of the information concerning the SQL statements. If a

SQL statement is executed 102 times, then there will be one entry with the summary of

the total behavior of the 102 executions. If even a single letter is different, there will be

more than one entry.

For example, there will be two entries in the dynamic SQL snapshot for these two

commands:

SQL 1: SELECT * FROM dynTab WHERE name = 'a'

SQL 2: SELECT * FROM dynTab WHERE name = 'A'

For these two commands, there will only be one entry in the snapshot for the

statement:

SQL 1: SELECT * FROM dynTab WHERE name = ?

SQL 2: SELECT * FROM dynTab WHERE name = ?

Even if the statement is executed with a different value of the parameter marker ?,

there will still be only one entry, because the SQL statement was identical.
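The grouping rule can be pictured with a small Python model that keys entries on the exact statement text. This is only a toy illustration of the behavior described above, not a description of how DB2 stores its entries internally.

```python
from collections import Counter

executed = [
    "SELECT * FROM dynTab WHERE name = 'a'",
    "SELECT * FROM dynTab WHERE name = 'A'",
    "SELECT * FROM dynTab WHERE name = ?",  # run with the value 'a'
    "SELECT * FROM dynTab WHERE name = ?",  # run with the value 'A'
]

# One entry per distinct statement text, with a count of executions.
entries = Counter(executed)
for text, executions in entries.items():
    print(executions, text)
```

The two literal statements produce two separate entries with one execution each, while the parameter-marker statement produces a single entry with two executions.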

Here's some sample SQL snapshot object information:

Number of executions = 3

Number of compilations = 1

Worst preparation time (ms) = 15

Best preparation time (ms) = 15

Internal rows deleted = 0

Internal rows inserted = 0

Rows read = 6

Internal rows updated = 0

Rows written = 0

Statement sorts = 0

Total execution time (sec.ms) = 0.038913

Total user cpu time (sec.ms) = 0.000000

Total system cpu time (sec.ms) = 0.000000

Statement text = select * from locktab with RR

Note: Don't forget that these values are the total of all executions of the statement.

Using the SQL snapshot functions

Previously, the output from the snapshots could either be accessed using APIs or

passed into a text file using a command line. All of the different snapshots now have

table functions which contain all their fields. The table function takes the output from

the snapshot and parses it into a table which can then be accessed using SQL. For

snapshots that do not contain multiple similar elements, such as the data from the

database snapshot, the table will only have one row. Snapshots with multiple similar

objects, such as the Dynamic SQL snapshot, will have one row for each object.

A detailed description of the snapshot table functions can be found in the DB2 PDF

document System Monitor Guide and Reference.

Here's an example:

SELECT *

FROM TABLE( SNAPSHOT_APPL( cast (NULL as VARCHAR(1)), -1))

as SNAPSHOT_APPL

Using dynamic SQL snapshots to find slow SQL

The example below illustrates a very useful technique for finding the SQL statement

that is taking the most time in the database:

SELECT stmt_text, total_exec_time, num_executions

FROM TABLE( SNAPSHOT_DYN_SQL('DREW_DB', -1)) as dynSnapTab

ORDER BY total_exec_time desc

FETCH FIRST 1 ROW ONLY

This second example finds the five SQL statements with the worst average execution

time:

SELECT stmt_text,

CASE WHEN num_executions = 0

THEN 0

ELSE (total_exec_time / num_executions)

END avgExecTime,

num_executions

FROM TABLE( SNAPSHOT_DYN_SQL('DREW_DB', -1)) as dynSnapTab

ORDER BY avgExecTime desc

FETCH FIRST 5 ROWS ONLY

Section 3. Capturing event monitor data

What are event monitors?

Snapshots are used to examine DB2 at one precise moment and aggregate

performance information. Event monitors, on the other hand, are used to monitor the

performance of DB2 over a fixed period of time. Event monitors examine transition

events in the database and consider each event as an object. This allows a very

detailed analysis of DB2's behavior.

The information that can be captured by an event monitor is similar to the snapshots.

Multiple monitors can be run at once and each monitor can also capture information on more than one type of event. This allows different administrators to monitor different activities independently of one another.

The event monitors can capture information on:

• Databases

• Tables

• Deadlocks

• Table spaces

• Buffer pools

• Connections

• Statements

• Transactions

The information from the event monitors can be written to:

• SQL tables

• Files

• Pipes

Objectives for this section:

1. Understand what event monitors are

2. Learn how to create an event monitor

3. Know what types of event monitors are available

4. Understand when to use which type of event monitor

5. Practice working with event monitor information

How are event monitors taken?

An event monitor first has to be created. Its information is then stored in the catalog

table so that it can be reused later. The syntax of the create event monitor

command is outlined below. The syntax diagrams have been taken from the DB2

documentation in the SQL Reference in the "Create Event Monitor" section of Chapter

5, "Statements" (see Resources on page 57 ).

The event monitor has a number of complex options. The key options are:

1. What will be monitored?

2. Where will the output go?

3. What conditions will be used?

Types of monitors

Each event monitor keeps track of a certain type of transition. The event types also

record their information at different times. A complete description of the event monitor

properties can be found in the DB2 document System Monitor Guide and Reference

(see Resources on page 57 ).

• Database: An event of database information is recorded when the last application

disconnects from the database.

• Tables: All active table events will be recorded when the last application disconnects

from the database. An active table is one which has been altered or created since

the database was activated. The monitor captures the number of rows read and

written to the table.

• Deadlocks: A deadlock event is recorded immediately when a deadlock occurs. This

monitor also has an additional option, with details. The option will capture

additional information, such as what SQL was being executed when the deadlock

occurred and what locks were held by the application that encountered the deadlock.

The information captured by the monitor focuses on the locks involved in the

deadlock and the applications that own them.

• Buffer pools: A buffer pool event is recorded when the last application disconnects

from the database. The information captured contains the type and volume of use of

the buffer pool, use of pre-fetchers and page cleaners, and whether or not direct I/O

was used.

• Table spaces: A table space event is recorded when the last application

disconnects from the database. This monitor captures the same information as the

buffer pool monitor, but the information is summarized at a table space level.

• Connections: A connection event is recorded whenever an application disconnects

from the database.

• Transactions: A transaction event is recorded whenever a transaction finishes. The

event will be written out whenever a commit or rollback occurs. The monitor captures

all of the individual statement data and also information about the transaction, such

as its start and stop time.

Event monitor output

The event monitor output can be directed in three different ways:

1. Files. The event monitor output can be written out to one or more files. Two parameters control the amount of space used by the monitor. Once the space limit is reached, the event monitor will automatically flush all events and stop itself. A value of NONE indicates that there is no limit. MAXFILES defaults to NONE; MAXFILESIZE can be set to NONE, but its default is a platform-dependent number of pages. The parameters are:

• MAXFILESIZE: Maximum size of the output file. This is measured in 4 KB pages.

• MAXFILES: Maximum number of files that will be written to.

2. Pipes. The output can be written to a named pipe that you create. The name of the

pipe must be given, but it does not need to exist when the event monitor is created. It

must, however, exist when the event monitor is started.

3. Tables. The output is written out to one or more tables that exist in the database.

Each monitor element in the event monitor is mapped to a table of the same name.

The individual events are then inserted as rows. A complete description of all the

tables and their columns can be found in the SQL Reference, under the "Create

Event Monitor" overview in Chapter 5, "Statements" (see Resources on page 57 ).
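For file output, the two limits multiply to give the upper bound on disk space the monitor can consume. The short Python sketch below works through that arithmetic; the values of 1000 pages and 4 files are hypothetical settings chosen for the example, not recommendations.

```python
PAGE_SIZE_BYTES = 4 * 1024  # MAXFILESIZE is measured in 4 KB pages


def max_event_output_bytes(maxfilesize_pages, maxfiles):
    """Upper bound on disk space used when both limits are set to numbers."""
    return maxfilesize_pages * PAGE_SIZE_BYTES * maxfiles


# Hypothetical settings: MAXFILESIZE 1000 and MAXFILES 4.
limit = max_event_output_bytes(1000, 4)
print(limit)  # 16384000 bytes, a little under 16 MB
```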

Event conditions

The event monitors can be set to record an event only when certain conditions are met. This is useful if you want to record information selectively. The WHERE clause can be used to check the values of the application ID (APPL_ID), authorization ID (AUTH_ID), and application name (APPL_NAME). Comparison operators such as >, <, and = can be used in addition to LIKE and NOT LIKE. Multiple conditions can also be combined with the AND and OR operators.

Here's an example:

WHERE APPL_NAME = 'javaw.exe'

AND AUTH_ID LIKE '12%'

OR APPL_NAME NOT LIKE 'db2bp%'
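When AND and OR are mixed without parentheses, it is worth checking which events the condition actually selects. The Python sketch below models the example condition, assuming the usual SQL rule that AND is evaluated before OR; the like helper is a simplified stand-in that handles only a trailing % wildcard, and both function names are illustrative.

```python
def like(value, pattern):
    """Tiny stand-in for SQL LIKE that handles only a trailing % wildcard."""
    if pattern.endswith("%"):
        return value.startswith(pattern[:-1])
    return value == pattern


def matches(appl_name, auth_id):
    # AND binds more tightly than OR, so the condition reads as (A AND B) OR C.
    return (appl_name == "javaw.exe" and like(auth_id, "12%")) or not like(appl_name, "db2bp%")


print(matches("javaw.exe", "DREWKB"))  # True: the name is not like 'db2bp%'
print(matches("db2bp.exe", "12345"))   # False: neither branch is satisfied
```

Under that assumption, the example condition records events from every application whose name does not start with db2bp, regardless of authorization ID, so parentheses would be needed to express a narrower filter.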

Turning monitors on and off

Event monitors can be turned on and off at any time. The event monitor state value

represents whether or not the event monitor is running.

Here's the command to turn a monitor on or off:

db2 set event monitor eventMonitorName state [0|1]

The eventMonitorName is the name of the created event monitor. 0 will turn the

monitor off and 1 will turn it on.

The current state of the monitor can be determined by using an SQL command. The

DB2 catalog tables also contain information about the created event monitors.

SQL command

The SQL function EVENT_MON_STATE returns the state of an event monitor based on the specified name. Here's an example of this SQL command (the table used is a one-row table commonly used as a placeholder):

SELECT EVENT_MON_STATE('drewTest') FROM SYSIBM.SYSDUMMY1

Catalog tables

There is a table, SYSIBM.SYSEVENTMONITORS, and a view on that table,

SYSCAT.EVENTMONITORS, that contain information on the event monitors that have

been created. SQL queries can be run against these tables to determine the state of

the monitors:

SELECT evmonname, target_type, target
FROM syscat.eventmonitors
WHERE evmonname LIKE 'drew%'

The autostart option

The autostart option in the command to create an event monitor indicates that the event monitor is started automatically whenever the database is started.

Flushing the event monitors

Event monitor data can also be flushed to disk. This is useful if you would like to record

information such as database events that are only normally written when all

connections have been terminated. Here's an example command:

db2 flush event monitor eventMonitorName

Using event monitors

The event monitors should be used for the monitoring of very specific events or short

workloads. They are designed to give you very specific information to allow you to

diagnose a problem or behavior of your database or application. Unlike snapshots,

event monitors have an extremely heavy impact on performance of DB2. This is due to

the amount of information that is written out for every single event object. The

statement monitor has a heavy performance impact due to all the extra work the

database engine has to perform for each query: Instead of being able to just execute a

query, the DB2 engine also has to write out all the characteristics and runtime

information for the query. This information has to be written to a file, which slows

things down further.

Use the file size limits to control the amount of disk space that the event monitor output

will take up. If you are monitoring a high-volume OLTP system, the output can quickly

grow to hundreds of megabytes. Normally the event monitors are started and stopped

using scripts so that you can compare the results based on the time they ran and not

on the amount of time before the files filled up with event data. This allows you to

compare apples to apples to see if any changes you made fixed your problem.
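
A timed test run along these lines could be scripted as follows. This is a sketch only: the monitor name STMT_TEST, the output directory /tmp/stmt_test (which must already exist), and the size limits are hypothetical, and the script assumes it is run from the CLP with a semicolon as the statement terminator (for example, with db2 -tvf).

```sql
-- Hypothetical statement monitor with a bounded amount of output:
-- at most 10 files of 1000 4 KB pages each (about 40 MB in total).
CREATE EVENT MONITOR stmt_test
  FOR STATEMENTS
  WRITE TO FILE '/tmp/stmt_test'
  MAXFILES 10
  MAXFILESIZE 1000
  MANUALSTART;

-- Start the monitor just before the workload under test begins.
SET EVENT MONITOR stmt_test STATE 1;

-- ... run the workload for a fixed period of time ...

-- Write any buffered records to disk, then stop the monitor.
FLUSH EVENT MONITOR stmt_test;
SET EVENT MONITOR stmt_test STATE 0;
```

Running the same script before and after a change keeps the monitored interval constant, so the two sets of output cover comparable amounts of work.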

Deadlock monitoring

One of the most common uses for the event monitors is to capture deadlock

information. If a monitor is not used, it is almost impossible to determine exactly what

locks and applications were involved in a deadlock, because when a deadlock occurs,

one of the applications involved is rolled back and all its locks are released.

The deadlock monitor traps information on all the applications and their locks. The

precise SQL statement that caused the deadlock can now also be captured. Don't

forget that the application that DB2 labels as the cause of the deadlock is the last

application involved. The real cause of the deadlock may actually be a transaction that

was started much earlier by another application. Make sure you examine all the locks

and applications involved to determine what the problem is.

Here's an example deadlock monitor:

CREATE EVENT MONITOR DEADLOCK_DB

FOR DEADLOCKS

WRITE TO FILE 'deadlock_db'

MAXFILES 1

MAXFILESIZE NONE

AUTOSTART

The deadlock event monitor does not write out a lot of data and occurs sporadically, so

it is acceptable to not have a file size limit. The command above will create an event

monitor, DEADLOCK_DB, that will start whenever the database is started and write its

information out to a file.
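
To capture the statements involved as well, the FOR DEADLOCKS WITH DETAILS event type can be used in place of FOR DEADLOCKS. The variant below is a sketch; the monitor name and target directory are hypothetical.

```sql
-- Variant of the monitor above that also records the statement each
-- application was running, and the locks it held, when the deadlock occurred.
CREATE EVENT MONITOR deadlock_detail
  FOR DEADLOCKS WITH DETAILS
  WRITE TO FILE 'deadlock_detail'
  MAXFILES 1
  MAXFILESIZE NONE
  AUTOSTART;
```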

SQL monitoring

Another common purpose for an event monitor is to keep track of SQL statements. The

SQL monitor is quite useful because it traps both dynamic and static SQL statements.

This is essential if an application makes use of precompiled SQL statements that would

not be captured using a SQL snapshot.

An event is recorded for every SQL statement that is executed. The properties of each

statement, such as the number of rows read, selected, and deleted, are recorded

individually rather than as an aggregate total as in a snapshot. The execution timeframe and

start and stop times are recorded as well. This allows detailed analysis of transactions

and of how the execution of SQL by one application affects the execution of SQL by

others.

The SQL monitor can also be used to find poorly executing code; you can do so by

searching on individual execution times if the output is put into a table. Due to the

volume of information and performance overhead of running a SQL monitor, this

technique should only be used for short tests or problem determination, and not in a

production environment.

Here's an example:

CREATE EVENT MONITOR BAR

FOR STATEMENTS

WRITE TO TABLE

STMT(TABLE drewkb.stmtTab

INCLUDES(rows_read, rows_written, stmt_text))

This command captures statement information and outputs it into a table called

drewkb.stmtTab. This table will be created dynamically by DB2. The table could then

have SQL run against it to determine which SQL read the most rows if, for instance,

you were trying to find SQL that may not be using an index.
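
A query of that kind might look like the sketch below. It assumes the monitor has been run and that drewkb.stmtTab contains the ROWS_READ, ROWS_WRITTEN, and STMT_TEXT columns; the cutoff of ten rows and the 100-character truncation are arbitrary choices.

```sql
-- Ten statement events that read the most rows while the monitor was active.
-- SUBSTR keeps the statement text readable in CLP output.
SELECT rows_read,
       rows_written,
       SUBSTR(stmt_text, 1, 100) AS stmt
FROM drewkb.stmtTab
ORDER BY rows_read DESC
FETCH FIRST 10 ROWS ONLY;
```

A statement that reads far more rows than it returns or changes is a good candidate for a closer look with the Explain tools.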

db2eva tool

There is a graphical utility, db2eva, that will parse the output of the event monitors. This

is essential if you are working with statement or transaction data, since you may have

thousands of events to deal with. The tool only works with event monitors that have

had their output directed to tables. The previous release of the tool, for DB2 V7.2, worked

directly against the event monitor output files.

Here's the command to start this tool:

db2eva [db databaseName] [evm eventMonitorName]

And here is db2eva's initial selection screen:

You may receive an error when you first open this tool if you have not already captured

connection monitor data. The error in the following figure will be shown if you try to

select any of the monitor types for which you have not captured information. The

message indicates that the tool cannot find the table with the information stored in it for

the monitor.

Each screen differs based on the type of monitor data captured. All of the rows can be

drilled down through for more detailed information. Subsections of the SQL can even

be retrieved and examined if the option was specified with the statement monitor.

Here's a sample statement screen:

Here are some sample statement data elements:

Complete documentation can be found in the DB2 documentation in Command

Reference, in Chapter 1, "System Commands" (see Resources on page 57 ).

Section 4. Analyzing SQL with Explains

Overview

So, you've figured out that one of your SQL statements is running horribly. What do

you do about it? DB2 offers you a number of different tools to find out exactly what is

hurting your performance. Each of the tools is slightly different, but they all look at an

Explain of what the SQL is doing.

The Explain of the SQL is a graphical or text-based breakdown of how and when every

component of your SQL statement is being performed. This includes such basic

information as:

• Tables being accessed

• Indexes being used

• When data is joined

• When data is sorted

The Explain also captures much more complex information, which is quite useful in

breaking down exactly what is happening with the SQL:

• Table and index cardinality

• Order of tables and indexes being joined

• Cardinality of the result sets

• What fields are being selected in each section of the query

• Sort methods

• Timeframe of each section of the SQL

Objectives for this section:

1. Be able to identify how to analyze SQL in DB2

2. Be able to use each tool to analyze your SQL

3. Understand what tools are available and when to use each one

Understanding Explain information

The most important concept you need to master to understand Explain information is

the timeron. A timeron is the unit of measurement used by the DB2 optimizer for the

amount of time and resources that a query will take to complete. The timeron is a

combination of time, CPU utilization, I/O, and other factors. Due to the changing values

of these parameters, the number of timerons needed to execute a query is dynamic

and can change from execution to execution. The timeron is also an invented unit of

measurement, and there is not a formula that can be used to translate the number of

timerons to the time in seconds that a query will take to execute. The timeron will be

the unit you use to determine if one query execution path is faster than another. Don't

worry if the number of timerons of a query varies by 10 or 20 between compilations, as

this could easily be due to changes in CPU activity, disk activity, or database usage.

SQL translation

All SQL that is executed by the database first has to be compiled. This is done by

reducing the query down to an algebraic statement that the DB2 optimizer can then

analyze. This algebraic statement is referred to as the query graph model, and is

worked with throughout the optimization process. The figure below lists the stages of

optimization and parsing each query passes through before it is executed.

The final output of the optimization process is an access plan. The access plan is the

path and steps that DB2 will take to execute a query. This information is what is

displayed by all the Explain tools. Access plans appear at first to be quite complicated,

but with some practice you will find that they are very easy to understand and analyze.

Plans can be examined in one of three forms:

• Visual tree model: The plan is shown in a GUI that allows you to point and click on

different components of the tree to drill down into more detailed information. The tree

is a series of different colored symbols, each with a specific meaning, that are

displayed as a tree graph. The visual tree model can be examined using the DB2

Visual Explain tool. It is the easiest tool to work with for beginners, since it displays

less information at once. Also, the GUI is easier to use to find information about a

specific section.

• Text tree model: The plan is shown as an ASCII text-based tree graph model. All of

the different sections of the SQL query are drawn as different text shapes and then

connected using text lines. The shape of the tree is identical to the one shown in the

visual tree model. The text model can be difficult to read if the SQL is very complex,

since the text lines will wrap and distort the model. This often occurs if you are

viewing the text output using simple text editors such as vi for UNIX. The diagram

will be easy to view if you import the file into a text editor with better functionality.

The text tree model is displayed using db2exfmt, dynexpln, and db2expln,

which we'll discuss in subsequent panels.

• Text information: The text information includes all of the specific details about how

the query is executed and about the statistics and field selection that the optimizer

used to determine the access plan. The information can be quite detailed, depending

on the Explain tool used. A summary of the table statistics is included at the end of

the output to allow you to understand the optimizer's assumptions and optimization

data. All of the information in the text section of the Explain output can be found in

the Visual Explain tool by drilling down through the components of the query.

Visual Explain

The Visual Explain tool is called from the DB2 Control Center. To access it, open the

DB2 Control Center and find the database that you want to run the SQL against.

Right-click on the database name and select Explain SQL, as illustrated in the figure

below.

The new menu asks for the SQL that you would like to Explain. There are a number of

other options available:

• Get: Allows you to open text files where you have stored SQL.

• Save: Saves the SQL statement you are working with to a text file.

• Query number: Allows you to assign a specific labeled number to the query.

• Query tag: An alphanumeric label for the query.

• Optimization level: Sets the optimization level of the query (see Optimization level

on page 38 ).

• Populate columns: This option stores the access plan information in a series of

tables in your database. This allows you to view the access plan and explain

information later without having to recompile the SQL statement.

Once the SQL has been executed successfully, a new menu opens that shows you the

access plan for the query. A smaller window, labeled Overview, is also created. This

window highlights with a blue rectangle the part of the access plan that you are

viewing. This is handy when you are working with very large and complex queries and

it is difficult to grasp which section you are currently looking at. There is a slide bar on

the left side of the main window that allows you to control the zoom. This only affects

the view and does not give you any additional information.

Every component of the access plan can be clicked to reveal more detailed information

on the component. It is a good idea to try clicking through all the different object types

to get comfortable with the query information that you have available.

Visual Explain components

There are a few Visual Explain components that you need to understand to work with

the tool. All of the component explanations on this panel are based on the example

below:

Table object node

The table object indicates the table with which the query section is working. The object

can be drilled down on to view the statistics of the table. This information can then be

further drilled down for index, clustering, and referencing column data. In this example,

there are two table objects in the picture: TBC.SALESFACT and TBC.PRODUCT.

Index object node

The index object indicates the name of the index. The index object is always attached

to the table that it is scanning. In this example, the index name is MAR1 and it is on the

table TBC.MARKET.

Operator node

The operator node object indicates that an action is being performed on the object(s)

that point to it. These actions include sorting and retrieving of data, joining tables or

result sets, and scanning data. Each operation is clearly labeled. In this example there

are three different actions being performed. Two of the tables are having table scans

performed, one index is being scanned, and two data sets are being joined using the

hashjoin algorithm.

Return node

The final object is the result set object. This object contains summary information about

the query and what is being returned from the completed SQL. The timeron value of

the object is the total cost, in timerons, estimated for completing the query.

Query information

There are a number of key parameters that greatly affect how a query will execute and

how DB2 will compile it.

Parameter values

The Visual Explain tool has all of the parameters that affect the query compilation

summarized in one menu. The text-based tools have the list of parameter values

summarized at the beginning of the output file. A full explanation of each parameter

can be found in the DB2 documentation Administration Guide: Performance under the

Database Manager and database configuration sections (see Resources on page 57 ).

Here is the optimization parameter menu option:

Here is the optimization parameter list:

Let's look at these parameters in some detail.

• AVG_APPLS (average applications): This parameter indicates the average number

of applications that will be running concurrently against the database. DB2 uses this

information to determine how heavily the sort space and buffer pools will be used

and how much space the query will likely be able to use.

• SORTHEAP (sort heap): The sort heap is the amount of space available in memory

to perform a sort. If the sort requires more memory than is available for a sort heap,

then part of the sort data will have to be paged to disk. This can have a negative

impact on performance, so sorts should be kept in memory as much as possible.

• LOCKLIST (lock list): This indicates the amount of memory available for DB2 to

store locking information for each application. If the lock list space is quite small,

then DB2 may have to escalate some locks to allow room for all the locks being held

by the applications.

• MAXLOCKS (maximum lock list percentage): This parameter controls what

percentage of the total lock list space one application can have. If an application tries

to use up too much memory by having too many open locks, DB2 will escalate some

of the locks to free up space in the lock list.

• NUM_FREQVALUES (number of frequency values): The number of frequency

values is used by the DB2 Runstats utility to control how many of the most frequent

values DB2 will keep in memory. This information is used by the optimizer to

determine what percentage of the total result set a predicate in a WHERE clause will

eliminate.

• NUM_QUANTILES (number of data quantiles): The number of quantiles is used by

the DB2 Runstats utility to control how many quantiles are captured for column data.

Increasing the number of quantiles will give DB2 more information on the distribution

of data in the database.

• DBHEAP (database heap): The database heap controls the amount of memory

available for database object information. The objects include indexes, tables, buffer

pools, and table spaces. Event monitor and log buffer information is also stored

here.

• CPUSPEED (CPU speed): This parameter is the speed of the computer. If the value

is set to -1, then a CPU speed measurement program is used by DB2 to determine

the proper setting.

• BUFFPAGE and buffer pool size: The optimizer uses the size of the available

buffer pools in its optimization data. Increasing or decreasing the buffer pool size can

have a significant impact on the access plan.

Optimization level

The most important parameter that affects the access plan is the optimization level.

This field tells the optimizer how much effort and what techniques it should use to

determine the access plan. A higher level will cause the optimizer to use more complex

algorithms and algebraic analysis -- and much more time -- to generate the final plan.

Each optimization class uses a different subset of all the rules and statistics. A very

detailed description of these levels can be found in the DB2 documentation SQL

Reference in Chapter 5, "Statements," in the section "Set Current Query Optimization"

(see Resources on page 57 ). A short summary of each optimization level is included

below.

• 0 -- Use a minimal amount of optimization

• 1 -- Use a degree of optimization roughly comparable to DB2/6000 Version 1, plus

some additional low-cost features not found in Version 1

• 2 -- Use features of opt class 5, but with a simplified join algorithm

• 3 -- Perform a moderate amount of optimization; similar to the query optimization

characteristics of DB2 for MVS/ESA

• 5 -- Use a significant amount of optimization; with Heuristic Rules (default)

• 7 -- Use a significant amount of optimization; without Heuristic Rules

• 9 -- Use all available optimization techniques

A general rule of thumb is to use the following optimization levels for the different query

workloads:

• Very simple OLTP: 0 or 1. For queries that require very little optimization and rely

heavily on primary key index searches or very simple joins.

• OLTP: 1. For simple queries that involve a small number of tables and joins involving

indexes on the tables.

• Mixed OLTP and reporting: 5. For a workload that involves complex OLTP or

reports involving many complex joins on multiple tables.

• Very complex data mining or decision support: 9. Queries that require significant

analysis of data statistics and can run for a long time (over a minute) should use the

highest optimization level. The optimization will take much longer but the

improvements that can be found in the access plan normally outweigh the extra

compilation time.

Explain table format command

The db2exfmt tool processes information that has been inserted into the Explain

tables. These tables are used by DB2 to record all the information on the different

components of the SQL and the optimization information. When you used the Visual

Explain tool, the tables were created automatically for you. If you use the text tools first,

you will have to run a script to create the tables yourself. Execute the command listed

below in the misc subdirectory of the home directory of the DB2 instance to which you

are attached. All of the tables and indexes will be created for you.

Here's the Explain table error message:

And here's the appropriate command:

db2 connect to databaseName

db2 -tvf EXPLAIN.DDL

The db2exfmt tool is different in that it works with information that has already been

captured in the Explain tables and presents it in an easy-to-read format. Once the

tables have been created, the next step is to populate them.

Capturing Explain information

The easiest method to capture Explain information is to store your SQL in a text file

and then run it from the DB2 Command Line Processor (CLP) in Windows or from the

command line in UNIX or Linux. DB2 has a variable, CURRENT EXPLAIN MODE, that

determines if Explain information is captured. This variable can be set to have DB2

populate the Explain tables whenever a query is executed. Here's the appropriate

command to do so:

db2 SET CURRENT EXPLAIN MODE [no | yes | explain]

And here are the potential values for CURRENT EXPLAIN MODE:

• NO: This is the default; the query is executed normally

• YES: The query is executed normally and the explain information is captured as well

• EXPLAIN: The query is not executed but the explain information is populated into

the tables

Here's an example:
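
The session below is a minimal sketch rather than the tutorial's original listing. It assumes that the Explain tables already exist under the current user's schema and that the statements are run from a CLP script with a semicolon terminator (for example, with db2 -tvf); the query being explained is only illustrative.

```sql
-- Capture the access plan without running the query.
SET CURRENT EXPLAIN MODE EXPLAIN;

-- Compiled and explained only; no rows are returned.
SELECT * FROM syscat.tables;

-- Return to normal execution.
SET CURRENT EXPLAIN MODE NO;

-- Estimated cost, in timerons, of the most recently explained statements.
-- EXPLAIN_LEVEL 'P' selects the plan-selection rows.
SELECT explain_time,
       total_cost,
       SUBSTR(statement_text, 1, 80) AS stmt
FROM explain_statement
WHERE explain_level = 'P'
ORDER BY explain_time DESC
FETCH FIRST 5 ROWS ONLY;
```

Because the mode is set to EXPLAIN, the first SELECT returns a warning instead of rows; only the query against EXPLAIN_STATEMENT produces output.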

Using the tool

db2exfmt has a large number of options that allow you to pick the Explain statements

that you would like to work with. You can also control what information is shown. The

easiest method is to use all of the defaults, which will then cause the tool to return

information on the most recently explained statement:

Here's sample query summary output:

And here's sample query tree information:

Using the db2expln and dynexpln tools

The db2expln tool can be called from the command line to obtain the access plan for

the query. However, this tool does not return optimizer information. The dynexpln tool

is useful for explaining dynamic queries that do not use parameter markers. Information

on both tools can be found in the DB2 document Administration Guide: Performance in

Chapter 7, "SQL Explain Facility" (see Resources on page 57 ).

db2expln

This utility is normally used to analyze SQL statements that are stored in static SQL

packages. However, it can also be used to explain dynamic SQL using the

following parameters:

• -database -- Name of the database to connect to

• -statement -- Enclose the statement in quotes to explain a dynamic SQL

statement

• -stmtfile fileName -- A file containing a list of SQL statements to be explained

• -terminal -- Directs the output to the screen

• -output fileName -- Directs the output to the specified filename

Here's an example command for this tool:

db2expln -database drew_db -statement "select * from syscat.tables" -terminal

And here's the sample output:

dynexpln

The dynexpln tool has been kept for backward compatibility with earlier versions of

DB2. You can use the dynamic options of db2expln to perform all of the functions of

dynexpln. When you use db2expln, the statement is prepared as a real dynamic

SQL statement and the access plan is generated from the SQL cache. The dynexpln

tool prepares the statement as static SQL, which does not give as accurate an access

plan and does not allow parameter markers.

Troubleshooting SQL

Entire books have been written on how to improve SQL, but here are a few key points

that you should keep in mind when you begin working with the Explain tools.

Use of indexes. Is the query using the indexes you expect? Make sure that table

scans are not occurring on tables you thought had indexes on them. This information

can easily be found by looking at the access plan diagram. If the indexes do exist, then

check the cardinality or order of the index keys. It is often not what you expected.
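
The catalog can be used to check this. The query below is a sketch that assumes the TBC.SALESFACT table from the earlier Visual Explain example; substitute your own schema and table names.

```sql
-- Indexes defined on one table, with their key columns and cardinalities.
-- The statistics columns show -1 until Runstats has been run.
SELECT indname,
       colnames,
       uniquerule,
       firstkeycard,
       fullkeycard
FROM syscat.indexes
WHERE tabschema = 'TBC'
  AND tabname = 'SALESFACT';
```

COLNAMES lists the key columns in order, each prefixed with + or - for ascending or descending, which makes it easy to see whether the leading column matches the predicates in the query.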

Table cardinality and use of 'SELECT *'. Sometimes the DB2 optimizer will decide

that it is faster to scan an entire table due to the number of columns that you are

bringing back. Perhaps the table is quite small, or perhaps it's just not efficient to scan

an index and then return a large number of rows that return all the columns of the table.

Try to return only the columns that you actually need. Take a look at what columns are

being returned in each section of the query to see if you really need them and to see if

that is why a table scan is occurring.
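
To see the table sizes the optimizer is working from, the table statistics can be read from the catalog. This sketch again assumes the TBC tables from the Visual Explain example.

```sql
-- Row count, page count, and the time statistics were last collected.
-- CARD is -1 if statistics have never been gathered for the table.
SELECT tabname,
       card,
       npages,
       stats_time
FROM syscat.tables
WHERE tabschema = 'TBC'
  AND tabname IN ('SALESFACT', 'PRODUCT');
```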

Optimization level is too low. Many DBAs lower the optimization level to 1 to reduce

the amount of time required for query preparation. Sometimes, raising the optimization

level to 5 will allow the optimizer to find a better access plan without you having to

create a new index to improve performance. This value can easily be adjusted in the

Visual Explain tool when you explain a query. It can also be changed at the command

line using the following command:

db2 set current query optimization [0|1|2|3|5|7|9]
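
One way to judge the effect of the optimization level is to explain the same statement at two levels and compare the resulting plans. The script below is a sketch under the same assumptions as before: the Explain tables exist, the statements run in a single CLP session with a semicolon terminator, and the query is only a placeholder for your own.

```sql
-- Explain the same query at two optimization levels without running it.
SET CURRENT EXPLAIN MODE EXPLAIN;

SET CURRENT QUERY OPTIMIZATION 1;
SELECT * FROM syscat.tables;

SET CURRENT QUERY OPTIMIZATION 5;
SELECT * FROM syscat.tables;

SET CURRENT EXPLAIN MODE NO;

-- Confirm the level now in effect for this connection.
VALUES CURRENT QUERY OPTIMIZATION;
```

The two captured plans can then be formatted with db2exfmt, or opened in Visual Explain, and their timeron totals compared.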

Section 5. Using the Health Center and Memory

Visualizer

Overview

The Memory Visualizer and the Health Center are two new tools that are included with

DB2 V8.1. The Memory Visualizer gives you the ability to easily monitor and record the

memory usage of an instance, its databases, and the applications that are connected

to them. The Health Center allows you to monitor the health of the databases in the

background without having to constantly check on them.

Objectives for this section:

1. Be able to run the Memory Visualizer and Health Center tools

2. Understand the different options and screens in each

3. Understand why and how the tools should be used

Using the Memory Visualizer

DB2 V8.1 has a new tool included with the Control Center that gives you the ability to

observe how memory is actually being allocated in real time. In previous releases of

DB2, it was difficult to figure out how effectively the memory being allocated to the

heaps was being used. The new Memory Visualizer allows you to observe all of the

memory allocated to the instance and its databases in a graphical format.

This tool is called at an instance level. In the Control Center, find and select the

instance and right-click it. Select View memory usage.

The tool will track the current memory allocation for almost all the memory heaps. The

top half of the tool is used to display the exact values of the parameters. You can also

select the parameters that will be graphed in the memory utilization graph. The refresh

intervals allow you to control how often the tool samples the database for information

about the memory heaps. If you are looking at a system where exact information is

needed about a certain workload or behavior, then the refresh age could be set to a

very small value, such as five seconds. If you are just using this tool to monitor

behavior of the database in a production environment, then a longer refresh age of five

or ten minutes may be fine.

The output from the tool can also be saved to a file. You can open this file later and

view the exact parameter value in addition to the plotted graphs of the data. All of these

files are given the extension of mdf. Initially the plot will be empty when you open a

saved file. If you select a parameter, then the graphical data you captured on it will

appear.

Why should I use Memory Visualizer?

Memory Visualizer gives you access to much more information than before. Certain

parameters in DB2 were often tuned by trial and error, and it was difficult to know how

much space was really being used. This led to memory being wasted due to

over-allocation.

In addition to providing memory visualization, the tool also allows you to set alarms on

the values of the parameters. The output of the alarms is written to a file called

threshold, which is in the same directory as db2diag.log. This directory is in the

instance home directory. The location varies depending on what you selected when

you created the instance.

DB2 Health Center

The Health Center is another new tool added to DB2 V8.1. The DB2 Health Center is a

convenient tool to monitor the health and performance of multiple databases from one

panel. The tool can be used to determine if:

• There are sufficient resources, such as free memory, table space containers, or

logging storage, to accomplish tasks.

• Resources are used efficiently.

• Tasks complete within acceptable periods of time or without significant degradation

in performance.

• Resources or database objects are not left indefinitely in unusable states.

The warnings given by the Health Center can then be combined with the information

from the other DB2 Center tools to help to identify and correct any problems.

Opening the Health Center

The Health Center can be opened using its own icon in the program menu or from

within one of the other GUI Center tools.

To open it from the Control Center, click Tools => Health Center.

To open it from the program menu:

Using the Health Center

The Health Center has an easy-to-use interface that displays only the selected level of

severity for the database problems. In the upper left corner of the tool there are four

icons that are used to select the severity level.

The four options are:

1. Shows instances and databases in an alarm state only.

2. Shows instances and databases in either an alarm or warning state.

3. Shows instances and databases in any of the following states: alarm, warning, and

attention.

4. Shows instances and databases in any of the following states: alarm, warning,

attention, and normal. You can also view databases and instances that cannot be

monitored.

Activating the Health Monitor

The Health Monitor has to be started before monitoring can occur. This is done by

right-clicking on the instance and selecting Start Health Monitor.

Adding alerts

The Health Center has predefined default values determining the parameters and

levels for which an alarm or warning message will be given. The alerts are defined by

right-clicking the desired database or instance. Select Configure=>Database Health

Indicator Settings to open the parameter alert menu. The warning and alarm levels

can be set in this menu.

A parameter has to be enabled for the Health Center to return messages about its

warnings and alarms. Clicking Enable will open a new menu with options on what to do

if the event occurs.

Alert options

The alert options allow you to run a specific DB2 task or to execute a script that you

have created on the system. There are a number of settings that you can control, such

as the name of the script, the ID under which it should run, and whether the script is a

DB2 command script or an OS script.

The task option works in conjunction with the task scheduler tool. To use this option,

you have to install the Database Tools Catalog at installation time. The task scheduler

can be used to set up DB2 tasks such as Runstats, Reorg, or a database backup.

Multiple tasks and scripts can be executed for any one alarm or warning. This gives

you more flexibility in how you handle certain situations.

Section 6. Leveraging the DB2 Governor and the Query Patroller

Overview

There are two main tools produced by IBM for DB2 that allow you to monitor and

control the execution of SQL against your databases. The DB2 Governor is used to

control what priority users and applications have for executing their SQL. The DB2

Query Patroller provides query and resource management for decision support

systems. The tool accepts, analyzes, prioritizes, and can schedule all the queries that

pass through your system.

Objectives for this section:

1. Understand what query monitoring tools are available

2. Understand when to use the DB2 Governor and the Query Patroller

What is the DB2 Governor?

The DB2 Governor is used to monitor a user's activity and, if required, take appropriate

actions. It collects statistics on a regular basis defined by a configuration file. The

Governor then evaluates the statistics against a set of rules that are also defined in the

configuration file. Based on these rules, the Governor may change the application's

priority, or force the application off the database.
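
The collect-then-evaluate cycle described above can be pictured with a small sketch. This is only an illustration of the idea, not the Governor's actual implementation. The function name, the dictionary layout, and the choice to treat a limit as reached when the statistic is greater than or equal to it are all assumptions.

```python
def evaluate(stats, limits, action):
    """Return the action if any collected statistic reaches its limit.

    stats  -- statistics collected for one application, e.g. {"cpu": 12}
    limits -- limits taken from a rule, e.g. {"cpu": 10}
    action -- what the rule asks for, e.g. "force"
    All three shapes are hypothetical, chosen for this sketch.
    """
    for name, limit in limits.items():
        if stats.get(name, 0) >= limit:
            return action
    return None


if __name__ == "__main__":
    # The application has used 12 seconds of CPU against a limit of 10.
    print(evaluate({"cpu": 12, "rowssel": 50}, {"cpu": 10}, "force"))
```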

When is it used?

The Governor is used in large production environments where many queries are

running against large databases. These queries can take a very long time if they are

being used for data mining or decision support systems (DSS). The Governor allows

you to assign a higher priority to queries that need to be finished soon or to lower the

priority of queries taking up too many resources. A separate instance of the Governor

is run against each instance that you are monitoring.

Documentation

A full description of the DB2 Governor can be found in the DB2 documentation in

Administration Guide: Performance in Chapter 9, "Using the Governor" (see Resources

on page 57).

Using the Governor

The Governor is controlled by a configuration file, where the rules of the system are

stored. These rules are used to determine if the priority of the application running the

SQL needs to be changed. If a rule is activated, then the Governor performs the

specified action, such as reducing the priority of the application or forcing it off the

database.

Starting the Governor

A daemon must be started for each database that you would like monitored. The

daemon can be started from the command line using the following command:

db2gov start databaseName configFile logFile

Stop the daemon with the following command:

db2gov stop databaseName

Here's an example of these commands in action:

db2gov start drew_db govrules.cfg gov.log

db2gov stop drew_db
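
If the daemon is started and stopped from a script, the two command forms above can be assembled as argument lists. The helper names below are hypothetical. The sketch only builds the command lines and does not run db2gov, so it makes no assumption about what the utility prints.

```python
def governor_start_command(database, config_file, log_file):
    """Argument list for: db2gov start databaseName configFile logFile"""
    return ["db2gov", "start", database, config_file, log_file]


def governor_stop_command(database):
    """Argument list for: db2gov stop databaseName"""
    return ["db2gov", "stop", database]


if __name__ == "__main__":
    print(" ".join(governor_start_command("drew_db", "govrules.cfg", "gov.log")))
    print(" ".join(governor_stop_command("drew_db")))
```

On a machine where DB2 is installed, either list could be passed to Python's subprocess.run to execute the command.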

Configuration file

The configuration file is used to store all the rules that the Governor daemon will use to

control the applications. Here are some of the file's syntax requirements:

• Comments are enclosed in braces ({ }).

• Most entries can be specified in uppercase, lowercase, or mixed-case characters.

The exception is the application name, specified as an argument to the applname

rule, which is case sensitive.

• Each rule ends with a semicolon (;).
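
To make the comment and semicolon conventions concrete, here is a minimal sketch that removes brace comments and splits the remaining text into rules. It is not the Governor's own parser. The function name is hypothetical, and the sketch assumes that no semicolon or brace appears inside a quoted description.

```python
import re


def split_rules(text):
    """Strip { ... } comments, then split the text on semicolons."""
    without_comments = re.sub(r"\{[^}]*\}", " ", text)
    rules = []
    for chunk in without_comments.split(";"):
        rule = " ".join(chunk.split())  # collapse line breaks and extra spaces
        if rule:
            rules.append(rule)
    return rules


if __name__ == "__main__":
    sample = "{ wake-up settings } interval 30; dbname drew_db; account 45;"
    for rule in split_rules(sample):
        print(rule)
```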

DB2 Governor rules

Required rules

You must specify a rule about when the daemon should wake up to monitor the

database. This rule includes information on which database to monitor, how often CPU

usage is recorded (not available with Windows), and the sleep interval between monitoring.

A sample rule:

interval 30; dbname drew_db; account 45;

This rule activates the daemon every 30 seconds. If an interval value is not given, the

default value of 120 seconds is used. The database in the example is the drew_db

database, and CPU usage accounting records will be written every 45 minutes (the

account value is given in minutes, not seconds).

The clauses for the rules must be entered in a specific order as detailed below:

• desc (optional): A comment about the rule, enclosed in quotation marks.

• time (optional): The time during the day when the rule is evaluated.

• authid (optional): One or more authorization IDs under which the application

executes statements.

• applname (optional): The name of the executable or object file that connects to the

database. This name is case sensitive.

• setlimit: The limits that the Governor checks. These can be one of several,

including CPU time, number of rows returned, idle time, and so on.

• action (optional): The action to take if a limit is reached. If no action is specified,

the Governor reduces the priority of agents working for the application by 10 when a

limit is reached. Actions against the application can include reducing its agent

priority, forcing it to disconnect from the database, or setting scheduling options for

its operations.
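
Because the clauses must appear in a fixed order, a rule can be checked before the Governor is started. The sketch below is a hypothetical helper, not a DB2 utility. It assumes that setlimit is required, since it is the only clause above not marked as optional with a stated default, and that each clause appears at most once.

```python
CLAUSE_ORDER = ["desc", "time", "authid", "applname", "setlimit", "action"]


def clauses_in_order(clause_names):
    """Return True if the clause names follow the required order."""
    try:
        positions = [CLAUSE_ORDER.index(name) for name in clause_names]
    except ValueError:
        return False  # unknown clause name
    if "setlimit" not in clause_names:
        return False  # assumed to be required in this sketch
    # Strictly increasing positions mean correct order with no repeats.
    return all(a < b for a, b in zip(positions, positions[1:]))


if __name__ == "__main__":
    print(clauses_in_order(["desc", "authid", "applname", "setlimit"]))
    print(clauses_in_order(["setlimit", "desc"]))
```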

Some more example rules:

desc "Restrict the Unit of Work to be less than 30 minutes"

setlimit uowtime 1800 action force;

desc "Slow the use of the java tools down for the training user"

authid training

applname javaw.exe

setlimit cpu 5 locks 100 rowssel 1000;

desc "Increase the priority of an important application so it always completes quickly"

applname importantApp.exe

setlimit

cpu 1

locks 1

rowssel 1

action priority -20;

desc "During day hours do not let anyone run for more than 10 seconds"

time 8:30 17:00

setlimit cpu 10

action force;
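
The last rule applies only between 8:30 and 17:00. A minimal sketch of that kind of time-window check follows. The function names are hypothetical. The handling of a window that runs past midnight is an assumption of this sketch, not something the tutorial states about the Governor.

```python
from datetime import time


def parse_clock(text):
    """Convert a string such as "8:30" into a datetime.time value."""
    hours, minutes = text.split(":")
    return time(int(hours), int(minutes))


def in_window(now, start, end):
    """Return True if now falls inside the start-to-end window."""
    start_t, end_t = parse_clock(start), parse_clock(end)
    if start_t <= end_t:
        return start_t <= now <= end_t
    # Assumption: a start later than the end wraps past midnight.
    return now >= start_t or now <= end_t


if __name__ == "__main__":
    print(in_window(time(9, 15), "8:30", "17:00"))
    print(in_window(time(20, 0), "8:30", "17:00"))
```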

What is the DB2 Query Patroller?

The DB2 Query Patroller provides query and resource management for decision

support systems. The tool accepts, analyzes, prioritizes, and can schedule all the

queries that pass through your system. Once the queries are completed, the users will

also be notified. This functionality is extremely useful in a large environment where the

queries may take hours to complete or where different departments may have different

priorities or uses of the system.

The Query Patroller will also perform load balancing by redirecting work to the

appropriate database partitions to ensure that one is not being used too heavily. The

tool is used only with the multiple partition option for DB2 ESE in an SMP or MPP

environment.

With the latest revision, IBM has completely rearchitected DB2 Query Patroller from the

ground up, integrating the query governing functionality into a DB2 Query Patroller

Center similar to the DB2 Control Center and providing an easy-to-use user interface.

The Query Patroller is completely server-based with no client software required.

Who should use the Query Patroller?

The Query Patroller tool is used by DBAs who administer large databases and data

warehouses. These systems are quite large and have many users running complex

queries against them. Without the Query Patroller, it is difficult to manage all the SQL

being run against the systems.

Documentation

The Query Patroller is included as part of the DB2 Warehouse Manager tool (see

Resources on page 57).

Section 7. Conclusion

Summary

Having finished this tutorial, you should have achieved the following objectives:

1. Understand what snapshots are

2. Learn how to take a snapshot

3. Know what types of snapshots are available