End-to-End Delay Measurement for Instant Messaging Relay Nodes

Muhammad T. Alam, Student Member IEEE, Zheng Da Wu, Member IEEE

School of IT

Bond University, Australia

muhamta123@gmail.com

ABSTRACT

In this paper, we provide a complete end-to-end delay analysis, including the relay nodes, for instant messages. The Message Session Relay Protocol (MSRP) is used to provide congestion control for large messages in the Instant Messaging (IM) service. Large messages are broken into several chunks. These chunks may traverse at most two relay nodes before reaching the destination, according to the IETF specification of the MSRP relay extensions. We discuss the current solutions for sending large instant messages and introduce a proposal to reduce message flows in the IM service. The analysis presented in this paper is divided into two parts. In the first part, we consider a virtual traffic parameter, i.e., the relay nodes are stateless and non-blocking for scalability purposes. This type of relay node is also assumed to have a constant-bit-rate input. The latter part of the analysis considers relay nodes to be blocking and the input parameter to be exponential. The performance analysis with the models introduced in this paper is simple and straightforward, which leads to reduced message flows in the IM service. Also, using our model analysis, a delay-based optimization problem can be easily deduced.

Keywords: Instant messaging, MSRP, Stateless, Chunking.

1 INTRODUCTION

In this paper, we provide complete end-to-end delay analyses including the relay nodes for instant messages. Instant messaging (IM) is one of today's most popular services. Thus, it is no surprise that the 3G IP Multimedia Subsystem (IMS) already supports this service well in its architecture. IM is the service that allows an IMS user to send some content to another user in near-real time. The content in an instant message is typically a text message, but can be an HTML page, a picture, a file containing a song, a video clip, or any generic file.

There are two modes of operation of the instant message service. In the first, each message is a stand-alone instant message that has no relation to previous or future instant messages. This mode of IM is referred to as "pager mode" and is similar to the SMS (Short Message Service) in cellular networks. The other mode is referred to as session-based instant messaging: a message is sent as part of an existing session, typically established with a SIP (Session Initiation Protocol) INVITE request. The two modes have different requirements and constraints, hence both are implemented.

The IETF (Internet Engineering Task Force) has created an extension to SIP that allows a SIP UA (User Agent) to send an instant message to another UA. The extension consists of a new SIP method named MESSAGE. The SIP MESSAGE method (RFC 3428 [1]) is able to transport any kind of payload in the body of the message, formatted with an appropriate MIME (Multipurpose Internet Mail Extensions) type. 3GPP TS 23.228 [25] already contains requirements for Application Servers (ASs) to be able to send textual information to an IMS terminal. 3GPP TS 24.229 [2] introduces support for the MESSAGE method extension. The specification mandates IMS terminals to implement the MESSAGE method [1] and allows its implementation to be an optional feature in ASs.

The work on instant messaging observed so far [4, 5, 6] lacks a thorough analysis of the scalable behavior of the nodes involved in providing the IM service. The messages of IM may be very large. Large instant messages have disadvantages: the service is too slow on low-bandwidth links and, more importantly, messages get fragmented over some transport protocols, so that SIP extensions are needed to resolve this issue. Even if messages are compressed, SIP messages can sometimes be too large. Another problem with SIP is that any proxy can change the transport protocol from TCP (Transmission Control Protocol) to UDP (User Datagram Protocol) or other transport protocols and vice versa. Protocols other than TCP and SCTP (Stream Control Transmission Protocol) are not known for congestion control. If an IMS terminal is

Ubiquitous Computing and Communication Journal

sending a large instant message over a transport the message into chunks and deliver each chunk in a

protocol that does not offer congestion control, the separate SEND request. The message ID corresponds

network proxies can become congested and stop to the whole message, so the receiver can also use it

processing other SIP requests like INVITE, to reassemble the message and tell which chunks

SUBSCRIBE, etc. Even if a terminal sends large SIP belong with which message.

MESSAGE over a transport protocol that Long chunks may be interrupted in mid-

implements end-to-end congestion control e.g., TCP, transmission to ensure fairness across shared

SCTP, the next proxy can switch to UDP and transport connections. This chunking mechanism

congestion may occur. allows a sender to interrupt a chunk part of the way

To solve the issue of large message passing and through sending it. The ability to interrupt messages

congestion control in IM, a limit has been placed on allows multiple sessions to share a TCP connection,

the SIP MESSAGE method such that MESSAGE and for large messages to be sent efficiently while

requests cannot exceed the MTU (Maximum not blocking other messages that share the same

Transmit Unit) minus 200 bytes. If the MTU is not connection, or even the same MSRP session. Any

known, this limit is 1300 bytes. Another solution to chunk that is larger than 2048 octets MUST be

sending SIP MESSAGE requests with large bodies to interruptible [24].

use the content indirection mechanism [3]. Content Another characteristic of MSRP is that, MSRP

indirection allows replacing a MIME body part with messages no not traverse SIP proxies. This is an

an external reference, which is typically an HTTP advantage, since SIP proxies are not bothered with

(Hyper Text Transfer Protocol) URI (Universal proxying large instant messages. Also, MSRP does

Resource Identifier). not run over UDP or any other transport protocol that

Another solution to getting around the size limit does not offer end-to-end congestion control. It

problem with MESSAGE is to use session-based IM supports instant messages to traverse zero, one or

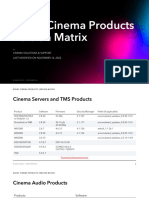

mode rather than pager mode. Session-based instant two MSRP relays (see Figure 1). The relay extension

message mode uses the SIP INVITE method to of MSRP is defined in [7].

establish a session. An IMS terminal establishes a

session to send and receive instant messages via

Message Session Relay Protocol (MSRP) [24]. Relay Relay

MSRP is a simple text-based protocol whose main Source node 1 node 2 Destination

characteristic is that it runs over transport protocols

that offer congestion control. In the IMS, MSRP is Flow 1

implemented in the IMS terminals. Analysis is

required to determine the service order of such

Flow 2

servers. Our work in this paper is to analyze the

delay bound of the relay nodes that implements the

MSRP to provide instant messaging service. The

benefit of the work lies in the simplicity of the model

derivation. Flow v

The rest of the paper is organized as follows.

Section 2 provides a review of MSRP. The SEND (β , α ) (β , α )

chunking system, our proposal of scalability over

MSRP relay nodes, delay analysis for both work Figure 1: IM with maximum 2 relay nodes

conserving non-blocking and blocking situation are

described in Section 3. Finally we conclude the paper

in Section 4. The default is that SEND messages are

acknowledged hop-by-hop. Each relay node that

receives a SEND request acknowledges receipt of the

2 BACKGROUND request before forwarding the content to the next

relay or the final target. When sending large content,

There are currently three methods defined in the client may split up a message into smaller pieces;

MSRP after the INVITE message is sent for an IM each SEND request might contain only a portion of

session set up: i) SEND: sends an instant message of the complete message. For example, when Alice

any arbitrary length from one endpoint to another, ii) sends Bob a 4GB file called "file.mpeg", she sends

VISIT: and endpoint connects to another end point, several SEND requests each with a portion of the

and iii) REPORT: endpoint or a relay provides complete message. Relays can repack message

message delivery notifications. fragments en-route. As individual parts of the

MSRP does not impose any restriction on the complete message arrive at the final destination

size of an instant message. If an IMS user, Alice client, the receiving client can optionally send

wants to deliver a very large message, she can split REPORT requests indicating delivery status. MSRP
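The splitting and reassembly described above can be sketched in a few lines. This is only an illustration under our own assumptions: the `Chunk` record and the `split_message` and `reassemble` helpers are hypothetical names, offsets are 0-based for simplicity, and the sketch models a shared message ID, a position and a last-chunk flag rather than the actual MSRP wire format.

```python
import uuid
from dataclasses import dataclass
from typing import List


@dataclass
class Chunk:
    """Hypothetical in-memory model of one SEND chunk (not the MSRP wire format)."""
    message_id: str  # shared by every chunk of one message
    offset: int      # position of the first payload byte in the whole message (0-based here)
    total: int       # size of the whole message in bytes
    last: bool       # True when no further chunk follows
    payload: bytes


def split_message(data: bytes, chunk_size: int = 2048) -> List[Chunk]:
    """Split one large message into chunks that all carry the same message ID."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    message_id = uuid.uuid4().hex
    total = len(data)
    chunks = []
    # max(total, 1) makes an empty message yield a single empty chunk.
    for offset in range(0, max(total, 1), chunk_size):
        payload = data[offset:offset + chunk_size]
        last = offset + chunk_size >= total
        chunks.append(Chunk(message_id, offset, total, last, payload))
    return chunks


def reassemble(chunks: List[Chunk]) -> bytes:
    """Rebuild the message from chunks of one message, in whatever order they arrived."""
    if len({c.message_id for c in chunks}) != 1:
        raise ValueError("chunks belong to different messages")
    ordered = sorted(chunks, key=lambda c: c.offset)
    return b"".join(c.payload for c in ordered)


if __name__ == "__main__":
    message = bytes(range(256)) * 20            # 5120 bytes of sample content
    parts = split_message(message, 2048)
    print(len(parts), [p.last for p in parts])
    print(reassemble(list(reversed(parts))) == message)
```

Because every chunk carries the message ID and its position, the receiver does not depend on arrival order to rebuild the message.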


MSRP nodes can send individual portions of a complete message in multiple SEND requests. As relays receive chunks they can reassemble or re-fragment them as long as they resend the resulting chunks in order.

A series of papers [8-10] have studied capacity scaling in relay networks. These works quantify the impact of large wireless relay networks in terms of signal-to-noise ratio. Most of the work focuses on characterizing one relay node only. The work of H. Bolcskei et al. in [10] demonstrated that significant performance gains can be obtained in wireless relay networks employing terminals with multiple-input multiple-output (MIMO) capability. However, these works do not address the issue of characterizing traffic parameters in relay nodes where the relay nodes do not keep the transaction states. A significant challenge is to schedule the large chunks and characterize the traffic parameters under delay bounds.

In any IMS network the capacity (memory/storage) is large for IM communication. Large messages have to be broken down into chunks to overcome the fixed size limit. Real-time service of IM is always desirable. However, issues arise if a) the relay nodes between the source and destination IMS terminals have slow links, b) traffic order gets distorted before reaching the relay nodes, and c) relay nodes maintain transaction states. Therefore an efficient service discipline for the chunks of IM is necessary. In an IM system, transmission time typically depends on the number of chunks in messages. Moreover, the service time of the chunks depends on batch size arrivals. The number of chunks in a large message is not fixed. Thus analysis of such a system is not trivial. In this work, we explore delay characteristics of instant messages when the messages traverse relay nodes.

The end-to-end delay bound of IMS instant messages indeed requires much attention. Although the study of the fundamental frameworks, namely Integrated and Differentiated Services, has a long history, defining the flow characteristic of an IMS instant message traversing the relay nodes (the maximum number of relay nodes is two for an IMS terminal [7]) under MSRP is not trivial due to the arbitrary number of chunks in IM messages. We analyze the end-to-end delay for an IM under the work-conserving situation. The delay bound is useful for formulating the optimization of the end-to-end IM transmission time.

3 MODELLING

For large SEND messages in IM, MSRP delivers the content in several SEND messages, where each SEND contains one chunk of the overall message. The crucial aspect in this paper is the ordering of SEND chunks at the relay nodes if they do not keep transaction states of a chunk flow for scalability purposes.

Long chunks are interrupted in mid-transmission to ensure fairness across shared transport connections. To support this, MSRP uses a boundary-based framing mechanism. The start line of an MSRP request contains a unique identifier that is also used to indicate the end of the request. Included at the end of the end-line, there is a flag that indicates whether this is the last chunk of data for this message or whether the message will be continued in a subsequent chunk. There is also a Byte-Range header field in the request that indicates the overall position of this chunk inside the complete message.

This chunking mechanism allows a sender to interrupt a chunk part of the way through sending it. The ability to interrupt messages allows multiple sessions to share a TCP connection, and for large messages to be sent efficiently while not blocking other messages that share the same connection, or even the same MSRP session. As mentioned before, any chunk that is larger than 2048 octets MUST be interruptible. While MSRP would be simpler to implement if each MSRP session used its own TCP connection, there are compelling reasons to conserve connections. For example, the TCP peer may be a relay device that connects to many other peers. Such a device will scale better if each peer does not create a large number of connections. The chunking mechanism only applies to the SEND method, as it is the only method used to transfer message content [24]. We call the chunking mechanism, i.e., breaking one large SEND message into several SEND messages, a SEND system.

Proposal: Traditional MSRP [24] may be used without traditional session set up in IMS to provide the congestion control. Also, MSRP relay nodes should not keep transaction states for the SEND chunks.

The benefit of this proposed technique is reduced message flows in the network as well as scalability at the relay nodes. In order to comply with this, we propose the following scheduling. The detailed analysis that captures the delay bound of the relay nodes is provided below.

We provide the analysis of one relay node first, in terms of aggregate flows of two SEND message chunk flows and the delay bound, which will later be used to compute the delay bound for SEND systems with two relay nodes, a source and a destination. We assume the following for the analysis. One IMS source terminal sends multiple large instant messages (SEND) to the same destination via two relay nodes. Each of the SEND messages is broken into small SEND chunks. It is to be noted that we are assuming relay nodes do not keep transaction states of the chunks. Though, the IETF draft [7] specifies


that the relays may keep transaction states for a very short time, it will be expensive for the relay nodes to keep such states if a huge number of clients are being served and traffic flows are massive. We are only interested in the busy traffic situation for the derivation of properties in this research, and thus server analysis with the stateless assumption is more practical. We assume message chunks are served according to the order of the delivery time tag of the previous node, and all chunks are treated as if they belong to a single flow due to the elastic and massive flows of SEND chunk messages in a flow. Thus performance analysis of an individual flow at a relay node can be achieved by analyzing the aggregated flows at this node.

3.1 For Non-blocking Relay Nodes

Here we adopt a scheduler which services a job according to the delivery time stamps of the previous node. We aim to provide work-conserving but stateless scheduling of the chunks. Every message chunk has a message ID that identifies the SEND message to which it belongs. The source node generates the chunks and delivers them to a relay node. The ordering is considered to be the order in which the source node generates chunks for the first relay node, then the first relay node for the second relay node, and so on. During delivery these chunks may receive time stamp tags. These chunks may reach the relay node out of order, and hence the arrival times of the chunks at the relay node may not always follow the order IDs of the message chunks.

Let the propagation delay and link capacity of any link be 0 and $c$, respectively. The sequence of chunks transmitted by a source to a destination is referred to as a flow (Figure 1). The paths via relay nodes are predetermined as defined in the MSRP relay extensions [7] (the relay nodes are authorized explicitly by the end terminals). At a relay node, let chunk $k$ of a flow $i$ be attached with a time stamp tag according to the delivery time from the previous node of $A_i^k + L_i/\alpha_i$, where $\alpha_i$, $L_i$, and $A_i^k$ are the input rate, chunk size and arrival time of chunk $k$ of flow $i$, respectively; the delivery order time stamp of chunk $k$ of flow $i$ is updated at the next relay node with an increment of $L_{\max}/c + L_i/\alpha_i$, and chunks are served in increasing order of their previous node's delivery order time tag, where $L_{\max}$ is the maximum size of a chunk in all flows. Under these conditions, it is easy to perceive that the worst case delay of a flow $i$ at any relay node is no longer than $L_{\max}/c + L_i/\alpha_i$ [11].

We adopt the traffic model of [12, 13], which has been widely adopted for characterizing network traffic. If the total traffic of a flow $F(t_1, t_2)$ arriving in the time interval $(t_1, t_2]$ is bounded by:

$$F(t_1, t_2) \le \sigma + \rho\,(t_2 - t_1) \qquad (1)$$

then the flow is referred to as conforming to the traffic parameter $(\sigma, \rho)$. Here the assumptions are under the non-overflow condition with a flow injected into a leaky bucket with buffer size $\sigma$ and output rate $\rho$. In other words, $\rho$ is the average traffic rate in the long run and $\sigma$ is the burst bound of the flow $(\sigma, \rho)$. It is practical to assume that the links of relay nodes will be subject to a delay bound in terms of propagation delay. We consider a chunk to have arrived only after its last bit has arrived at a relay node, and the delivery time of a chunk at a node is the time when the last bit of the chunk leaves the relay node. Note that we are considering the input traffic to be constant bit rate for the relay nodes in this section.

If we consider the steady state of the network, i.e., traffic load less than one, then a chunk will only be delayed at a node if there is a chunk being served or there are chunks waiting in the buffer with earlier delivery time stamps. We assume that the start time of each busy period is initialized at 0. Here, a busy period is an interval of time during which the transmission queue of the output link is continuously backlogged, which is consistent with [12].

The previous node's delivery time stamp tag of each chunk lags behind its arrival time at any relay node. Note that chunks are served in the order of their previous node's delivery time stamps, which is our assumption. Thus, the delay for each chunk to traverse the network remains the same only if the time stamps of all chunks are increased by a constant $D$ at the previous node. We can assume that, if the burst of each flow is bounded and the capacity of any link is no less than the average rate of the flows traversing the link, there exists a worst case delay bound in the network, i.e., the worst case delay of a flow to traverse any pair of relay nodes is bounded.

Since we consider that the relay node need not keep transaction states of the SEND flows, chunks in the buffer are served in the order of their delivery time stamp tags, not their arrival times. There is also no distinct relation between the delivery time stamp of a chunk and its arrival time. Thus, a chunk with an earlier delivery time stamp than another chunk, though it arrives later, may be served first. This may happen due to the well-known traffic distortion problem [12].
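The service rule above, in which buffered chunks are served by previous-node delivery time stamp rather than by arrival order, can be illustrated with a small priority-queue simulation. This is a minimal sketch under assumptions of our own: a single output link of capacity `capacity`, non-preemptive and work-conserving service, and a hypothetical function name `serve_by_time_stamp`; the numeric values are made up for illustration.

```python
import heapq
from typing import List, Tuple

# One chunk is described by (arrival_time, time_stamp, size).
ChunkSpec = Tuple[float, float, float]


def serve_by_time_stamp(chunks: List[ChunkSpec], capacity: float) -> List[Tuple[int, float]]:
    """Return (chunk index, departure time) pairs in the order of service.

    Among the chunks already in the buffer, the one with the smallest
    previous-node time stamp is served next; arrival order is ignored.
    """
    by_arrival = sorted(range(len(chunks)), key=lambda i: chunks[i][0])
    buffer: List[Tuple[float, int]] = []   # heap of (time_stamp, chunk index)
    served: List[Tuple[int, float]] = []
    now = 0.0
    nxt = 0
    while nxt < len(by_arrival) or buffer:
        # Idle link: jump to the next arrival (work conserving).
        if not buffer and chunks[by_arrival[nxt]][0] > now:
            now = chunks[by_arrival[nxt]][0]
        # Admit every chunk that has arrived by now.
        while nxt < len(by_arrival) and chunks[by_arrival[nxt]][0] <= now:
            i = by_arrival[nxt]
            heapq.heappush(buffer, (chunks[i][1], i))
            nxt += 1
        _, i = heapq.heappop(buffer)
        now += chunks[i][2] / capacity     # transmission time of the chunk
        served.append((i, now))
    return served


if __name__ == "__main__":
    # Chunk 2 arrives after chunk 1 but carries an earlier time stamp.
    demo = [(0.0, 5.0, 100.0), (0.5, 3.0, 100.0), (0.6, 1.0, 100.0)]
    for index, departure in serve_by_time_stamp(demo, capacity=100.0):
        print(index, departure)
```

In the demo, the chunk that arrives last but carries the earliest time stamp overtakes the chunk that arrived before it, which is the reordering effect discussed above.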


Therefore, in this regard, it is more reasonable to evaluate a chunk's delay with reference to its previous node's delivery time stamp, rather than its arrival time at the current node. We need to characterize the traffic of this kind of scheduling for the relay nodes. We define a parameter $(\beta, \alpha)$ such that the total traffic of the flow of chunks whose time stamps are in the range $(t_1, t_2]$ is no larger than $\beta + \alpha\,(t_2 - t_1)$, which is similar to the $(\sigma, \rho)$ traffic model. We assume that chunks are ordered by their previous node's delivery time stamps as $P_1, P_2, \ldots, P_k, \ldots$ ($R_i \ge R_j$ if $i > j$, where $R_i$ is the previous node's delivery time tag of chunk $P_i$). For any two chunks $P_m$ and $P_k$ ($k \ge m$), $\beta + \alpha\,(R_k - R_m) \ge \sum_{i=m}^{k} L_i$, where $L_i$ is the size of $P_i$. A chunk may receive service as long as there is no chunk in the buffer when it arrives. Thus, it is necessary to take into account the arrival time of a chunk to characterize traffic in a relay node. Therefore, we define the traffic parameter for any two chunks of a flow as follows: for any two chunks $P_k$ and $P_m$ of a flow ($k \ge m \ge 1$),

$$\beta + \alpha\left(R_k - \max\{R_{m-1}, \min\{A_m, A_{m+1}, \ldots, A_k\}\}\right) \ge \sum_{i=m}^{k} L_i$$

where $A_i$ is the arrival time of chunk $P_i$, $i = 1, 2, \ldots$; we refer to $F(t_1, t_2) = \beta + \alpha\,(t_2 - t_1)$ in the time interval $(t_1, t_2]$ as the traffic function of this flow with the traffic parameter $(\beta, \alpha)$. We apply the additive property of the $(\sigma, \rho)$ traffic model [12] to obtain the following:

Proposition 1: Given two flows with traffic parameters $(\beta_1, \alpha_1)$ and $(\beta_2, \alpha_2)$, the traffic parameter of the aggregated traffic of the two flows is $(\beta_1 + \beta_2, \alpha_1 + \alpha_2)$.

Proof: Assume that chunks are ordered by their delivery order. Given any two chunks $P_k$ and $P_m$ ($k \ge m$) of the aggregated flow, assume that chunks $P_{i_1}, P_{i_2}, \ldots, P_{i_n}$ ($i_1 < i_2 < \cdots < i_n$ and $n \le k - m + 1$) belong to flow 1, and the rest of the chunks $P_{j_1}, P_{j_2}, \ldots, P_{j_p}$ ($j_1 < j_2 < \cdots < j_p$ and $p \le k - m + 1$) belong to flow 2. Thus for the virtual traffic parameter, we have

$$\beta_1 + \alpha_1\left(R_{i_n} - \max\{R_{i_1 - 1}, \min\{A_{i_1}, A_{i_2}, \ldots, A_{i_n}\}\}\right) \ge \sum_{s=i_1}^{i_n} L_s \qquad (2)$$

$$\beta_2 + \alpha_2\left(R_{j_p} - \max\{R_{j_1 - 1}, \min\{A_{j_1}, A_{j_2}, \ldots, A_{j_p}\}\}\right) \ge \sum_{s=j_1}^{j_p} L_s \qquad (3)$$

Since

$$\max\{R_{i_n}, R_{j_p}\} = R_k,$$

$$\min\left\{\min\{A_{i_1}, A_{i_2}, \ldots, A_{i_n}\},\ \min\{A_{j_1}, A_{j_2}, \ldots, A_{j_p}\}\right\} = \min\{A_m, A_{m+1}, \ldots, A_k\}$$

and

$$\min\{R_{i_1 - 1}, R_{j_1 - 1}\} = R_{m-1},$$

we have

$$(\beta_1 + \beta_2) + (\alpha_1 + \alpha_2)\left(R_k - \max\{R_{m-1}, \min\{A_m, A_{m+1}, \ldots, A_k\}\}\right)$$
$$\ge \left[\beta_1 + \alpha_1\left(R_{i_n} - \max\{R_{i_1 - 1}, \min\{A_{i_1}, \ldots, A_{i_n}\}\}\right)\right] + \left[\beta_2 + \alpha_2\left(R_{j_p} - \max\{R_{j_1 - 1}, \min\{A_{j_1}, \ldots, A_{j_p}\}\}\right)\right] \ge \sum_{s=m}^{k} L_s \qquad (4)$$

Application of Proposition 1: If the traffic functions of all flows are known, the aggregated virtual traffic function can be derived by Proposition 1.

However, the chunk pattern may be distorted at a relay node. In such a case, we can provide the following relation for a flow in terms of the worst case delay of the outgoing traffic.

Proposition 2: Assume that the traffic parameter of the input traffic of a SEND chunk flow at a relay node is $(\beta, \alpha)$ and the worst case delay to traverse a relay node is $D$ (let the mean service time of a chunk at this current node be $d$). We can characterize the output traffic of this flow as $(\beta', \alpha)$, where the buffer requirement is $\beta' = \max\{0, \alpha(D - d) + L_{\max}\} + \beta$.

Proof: Assume that chunks are ordered by their delivery times at this current node, i.e., for chunks $P_k$ and $P_m$ ($k \ge m$), $T_k \ge T_m$, where the delivery order time tag of chunk $P_i$, $i = 1, 2, \ldots$, is $T_i$ and is also the arrival time of $P_i$ of the output traffic. As


the worst case delay of a chunk is $D$, we have the following relation:

$$T_i \le R_i + D \qquad (5)$$

Again, since the delivery order of each chunk is delayed by $d$ and $\beta' = \max\{0, \alpha(D - d) + L_{\max}\} + \beta$, for any two chunks $k$ and $m$ ($k \ge m \ge 1$), we get

$$\beta' + \alpha\left[R_k + d - \max\{R_{m-1} + d, T_m\}\right] \ge \beta' + \alpha\left[R_k + d - \max\{R_{m-1} + d, R_m + D\}\right]$$
$$\ge \min\{\beta + \alpha(R_k - R_{m-1}),\ \beta + L_{\max} + \alpha(R_k - R_m)\} \qquad (6)$$

And

$$\beta + \alpha\left[R_k - \max\{R_{m-1}, \min[A_m, A_{m+1}, \ldots, A_k]\}\right] \ge \sum_{i=m}^{k} L_i$$

i.e.,

$$\beta + \alpha(R_k - R_{m-1}) \ge \sum_{i=m}^{k} L_i \qquad (7)$$

Now let the previous node's delivery order of a chunk $P_i$, $i = 1, 2, \ldots$, at the outgoing link of the relay node be $R'_i = R_i + D + L_{\max}/\alpha$. Thus from Eq. (6) and (7) we have:

$$\beta + \alpha\left[R'_k - \max\{R'_{m-1}, \min[T_m, T_{m+1}, \ldots, T_k]\}\right] \ge \min\{\beta + \alpha(R_k - R_{m-1}),\ \beta + L_{\max} + \alpha(R_k - R_m)\}$$
$$\ge \min\left\{\sum_{i=m}^{k} L_i,\ \sum_{i=m+1}^{k} L_i + L_{\max}\right\} \ge \sum_{i=m}^{k} L_i \qquad (8)$$

Thus the characteristic of the traffic parameter for worst case delay $D$ is $(\beta', \alpha)$ and Proposition 2 is proved.

Next we analyse the worst case delay bound of a SEND chunk flow to traverse a relay node.

Proposition 3: Let $P_k^i$ be the $k$th chunk of flow $i$ and assume that the chunks are ordered according to their current node's delivery order time tag. Define

$$\theta_i = \max\left\{\min_{k \ge m > 1}\left\{R_{m-1}^i - \min\{A_m^i, A_{m+1}^i, \ldots, A_k^i\}\right\},\ 0\right\}$$

where $R_m^i$ and $A_m^i$ are the delivery time tag from the previous node and the arrival time at the current node of $P_m^i$; let $L_{\max}$ be the maximum size of a chunk. Assume that the input traffic of a relay node consists of flows $1, 2, \ldots, v$, whose traffic parameters are $(\beta_i, \alpha_i)$ respectively, and the capacity of the output link of this node is $c$, with $c \ge \sum_{i=1}^{v} \alpha_i$. Under these assumptions, the worst case delay bound at a current relay node is:

$$\frac{1}{c}\left[\sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i) + L_{\max}\right] \qquad (9)$$

Proof: For any chunk $P_k$, assume $m$ to be the biggest integer, $k > m > 0$, such that $R_k < R_m$ and $T_k > T_m$, where $R_i$ and $T_i$ are the previous node's delivery time tag and the delivery time of $P_i$ at the current node. Thus

$$R_m > R_k \ge R_i, \quad \text{for all } m < i < k \qquad (10)$$

And

$$T_k > T_i \ge T_m, \quad \text{for all } m < i < k \qquad (11)$$

In other words, $P_m$ is transmitted before chunks $P_{m+1}, \ldots, P_k$; however, its previous node's delivery time tag is greater than that of chunks $P_{m+1}, \ldots, P_k$. Thus

$$\min\{A_{m+1}, \ldots, A_k\} > T_m - \frac{L_m}{c} \qquad (12)$$

Since $P_{m+1}, \ldots, P_k$ arrive after $T_m - L_m/c$ and depart before $P_k$ at the current relay node, we have

$$T_k = T_m + \frac{\sum_{i=m+1}^{k} L_i}{c} \qquad (13)$$

Note that $R_i \ge A_i$ for all $i = 1, 2, \ldots$, and thus $R_k \ge R_i \ge A_i \ge T_m - L_m/c$ for $i = m+1, \ldots, k-1$. Furthermore, from the definition of $\theta_i$ given in Proposition 3, we have

$$\min\{A_m^i, A_{m+1}^i, \ldots, A_k^i\} + \theta_i \le \max\{R_{m-1}^i, \min\{A_m^i, A_{m+1}^i, \ldots, A_k^i\}\}$$

i.e.,

$$\beta_i + \alpha_i\left[R_k^i - \left(\min\{A_m^i, A_{m+1}^i, \ldots, A_k^i\} + \theta_i\right)\right] \ge \sum_{j=m}^{k} L_j^i \qquad (14)$$

Since chunks $P_{m+1}, \ldots, P_k$ comprise the chunks of flows $1, 2, \ldots, v$, we have


$$\sum_{i=1}^{v}\left\{\beta_i + \alpha_i\left[R_k - \left(\min\{A_m, A_{m+1}, \ldots, A_k\} + \theta_i\right)\right]\right\} \ge \sum_{i=m+1}^{k} L_i$$

i.e.,

$$\sum_{i=m+1}^{k} L_i \le \sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i) + \left(\sum_{i=1}^{v}\alpha_i\right)\left[R_k - \left(T_m - \frac{L_m}{c}\right)\right] \qquad (15)$$

From Eq. (13) and Eq. (15) we have

$$T_k = T_m + \frac{\sum_{i=m+1}^{k} L_i}{c} \le T_m + \frac{\left(\sum_{i=1}^{v}\alpha_i\right)\left[R_k - \left(T_m - \frac{L_m}{c}\right)\right] + \sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i)}{c}$$
$$\le R_k + \frac{L_{\max}}{c} + \frac{\sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i)}{c} \qquad (16)$$

If there does not exist such $m$, then $P_1, \ldots, P_{k-1}$ all leave the node before $P_k$ and thus we have

$$T_k = \frac{\sum_{i=1}^{k} L_i}{c} \le \frac{\left(\sum_{i=1}^{v}\alpha_i\right)R_k + \sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i)}{c}$$

i.e.,

$$T_k - R_k \le \frac{\sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i)}{c} \qquad (17)$$

Thus the delay is bounded by $\frac{\sum_{i=1}^{v}(\beta_i - \alpha_i\theta_i)}{c} + \frac{L_{\max}}{c}$ and Proposition 3 is proved.

Application of Proposition 2 and Proposition 3: The proposed propositions are straightforward to use for performance analysis. From the above relation, we can also characterize the outgoing traffic parameter of a relay node for a given propagation delay $\delta$. Let $\delta$ be the propagation delay of a chunk of a flow $(\beta, \alpha)$, i.e., the propagation delay of a chunk from a relay node to the next relay node. Then the worst case delay of a flow is $D + \delta$ if this is the first relay node, i.e., there is no update at the previous node of this flow. Here we assume that this is the first relay node and the flows arrive from the source directly at this node. In this case, if all of the chunks of the flow are updated / serviced by an increment $d$ at the relay node, then the input traffic parameter for the next relay node is $(\beta', \alpha)$, where the buffer requirement is $\beta' = \max\{0, \alpha(D + \delta - d) + L_{\max}\} + \beta$.

The delay bound of Proposition 3 can further be tightened. For instance, if $\sum_{i=1}^{v} \alpha_i / c \to 0$, then the worst case delay bound would be $\left(\sum_{i=1}^{v}\beta_i + L_{\max}\right)/c$. On the other hand, if $\theta = \min_i\{\theta_i\}$ and the delivery time tags at the previous node of all chunks are decreased by $\theta$, then the traffic functions of all flows remain the same and the actual worst case delay bound from Proposition 3 is $\left(\sum_{i=1}^{v}\beta_i + L_{\max}\right)/c - \theta$. Therefore, it is possible to tighten the worst case delay in this instance as well. If all chunks' delivery time stamps at the previous node are increased or decreased by a constant at the entrance to a relay node, their delivery time remains unchanged. If all chunks' previous node's delivery time tags are decreased by $\theta$, then, applying Proposition 3, for any chunk $P_k$ we have the following:

$$T_k - (R_k - \theta) \le \frac{\sum_{i=1}^{v}\left[\beta_i - \alpha_i(\theta_i - \theta)\right] + L_{\max}}{c}$$

i.e.,

$$T_k - R_k \le \frac{\sum_{i=1}^{v}\left[\beta_i - \alpha_i(\theta_i - \theta)\right] + L_{\max}}{c} - \theta \qquad (18)$$

i.e., the worst case delay is bounded by

$$\frac{\sum_{i=1}^{v}\left[\beta_i - \alpha_i(\theta_i - \theta)\right] + L_{\max}}{c} - \theta$$

Now if we take the propagation delay into account, the increment for flow $n$, $1 \le n \le v$, should be

$$\frac{\sum_{i=1}^{v}\left[\beta_i - \alpha_i(\theta_i - \theta)\right] + L_{\max}}{c} - \theta + \delta_{n,i}$$

where $\delta_{n,i}$ is the propagation delay of flow $n$ to traverse the link between relay node $i$ and its next adjacent relay node.
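The bounds of Proposition 3 reduce to simple arithmetic once the per-flow parameters are known. The following is a minimal sketch rather than part of the analysis itself; the function names and the numeric values are hypothetical and chosen only for illustration. It evaluates the aggregate parameter of Proposition 1, the bound of Eq. (9), and the tightened bound of Eq. (18) with an optional propagation delay.

```python
from typing import List, Tuple

# One flow is described by (beta_i, alpha_i, theta_i) in the notation of Proposition 3.
Flow = Tuple[float, float, float]


def aggregate(flows: List[Flow]) -> Tuple[float, float]:
    """Proposition 1: the (beta, alpha) parameters of aggregated flows add up."""
    return (sum(b for b, _, _ in flows), sum(a for _, a, _ in flows))


def _check_capacity(flows: List[Flow], c: float) -> None:
    # Proposition 3 assumes the link capacity covers the sum of the flow rates.
    if c < sum(a for _, a, _ in flows):
        raise ValueError("Proposition 3 assumes c >= sum of alpha_i")


def delay_bound(flows: List[Flow], l_max: float, c: float) -> float:
    """Eq. (9): (1/c) * [sum_i (beta_i - alpha_i * theta_i) + L_max]."""
    _check_capacity(flows, c)
    return (sum(b - a * t for b, a, t in flows) + l_max) / c


def tightened_delay_bound(flows: List[Flow], l_max: float, c: float,
                          delta: float = 0.0) -> float:
    """Eq. (18) with theta = min_i theta_i, plus an optional propagation delay delta."""
    _check_capacity(flows, c)
    theta = min(t for _, _, t in flows)
    total = sum(b - a * (t - theta) for b, a, t in flows) + l_max
    return total / c - theta + delta


if __name__ == "__main__":
    # Illustrative values only: two flows sharing a link of capacity 1000.
    flows = [(2000.0, 300.0, 0.5), (1000.0, 200.0, 1.0)]
    print(aggregate(flows))
    print(delay_bound(flows, l_max=1500.0, c=1000.0))
    print(tightened_delay_bound(flows, l_max=1500.0, c=1000.0))
```

Since the two bounds differ by $\theta\left(\sum_i \alpha_i / c - 1\right)$, which is never positive when $c \ge \sum_i \alpha_i$, the tightened value cannot exceed the value from Eq. (9).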


Example: In order to further analyze the proposed propositions, consider two cases. Let two flows, flow 1 and flow 2, contend for the bandwidth of a link with a capacity of $2L/c$. The reserved bandwidths of the two flows are both $L/c$, and all chunks are of size $L$. However, the inter-arrival times of two consecutive chunks of flows 1 and 2 are $c$ and $c/2$, respectively. Assume that the first chunks of both flows arrive at time 0, and the arrival time of the $k$th chunk of flow $i$, $i = 1, 2$, is $A_i^k$, where $A_i^k = (k-1)c$ if $i = 1$, and $A_i^k = (k-1)c/2$ if $i = 2$. The previous node's time tag attached to the $k$th chunk of flow $i$ is, however, $kc$, which is independent of $i$ and will make each flow attain its reserved bandwidth. Therefore, it can be observed that the worst case delay of flow 1 is $c$, and it is infinity for flow 2. However, if the previous node's time tag of the $k$th chunk of flow $i$, $i = 1, 2$, is set to $A_i^k + c$, then the worst case delays of both flows become infinity. We can observe such characteristics from the propositions we derived. The delivery orders at the previous node attached to the $k$th chunk of flow 2 are i) $kc$ and ii) $A_i^k + c$, respectively. In the first case the traffic parameters of the two flows are $(0, L/c)$ and $(0, L/c)$, i.e., the same. By the aggregate property from Proposition 1, we have the traffic parameter of the aggregate flow as $(0, 2L/c)$, and by Proposition 3 the delay bound of any chunk is $c/2$ since $\theta_1 = \theta_2 = 0$. Therefore, since the delivery order at the previous node of a chunk lags behind its arrival time by an amount bounded by $c$ and infinity in flows 1 and 2, respectively, the worst case delays of the flows are $3c/2$ and infinity, respectively. In the latter case, the traffic parameter of flow 2 is $(\infty, L/c)$. Thus the aggregate traffic flow is $(\infty, 2L/c)$ and the worst case delay is infinity. Thus, we see that the [...] relay nodes as:

$$\sum_{r=1,2}\left[\frac{\sum_{i=1}^{v}(\beta_{ri} - \alpha_{ri}\theta_i) + L_{\max}}{c}\right] + \sum_{i=1}^{v}\alpha_{2i}\,\delta_{2,e} \qquad (19)$$

where $r$ represents the index of relay nodes, $\alpha_{2i}$ is the traffic rate of flow $i$ reaching the end destination terminal $e$ from the second relay node, and $\delta_{2,e}$ is the propagation delay for a chunk of a flow to reach the destination end terminal from the second relay node. Eq. (19) achieves the goal of our work in the stateless work-conserving situation. Note that the design and analysis of the above work are consistent with [18-20], with the traffic parameter behaving as virtual clock arrivals as shown in [21]. Li and Knightly [22, 23] provided an analysis for multihop stateless scheduling, but the simplicity of our analysis is perhaps preferable for deployment regarding virtual traffic flows.

So far we have developed a scheduling model under the consideration that the relay nodes are in steady state, that is, the traffic load is less than one. However, this situation may not hold and the chunks may be blocked in an overload condition. Losses may occur because chunks are rejected when they arrive at a full (arrival) buffer. In this case a full retransmission is initiated by the sender IMS terminal after a timeout, which causes a significant increase in the end-to-end delay. Chunks may also be corrupted as they arrive at a relay node. There is a large series of recent work on the asymptotic analysis of lost messages with different server characteristics. The characteristic of the loss probability for corrupted messages can be found in Abramov's PhD work [15]. Abramov showed the effect of adding redundant chunks to a large message. Summarizing from [14, 15] to our context, adding redundant chunks when the load at a relay node is slightly greater than 1 will decrease the message loss probability at the rate of a geometric progression. However, adding redundant chunks is not profitable if the load is much greater than 1, i.e., the loss probability will increase in this case.

3.2 For Overloaded Relay Nodes

In this section of the paper, we analyze chunks being blocked or rejected due to a full buffer. In the previous section we presented the delay analysis for one source and destination with two intermediate relay

worst case delays of both flows become infinity nodes. In practical, there may be times chunks arrive

according to our analysis. in batches at the relay nodes from the same IMS

From the above analysis using proposition 3, we terminal [7]. Alternatively, chunks from the different

can find the end-to-end delay bound for an IMS sources can traverse the same two relay nodes in a

source to an IMS destination terminal using two network. We focus on analyzing the response time

Ubiquitous Computing and Communication Journal

and mean transmission times of chunks in batch given probability.

arrival conditions in this section. Let the batches of The value of the time-out period is set to a multiple k

chunks arrive as Poisson process with mean arrival of the estimated mean transmission time, for some

rate λ0 (number of batch arrivals per unit time) and k ≥ 1 set by the user. Let, the rate of successful

mean batch size b. We provide thorough derivation transmissions be λ ′′ . This makes the probability that

of mean transmission time from a source IMS a chunk is successfully transmitted to the

terminal to a destination IMS terminal including two λ ′′

relay nodes in between them. Here we focus on receiver . Thus the probability that a chunk is

analyzing the response time and mean transmission

λ

rejected at either of the relay nodes due to a full

times of SEND systems.

buffer is,

In a batch-Poisson stream, we can assume that

successive batches arrive after intervals which are λ ′′

p f = 1− (22)

independent and exponentially distributed. Let the λ

service rates (service times being independent of An IMS terminal may also define the probability of

each other and of the arrival process), are µ1 and retransmission p r . For p r = 0 , there will be no

µ2 for relay node 1 and 2, respectively. The retransmissions. This will impact with higher losses

departure process from the first relay node is of chunks but lower congestion. For p r = 1 , there is

approximated as Poisson with unit batches. We see always a retransmission attempt and so there are no

that both the relay nodes behave as M/GI/1 servers losses and the number of retransmissions is unlimited.

under the above assumptions though a deeper This causes extra load on the network which might

analysis to correlate the arrival process of the second result in congestion and hence significantly longer

relay node with the departure process at the first delays.

relay node is required. Any chunk which has not It is desirable for every system that losses do not

transferred successfully is lost. We are interested of

exceed a specified maximum loss rate Lr . This leads

the losses due to the full buffer in this section. By

full buffer we mean that the relay nodes are to the relation with the chunk arrival rates as follows:

considered to have finite buffer only. (1 − p r )(λ − λ ′′) ≤ bλ0 Lr (23)

We assume the effect on the sender and receiver is We now provide the expressions for relay node

the same as a full-buffer loss. Obviously, losses due utilization below.

to a full buffer at each node, cause an additional For relay node 1, the utilization is

transmission delay by a timeout of duration much

λ′

greater than the per-link transmission delay and U1 = (24)

typically a multiple of the estimated end-to-end delay. µ1

Let, the retransmission rate i.e., the net rate at which Where λ ′ is the throughput from the first relay node.

chunks are resent to the sender node due to losses of

And for the second relay node, the utilization is:

all types is λ r . The total rate of chunk arrivals, λ ′′

including retransmissions, is therefore U2 = (25)

µ2

λ = bλ0 + λ r (20)

Note that we assumed λ the batch arrival rates

Let, T1 , T2 , T3 be the constant transmission delays

at the first relay node, λ ′ the batch arrival rates at

for a chunk passing over the links between the sender the second relay node which is the throughput of the

and first relay node, first relay node and second relay first relay node and λ ′′ the throughput of the second

node, and second relay node and the receiver IMS

relay node. Here we find that the mean rate of

terminal respectively. Hence the transmission delay

retransmission in the system is,

for successful chunks, excluding the time spent in the

nodes, is λ r = p r (λ − λ ′′) (26)

T = T1 + T2 + T3 (21) With these analyses, it is plain to compute the

two throughputs of the relay nodes. For M/M/1

If a transmission has been successful, then the machines performance evaluation can be performed

receiver sends a REPORT message for the from the following expressions:

successfully delivered chunk to the sender node

through the route, according to the MSRP. When the ρ1 (1 − ρ1A ) µ1 1

λ′ = (27)

arrival buffer at a node is full, the rejection of an 1 − ρ1A +1 1

arriving chunk will result in no 200OK being sent.

The value of the sender’s timer will reach the pre- ρ (1 − ρ 2A ) µ 2 2

specified time-out value, the chunk will be deemed

λ ′′ = 2 (28)

1 − ρ 2A +1 2

lost and a retransmission will be attempted with a
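The rate relations in Eqs. (20) to (28) depend on each other: the total arrival rate depends on the retransmission rate, which in turn depends on the throughput of the second relay node. As an illustration only, the following Python sketch resolves them by simple iteration. It is not part of the original analysis. The function names, the parameter values, and the choice of loads $\rho_1 = \lambda/\mu_1$ and $\rho_2 = \lambda'/\mu_2$ are assumptions made for this sketch, since the text describes $\rho_1$ and $\rho_2$ only as the loads at the two relay nodes.

```python
import math


def mm1k_throughput(rho: float, mu: float, buf: int) -> float:
    """Throughput in the form of Eqs. (27)/(28) for load rho, service rate mu, buffer size buf."""
    if math.isclose(rho, 1.0):
        # Limit of the expression as rho -> 1 (avoids a 0/0 division).
        return mu * buf / (buf + 1)
    return rho * (1.0 - rho**buf) * mu / (1.0 - rho ** (buf + 1))


def solve_rates(b, lam0, p_r, mu1, mu2, a1, a2, tol=1e-12, max_iter=10_000):
    """Iterate Eq. (20) together with Eq. (26) until the total arrival rate settles.

    Assumption of this sketch (not stated in the text):
    rho1 = lam / mu1 and rho2 = lam1 / mu2.
    """
    if b * lam0 <= 0.0:
        raise ValueError("the offered chunk rate b * lam0 must be positive")
    if not 0.0 <= p_r < 1.0:
        # p_r = 1 means unlimited retransmissions; this sketch does not handle it.
        raise ValueError("this sketch requires 0 <= p_r < 1")
    lam = b * lam0  # first guess: no retransmissions
    for _ in range(max_iter):
        lam1 = mm1k_throughput(lam / mu1, mu1, a1)   # lambda', Eq. (27)
        lam2 = mm1k_throughput(lam1 / mu2, mu2, a2)  # lambda'', Eq. (28)
        new_lam = b * lam0 + p_r * (lam - lam2)      # Eqs. (20) and (26)
        converged = abs(new_lam - lam) < tol
        lam = new_lam
        if converged:
            break
    # Recompute the throughputs for the final arrival rate.
    lam1 = mm1k_throughput(lam / mu1, mu1, a1)
    lam2 = mm1k_throughput(lam1 / mu2, mu2, a2)
    return {
        "lam": lam,
        "lam1": lam1,
        "lam2": lam2,
        "lam_r": p_r * (lam - lam2),  # Eq. (26)
        "p_f": 1.0 - lam2 / lam,      # Eq. (22)
        "U1": lam1 / mu1,             # Eq. (24)
        "U2": lam2 / mu2,             # Eq. (25)
    }


def loss_constraint_ok(rates, b, lam0, p_r, max_loss_rate):
    """Check Eq. (23): (1 - p_r)(lam - lam2) <= b * lam0 * L_r."""
    return (1.0 - p_r) * (rates["lam"] - rates["lam2"]) <= b * lam0 * max_loss_rate


if __name__ == "__main__":
    # Hypothetical parameter values, chosen only to exercise the formulas.
    rates = solve_rates(b=2.0, lam0=1.0, p_r=0.5, mu1=4.0, mu2=4.0, a1=5, a2=5)
    for name, value in rates.items():
        print(f"{name} = {value:.4f}")
    print("Eq. (23) satisfied:", loss_constraint_ok(rates, 2.0, 1.0, 0.5, max_loss_rate=0.05))
```

The iteration starts from the offered rate $b\lambda_0$ and repeatedly adds the retransmitted traffic back in. Restricting the sketch to $p_r < 1$ is a design choice: it leaves out the unlimited-retransmission case described in the text, where the total arrival rate may grow without settling.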


In Eqs. (27) and (28), $\rho_1$ and $\rho_2$ are the loads at the first relay node and the second relay node, respectively, and $A_1$ and $A_2$ are the buffer sizes of the first relay node and the second relay node, respectively.

Let the service time random variable be $S$, with mean $s = 1/\mu$, the batch size random variable be $B$ with mean $b$, $Y_n$ be the queue length immediately after the last chunk in the nth batch departs, and $G_X(z)$ be the generating function of the probability mass function of a discrete random variable $X$. Let $U_n$ denote the number of chunks that arrive during the service of (all the chunks in) the nth batch. Let $\pi_n(k) = P(Y_n = k)$ for $n \ge 1$, $k \ge 0$, so that $G_{Y_n}(z) = \sum_{k=0}^{\infty} \pi_n(k) z^k$. At equilibrium, assuming this exists, let $\pi_n(\cdot) \to \pi(\cdot)$ and $G_{Y_n}(\cdot) \to G_Y(\cdot)$ as $n \to \infty$. The random variable $B$ denotes a generic batch size random variable $B_n$, and we use $V$ similarly to denote a generic instance of $V_n$.

Let the sojourn time, or waiting time, in the queue of the last chunk in a batch, i.e., the sum of the time it spends waiting to start service and its service time, be $W$.

The Laplace-Stieltjes transform of the response time distribution in such an M/GI/1/∞ queue with batch arrivals can then be shown to be given by:

$$[1 - G_B(H)]\,W^*(\theta) = (1 - \rho_1')\,[G_B(S^*(\theta)) - G_B(H)] \qquad (29)$$

where

$$z = G_B^{-1}\left(1 - \frac{\theta}{\lambda'}\right) \qquad (30)$$

$$\theta = \lambda'(1 - G_B(z)) \qquad (31)$$

and

$$H = z^{-1} S^*(\theta). \qquad (32)$$

$W$ is the average response time. The symbol * here denotes data indexed by relay node $i = 1, 2$.

The mean waiting time (and arbitrary higher moments) at relay node 1 can be approximated by applying the recurrence formula of the Laplace-Stieltjes transform of the response time distribution of the M/GI/1/∞ queue with batch arrivals. The computation of higher moments of waiting time of this type can be found in [16, 17]. We have

$$W_1 = -W^{*\prime}(x)\big|_{x=0} \qquad (33)$$

where

$$W^{*\prime}(0) = \frac{U_1(2 - U_1)(M_{2B} - b) + (\lambda')^2 b^3 M_{2S}}{2\lambda' b^2 (U_1 - 1)} + \frac{U_1(1 - U_1 b)}{\lambda' b (U_1 - 1)} \qquad (34)$$

$M_{2B}$ and $M_{2S}$ are the second moments of the batch size and service time distributions, respectively.

The total expected response time at relay node 2, $W_2$, can be computed using the M/M/1 response time approximation as:

$$W_2 = \frac{1 - (A_2 + 1)\rho_2^{A_2} + A_2\rho_2^{A_2+1}}{\mu_2 (1 - \rho_2)(1 - \rho_2^{A_2})} \qquad (35)$$

The total expected response time $W$, i.e., the combined time spent in the two relay nodes on a successful transmission attempt, is the sum of the expected response times at each node, i.e.,

$$W = W_1 + W_2 \qquad (36)$$

Thus, the mean transmission time (MTT) for a chunk that is successful on its first attempt is

$$MTT = W + T \qquad (37)$$

Eq. (37) achieves the goal of our model. Failed chunks, due to a full buffer, retry a number of times given by the retransmission probability $p_r$. Because each retry is made independently of previous attempts, this number of attempts is a geometric random variable with parameter $p_r$. The overhead incurred by a failed transmission, i.e., the elapsed time between the start of an attempt that subsequently fails and the start of the next attempt, consists of the time-out delay of $k \cdot MTT$ for chunks lost due to a full buffer ($k$ mean successful transmission times). We express this overhead, $L$, as follows:

$$L = k \cdot MTT \cdot p_f \qquad (38)$$

4 CONCLUSIONS

Providing instant messaging in real time is indeed an open challenge today. Previous works on relay nodes are centered on one node only. We have shown a complete end-to-end delay evaluation that includes two relay nodes (the maximum number that a source MSRP terminal can select) for both buffer blocking and non-blocking situations. In the former analysis, constant bit rate was considered, consistent with Cruz's [12, 13] famous work-conserving virtual traffic parameter model $(\sigma, \rho)$. With our model, the performance evaluation of end-to-end delay for large instant messages becomes straightforward. The latter analysis was provided for the buffer overloaded situation. The analysis presented leads to the common optimization problem of minimizing Eq. (37) with respect to the relay node throughputs, utilizations and buffer sizes. We believe this is an interesting and useful avenue to be explored for a large SEND system, which is our future area of investigation.

5 REFERENCES

[1] B. Campbell, J. Rosenberg, H. Schulzrinne, C. Huitema, and D. Gurle, “Session Initiation Protocol (SIP) Extension for Instant Messaging”, RFC 3428, Internet Engineering Task Force, (2002).

[2] 3GPP, Internet Protocol (IP) multimedia call control protocol based on Session Initiation Protocol (SIP) and Session Description Protocol (SDP); Stage 3, TS 24.229.

[3] E. Burger, “A Mechanism for Content Indirection in Session Initiation Protocol (SIP) Messages”, RFC 4483, Internet Engineering Task Force, (2006).

[4] M. Debbabi, M. Rahman, W. Lin, J. Gopal, S. Sridhar, “Standard SIP-based instant messaging and presence APIs for networked devices”, Networked Appliances, Proceedings. IEEE 5th International Workshop on, 30-31 Oct. (2002), pp: 59-64.

[5] M. Gomez, J. L. Megias, C. Bueno, C. Brocal, “Interworking between the Multimedia Messaging Service (MMS) and the 3G IP Multimedia subsystem (IMS) Instant Messaging Service”, IEEE 16th International Symposium on Personal, Indoor and Mobile Radio Communications, (2005), pp: 22742-278.

[6] L. Zhou, “An empirical Investigation of Deception Behaviour in Instant Messaging”, IEEE Transactions on Personal Communication, Vol: 48 (2), (2005), pp: 147-160.

[7] C. Jennings, R. Mahy, A. B. Roach, “Relay Extensions for the Message Sessions Relay Protocol (MSRP)”, Internet Engineering Task Force, draft-ietf-simple-msrp-relays-09.txt, (2007), Work in Progress.

[8] M. Gastpar, M. Vetterli, “On the capacity of wireless networks: the relay case”, INFOCOM 2002. Twenty-First Annual Joint Conference of the IEEE Computer and Communications Societies. Proceedings. IEEE, Vol: 3, 23-27 June (2002), pp: 1577-1586, Digital Object Identifier 10.1109/INFCOM.2002.1019409.

[9] B. Wang, J. Zhang, A. Host-Madsen, “On the capacity of MIMO relay channels”, Information Theory, IEEE Transactions on, Vol: 51 (1), Jan. (2005), pp: 29-43, Digital Object Identifier 10.1109/TIT.2004.839487.

[10] H. Bölcskei, R. U. Nabar, O. Oyman, A. J. Paulraj, “Capacity Scaling Laws in MIMO Relay Networks”, IEEE Transactions on Wireless Communications, Vol: 5 (6), June (2006), pp: 1433-1443.

[11] Z. Zhang, Z. Duan, and Y. Hou, “Fundamental Trade-offs in Aggregate Packet Scheduling”, Parallel and Distributed Systems, IEEE Transactions on, Vol: 16 (12), (2005), pp: 1166-1177.

[12] R. L. Cruz, “A calculus for network delay. I. Network elements in isolation”, Information Theory, IEEE Transactions on, Vol: 37 (1), (1991), pp: 114-131, Digital Object Identifier 10.1109/18.61109.

[13] R. L. Cruz, “A calculus for network delay. II. Network analysis”, Information Theory, IEEE Transactions on, Vol: 37 (1), (1991), pp: 132-141, Digital Object Identifier 10.1109/18.61110.

[14] V. M. Abramov, “Asymptotic behaviour of the number of lost messages”, SIAM J. Appl. Math., Vol: 64, (2004), pp: 746-761.

[15] V. M. Abramov, “Asymptotic methods for queuing systems and networks with application to telecommunications”, PhD Thesis, School of Mathematics Science, Tel Aviv University, (2004).

[16] N. Gulpinar, P. Harrison, B. Rustem, “Performance Optimization of Mean Response Time in a Tandem Router Network with Batch Arrivals”, Network Operations and Management Symposium, 10th IEEE/IFIP, (2006), pp: 1-4.

[17] N. Gulpinar, P. Harrison, B. Rustem, “An optimisation model for a two-node router network”, Modeling, Analysis, and Simulation of Computer and Telecommunications Systems, 2004. Proceedings IEEE, 4-8 Oct. (2004), pp: 147-156.

[18] J. Kaur, H. M. Vin, “Core-stateless guaranteed throughput networks”, IEEE INFOCOM, Vol: 3, (2003), pp: 2155-2165.

[19] J. Kaur, H. M. Vin, “Core-stateless guaranteed rate scheduling algorithms”, IEEE INFOCOM, Vol: 3, (2001), pp: 1484-1492.

[20] Z. Zhang, Z. Duan, and Y. Gao, “A core stateless bandwidth broker architecture for scalable support of guaranteed services”, Parallel and Distributed Systems, IEEE Transactions on, Vol: 15 (2), (2004), pp: 167-182.

[21] Z. Zhang, Z. Duan, and Y. Hou, “Virtual time reference system: a unifying scheduling framework for scalable support of guaranteed services”, Selected Areas in Communications, IEEE Journal on, Vol: 18 (12), (2000), pp: 2684-2695.

[22] C. Li, E. W. Knightly, “Coordinated multihop scheduling: a framework for end-to-end services”, Networking, IEEE/ACM Transactions on, Vol: 10 (6), Dec. (2002), pp: 776-789.

[23] C. Li, E. W. Knightly, “Schedulability criterion and performance analysis of coordinated schedulers”, Networking, IEEE/ACM Transactions on, Vol: 13 (2), April (2005), pp: 276-287.

[24] B. Campbell, R. Mahy, C. Jennings, “The Message Session Relay Protocol”, Internet-Draft draft-ietf-simple-message-sessions-18, Internet Engineering Task Force, (2007), Work in Progress.

[25] 3GPP, TSG SSA, IP Multimedia Subsystem (IMS) - Stage 2 (Release 7), TS 23.228 v.7.3.0, 2006-03.
