COMP171

Fall 2005

Introduction to Analysis of Algorithms

Introduction to Analysis of Algorithms / Slide 2

Introduction

What is an algorithm?

A clearly specified set of simple instructions to be followed to solve a problem

Takes a set of values as input and produces a value, or set of values, as output

May be specified

In English

As a computer program

As pseudocode

Data structures

Methods of organizing data

Program = algorithms + data structures

Introduction to Analysis of Algorithms / Slide 3

Introduction

Why do we need algorithm analysis?

Writing a working program is not good enough

The program may be inefficient!

If the program is run on a large data set, then the running time becomes an issue

Introduction to Analysis of Algorithms / Slide 4

An Example

A city has n stops. A bus driver wishes to follow the shortest path from one stop to another. Between every two stops, if a road exists, it may take a different time from other roads. Also, roads are one-way, i.e., the road from stop 1 to stop 2 is different from that from stop 2 to stop 1.

How do we find the shortest path between any pair of stops?

A naive approach:

List all the paths between a given pair of stops

Compute the travel time for each.

Choose the shortest one.

How many paths are there?

Introduction to Analysis of Algorithms / Slide 5

Number of paths: on the order of $(n/e)^n$

It will be impossible to run your algorithm for n = 30

Need a way to compare two algorithms

Introduction to Analysis of Algorithms / Slide 6

Example: Selection Problem

Given a list of N numbers, determine the kth largest, where $k \le N$.

Algorithm 1:

(1) Read N numbers into an array

(2) Sort the array in decreasing order by some simple algorithm

(3) Return the element in position k

Introduction to Analysis of Algorithms / Slide 7

Example: Selection Problem

Algorithm 2:

(1) Read the first k elements into an array and sort them in decreasing order

(2) Each remaining element is read one by one

If it is smaller than the kth element, then it is ignored

Otherwise, it is placed in its correct spot in the array, bumping one element out of the array.

(3) The element in the kth position is returned as the answer.
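The two selection algorithms can be sketched in Python as follows. This is a minimal illustration, not the course's own code: the function names are hypothetical, the built-in `sorted` stands in for "some simple algorithm", and k is taken as 1-based as on the slides.

```python
def kth_largest_by_sorting(numbers, k):
    """Algorithm 1: sort everything in decreasing order, return position k."""
    ordered = sorted(numbers, reverse=True)
    return ordered[k - 1]  # k is 1-based


def kth_largest_by_window(numbers, k):
    """Algorithm 2: keep only the k largest values seen so far, in decreasing order."""
    window = sorted(numbers[:k], reverse=True)
    for x in numbers[k:]:
        if x <= window[-1]:
            continue  # not larger than the current kth largest: ignore it
        # Shift smaller entries one slot right; the old last entry is bumped out.
        pos = k - 1
        while pos > 0 and window[pos - 1] < x:
            window[pos] = window[pos - 1]
            pos -= 1
        window[pos] = x
    return window[-1]


data = [7, 2, 9, 4, 9, 1]
print(kth_largest_by_sorting(data, 3), kth_largest_by_window(data, 3))
```

Both calls return the same value; the difference lies only in how much work is done, which depends on N and k.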

Introduction to Analysis of Algorithms / Slide 8

Example: Selection Problem

Which algorithm is better when

N = 100 and k = 100?

N = 100 and k = 1?

What happens when N = 1,000,000 and k = 500,000?

There exist better algorithms

Introduction to Analysis of Algorithms / Slide 9

Algorithm Analysis

We only analyze correct algorithms

An algorithm is correct

If, for every input instance, it halts with the correct output

Incorrect algorithms

Might not halt at all on some input instances

Might halt with other than the desired answer

Analyzing an algorithm

Predicting the resources that the algorithm requires

Resources include

Memory

Communication bandwidth

Computational time (usually most important)

Introduction to Analysis of Algorithms / Slide 10

Algorithm Analysis

Factors affecting the running time

computer

compiler

algorithm used

input to the algorithm

The content of the input affects the running time

Typically, the input size (number of items in the input) is the main consideration

E.g. sorting problem: the number of items to be sorted

E.g. multiplying two matrices together: the total number of elements in the two matrices

Machine model assumed

Instructions are executed one after another, with no concurrent operations (not parallel computers)

Introduction to Analysis of Algorithms / Slide 11

Worst- / average- / best-case

Worst-case running time of an algorithm

The longest running time for any input of size n

An upper bound on the running time for any input: a guarantee that the algorithm will never take longer

Example: sort a set of numbers in increasing order when the data is in decreasing order

The worst case can occur fairly often

E.g. in searching a database for a particular piece of information

Best-case running time

Example: sort a set of numbers in increasing order when the data is already in increasing order

Average-case running time

May be difficult to define what "average" means

Introduction to Analysis of Algorithms / Slide 12

Running-time of algorithms

Bounds are for algorithms, rather than programs

Programs are just implementations of an algorithm, and almost always the details of the program do not affect the bounds

Bounds are for algorithms, rather than problems

A problem can be solved with several algorithms, some more efficient than others

Introduction to Analysis of Algorithms / Slide 13

But, how to measure the time?

Multiplication and addition: which one takes longer?

How do we measure >=, assignment, &&, ||, etc.?

Machine dependent?

Slides courtesy of Prof. Saswati Sarkar

Introduction to Analysis of Algorithms / Slide 14

What is the efficiency of an algorithm?

Run time in the computer: Machine Dependent

Example: Need to multiply two positive integers a and b

Subroutine 1: Multiply a and b

Subroutine 2:

V = a, W = b

While W > 1

V ← V + a; W ← W − 1

Output V
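As a rough sketch, the two subroutines can be written in Python with an operation counter attached. The function names are hypothetical, and the counting convention (only arithmetic operations are counted, comparisons and assignments are ignored) is an assumption made for this illustration.

```python
def multiply_direct(a, b):
    """Subroutine 1: a single multiplication."""
    return a * b, 1  # (result, number of arithmetic operations)


def multiply_by_repeated_addition(a, b):
    """Subroutine 2: V = a, W = b; while W > 1: V = V + a, W = W - 1."""
    v, w = a, b
    ops = 0
    while w > 1:
        v = v + a
        w = w - 1
        ops += 2  # one addition and one subtraction per iteration
    return v, ops


print(multiply_direct(6, 7))
print(multiply_by_repeated_addition(6, 7))
```

Under this convention the operation count of Subroutine 2 grows with b, while Subroutine 1 stays constant.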

Introduction to Analysis of Algorithms / Slide 15

Solution: Machine Independent

Analysis

We assume that every basic operation takes constant time:

Example Basic Operations:

Addition, Subtraction, Multiplication, Memory Access

Non-basic Operations:

Sorting, Searching

Efficiency of an algorithm is the number of basic

operations it performs

We do not distinguish between the basic operations.

Introduction to Analysis of Algorithms / Slide 16

Subroutine 1 uses ? basic operation

Subroutine 2 uses ? basic operations

Subroutine ? is more efficient.

This measure is good for all large input sizes

In fact, we will not worry about the exact values, but will look at "broad classes" of values, or the growth rates

Let there be n inputs.

If an algorithm needs n basic operations and another needs 2n basic operations, we will consider them to be in the same efficiency category.

However, we distinguish between exp(n), n, log(n)

Introduction to Analysis of Algorithms / Slide 17

Growth Rate

The idea is to establish a relative order among functions for large n

$\exists\, c, n_0 > 0$ such that $f(N) \le c\,g(N)$ when $N \ge n_0$

f(N) grows no faster than g(N) for large N

Introduction to Analysis of Algorithms / Slide 18

Asymptotic notation: Big-Oh

$f(N) = O(g(N))$

There are positive constants $c$ and $n_0$ such that $f(N) \le c\,g(N)$ when $N \ge n_0$

The growth rate of f(N) is less than or equal to the growth rate of g(N)

g(N) is an upper bound on f(N)

Introduction to Analysis of Algorithms / Slide 19

Big-Oh: example

Let $f(N) = 2N^2$. Then

$f(N) = O(N^4)$

$f(N) = O(N^3)$

$f(N) = O(N^2)$ (best answer, asymptotically tight)
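A Big-Oh claim amounts to exhibiting constants c and n0. The sketch below checks candidate constants over a finite range only, so it can refute a bad choice but does not prove a bound; the helper name `bound_holds` and the cutoff `upto` are assumptions introduced for this illustration.

```python
def bound_holds(f, g, c, n0, upto=1000):
    """Check f(N) <= c * g(N) for every integer N in [n0, upto]."""
    return all(f(n) <= c * g(n) for n in range(n0, upto + 1))


def f(n):
    return 2 * n**2


print(bound_holds(f, lambda n: n**2, c=2, n0=1))   # 2N^2 <= 2 * N^2
print(bound_holds(f, lambda n: n**3, c=1, n0=2))   # 2N^2 <= N^3 once N >= 2
print(bound_holds(f, lambda n: n, c=100, n0=1))    # fails as soon as N > 50
```

The last call shows why 2N^2 is not O(N): whatever constant is chosen, the inequality eventually breaks.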

Introduction to Analysis of Algorithms / Slide 20

Big Oh: more examples

$N^2/2 - 3N = O(N^2)$

$1 + 4N = O(N)$

$7N^2 + 10N + 3 = O(N^2) = O(N^3)$

$\log_{10} N = \log_2 N / \log_2 10 = O(\log_2 N) = O(\log N)$

$\sin N = O(1)$; $10 = O(1)$, $10^{10} = O(1)$

$\sum_{i=1}^{N} i \le N \cdot N = O(N^2)$

$\sum_{i=1}^{N} i^2 \le N \cdot N^2 = O(N^3)$

$\log N + N = O(N)$

$N = O(2^N)$, but $2^N$ is not $O(N)$

$2^{10N}$ is not $O(2^N)$

Introduction to Analysis of Algorithms / Slide 21

Some rules

When considering the growth rate of a function using Big-Oh

Ignore the lower-order terms and the coefficients of the highest-order term

No need to specify the base of the logarithm

Changing the base from one constant to another changes the value of the logarithm by only a constant factor

If $T_1(N) = O(f(N))$ and $T_2(N) = O(g(N))$, then

$T_1(N) + T_2(N) = \max(O(f(N)), O(g(N)))$,

$T_1(N) \cdot T_2(N) = O(f(N) \cdot g(N))$

Introduction to Analysis of Algorithms / Slide 22

Big-Omega

$\exists\, c, n_0 > 0$ such that $f(N) \ge c\,g(N)$ when $N \ge n_0$

f(N) grows no slower than g(N) for large N

Introduction to Analysis of Algorithms / Slide 23

Big-Omega

$f(N) = \Omega(g(N))$

There are positive constants $c$ and $n_0$ such that $f(N) \ge c\,g(N)$ when $N \ge n_0$

The growth rate of f(N) is greater than or equal to the growth rate of g(N).

Introduction to Analysis of Algorithms / Slide 24

Big-Omega: examples

Let $f(N) = 2N^2$. Then

$f(N) = \Omega(N)$

$f(N) = \Omega(N^2)$ (best answer)

Introduction to Analysis of Algorithms / Slide 25

$f(N) = \Theta(g(N))$

The growth rate of f(N) is the same as the growth rate of g(N)

Introduction to Analysis of Algorithms / Slide 26

Big-Theta

$f(N) = \Theta(g(N))$ iff $f(N) = O(g(N))$ and $f(N) = \Omega(g(N))$

The growth rate of f(N) equals the growth rate of g(N)

Example: Let $f(N) = N^2$, $g(N) = 2N^2$

We write $f(N) = O(g(N))$ and $f(N) = \Omega(g(N))$, thus $f(N) = \Theta(g(N))$.

Introduction to Analysis of Algorithms / Slide 27

Some rules

If T(N) is a polynomial of degree k, then $T(N) = \Theta(N^k)$.

For logarithmic functions, $T(\log_m N) = \Theta(\log N)$.

Introduction to Analysis of Algorithms / Slide 28

Little-oh

$f(N) = o(g(N))$

$f(N) = O(g(N))$ and $f(N) \ne \Theta(g(N))$

The growth rate of f(N) is less than the growth rate of g(N)

Introduction to Analysis of Algorithms / Slide 29

Using L'Hôpital's rule

L'Hôpital's rule

If $\lim_{N \to \infty} f(N) = \infty$ and $\lim_{N \to \infty} g(N) = \infty$, then

$\lim_{N \to \infty} \frac{f(N)}{g(N)} = \lim_{N \to \infty} \frac{f'(N)}{g'(N)}$

Determine the relative growth rates by using L'Hôpital's rule

Compute $\lim_{N \to \infty} \frac{f(N)}{g(N)}$

If 0: $f(N) = o(g(N))$

If constant $\ne 0$: $f(N) = \Theta(g(N))$

If $\infty$: $g(N) = o(f(N))$

Limit oscillates: no relation

Introduction to Analysis of Algorithms / Slide 30

Example Functions

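$\sqrt{n}$, $n$, $2n$, $\ln n$, $\exp(n)$, $n + \sqrt{n}$, $n + n^2$

$\lim_{n \to \infty} \sqrt{n}/n = 0$, so $\sqrt{n}$ is $o(n)$

$\lim_{n \to \infty} n/\sqrt{n} = \infty$, so again $\sqrt{n}$ is $o(n)$, and $n$ is not $O(\sqrt{n})$

$\lim_{n \to \infty} n/(2n) = 1/2$, so $n$ is $\Theta(2n)$, $\Omega(2n)$

$\lim_{n \to \infty} 2n/n = 2$, so $2n$ is $\Theta(n)$, $\Omega(n)$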
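The limits above can be explored numerically by evaluating the ratio f(n)/g(n) at growing n. This is only a hint at the limit, not a proof; the helper name `ratios` and the sample points n = 10, 100, ..., 10^6 are assumptions chosen for this sketch.

```python
import math


def ratios(f, g, exponents=range(1, 7)):
    """Return f(n)/g(n) for n = 10, 100, ..., 10**6."""
    return [f(10**k) / g(10**k) for k in exponents]


print(ratios(math.sqrt, lambda n: n))        # shrinks toward 0
print(ratios(lambda n: n, math.sqrt))        # grows without bound
print(ratios(lambda n: n, lambda n: 2 * n))  # stays at 0.5
```

A ratio heading to 0 suggests little-oh, a ratio settling at a nonzero constant suggests Big-Theta, and an unbounded ratio suggests the relation holds in the other direction.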

Introduction to Analysis of Algorithms / Slide 31

Typical Growth Rates

Introduction to Analysis of Algorithms / Slide 34

Growth rates

Doubling the input size

If $f(N) = c$, then $f(2N) = f(N) = c$

If $f(N) = \log N$, then $f(2N) = f(N) + \log 2$

If $f(N) = N$, then $f(2N) = 2 f(N)$

If $f(N) = N^2$, then $f(2N) = 4 f(N)$

If $f(N) = N^3$, then $f(2N) = 8 f(N)$

If $f(N) = 2^N$, then $f(2N) = f(N)^2$

Advantages of algorithm analysis

To eliminate bad algorithms early

Pinpoints the bottlenecks, which are worth coding carefully
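The doubling rules listed on this slide can be checked with a few lines of Python. This is an illustrative sketch: the helper `doubling_pair`, the constant 5, the base-2 logarithm, and the sample sizes are all assumptions picked for the demonstration.

```python
import math


def doubling_pair(f, n):
    """Return (f(n), f(2n)) so the two values can be compared."""
    return f(n), f(2 * n)


examples = {
    "c": lambda n: 5,
    "log N": math.log2,
    "N": lambda n: n,
    "N^2": lambda n: n**2,
    "N^3": lambda n: n**3,
}
for name, func in examples.items():
    before, after = doubling_pair(func, 1024)
    print(f"{name:6} f(N) = {before}, f(2N) = {after}")

# 2^N is shown separately with a small N because the values grow so quickly.
before, after = doubling_pair(lambda n: 2**n, 10)
print(f"2^N    f(N) = {before}, f(2N) = {after}, f(N)^2 = {before**2}")
```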

Introduction to Analysis of Algorithms / Slide 35

Example

Calculate $\sum_{i=1}^{N} i^3$

Lines 1 and 4 count for one unit each

Line 3: executed N times, each time four units, for 4N in total

Line 2: (1 for initialization, N+1 for all the tests, N for all the increments) total 2N + 2

Total cost: 6N + 4 = O(N)
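The code listing that the line numbers refer to did not survive in this text. The Python sketch below assumes the usual four-line loop (initialize a partial sum, loop from 1 to N, add i·i·i, return) and charges units exactly as described above; the function name and the explicit `cost` counter are additions for illustration.

```python
def sum_of_cubes_with_cost(n):
    """Return (sum of i^3 for i = 1..n, units charged under the slide's accounting)."""
    cost = 0
    partial_sum = 0
    cost += 1                      # line 1: initialize the partial sum
    i = 1
    cost += 1                      # line 2: loop initialization
    while True:
        cost += 1                  # line 2: loop test (runs n + 1 times)
        if not i <= n:
            break
        partial_sum += i * i * i
        cost += 4                  # line 3: two multiplications, one addition, one assignment
        i += 1
        cost += 1                  # line 2: loop increment
    cost += 1                      # line 4: return
    return partial_sum, cost


print(sum_of_cubes_with_cost(10))
```

For any n the counter comes out as 6n + 4, matching the total stated above.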

Introduction to Analysis of Algorithms / Slide 36

General Rules

For loops

At most the running time of the statements inside the for-loop (including tests) times the number of iterations.

Nested for loops

The running time of the statement multiplied by the product of the sizes of all the for-loops.

Example (two nested loops of size N): $O(N^2)$
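A small sketch of the nested-loop rule, assuming two loops of size N around a constant-time statement. The function name is hypothetical, and the counter simply stands in for whatever O(1) work the inner statement does.

```python
def count_inner_steps(n):
    """Count how often the innermost statement of two nested size-n loops runs."""
    steps = 0
    for i in range(n):
        for j in range(n):
            steps += 1  # stands in for an O(1) statement
    return steps


print(count_inner_steps(10), count_inner_steps(20))
```

Doubling n quadruples the count, as expected for a quadratic running time.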

Introduction to Analysis of Algorithms / Slide 37

General rules (cont'd)

Consecutive statements

These just add

$O(N) + O(N^2) = O(N^2)$

If/Else

Never more than the running time of the test plus the larger of the running times of S1 and S2.

Introduction to Analysis of Algorithms / Slide 38

Another Example

Maximum Subsequence Sum Problem

Given (possibly negative) integers $A_1, A_2, \ldots, A_n$, find the maximum value of $\sum_{k=i}^{j} A_k$

For convenience, the maximum subsequence sum is 0 if all the integers are negative

E.g. for input -2, 11, -4, 13, -5, -2

Answer: 20 ($A_2$ through $A_4$)

Introduction to Analysis of Algorithms / Slide 39

Algorithm 1: Simple

Exhaustively tries all possibilities (brute force)

$O(N^3)$
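The slide's code is not preserved here, so the following is a minimal Python sketch of an exhaustive approach, written under the assumption that it tries every pair (i, j) and re-sums the elements in between. The function name is hypothetical.

```python
def max_subsequence_sum_cubic(a):
    """Try every (i, j) pair and sum a[i..j] from scratch: three nested loops."""
    best = 0  # by convention the answer is 0 if all integers are negative
    n = len(a)
    for i in range(n):
        for j in range(i, n):
            current = 0
            for k in range(i, j + 1):
                current += a[k]
            best = max(best, current)
    return best


print(max_subsequence_sum_cubic([-2, 11, -4, 13, -5, -2]))
```

The three nested loops, each of size at most N, give the cubic bound.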

Introduction to Analysis of Algorithms / Slide 40

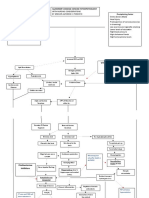

Algorithm 2: Divide-and-conquer

Divide-and-conquer

Split the problem into two roughly equal subproblems, which are then solved recursively

Patch together the two solutions of the subproblems to arrive at a solution for the whole problem

The maximum subsequence sum can be

Entirely in the left half of the input

Entirely in the right half of the input

Crossing the middle, in both halves

Introduction to Analysis of Algorithms / Slide 41

Algorithm 2 (cont'd)

The first two cases can be solved recursively

For the last case:

Find the largest sum in the first half that includes the last element in the first half

Find the largest sum in the second half that includes the first element in the second half

Add these two sums together

Introduction to Analysis of Algorithms / Slide 42

Example: 8 numbers in a sequence,

4 -3 5 -2 -1 2 6 -2

Max subsequence sum for first half = 6 (4, -3, 5)

Max subsequence sum for second half = 8 (2, 6)

Max subsequence sum for first half ending at the last element is 4 (4, -3, 5, -2)

Max subsequence sum for second half starting at the first element is 7 (-1, 2, 6)

Max subsequence sum spanning the middle is 4 + 7 = 11

Max subsequence spans the middle: 4, -3, 5, -2, -1, 2, 6

Slides courtesy of Prof. Saswati Sarkar

Introduction to Analysis of Algorithms / Slide 43

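Algorithm 2

Cost annotations on the code: O(1) for the base case, T(m/2) for each of the two recursive calls, O(m) for the sums across the middle, O(1) for the final comparison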
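The annotated code itself is missing from this text. As a hedged reconstruction, a divide-and-conquer solution along the lines described on the preceding slides might look like the Python sketch below; the function names are hypothetical, and the comments mark where each cost term would arise for a range of m elements.

```python
def max_sub_sum(a, left, right):
    """Maximum subsequence sum of a[left..right] (inclusive), at least 0."""
    if left == right:                                   # O(1): base case
        return max(a[left], 0)
    center = (left + right) // 2
    max_left = max_sub_sum(a, left, center)             # T(m/2)
    max_right = max_sub_sum(a, center + 1, right)       # T(m/2)

    # O(m): best sum ending at the last element of the left half ...
    best_left_border = running = 0
    for i in range(center, left - 1, -1):
        running += a[i]
        best_left_border = max(best_left_border, running)
    # ... and best sum starting at the first element of the right half.
    best_right_border = running = 0
    for i in range(center + 1, right + 1):
        running += a[i]
        best_right_border = max(best_right_border, running)

    # O(1): pick the best of the three cases.
    return max(max_left, max_right, best_left_border + best_right_border)


def max_subsequence_sum(a):
    return max_sub_sum(a, 0, len(a) - 1) if a else 0


print(max_subsequence_sum([4, -3, 5, -2, -1, 2, 6, -2]))
```

On the eight-number example the left border contributes 4 and the right border 7, so the crossing case wins.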

Introduction to Analysis of Algorithms / Slide 44

Algorithm 2 (cont'd)

Recurrence equation

$T(1) = 1$

$T(N) = 2T(N/2) + N$

$2T(N/2)$: two subproblems, each of size N/2

N: for patching two solutions to find solution to whole problem

Introduction to Analysis of Algorithms / Slide 45

Algorithm 2 (cont'd)

Solving the recurrence:

$$
\begin{aligned}
T(N) &= 2T(N/2) + N \\
     &= 4T(N/4) + 2N \\
     &= 8T(N/8) + 3N \\
     &= \cdots \\
     &= 2^k\,T(N/2^k) + kN
\end{aligned}
$$

With $k = \log N$ (i.e. $2^k = N$), we have

$T(N) = N\,T(1) + N \log N = N \log N + N$

Thus, the running time is O(N log N)

Faster than Algorithm 1 for large data sets
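The closed form can be sanity-checked by evaluating the recurrence directly. The sketch below assumes N is a power of two and uses the base-2 logarithm; the function name `t` is just a stand-in for T.

```python
def t(n):
    """T(1) = 1, T(N) = 2 T(N/2) + N, for N a power of two."""
    return 1 if n == 1 else 2 * t(n // 2) + n


for k in range(0, 11):
    n = 2**k
    closed_form = n * k + n  # N log2 N + N
    print(n, t(n), closed_form)
```

The two columns agree for every power of two tried, consistent with the derivation.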
