2. Review Techniques

Uploaded by arunlalds

The document discusses various techniques for reviewing software documents, including the objectives of reviews, what is reviewed, defect amplification, metrics for reviews, types of reviews (informal and formal), roles in reviews, and guidelines for effective reviews. The primary goals of reviews are to find errors early before they become defects, improve quality, and train participants.

Review Techniques

Primary objective of formal technical reviews

Find errors during the process so that they do not become defects after release of the software.

Benefit: the early discovery of errors so they do not propagate to the next step in the software process.

What Are Reviews?

• a meeting conducted by technical people for technical people

• a technical assessment of a work product created during the software engineering process

• a software quality assurance mechanism

• a training ground

What Do We Look For?

• Errors and defects

• Error: a quality problem found before the software is released to end users

• Defect: a quality problem found only after the software has been released to end users

Defect Amplification

• A defect amplification model [IBM81] can be used to illustrate the generation and detection of errors during the design and code generation actions of a software process.

Defect Amplification Model

• A box represents a software development step. During the step, errors may be inadvertently generated.

• Review may fail to uncover newly generated errors and errors from previous steps, resulting in some number of errors that are passed through.

• In some cases, errors passed through from previous steps are amplified (amplification factor, x) by current work.

• Box subdivisions represent each of these characteristics and the percent of efficiency for detecting errors, a function of the thoroughness of the review.
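The box described above can be sketched in a few lines of Python. This is a minimal illustration rather than the [IBM81] model itself: the function name `errors_passed_on`, its parameter names, and the sample numbers are all assumptions made up for the example.

```python
def errors_passed_on(passed_through, amplified, x, new_errors, detection_efficiency):
    """Return the number of errors handed to the next development step.

    passed_through       -- incoming errors that pass through unchanged
    amplified            -- incoming errors that are amplified by current work
    x                    -- amplification factor applied to `amplified`
    new_errors           -- errors newly generated during this step
    detection_efficiency -- fraction (0.0 to 1.0) of errors the review catches
    """
    if not 0.0 <= detection_efficiency <= 1.0:
        raise ValueError("detection_efficiency must be between 0 and 1")
    # Everything present in the step before the review is applied.
    total_in_step = passed_through + amplified * x + new_errors
    # The review removes a fraction of those errors; the rest move on.
    return total_in_step * (1.0 - detection_efficiency)


# Hypothetical step: 6 errors pass through, 4 are amplified 1.5x, 25 are new.
no_review = errors_passed_on(6, 4, 1.5, 25, detection_efficiency=0.0)
with_review = errors_passed_on(6, 4, 1.5, 25, detection_efficiency=0.5)
print(no_review, with_review)
```

With these made-up inputs, a step without a review passes on 37 errors, while a review with 50 percent detection efficiency passes on 18.5.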

Defect Amplification: No Reviews

• Hypothetical example of defect amplification for a software development process in which no reviews are conducted.

• Each test step uncovers and corrects 50 percent of all incoming errors without introducing any new errors.

• Ten preliminary design defects are amplified to 94 errors before testing commences. Twelve latent errors are released to the field.

Defect Amplification: Reviews Conducted

• Same conditions except that design and code reviews are conducted as part of each development step.

• Ten initial preliminary design errors are amplified to 24 errors before testing commences.

• Only three latent errors exist.
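The two outcomes (94 errors reduced to 12 without reviews, 24 reduced to 3 with reviews) can be checked with simple arithmetic. The sketch below assumes three successive test steps and rounding up to whole errors at each step; the slides state neither, but both figures are consistent with those assumptions. The function name `latent_errors` is hypothetical.

```python
import math


def latent_errors(errors_before_testing, test_steps=3, detection=0.5):
    """Apply `test_steps` test steps, each removing `detection` of incoming errors.

    Assumptions (not stated in the slides): three test steps, and fractional
    errors rounded up to the next whole error after each step.
    """
    remaining = errors_before_testing
    for _ in range(test_steps):
        remaining = math.ceil(remaining * (1 - detection))
    return remaining


print(latent_errors(94))  # no reviews: 94 errors enter testing
print(latent_errors(24))  # reviews conducted: 24 errors enter testing
```

Under these assumptions the first call follows 94, 47, 24, 12 and the second follows 24, 12, 6, 3, matching the twelve and three latent errors quoted above.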

Review Metrics and Their Use

• Preparation effort, $E_p$: the effort (in person-hours) required to review a work product prior to the actual review meeting

• Assessment effort, $E_a$: the effort (in person-hours) that is expended during the actual review

• Rework effort, $E_r$: the effort that is dedicated to the correction of those errors uncovered during the review

• Work product size, $WPS$: a measure of the size of the work product that has been reviewed

• Minor errors found, $Err_{minor}$: the number of errors found that can be categorized as minor

• Major errors found, $Err_{major}$: the number of errors found that can be categorized as major

Analyzing Metrics

• The total review effort and the total number of errors discovered are defined as:

• $E_{review} = E_p + E_a + E_r$

• $Err_{tot} = Err_{minor} + Err_{major}$

• $\text{Error density} = Err_{tot} / WPS$

• Error density represents the errors found per unit of work product.
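As a worked example, the three formulas can be computed directly from the six raw metrics. The class name `ReviewMetrics`, its field names, and the sample values (including the use of pages as the size unit) are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReviewMetrics:
    prep_effort: float        # Ep, person-hours
    assess_effort: float      # Ea, person-hours
    rework_effort: float      # Er, person-hours
    work_product_size: float  # WPS, in whatever size unit the team uses
    minor_errors: int         # Err_minor
    major_errors: int         # Err_major

    @property
    def total_effort(self) -> float:
        """E_review = Ep + Ea + Er."""
        return self.prep_effort + self.assess_effort + self.rework_effort

    @property
    def total_errors(self) -> int:
        """Err_tot = Err_minor + Err_major."""
        return self.minor_errors + self.major_errors

    @property
    def error_density(self) -> float:
        """Errors found per unit of work product (Err_tot / WPS)."""
        if self.work_product_size <= 0:
            raise ValueError("work product size must be positive")
        return self.total_errors / self.work_product_size


# Hypothetical review of a 20-page document.
m = ReviewMetrics(prep_effort=6, assess_effort=4, rework_effort=10,
                  work_product_size=20, minor_errors=8, major_errors=2)
print(m.total_effort, m.total_errors, m.error_density)
```

For these made-up numbers the review cost 20 person-hours and found 10 errors, an error density of 0.5 errors per page.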

Reference Model for technical reviews

Informal Reviews

• Informal reviews include:

  • a simple desk check of a software engineering work product with a colleague

  • a casual meeting (involving more than two people) for the purpose of reviewing a work product

  • the review-oriented aspects of pair programming

• Pair programming encourages continuous review as a work product (design or code) is created.

• The benefit is immediate discovery of errors and better work product quality as a consequence.

Formal Technical Reviews

• The objectives of a formal technical review (FTR) are:

  • to uncover errors in function, logic, or implementation for any representation of the software

  • to verify that the software under review meets its requirements

  • to ensure that the software has been represented according to predefined standards

  • to achieve software that is developed in a uniform manner

  • to make projects more manageable

• The FTR is actually a class of reviews that includes walkthroughs and inspections.

The Review Meeting

• Regardless of the FTR format that is chosen, every review meeting should abide by the following constraints:

  • Between three and five people should be involved in the review.

  • Advance preparation should occur but should require no more than two hours of work for each person.

  • The duration of the review meeting should be less than two hours.

• Focus is on a work product (e.g., a portion of a requirements model, a detailed component design, or source code for a component).
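The three numeric constraints on a review meeting lend themselves to a simple pre-meeting check. The following is only a sketch: the function name `check_review_plan` and its parameters are hypothetical, and the limits are taken directly from the constraints listed above.

```python
def check_review_plan(participants, prep_hours_per_person, meeting_hours):
    """Return a list of constraint violations for a planned review meeting."""
    problems = []
    if not 3 <= participants <= 5:
        problems.append("between three and five people should be involved")
    if prep_hours_per_person <= 0:
        problems.append("advance preparation should occur")
    elif prep_hours_per_person > 2:
        problems.append("preparation should take no more than two hours per person")
    if meeting_hours >= 2:
        problems.append("the meeting should last less than two hours")
    return problems


print(check_review_plan(participants=4, prep_hours_per_person=1.5, meeting_hours=1.5))
print(check_review_plan(participants=8, prep_hours_per_person=3, meeting_hours=2))
```

An empty list means the plan satisfies all three constraints; otherwise each entry names the constraint that was broken.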

The Players

• Producer: the individual who has developed the work product. The producer informs the project leader that the work product is complete and that a review is required.

• Review leader: evaluates the product for readiness, generates copies of product materials, and distributes them to two or three reviewers for advance preparation.

• Reviewer(s): expected to spend between one and two hours reviewing the product, making notes, and otherwise becoming familiar with the work.

• Recorder: a reviewer who records (in writing) all important issues raised during the review.

Review Guidelines

• Review the product, not the producer.

• Set an agenda and maintain it.

• Limit debate and rebuttal.

• Enunciate problem areas, but don't attempt to solve every problem noted.

• Take written notes.

• Limit the number of participants and insist upon advance preparation.

• Develop a checklist for each product that is likely to be reviewed.

• Allocate resources and schedule time for FTRs.

• Conduct meaningful training for all reviewers.

• Review your early reviews.

You might also like

- Real Time Operating System A Complete Guide - 2020 EditionFrom EverandReal Time Operating System A Complete Guide - 2020 EditionNo ratings yet

- Icmp Error ReportingDocument58 pagesIcmp Error ReportingSanjeev SubediNo ratings yet

- BayesianNetworks ReducedDocument14 pagesBayesianNetworks Reducedastir1234No ratings yet

- Face Recognition Using Neural NetworkDocument31 pagesFace Recognition Using Neural Networkvishnu vNo ratings yet

- Sample Introduction and ObjectivesDocument9 pagesSample Introduction and Objectivesyumira khateNo ratings yet

- The Embedded Design Life Cycle: - Product Specification - Hardware/software Partitioning - Hardware/software IntegrationDocument18 pagesThe Embedded Design Life Cycle: - Product Specification - Hardware/software Partitioning - Hardware/software IntegrationTejinder Singh SagarNo ratings yet

- Metrics For Process and Projects Chapter 15 & 22: Roger S. 6 Edition)Document25 pagesMetrics For Process and Projects Chapter 15 & 22: Roger S. 6 Edition)farhan sdfNo ratings yet

- Introduction To CMOS VLSI Design (E158) Final ProjectDocument4 pagesIntroduction To CMOS VLSI Design (E158) Final ProjectbashasvuceNo ratings yet

- Software Engineering - Functional Point (FP) Analysis - GeeksforGeeksDocument12 pagesSoftware Engineering - Functional Point (FP) Analysis - GeeksforGeeksGanesh PanigrahiNo ratings yet

- Capstone Project ReportDocument45 pagesCapstone Project ReportPrabh JeetNo ratings yet

- Chapter 22 - Software Configuration ManagementDocument15 pagesChapter 22 - Software Configuration Managementmalik assadNo ratings yet

- Chap 6 - Software ReuseDocument51 pagesChap 6 - Software ReuseMohamed MedNo ratings yet

- SE - Unit 1 - The Process and The Product - PPTDocument15 pagesSE - Unit 1 - The Process and The Product - PPTZameer Ahmed SaitNo ratings yet

- Realtime Operating SystemsDocument1 pageRealtime Operating Systemssarala20021990100% (1)

- PDC 1 - PD ComputingDocument12 pagesPDC 1 - PD ComputingmishaNo ratings yet

- The Systems Development EnvironmentDocument6 pagesThe Systems Development EnvironmentAhimbisibwe BakerNo ratings yet

- IT2403 Systems Analysis and Design: (Compulsory)Document6 pagesIT2403 Systems Analysis and Design: (Compulsory)Akber HassanNo ratings yet

- CW COMP1631 59 Ver1 1617Document10 pagesCW COMP1631 59 Ver1 1617dharshinishuryaNo ratings yet

- Cse345p Bi LabDocument30 pagesCse345p Bi LabkamalNo ratings yet

- CS2301-Software Engineering 2 MarksDocument17 pagesCS2301-Software Engineering 2 MarksDhanusha Chandrasegar SabarinathNo ratings yet

- 2.1 Basic Design Concepts in TQMDocument6 pages2.1 Basic Design Concepts in TQMDr.T. Pridhar ThiagarajanNo ratings yet

- Ee382M - Vlsi I: Spring 2009 (Prof. David Pan) Final ProjectDocument13 pagesEe382M - Vlsi I: Spring 2009 (Prof. David Pan) Final ProjectsepritaharaNo ratings yet

- Android Malware Detection Using Machine LearningDocument31 pagesAndroid Malware Detection Using Machine LearningNALLURI PREETHINo ratings yet

- Unit 7Document56 pagesUnit 7Abhinav Abz100% (1)

- Lesson Plan ESDocument5 pagesLesson Plan ESsureshvkumarNo ratings yet

- Seham Ali 2021: Chapter-1Document33 pagesSeham Ali 2021: Chapter-1سميره شنيمرNo ratings yet

- KPIT-A Development Tools PartnerDocument23 pagesKPIT-A Development Tools PartnerNaeem PatelNo ratings yet

- Cs8494 Software Engineering: Course Instructor Mrs.A.Tamizharasi, AP/CSE, RMDEC Mrs. Remya Rose S, AP/CSE, RMDECDocument23 pagesCs8494 Software Engineering: Course Instructor Mrs.A.Tamizharasi, AP/CSE, RMDEC Mrs. Remya Rose S, AP/CSE, RMDECTamizharasi ANo ratings yet

- Software Engineering and Project ManagementDocument23 pagesSoftware Engineering and Project ManagementUmair Aziz KhatibNo ratings yet

- Lisa - Add New Front: Process Matching/Installation and Qualification (IQ)Document62 pagesLisa - Add New Front: Process Matching/Installation and Qualification (IQ)Thanh Vũ NguyễnNo ratings yet

- Embedded System (9168)Document5 pagesEmbedded System (9168)Victoria FrancoNo ratings yet

- Digital Camera CasestudyDocument57 pagesDigital Camera CasestudyAnonymous aLRiBR100% (1)

- Reduced Instruction Set Computer 1 PDFDocument6 pagesReduced Instruction Set Computer 1 PDFKunal PawarNo ratings yet

- 17CS62 CGVDocument297 pages17CS62 CGVCHETHAN KUMAR SNo ratings yet

- Microprocessors Lab Manual 408331: Hashemite UniversityDocument63 pagesMicroprocessors Lab Manual 408331: Hashemite UniversitytargetNo ratings yet

- 156 - Engineering - Drawing - EE-155 - Lab - Manual Final-2Document24 pages156 - Engineering - Drawing - EE-155 - Lab - Manual Final-2Hamza AkhtarNo ratings yet

- Speech Recognition Calculator: ECE 4007 Section L02 - Group 08Document23 pagesSpeech Recognition Calculator: ECE 4007 Section L02 - Group 08GJ CarsonNo ratings yet

- Data Scientist Test Task V2Document1 pageData Scientist Test Task V2suryanshNo ratings yet

- Pressman CH 23 Estimation For Software Projects 2Document37 pagesPressman CH 23 Estimation For Software Projects 2Suman MadanNo ratings yet

- Prototyping: Prototyping: Prototypes and Production - Open Source Versus Closed SourceDocument14 pagesPrototyping: Prototyping: Prototypes and Production - Open Source Versus Closed Sourcerajeswarikannan0% (1)

- Pressman CH 25 Risk ManagementDocument35 pagesPressman CH 25 Risk ManagementSuma H R hr100% (1)

- 04 - 05-AI-Knowledge and ReasoningDocument61 pages04 - 05-AI-Knowledge and ReasoningderbewNo ratings yet

- MaualTesting QuestionsDocument23 pagesMaualTesting QuestionsRohit KhuranaNo ratings yet

- Computer Science Capstone Project: Course DescriptionDocument7 pagesComputer Science Capstone Project: Course DescriptionGrantham UniversityNo ratings yet

- 5 - Field Technician Networking and StorageDocument12 pages5 - Field Technician Networking and StoragenaveenNo ratings yet

- Power PC vs. MIPS: by Saumil Shah and Joel MartinDocument31 pagesPower PC vs. MIPS: by Saumil Shah and Joel MartinbaptbaptNo ratings yet

- Digital Signal Processors and Architectures (DSPA) Unit-2Document92 pagesDigital Signal Processors and Architectures (DSPA) Unit-2Chaitanya DuggineniNo ratings yet

- The Object-Oriented Approach To Design: Use Case RealizationDocument59 pagesThe Object-Oriented Approach To Design: Use Case Realizationmohamed187No ratings yet

- INF305 Notes (Pressman)Document57 pagesINF305 Notes (Pressman)dinesh_panchariaNo ratings yet

- SQA MidTermExam Online Paper 03122020 105129pmDocument2 pagesSQA MidTermExam Online Paper 03122020 105129pmAisha ArainNo ratings yet

- ISR Case StudyDocument48 pagesISR Case StudysnehalNo ratings yet

- SPM PPT U1Document29 pagesSPM PPT U1Venkata Nikhil Chakravarthy KorrapatiNo ratings yet

- Final Project Synopsis "Age and Gender Detection"Document17 pagesFinal Project Synopsis "Age and Gender Detection"Ankit KumarNo ratings yet

- SWT3 TimDocument75 pagesSWT3 TimNguyen Minh Hieu (K17 HL)100% (1)

- Ad SW Final Revision Essay QuestionDocument4 pagesAd SW Final Revision Essay QuestionKAREEM Abo ELsouDNo ratings yet

- Compiler Construction: Chapter 1: Introduction To CompilationDocument65 pagesCompiler Construction: Chapter 1: Introduction To CompilationazimkhanNo ratings yet

- Advanced JAVA ProgrammingDocument7 pagesAdvanced JAVA ProgrammingSaurabh TargeNo ratings yet

- Speech Emotion Detection (CNN Algorithm)Document29 pagesSpeech Emotion Detection (CNN Algorithm)narendra rahmanNo ratings yet

- AnswerDocument4 pagesAnswerFernando SalasNo ratings yet

- System Software Module 2Document156 pagesSystem Software Module 2arunlalds0% (1)

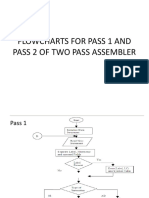

- Flowcharts For Pass 1 and Pass 2 ofDocument4 pagesFlowcharts For Pass 1 and Pass 2 ofarunlaldsNo ratings yet

- Assembler Module 1-1Document23 pagesAssembler Module 1-1arunlaldsNo ratings yet

- Module 4Document31 pagesModule 4arunlaldsNo ratings yet

- System Software Module 1Document67 pagesSystem Software Module 1arunlalds0% (1)

- 4 ProjectschedulingDocument21 pages4 ProjectschedulingarunlaldsNo ratings yet

- Project Management ConceptsDocument19 pagesProject Management ConceptsarunlaldsNo ratings yet

- Product Metrics For SoftwareDocument26 pagesProduct Metrics For SoftwarearunlaldsNo ratings yet

- 1.software TestingDocument40 pages1.software TestingarunlaldsNo ratings yet

- 3.OO TestingDocument9 pages3.OO TestingarunlaldsNo ratings yet

- An Agile View of Process: Software Engineering: A Practitioner's Approach 6 Edition Roger S. PressmanDocument36 pagesAn Agile View of Process: Software Engineering: A Practitioner's Approach 6 Edition Roger S. PressmanarunlaldsNo ratings yet

- Estimation For Software ProjectsDocument57 pagesEstimation For Software ProjectsarunlaldsNo ratings yet

- 2.testing Conventional Applications1Document56 pages2.testing Conventional Applications1arunlaldsNo ratings yet

- Pressman 7 CH 15Document23 pagesPressman 7 CH 15arunlaldsNo ratings yet

- 3.software Quality AssuranceDocument22 pages3.software Quality AssurancearunlaldsNo ratings yet

- 4.pressman CH 8 Analysis ModelingDocument43 pages4.pressman CH 8 Analysis Modelingarunlalds100% (2)

- 6.pressman CH 12 User Interface DesignDocument37 pages6.pressman CH 12 User Interface DesignarunlaldsNo ratings yet

- 1.quality ConceptsDocument12 pages1.quality ConceptsarunlaldsNo ratings yet

- Chapter 05 1Document20 pagesChapter 05 1arunlaldsNo ratings yet

- Principles That Guide Practice: Software Engineering: A Practitioner's Approach, 7/eDocument20 pagesPrinciples That Guide Practice: Software Engineering: A Practitioner's Approach, 7/earunlaldsNo ratings yet

- An Agile View of Process: Software Engineering: A Practitioner's Approach 6 Edition Roger S. PressmanDocument36 pagesAn Agile View of Process: Software Engineering: A Practitioner's Approach 6 Edition Roger S. PressmanarunlaldsNo ratings yet

- 5.pressman CH 10 Architectural DesignDocument21 pages5.pressman CH 10 Architectural DesignarunlaldsNo ratings yet

- 5.1 Java Database Programming: 5.1.1 JDBC CharacteristicsDocument60 pages5.1 Java Database Programming: 5.1.1 JDBC Characteristicsarunlalds100% (1)

- Network Programming in JavaDocument66 pagesNetwork Programming in JavaarunlaldsNo ratings yet

- Module 5Document66 pagesModule 5arunlaldsNo ratings yet

- Module 4 JavaDocument38 pagesModule 4 JavaarunlaldsNo ratings yet

- Dokumen Pendamping Ijazah: Diploma SupplementDocument4 pagesDokumen Pendamping Ijazah: Diploma SupplementRichardo AdoNo ratings yet

- BU MSC Maths SyllabusDocument12 pagesBU MSC Maths Syllabusleo_3455No ratings yet

- Motion Media and InformationDocument29 pagesMotion Media and InformationLerwin GaringaNo ratings yet

- CordicDocument21 pagesCordic2015mdaamir2015No ratings yet

- ImagingTools D82Document114 pagesImagingTools D82gimmyhead_69No ratings yet

- Pantone ColorSuite For Hexachrome - Pantone Handbook of Color in Hexachrome Process WorkflowDocument66 pagesPantone ColorSuite For Hexachrome - Pantone Handbook of Color in Hexachrome Process Workflowscri50% (2)

- Finale Exam of CTTCDocument189 pagesFinale Exam of CTTCDilip BhagatNo ratings yet

- Forrester Wave Customer Identity and Access ManagementDocument20 pagesForrester Wave Customer Identity and Access ManagementRanjan PrakashNo ratings yet

- Fundamental Data ConceptDocument12 pagesFundamental Data Conceptpisabandmut100% (1)

- BitDefender Log FileDocument3 pagesBitDefender Log FileYulistio ErvanoNo ratings yet

- Ayman Naser Curriculum VitaeDocument13 pagesAyman Naser Curriculum VitaeKhaled AlmansoorNo ratings yet

- AVR USB Programmer ManualV2Document20 pagesAVR USB Programmer ManualV2annbennNo ratings yet

- Sukhi Hone Ka Upay B.BR - Pt.Shri Ravindraji 'Aatman' - Pravachan ListDocument5 pagesSukhi Hone Ka Upay B.BR - Pt.Shri Ravindraji 'Aatman' - Pravachan ListAnshu JainNo ratings yet

- TOPIC: Science - Micro-Organisms TERM: Autumn Year Group: 6: Lesson Number Key Objectives Tasks Resources IntroDocument4 pagesTOPIC: Science - Micro-Organisms TERM: Autumn Year Group: 6: Lesson Number Key Objectives Tasks Resources IntroAndrewCooke99No ratings yet

- Cuando Eramos Invencibles - 6pvmrof PDFDocument2 pagesCuando Eramos Invencibles - 6pvmrof PDFLeon Garcia Jimmi100% (1)

- A Novel Method For Reconciliation - Julian Et. AlDocument12 pagesA Novel Method For Reconciliation - Julian Et. AlIgnacio UribeNo ratings yet

- Polytechnic University of The PhilippinesDocument10 pagesPolytechnic University of The PhilippinesPamela AmitNo ratings yet

- Chapter 5 Data ProcessingDocument26 pagesChapter 5 Data ProcessingMelchor CarabayasNo ratings yet

- Coordinate Systems Hotfix ReadmeDocument12 pagesCoordinate Systems Hotfix ReadmebaoloilongNo ratings yet

- Best 20 Interview Questions For APIDocument4 pagesBest 20 Interview Questions For APIVijjuNo ratings yet

- Computer Programming MT114 - 2020/2021: Idrissa S. ADocument10 pagesComputer Programming MT114 - 2020/2021: Idrissa S. AAzimina BehuttaNo ratings yet

- Handling Precaution For Optical Disk Drive & MediaDocument2 pagesHandling Precaution For Optical Disk Drive & MediaVichi Arvind SharmaNo ratings yet

- Apacheview User Guide: Ies Virtual Environment 2012Document74 pagesApacheview User Guide: Ies Virtual Environment 2012leungNo ratings yet

- O Portability Across DBMS o TransparencyDocument5 pagesO Portability Across DBMS o TransparencyIsraelNo ratings yet

- SCADAConnectSimulator FireDocument29 pagesSCADAConnectSimulator FireMidwardFranciscoQuispeLivisiNo ratings yet

- RoselleDocument1 pageRoselleAron Jay MejiaNo ratings yet

- Case Study 1 University Database Application Till Exp 3Document11 pagesCase Study 1 University Database Application Till Exp 3sendtoshekar100% (1)

- What Is A GC Buffer Busy WaitDocument2 pagesWhat Is A GC Buffer Busy Waitmrstranger1981No ratings yet

- REF54 techENkDocument118 pagesREF54 techENkBrian LewisNo ratings yet

- Inventario Zapatos PDFDocument2 pagesInventario Zapatos PDFarmandoNo ratings yet